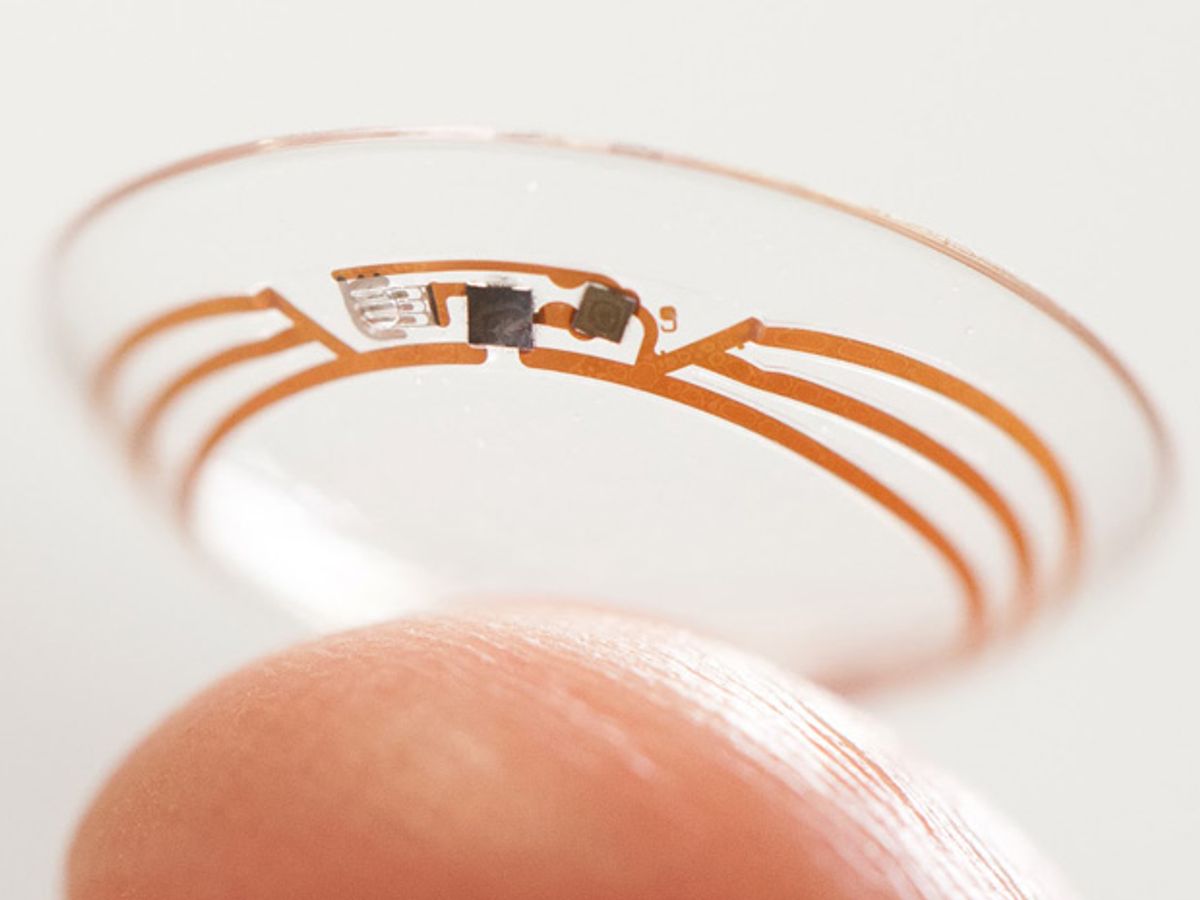

Google's X lab is developing a smart contact lens that measures glucose levels in tears using a small wireless chip and a miniaturized glucose sensor, according to the company's blog. The potential need is large: the International Diabetes Federation expects one in 10 people in the world to have diabetes by 2035.

The chip and sensor, shrunk to the size of flecks of glitter, are embedded between two layers of soft contact lens material. The prototypes can take one reading per second, and the developers are working on integrating miniature LEDs that could alert the wearer when glucose levels rise above or fall below a set threshold.

“It’s still early days for this technology,” wrote the project co-founders, Brian Otis and Babak Parviz, “but we’ve completed multiple clinical research studies which are helping to refine our prototype.”

Before joining Google, Parviz worked at the University of Washington in Seattle on contact lenses for noninvasive monitoring and visual enhancement. He expected the field to evolve substantially within just a few years.

"The glucose detectors we're evaluating now are a mere glimmer of what will be possible in the next 5 to 10 years," he wrote in IEEE Spectrum in 2009. Some of the challenges he described then have since been overcome, such as integrating the monitoring components into a soft contact lens.

Five years ago, Parviz also noted that integrating LEDs into contact lenses was difficult because some LEDs are made with toxic materials such as arsenic. Google says it is still "exploring" adding tiny LEDs to the lenses.

Google is hardly alone in developing contact lenses for novel medical purposes. The Swiss company Sensimed makes a soft contact lens with micro-sensors that monitor eye pressure in glaucoma patients. The lens is in use in Europe but is not yet for sale in the United States.

The market for all wearable technology is expected to reach at least US $6 billion by 2016, according to IMS Research. Health and medical applications are expected to lead, with fitness and wellness, and infotainment not far behind.

As Google Glass has shown, there is huge mass-market interest in glasses or contact lenses that can provide augmented reality. But Google is just one player. Major companies such as Medtronic, Nike, Adidas, Sony, and Garmin International are all developing wearable tech for different applications.

At CES this year, iOptik showed off prototypes of its high-resolution augmented reality display, which relies on a contact lens working together with a pair of display glasses. Without the glasses, the contact lenses let wearers see the world as they normally would. Put on the glasses, however, and the wearer sees a screen with a 60-degree field of view. By comparison, Google Glass currently has a field of view of about 13 degrees.

Infotainment wearable tech still has a long way to go, but in the United States it faces one fewer hurdle than medical devices do: it does not need approval from the U.S. Food and Drug Administration (FDA). "We're in discussions with the FDA, but there's still a lot more work to do to turn this technology into a system that people can use," Otis and Parviz wrote of their research, adding that it cannot be a purely in-house effort. "We're not going to do this alone: we plan to look for partners who are experts in bringing products like this to market."