Over the past few years, we’ve seen 3D printers used in increasingly creative ways. There’s been a realization that fundamentally, a 3D printer is a full-fledged, multi-axis robotic manipulation system—which is an extraordinarily versatile thing to have in your home. Rather than just printing static objects, folks are now using 3D printers as pick-and-place systems to manufacture drones, and as custom filament printers to make objects out of programmable materials, to highlight just two examples.

In an update to some research first presented at the end of 2019, researchers from Meiji University in Japan have developed one of the cleverest 3D printer enhancements that we’ve yet seen. Called Functgraph, it turns a conventional 3D printer into a “personal factory automation” system by printing and manipulating the tools required to do complex tasks entirely on the print bed. A paper on Functgraph, by Yuto Kuroki and Keita Watanabe, was presented at the Conference on 4D and Functional Fabrication 2020 in October.

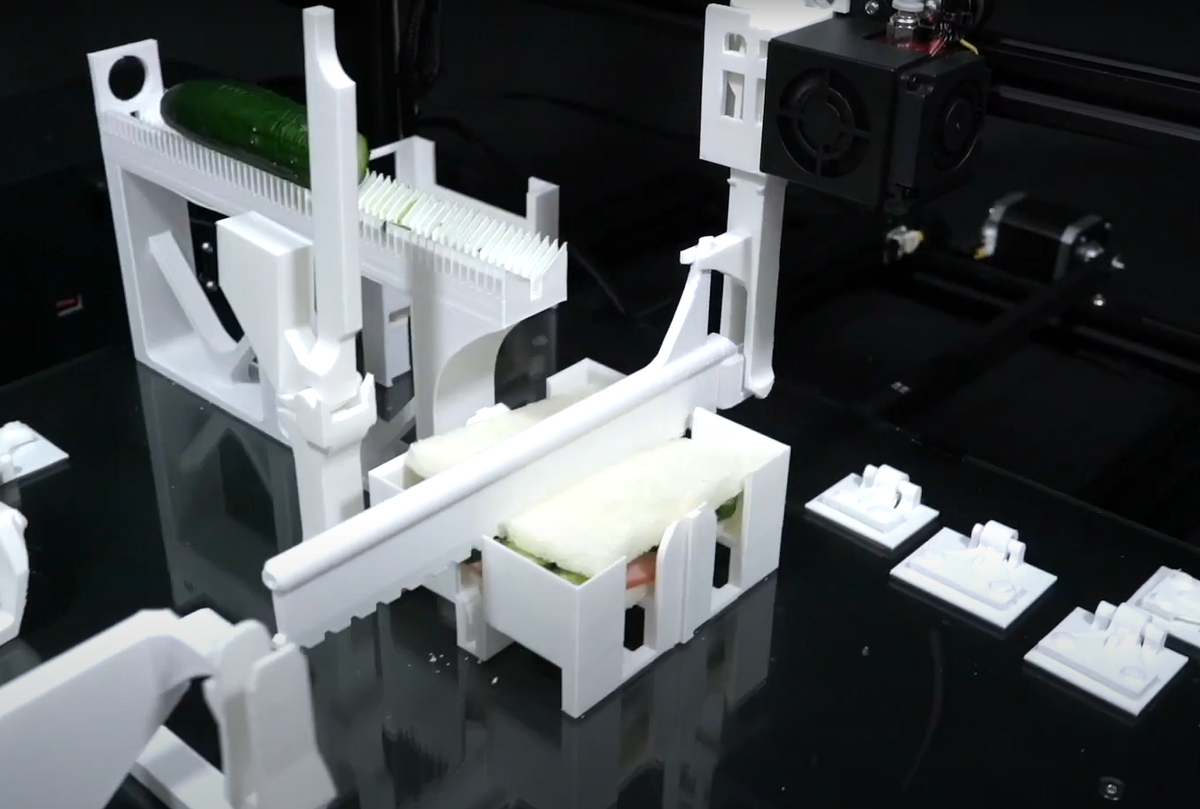

As far as I can tell, this is a bone-stock 3D printer with the exception of two modifications, both of which it presumably printed itself. The first is a tool holder on the print head, and the second is a tool release mechanism that sits off to the side. Together, these give Functgraph access to custom tools limited only by what it can print. When those tools are combined with 3D printed objects designed to interact with them (support structures with tool interfaces to snap them off, for example), it really is possible to print, assemble, manipulate, and actuate entire small-scale factories.
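To make the tool-holder idea a bit more concrete, here is a minimal sketch of how a pick-up and release cycle might be expressed as ordinary printer moves. It is not taken from the Functgraph paper: the coordinates, feed rates, clearance height, and the names `Point`, `move`, `pick_up_tool`, and `release_tool` are all hypothetical, and the assumption that a tool attaches or detaches through a simple vertical approach is mine. The only thing borrowed from real printers is the standard `G0`/`G1` linear-move G-code syntax.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Point:
    """A position in the printer's coordinate frame, in millimeters."""
    x: float
    y: float
    z: float


def move(p: Point, feed: int = 3000, command: str = "G0") -> str:
    """Format one linear move as a G-code line (G0 = travel, G1 = controlled move)."""
    return f"{command} X{p.x:.2f} Y{p.y:.2f} Z{p.z:.2f} F{feed}"


def _approach_and_retract(target: Point, clearance: float) -> list[str]:
    """Hover above the target, descend slowly onto it, then lift back up."""
    above = Point(target.x, target.y, target.z + clearance)
    return [
        move(above),                           # fast travel to a safe height
        move(target, feed=600, command="G1"),  # slow, controlled descent
        move(above),                           # lift away again
    ]


def pick_up_tool(tool: Point, clearance: float = 20.0) -> list[str]:
    """Hypothetical pick-up: press the head-mounted holder down onto a printed tool."""
    return _approach_and_retract(tool, clearance)


def release_tool(station: Point, clearance: float = 20.0) -> list[str]:
    """Hypothetical release: visit the release mechanism at the side of the bed."""
    return _approach_and_retract(station, clearance)


if __name__ == "__main__":
    # Made-up positions for a printed tool and the release station.
    program = pick_up_tool(Point(50.0, 60.0, 5.0)) + release_tool(Point(200.0, 10.0, 8.0))
    print("\n".join(program))
```

The point of the sketch is only that tool changes need no extra actuators: if the holder and release mechanism are shaped appropriately, the same X/Y/Z motion the printer already uses for printing is enough to attach, use, and drop a tool.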

Yuto Kuroki, first author on the Functgraph paper, explains his inspiration for some of the particular tasks shown in the demo video:

> The future that Functgraph aims for is as a new platform that downloads apps like smartphones and provides physical support in the real world: the realization of personal factory automation.
>
> When it comes to sandwich apps, there are many ways to look at recipes, but in the end, humans have to make them. I made a prototype based on the idea of how easy it would be if I could wake up in the morning saying "OK Google, make a breakfast sandwich."
>
> Regarding the rabbit factory, it’s an application that mass-produces and packs rabbit figures. The box on the right is an interior box to prevent the product from slipping, and the box on the left is an exterior box that is placed in the store and catches the eyes of customers. This is a realization that the manufactured figure is packed as it is and ready for shipment. In this video, two are packed in a row, so in principle it is possible to make hundreds or thousands of them in a row.
>
> The reason for making a prototype of an app to make a car is a strange story, but the idea is that if you send a 3D printer to a remote place like space, it will be able to generate what you need on the spot. Even if you’re exploring the Moon and your car breaks, I think that you can procure it on the spot again if you have a 3D printer, even without specialized knowledge, dedicated machines, and human hands. This research shows that 3D printers can realize individual desires and purposes unattended and automatically. I think that 3D printers can truly evolve into ‘machines that can do anything’ with Functgraph.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.