Functional magnetic resonance imaging (fMRI) is a remarkable technology: it can be used to do everything from recording your dreams on video to teaching you new skills while you sleep. It's also good for controlling robots, and Israeli researchers have managed to steer a robot around a room using thought alone.

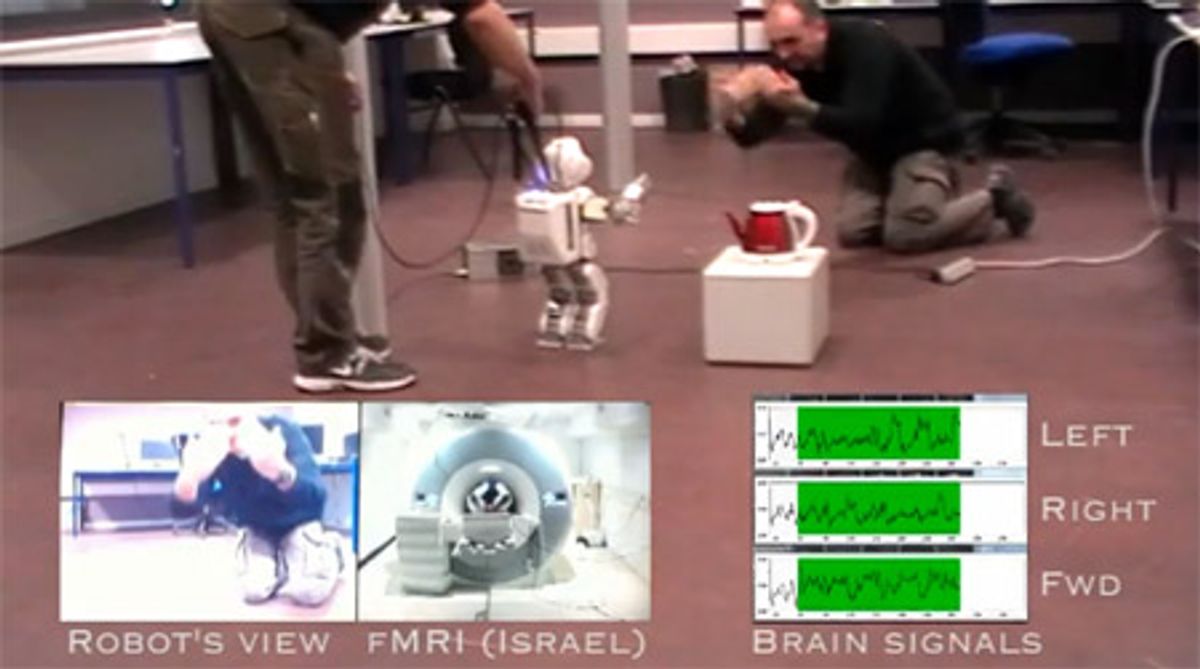

An fMRI machine detects changes in blood flow to measure brain activity in real time with a very fine degree of spatial resolution. It can detect changes so subtle that it's possible to differentiate between the activity patterns created when you think about turning left versus when you think about turning right, which is the basis for this experiment. In Israel, a researcher inside an fMRI machine thinks "walk forward" or "move right" or "move left," and a thousand kilometers away in France, a robot performs the movement based on the researcher's thoughts alone while sending back first-person video for an avatar-like experience.
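To make the "tell left from right" idea a bit more concrete, here's a toy sketch in Python. It is not the researchers' pipeline, which the article doesn't describe. It assumes each thought produces a noisy version of a fixed activity pattern, and it swaps in a simple nearest-centroid classifier on made-up numbers. Every name in it (`make_pattern`, `train`, `decode`, the 50-value "voxel" patterns) is hypothetical and invented for illustration.

```python
import random

COMMANDS = ("forward", "left", "right")


def make_pattern(template, noise, rng):
    """Return a noisy copy of a template activity pattern (synthetic data)."""
    return [value + rng.gauss(0.0, noise) for value in template]


def centroid(patterns):
    """Average a list of equal-length patterns element by element."""
    count = len(patterns)
    return [sum(column) / count for column in zip(*patterns)]


def squared_distance(a, b):
    """Squared Euclidean distance between two patterns."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def train(examples):
    """Map each command to the average of its training patterns."""
    return {cmd: centroid(patterns) for cmd, patterns in examples.items()}


def decode(pattern, centroids):
    """Pick the command whose average pattern is closest to this one."""
    return min(centroids, key=lambda cmd: squared_distance(pattern, centroids[cmd]))


rng = random.Random(0)

# Assumption: one invented 50-value template per command stands in for
# the activity pattern a real scanner would record.
templates = {cmd: [rng.uniform(-1, 1) for _ in range(50)] for cmd in COMMANDS}

# Assumption: 20 noisy "scans" per command as training data.
training = {
    cmd: [make_pattern(template, 0.3, rng) for _ in range(20)]
    for cmd, template in templates.items()
}
centroids = train(training)

# A fresh noisy scan of someone "thinking left", decoded into a command
# that could then be sent on to the robot.
new_scan = make_pattern(templates["left"], 0.3, rng)
command = decode(new_scan, centroids)
print(command)
```

Real fMRI data is far messier than this, with many more voxels and much more noise, so treat the sketch as a picture of the general approach (learn what each thought looks like, then match new scans against it) and nothing more.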

There are a few reasons why this method of brain control is different from (and arguably better than) the ones we've seen in the past. Other tools, like Emotiv's EPOC headset, can detect specific patterns of brainwaves that can then be used to send commands to a robot, but to get that to work, you have to train your brain to reliably create those brainwaves. fMRI, on the other hand, can (sort of) read your thoughts directly, with a vaguely alarming degree of accuracy, meaning that very little training is necessary: just picture a robot doing something, the fMRI will suck that picture straight out of your brain, and then get the robot to do the same thing. The other big advantage is that you don't need an implant of any sort, just a ridiculously expensive machine. But who knows, maybe at some point in the future, baseball caps and sunglasses will all come with fMRI systems inside them.

Obviously, we're looking at just some preliminary proof-of-concept research here, but there's a lot of potential that this technology could eventually realize. The overall goal of the project is to enable people with physical disabilities to control robotic systems, which would be awesome, but there are also plenty of ways in which direct fMRI control could be used to enhance robots in the commercial and military sectors. All we really need is for someone to come up with a DIY fMRI kit in the couple-hundred-bucks range, and we'll be good to go.

[ VERE Project ] via [ New Scientist ] and [ Extreme Tech ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.