Technology Priorities for the White House

What issues related to technology will the next U.S. President confront?

In a few short weeks, U.S. voters will head for the polls to choose a new President. Much has changed in the technological world since Bill Clinton took office in 1993. Back then there was no talk of dotcoms or Linux or the New Economy. People could surf the Web, true, but not too far and not too fast.

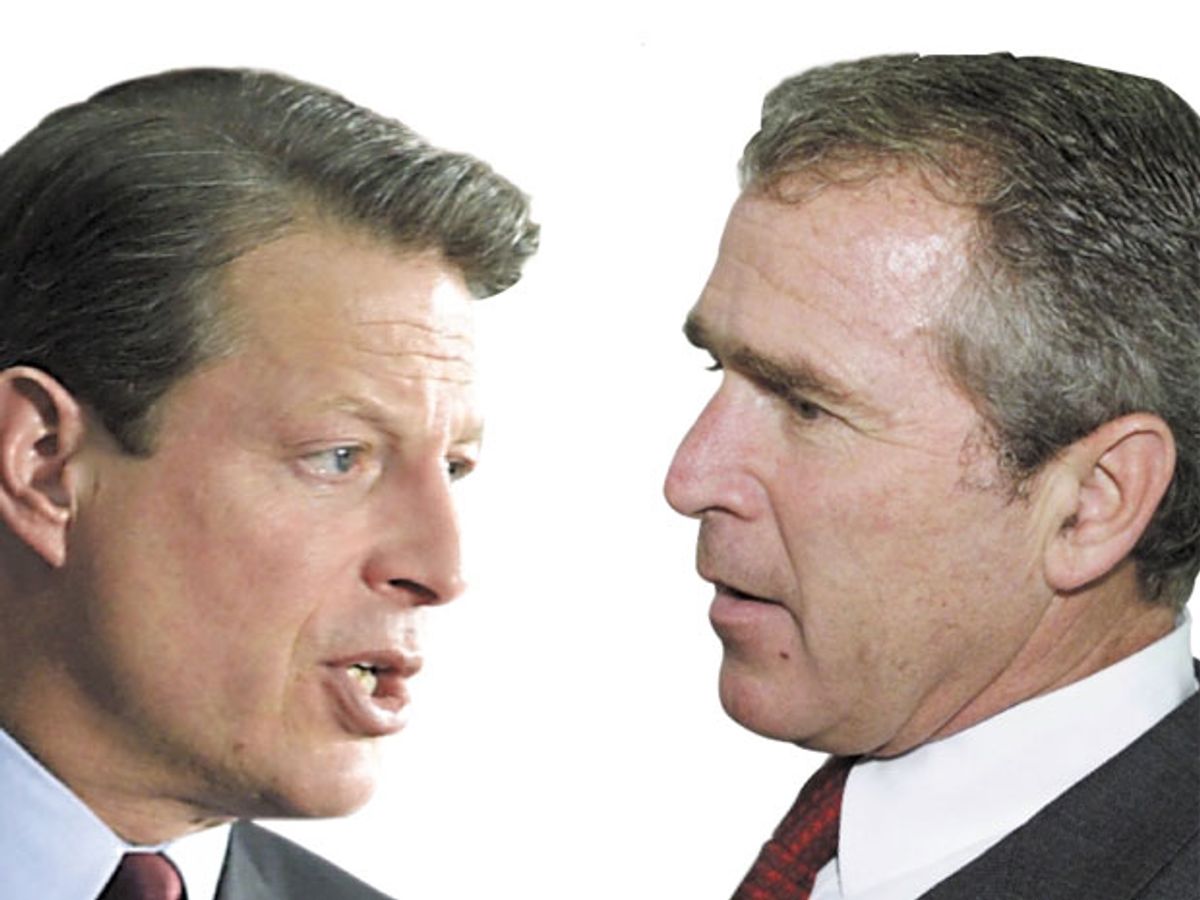

Since then, the U.S. economy has undergone an unprecedented turnaround, fueled largely by technological innovation. That's the conventional wisdom, at least, and it has also been a kind of chirping refrain in the campaigns of the leading presidential candidates: Vice President Al Gore and Texas Governor George W. Bush. Both offer up glowing words about the social, political, and economic wonders wrought by technology. Both promise, if elected, to keep that spirit of innovation alive. And both have marshaled an impressive cadre of campaign advisers from the high-tech industry. In Gore's camp are venture capitalist John Doerr, Genentech's Arthur Levinson, and Netscape cofounder Marc Andreessen. Bush supporters include Dell Computer's Michael Dell, Cisco Systems' John Chambers, and Intel chairman Andy Grove.

That said, the average U.S. citizen is unlikely to vote solely on the basis of a candidate's views on technology. "The issues of greatest concern to Americans--like social security and taxes--either are not rooted in science and technology or are not perceived as rooted in them," observed Jon M. Peha, an expert on computer and telecommunications policy at Carnegie Mellon University, Pittsburgh. "Serendipity may drive the extent to which science and technology issues become part of the 2000 campaign. If high gas prices persist, the candidates will debate energy policy....If there is an egregious privacy violation, they will debate privacy."

And where do Bush and Gore stand on technology? Some onlookers complain that the candidates are too similar, and certainly their views on Internet taxation, immigration, free trade, and more are remarkably alike [Table 1]. But there are differences, too, according to Gary Chapman, a technology policy expert at the University of Texas, Austin. "Gore's agenda is similar to what the Clinton administration has been advocating over the last eight years, which is a combination of rising support for R&D and a handful of targeted investments in civilian technologies," Chapman told IEEE Spectrum, "whereas Governor Bush's emphasis is more on the private sector agenda, and a fairly significant rise in military R&D." The assumption is that a Bush administration would give business a somewhat freer hand by, for example, easing up on antitrust laws; so far, though, the candidate has avoided commenting on the Microsoft Corp. case.

In terms of tech savvy, Gore is hard to match. He has written a sound book on global warming and prides himself on his wide knowledge of the issues. He can also point to a long track record, first as a U.S. senator and then as Vice President, of supporting technological initiatives, including the Internet, telecommunications reform, and encryption software.

Less conversant with science and technology, Bush defers many of the policy details to his campaign advisers. On foreign policy, he looks to Soviet expert Condoleezza Rice, whose hawkish views crystallized during her tenure with former President George Bush's administration. The campaign's senior technology adviser is former Congressman Robert S. Walker, who, as the controversial chair of the House Committee on Science in the mid-1990s, cut funding for high-performance computing and renewable energy research and tried to kill off the Department of Commerce's civilian technology programs. "Walker's got a very distinct record of opposing just about everything that Al Gore believes in," Chapman said.

Of the so-called third-party candidates, Green Party nominee Ralph Nader has had the most to say about technology--not just in this election but throughout his career. Back in the '60s, the consumer advocate made his mark questioning unsafe cars, and he has also pushed for safety devices like airbags. "Nader and his organizations have been strongly for open access regulation [for telecommunications services], strongly for breaking up Microsoft, and withering in their criticism of corporate control over the technology agenda," noted Chapman.

Pat Buchanan, the Reform Party's choice, has tended to address technology only where it intersects with his protectionist views--for example, objecting to the hiring of offshore computer programmers and to the normalizing of trade relations with China. Representing the Natural Law Party is Harvard-educated physicist John Hagelin, the only contender with a technical background. His views, though, are more New Age than New Economy; in his platform for "prevention-oriented government," he endorses renewable energy and organic farming, and opposes genetically engineered crops.

Just how far the next President will be able to push his agenda will of course hinge on who controls Congress and who gets appointed to the cabinet and other senior posts. Indeed, "it's critically important for the new President to make science and technology appointments early," said Erich Bloch, a former head of the National Science Foundation, in Arlington, Va. That would not only allow the new appointees to take advantage of the transition period but also "send the right signal--that science and technology is indeed important."

Regardless of who wins in November, the next Administration will confront a raft of technology-related problems. Some of these, like biotechnology and on-line privacy, have only begun to be taken up in policy circles. In more mature areas, like energy, air quality, and telecommunications, recent Federal rules may have unexpected downstream effects. And in issues that cross national borders, such as global warming, the so-called digital divide, and military policy, whatever path the new government takes will likely influence the course of events worldwide.

Here, then, are some of the major technology issues that will confront the next occupant of the Oval Office.

The politics of genomics

The three billion genetic instructions that form a blueprint for human life have now been logged, or nearly so. Most immediately, the human genome map will help biotechnology and drug companies spot medically relevant genes and target them for new tests and treatments. And the day cannot be far off when screening a person's genetic profile will be as routine as a cholesterol test. In the absence of solid protections, though, such information could become a tool for discrimination in hiring and insurance coverage.

Then there is the matter of ownership. The patenting of life forms--whether human, animal, or plant--is now standard practice in the biotech industry. (Just how standard was seen last March, when President Bill Clinton and UK Prime Minister Tony Blair announced that genetic data about humans "should be freely available to scientists everywhere"--triggering a massive sell-off of biotech stocks.) Some time before the end of this year, the U.S. Patent and Trademark Office, in Arlington, Va., will issue detailed new guidelines for gene-related patents. Companies welcome the new rules, said Charles Craig of the Biotechnology Industry Organization, a trade group in Washington, D.C., but also harbor some reservations about their enforcement and whether the patent office has enough funding to handle the burgeoning load of genomics patent applications.

The use of biotechnology in agriculture is a thornier issue. Fears that genetically modified foods are unhealthy to eat, bad for the environment, or both, have sparked widespread opposition in Europe, and such sentiments are building in the United States, too. Complicating matters is that biotech regulation now falls to three U.S. agencies, which can make oversight uneven.

The myriad costs and benefits--environmental, ethical, and economic--of genetic research have yet to be articulated in a comprehensive Federal policy. Managing a future in which humans increasingly "play God" will therefore require clear-eyed consideration by the next President.

The deepening digital divide

Nearly half of all U.S. households now own a computer, and as many as 90 million individuals use the Internet regularly, at work, school, or home. But a yawning gap still separates the digital haves and have-nots. According to the Department of Commerce, people in the highest income bracket are seven times more likely to own a computer than those in the bottom bracket.

Nor does the digital divide stop at U.S. borders. At the latest Group of 8 summit in Japan, a major theme was the growing global imbalance in digital access. With its enormous power to spread information and create communities, the Internet can indeed help level the proverbial playing field--but not if the field remains off limits to billions of people.

Local, state, and Federal government and the private sector all recognize the social and economic sense in closing the digital divide. To date, efforts have largely focused on wiring schools and libraries. That's too simplistic, argues Langdon Winner, a professor of science and technology studies at Rensselaer Polytechnic Institute in Troy, N.Y. "Politicians have seized upon the idea of the Internet revolution as a talisman, without thinking deeply about what children need," Winner said. "We'd much rather talk about the hardware and software of education than its human dimensions." Gender, geography, education, age, occupation, and race all factor into who has access and who does not. And access means more than just a computer, a phone line, and a modem--it also means having the finances, technical know-how, and knowledge to get at relevant content.

What more will the next Administration do to close the digital divide, both at home and abroad?

The heat is on

Over the last decade, a scientific consensus has formed that the man-made threat to Earth's climate is real. Today many countries, particularly in Europe, are targeting the problem through serious research and policy-making. What's more, a number of major car and oil companies, including British Petroleum, Royal Dutch Shell, and Ford Motor, that once actively campaigned against climate change initiatives have now pledged to radically cut their greenhouse-gas emissions--in some cases beyond those mandated by the 1997 Kyoto Protocol.

Contrast that with the fairly meager U.S. response. "What should be a thoughtful debate about the best way to approach the problem has turned into a crazy discussion over whether or not the science is real," noted M. Granger Morgan, a professor of engineering and public policy at Carnegie Mellon. The United States has yet to ratify the Kyoto Protocol, and an aggressive strategy to counter global warming is still absent, despite public concern and industry receptiveness.

Time is running out. This fall, the Government will release an assessment of the country's progress on global warming. It's unlikely that the report will find much to praise. The next President will need to consider the report's findings, and the growing body of scientific evidence, to decide what comprehensive steps should be taken.

Waiting to inhale

The Environmental Protection Agency (EPA), Washington, D.C., has estimated that implementing its most recent regulations on ozone and particulate matter, taken under the 1990 amendments to the Clean Air Act of 1970, will cost some US $50 billion annually. The Supreme Court is now weighing whether EPA should factor such costs into its regulatory calculus. Some 40 leading U.S. economists, organized by Robert E. Litan at the Brookings Institution and Robert W. Hahn of the American Enterprise Institute for Public Policy Research (both in Washington, D.C.), have entered an amicus brief in the case, American Trucking Association vs Carol M. Browner, EPA Administrator, asking the justices to overturn a lower court ruling that barred the agency from taking into account such cost considerations.

The high court's impending action "will be a big deal," Hahn told Spectrum. Not having to consider rigorous cost-benefit analysis has given EPA unfettered authority to set the nation's energy policies, he argued.

One telling example is the EPA's vigilant efforts to clean up coal power. Last fall, it sued seven of the country's largest utility companies for failing to install antipollution devices on upgraded coal-burning plants. It's a massive action, involving $25 000-a-day fines, plus outlays for new equipment. Such a move could also radically alter the country's energy balance, according to the Electric Power Research Institute, Palo Alto, Calif. Under current policies, the institute estimated, the share of coal in U.S. electricity production will drop from 50 percent to 10 percent by the year 2020, while natural gas will rise from 15 percent to 60 percent. The cost, from construction of new plants and delivery systems, rising gas and oil prices, and so on, could come to $160 billion.

Unquestionably, though, regulations have led to cleaner air. Carbon monoxide levels have fallen by 60 percent over the last two decades, sulfur dioxide by 55 percent, and ozone by 30 percent. Even so, air pollution still contributes to something like 50 000 to 100 000 deaths each year, from emphysema, lung cancer, and other diseases. And these days, pinpointing why the air is bad and what to do about it is a far more subtle and complex affair. What price should be placed on human health and the environment?

The next Administration will need to consider how well our air quality rules are working, in both the short and long term. And if current regulations are to be rolled back, what will take their place?

Getting pumped

When gasoline prices spiked earlier this year, U.S. car owners took notice. In nationwide polls, more than half said the price hikes were a serious threat to the economy, and four in 10 said the increase had caused them hardship. Meanwhile, residents of California, the first state to deregulate its electric utilities, have seen their electricity bills triple over several months' time, prompting the California Independent System Operator, in Folsom, to halve wholesale energy price caps.

Such scares come as a much-needed reminder that the United States lacks a clear strategy for meeting its energy needs beyond the near future. The restructuring of the power grid that is now taking place, not just in California, but nationwide, is intended to bring about more competition and lower prices. The problem, according to John Anderson, executive director of the Electricity Consumers Resource Council in Washington, D.C., is that "big players still would rather manipulate markets to their advantage than build the infrastructure needed to meet growing demands." As things stand, he said, "Utilities want to get bribed to invest money in new capacity."

So who will ensure that the power grid's infrastructure is adequately built up and reliably maintained, and that consumers don't get gouged? Is that a role for the Federal or state governments, or should it be left to the power industry itself?

Privacy in a networked world

When ToySmart.com Inc., an on-line toy seller based in Waltham, Mass., went into bankruptcy, it did what most failing companies do: sold off its assets. Among the lot was a computerized database, containing the names, addresses, and purchase histories of 250 000 customers. Never mind the company Web site's promise that "personal information voluntarily submitted by visitors to our site...is never shared with a third party."

This case speaks to a growing concern about life in the Digital Age: the ease with which computer technology can be used to track and record an individual's actions, with or without that person's knowledge or permission. "We are seeing technological trends that jeopardize personal privacy more and more every day," noted Carnegie Mellon's Jon Peha. "But there has yet to be a single earth-shaking event that makes it obvious to everyone just how powerful and intrusive the technology can be." When that happens, as it undoubtedly will, public debate will begin in earnest. Already, the Federal Trade Commission, Washington, D.C., estimates that buyers' concerns over on-line privacy translated into $2.8 billion in lost sales last year. If nothing is done to boost consumer confidence, that figure could grow to $18 billion by 2002.

A partial solution may lie in privacy-enhancing technology, such as data encryption and smarter browsers that reject activity-tracking "cookies." To be truly useful, though, everybody would need to adopt them, including those who profit from the current setup. Some larger companies in the dotcom world have offered their own privacy guidelines, through a consortium called the Network Advertising Initiative. "But it's not the big players you worry about," Peha said. "All you need is one company to be defrauding consumers to jeopardize the system."

Some baseline legislation will still be needed, said Peha. But regulations will need to be crafted very narrowly, he added, so that appropriate behavior is rewarded and not punished, and compliance by industry is not too expensive. "Regulating badly would be worse than not regulating at all."

The best defense?

The U.S. government wants to build a national missile defense (NMD) system. The technology of such an apparatus has proved difficult to nail down, and the very idea of it, highly controversial. Though the proposed system would hardly strain the Pentagon's $310 billion yearly budget, the fact that it is being pursued at all has alarmed even the country's closest allies. In the worst case, critics fear, it could derail ongoing arms control efforts and trigger a new arms race. Given the controversy, and the upcoming elections, President Clinton is expected to defer a decision on deployment, leaving the final say to his successor.

The NMD debate has become a pivotal one in security and foreign policy circles, said Spurgeon Keeny, executive director of the Arms Control Association in Washington, D.C., and a presidential adviser on national security from the Eisenhower through the Carter administrations. Putting in place a national missile defense system--whether of the limited kind currently in the works, or of a far more ambitious variety as envisioned by Governor Bush--would set the tone for all future negotiations with Russia and China on arms control and security matters. "The question to ask is, What's this going to do to our relations with Russia and China, and to the future of arms control agreements? I don't think [a national missile defense system] is in our best interests," Keeny said. If the United States goes ahead with the system, it will also need to contend with strong opposition from NATO allies. "We can afford a missile defense, sure, but the big cost will be the consequences on the actions and attitudes of other countries," he said.

Resolving the missile defense question will mean figuring out how the United States should comport itself as the sole superpower in a post-Cold War world. "Regardless of who is elected," Keeny predicted, "this story is far from over."

Telecom competition

The Telecommunications Act of 1996, which will be five years old this February, was intended to stir up competition in the telephone and cable industries. By lifting long-standing barriers that had, for example, kept long-distance companies from offering local service, it anticipated the formation of vertically oriented companies, offering a service mix of local, long-distance, broadband, and so on. That in turn would lead to lower prices and better service.

By most accounts, though, reform has been slow, and much of it has favored business users over consumers. Rates for both cable and telephone service have in fact risen for many households.

Consumer advocates have called for rewriting the 1996 act. That "would be opening up a can of worms," argued Robert W. Crandall, a senior fellow at the Brookings Institution. Newer unregulated technologies, be it wireless, optical fiber to the home, cable, or Internet telephony, may soon take care of the problem. "As whole networks go over to packet switching with always-on connections, the incremental costs of providing bundled and unlimited local telephone connections will become so small, the whole traditional telephone rate structure collapses," Crandall said.

And what of the spate of industry consolidations? Since 1996, SBC Communications has gobbled up Ameritech and Pacific Bell, and Bell Atlantic has acquired Nynex and GTE. A number of transnational carrier mergers are also in the works. A recent bill in Congress sought to block the purchase of VoiceStream by Deutsche Telekom, because the latter is partly owned by the German government. The fate of such deals will depend on how protectionist the new Administration is, said Vik Grover, a telecommunications analyst at Kaufman Bros. L.P. in New York City. "This is really a foreign policy question."

The next President will certainly want to assess the impact of the telecommunications act and similar legislation. Is the recent wave of consolidations and price increases simply a transition to a more competitive market? And does the current regulatory framework need fine-tuning or something more drastic?

The new U.S. deficit?

According to the Information Technology Association of America (ITAA), Arlington, Va., 600 000 positions in the U.S. high-technology sector will go unfilled this year. There simply are too few qualified U.S. workers to go around, the ITAA argues; the only answer is to hire more foreigners. A bill currently circulating through Congress would lift the annual cap on H-1B visas for skilled workers from 115 000 to 195 000 for the next three years.

The bill's critics, however, believe the worker shortage has been exaggerated; the real purpose of hiring more temporary workers is to hold wages down. Far better to retrain experienced U.S. engineers and scientists to work in the New Economy, they contend.

Whether or not the high-tech workforce shortage is as dire as the ITAA claims, what is undeniable is that the number of U.S. college students opting for scientific or technical majors has fallen off dramatically in recent years. Even in fields that have seen no significant drop, such as computer science, the supply of graduates will not meet projected demand in the coming decade. With fewer U.S. students choosing scientific and technical careers, what should the U.S. government be doing to ensure that there is a next generation of scientists and engineers?

A unifying vision

If nothing else, the Cold War gave the United States a coherent theme for its scientific and technological efforts. Since the fall of the Soviet Union, though, no other unifying vision has emerged to take its place. "What we have now is a bunch of disconnected programs, with not much of a public purpose," said Gary Chapman of the University of Texas. "In a sense, we've reverted to the 'black box' model of science and technology: you pour in money and hope that something good will come out."

And while overall Federal R&D spending has been rising, the distribution of funds has been very spotty, noted Carnegie Mellon's Granger Morgan. The National Institutes of Health, Bethesda, Md., for example, have seen a 47 percent rise in funding since 1994, while the Energy Department budget has fallen 4 percent. Spending on alternative and renewable energy--which covers everything from wide-bandgap semiconductors for power electronics, superconducting cable, and advanced materials for fuel cells to technology for carbon separation--is dangerously low, Morgan said. "We're spending less money now than in the past, at a time when we need it most."

A new vision for science and technology could focus on global warming or environmental sustainability, Chapman suggested. This time around, he added, we could aim for broader participation in the policy-making process, "beyond the priesthood of science experts to the citizenry as a whole."

Willie D. Jones, Samuel K. Moore, and William Sweet did reporting for this article.