Electronic Realism

A cadre of students and educators is using the latest digital techniques to animate art and humanize technology

Wood that stirs to life whenever someone walks by. A pedestal that plays music, inspired by the scattering of clear cubes across its top. A train skylight through which subway passengers can see past the dirt and concrete to the streets above.

Students and researchers at the Interactive Telecommunications Program (ITP) in New York University's Tisch School of the Arts are taking advantage of cheap microcontrollers, multimedia computers, and ubiquitous data networks to create artworks with a surprising depth of imagination and responsiveness. Their creations are part of an effort to fit technology to human needs. Along the way, the work has yielded new approaches to enabling the disabled and educating the young and the old.

The program employs video, Web, and other interactive technologies. Those attracted to ITP range from artists who want to work in a new medium to a pediatrician who wants to use child-friendly technologies in her medical practice.

Red Burns, a founder of the program and its current chair, explained the ITP's goal. "We want to nurture a new kind of communications professional," she said, "people who can be concrete and yet imaginative, who can question, and whose knowledge of technology is really informed by a very strong ethical and aesthetic sense." For Burns, such an approach is vital to dealing with new technologies, because every one of them brings with it a Faustian bargain of unintended consequences. But "the fact that technology has a downside doesn't mean we shouldn't do it, it means we have to be aware of [that downside]," she said.

Burns wants people to think critically about how technology is used. To illustrate her point, she described a visit to a medical school to discuss a collaboration with the ITP. There she witnessed a laparoscopic procedure, during which a monitor mounted on the ceiling displayed video images from inside the patient. The surgeon had to manipulate his instruments while craning his neck and looking up at the monitor above. When Burns asked why the monitor was not placed at eye level, the surgeon responded, "Oh! Can you move the monitor? That would be great!" He had never thought to challenge how the laparoscopic technology was being implemented.

It is a willingness to criticize and explore different facets of technology that attracts funding from the ITP's sponsors, which include companies such as Intel and Microsoft. But sponsors do not direct research and do not receive any deliverables. According to Daniel Rozin, director of research and an adjunct professor with the program, these companies realize that they are narrowly focused on competing in today's market. "I don't think they can afford to...explore little niches [but] some of these niches turn out to be the mainstream of tomorrow. It's important to have artists like us exploring these niches. When they come and see what we've done, it inspires them."

The ITP was founded over 20 years ago as an outgrowth of early experiments with public cable and broadcast television systems. ITP is a graduate program, and students come with backgrounds ranging from electrical engineering to music composition. Their unifying credentials are "curiosity and imagination."

The program's credo is that artists and humanists have a great deal to contribute to providing society with a more informed view of technology. A critical and human-centered approach is "the only defense [against bad or inappropriate technology] I can think of," Burns explained.

The following pages present a selection of works from the ITP.

Wooden Mirror

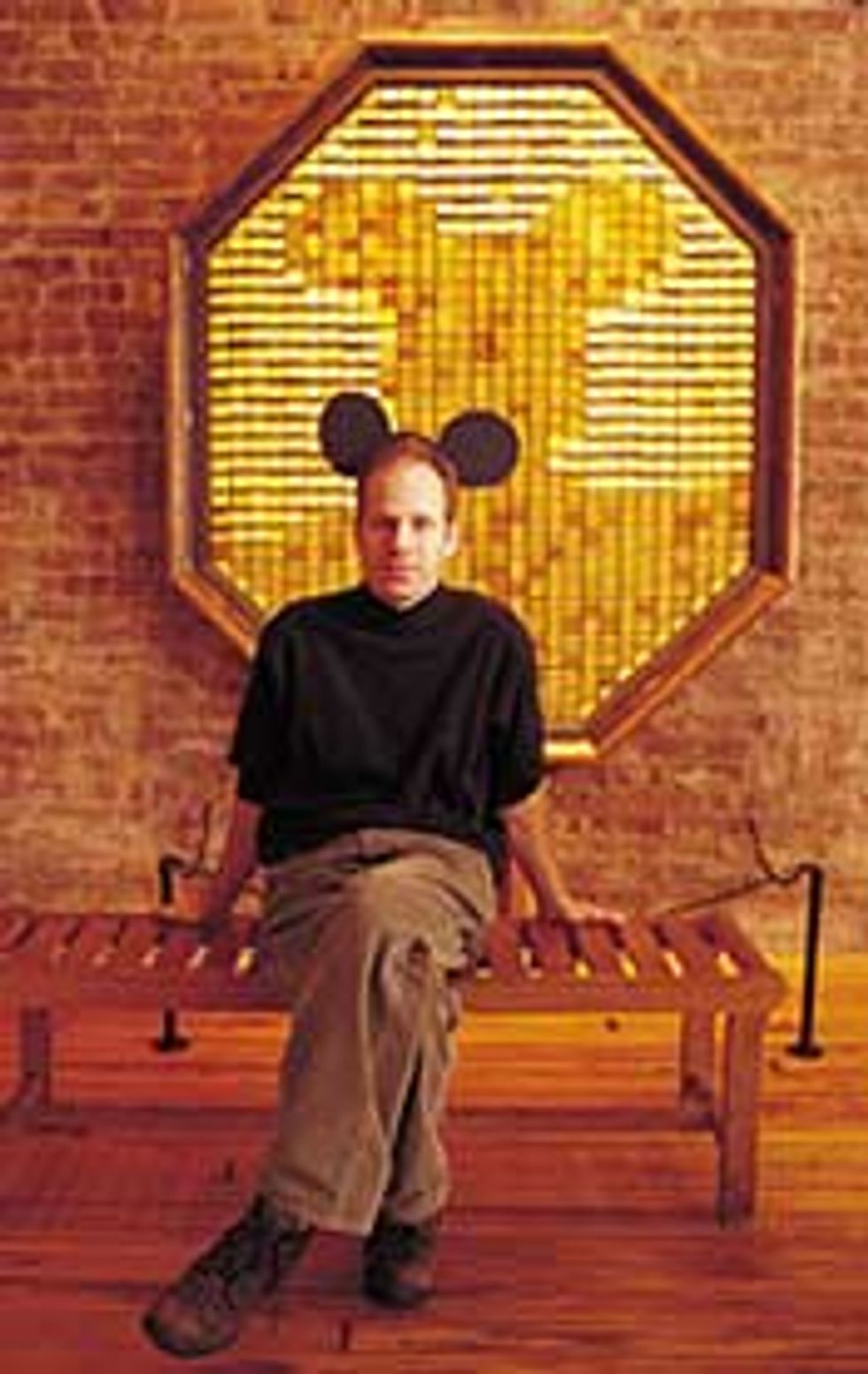

Daniel Rozin

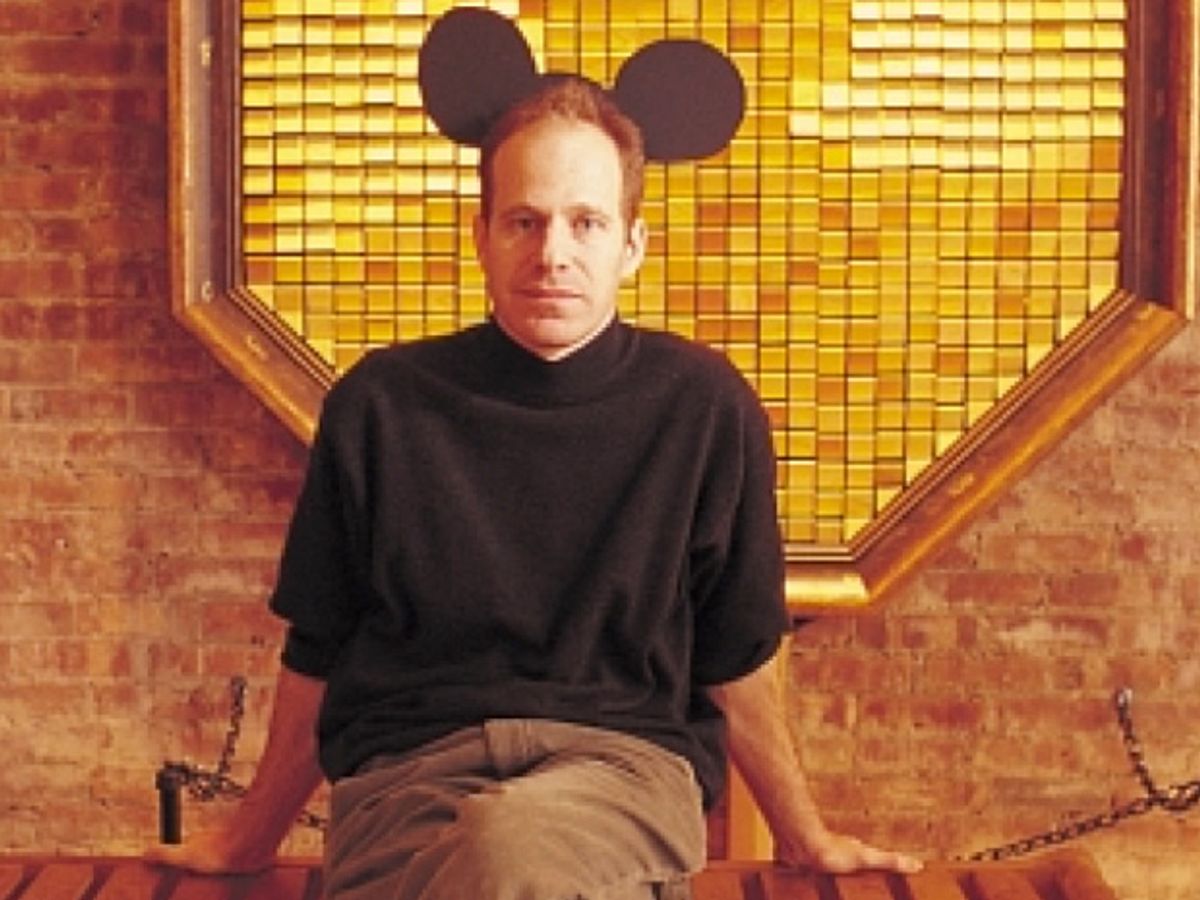

The Wooden Mirror is an impressive physical presence, over 2 meters tall and 1.5 meters wide. Move in front of it, and its surface of wooden tiles comes alive. The tiles tilt up and down, and the resulting pattern of light and shade creates an image of whatever is before the mirror. Movement is reflected instantly by the tiles in ripples of motion, accompanied by a rustling sound reminiscent of a stiff breeze in a forest. To enable the mirror to create real-time images, artist Daniel Rozin connected a small videocamera in the mirror's center to a Macintosh computer. The image seen by the camera is digitized by the computer and reduced to a 35-by-29-pixel image with an 8-bit grayscale. The computer analyzes the differences between the current image and the previous frame and sends commands only to those tiles that need to be changed, using software written by Rozin. The tiles are tilted by a total of 830 servomotors, one per tile [photo], connected to a series of microcontrollers that are linked by serial lines to the Macintosh. Each tile can take up one of 255 positions to form the image, although in regular lighting conditions typically only 10 or 12 levels of gray can be discerned. Depending on how much activity it is mimicking, the mirror can refresh between 5 and 10 times a second.
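The update strategy described above, redrawing only the tiles whose value has changed since the previous frame, can be sketched in a few lines of Python. This is a minimal illustration, not Rozin's software: it assumes each camera frame arrives as rows of 8-bit gray values, shrinks it to the 35-by-29 grid by block averaging, and treats a tile as changed when its brightness moves by more than a hypothetical `threshold`. The function names are invented for the example.

```python
from typing import List, Tuple

Frame = List[List[int]]  # rows of 8-bit gray values (0-255)


def downsample(frame: Frame, out_w: int = 35, out_h: int = 29) -> Frame:
    """Shrink a frame to out_w x out_h tiles by averaging each block of pixels.

    Assumes the input is at least out_w pixels wide and out_h pixels tall.
    """
    in_h, in_w = len(frame), len(frame[0])
    tiles: Frame = []
    for ty in range(out_h):
        y0, y1 = ty * in_h // out_h, (ty + 1) * in_h // out_h
        row = []
        for tx in range(out_w):
            x0, x1 = tx * in_w // out_w, (tx + 1) * in_w // out_w
            block = [frame[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            row.append(sum(block) // len(block))
        tiles.append(row)
    return tiles


def changed_tiles(prev: Frame, curr: Frame, threshold: int = 8) -> List[Tuple[int, int, int]]:
    """List (row, col, new_value) for tiles whose gray level moved by more than threshold."""
    updates = []
    for r, (prev_row, curr_row) in enumerate(zip(prev, curr)):
        for c, (old, new) in enumerate(zip(prev_row, curr_row)):
            if abs(new - old) > threshold:
                updates.append((r, c, new))
    return updates
```

Only the entries returned by `changed_tiles` would need to be sent on to the servo controllers, so a nearly static scene generates little traffic; that is one plausible reason the refresh rate varies with how much activity the mirror is mimicking.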

"In many ways, this is the essence of what we try to do here: taking the power of digital computation and concealing it to see how it influences something more in touch with the human condition. Wood doesn't want to be very digital, each tile is slightly different. But computation can take all this randomness and messiness and put it into an order....The piece is on the line between analog and physical vs. digital and computational," explained creator Rozin, shown sitting in front of his creation [right].

Rozin also strove to eliminate the concept of an interface. An interface "means putting some sort of membrane between you and the experience. With this, you understand immediately that it's a mirror, you know how to operate it, and no interface is involved."

Easel

Daniel Rozin

A real piece of canvas sits, glowing, on an artist's easel with a paintbrush and three paint cans. Dipping the paintbrush into a can selects one of three video feeds. Stroking the brush across the canvas paints a swath from an image frame taken from the chosen feed [right]. Each time the brush is lifted from the canvas, another frame is taken, ready to be applied. The first feed is from a camera pointed directly at whoever is using the Easel. The second feed is from an overhead camera and shows the local surroundings. The final feed is from a cable television channel. Successive strokes overlay different moments from different feeds to create infinitely variable collages [small photographs].
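The painting behavior can be modeled as a canvas that is never cleared, a frame "held" on the brush, and a stroke that copies pixels from that frame onto the canvas under the brush. The Python sketch below is an illustration under stated assumptions rather than Rozin's code: frames are lists of gray values the same size as the canvas, each feed is a hypothetical zero-argument function returning its current frame, and the brush is a simple disk of hypothetical radius `brush_radius`.

```python
from typing import Callable, Dict, List, Optional

Image = List[List[int]]  # rows of gray values; canvas and feed frames share one size


class EaselModel:
    """Hypothetical model of the Easel's painting logic (not the original software)."""

    def __init__(self, width: int, height: int,
                 feeds: Dict[str, Callable[[], Image]], brush_radius: int = 3) -> None:
        self.width, self.height = width, height
        self.feeds = feeds  # feed name -> function returning that feed's current frame
        self.brush_radius = brush_radius
        self.canvas: Image = [[0] * width for _ in range(height)]  # no clear(): strokes accumulate
        self.feed: Optional[str] = None
        self.held_frame: Optional[Image] = None

    def dip(self, feed_name: str) -> None:
        """Dipping the brush in a can selects a feed and grabs a frame from it."""
        self.feed = feed_name
        self.held_frame = self.feeds[feed_name]()

    def lift(self) -> None:
        """Lifting the brush grabs a fresh frame from the current feed for the next stroke."""
        if self.feed is not None:
            self.held_frame = self.feeds[self.feed]()

    def stroke_at(self, x: int, y: int) -> None:
        """Copy the held frame's pixels inside a disk around (x, y) onto the canvas."""
        if self.held_frame is None:
            return  # the brush has not been dipped yet
        r = self.brush_radius
        for yy in range(max(0, y - r), min(self.height, y + r + 1)):
            for xx in range(max(0, x - r), min(self.width, x + r + 1)):
                if (xx - x) ** 2 + (yy - y) ** 2 <= r * r:
                    self.canvas[yy][xx] = self.held_frame[yy][xx]
```

Calling `dip`, then `stroke_at` repeatedly as the brush moves, then `lift`, reproduces the dip, paint, and lift cycle. Because the class offers no way to reset `canvas`, each visitor starts from whatever the previous one left.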

An infrared light-emitting diode (LED) built into the brush emits light through the bristles; when they touch the canvas, they create a spot that is picked up by another camera located inside the Easel. The spot's position is recorded by a personal computer that merges the feeds and creates the working image, which is projected onto the back of the canvas. The paint cans use a simple light beam that is broken whenever the brush is dipped into the can to pick a feed.
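Locating the brush reduces to finding a bright infrared spot in the image from the camera inside the Easel. One common way to do this, assumed here rather than taken from the Easel's software, is to threshold the frame and take the centroid of the bright pixels; the `threshold` value is hypothetical and would depend on the camera and the LED.

```python
from typing import List, Optional, Tuple


def find_brush_spot(ir_frame: List[List[int]], threshold: int = 200) -> Optional[Tuple[float, float]]:
    """Return the (x, y) centroid of pixels at or above threshold, or None if there are none."""
    sum_x = sum_y = count = 0
    for y, row in enumerate(ir_frame):
        for x, value in enumerate(row):
            if value >= threshold:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None  # no spot: the brush is not touching the canvas
    return sum_x / count, sum_y / count
```

Returning `None` when no pixel passes the threshold doubles as a test of whether the brush is touching the canvas at all, which is the event that ends one stroke and triggers a new frame.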

"Conceptually it's organized into three spheres. The first one is you, the second is where you are right now, and the third is the [outside world]. The last is a complete mystery because you don't know who or what's on the television," said Daniel Rozin, who also created the Wooden Mirror. "Lifting the brush and [getting a new frame] lets you control time."

Rozin wanted to make a break with the normal way people produce digital objects. "If you start [a word processor or a drawing program], you get a clean slate very easily. But life doesn't give you clean slates that readily." The canvas cannot be cleared by the user. "You start with whatever the people before you were doing."

The choice of video feeds, instead of solid colors, to paint the canvas was made to facilitate expression. "If you just painted colors, people wouldn't use it as happily, they'd be too self-conscious...but with video they just have fun, it sort of releases them," observed Rozin.

Subway Skylights

Greg Shakar, Maya Gorton, & Vardit Gross

Subway Skylights is a prototype system designed for installation in subway cars. A map on the car wall displays the position of the train along its route, while a computer-controlled image on the ceiling acts as a window onto the streets above. In this prototype, the direction and speed of the subway car are set with a control lever; in an actual installation they would be determined automatically by the subway's own positioning systems or an internal accelerometer.

The prototype uses a Macintosh G4 with two video cards, one driving the map and the other the skylight. A microcontroller reads the control lever and sends serial data to the G4, which skips or holds frames of prerecorded video according to the "speed" the lever indicates.
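Skipping or holding frames amounts to advancing a playback position by a variable step each time the display is refreshed. The sketch below assumes the lever reading has already been converted to a hypothetical `speed` value, expressed as a multiple of the speed at which the footage was shot (negative for reverse); the serial format the actual prototype used is not described in the article.

```python
class SkylightPlayer:
    """Hypothetical playback model: pick which prerecorded frame to show on each refresh."""

    def __init__(self, num_frames: int) -> None:
        self.num_frames = num_frames
        self.position = 0.0  # fractional frame index into the footage

    def next_frame(self, speed: float) -> int:
        """Advance by `speed` frames and return the index of the frame to display.

        speed 1.0 plays at the recorded rate, values above 1 skip frames, values
        below 1 repeat (hold) frames, 0 freezes, and negative values play backward.
        """
        self.position = min(max(self.position + speed, 0.0), self.num_frames - 1)
        return int(self.position)
```

With a speed of 0.5 each frame is shown twice, a speed of 2 skips every other frame, and a speed of 0 freezes the view, as when the train is stopped in a station.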

Greg Shakar, one of the creators of Skylights, said the intention is to give passengers a sense of place. "When you ride the subway, you tend to get on, travel a few miles, and when you get off, you're magically somewhere else. Yet there's so much more of the city to see... It's comforting to have a real sense of space."

Ideally, the system would use live video from surface cameras and interpolate (as a function of position) between them, but "the system is scalable--if you can only afford prerecorded video, that's fine." There are also obvious advertising revenue opportunities for subway authorities from businesses located along the subway route. The artists ultimately intend to propose the system to subway operators in cities around the world.
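The live-video variant could be approximated by cross-fading between the two surface cameras that bracket the train's current position. This is a deliberately simple stand-in for whatever interpolation the artists have in mind: camera positions are hypothetical distances along the route, sorted in increasing order, and frames are flattened lists of pixel values.

```python
from bisect import bisect_right
from typing import List, Tuple


def camera_blend(camera_positions: List[float], train_pos: float) -> Tuple[int, int, float]:
    """Return (i, j, w): show (1 - w) * camera i + w * camera j.

    camera_positions must be sorted; positions outside the covered stretch
    snap to the nearest end camera.
    """
    if train_pos <= camera_positions[0]:
        return 0, 0, 0.0
    last = len(camera_positions) - 1
    if train_pos >= camera_positions[last]:
        return last, last, 0.0
    j = bisect_right(camera_positions, train_pos)  # first camera strictly ahead of the train
    i = j - 1
    span = camera_positions[j] - camera_positions[i]
    return i, j, (train_pos - camera_positions[i]) / span


def cross_fade(frame_a: List[int], frame_b: List[int], w: float) -> List[int]:
    """Linearly blend two frames of equal length (lists of pixel values)."""
    return [round((1 - w) * a + w * b) for a, b in zip(frame_a, frame_b)]
```

A linear cross-fade is the cheapest choice and keeps the scaling argument intact: the same code works whether the cameras are two blocks apart or two stations apart.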

Imitations

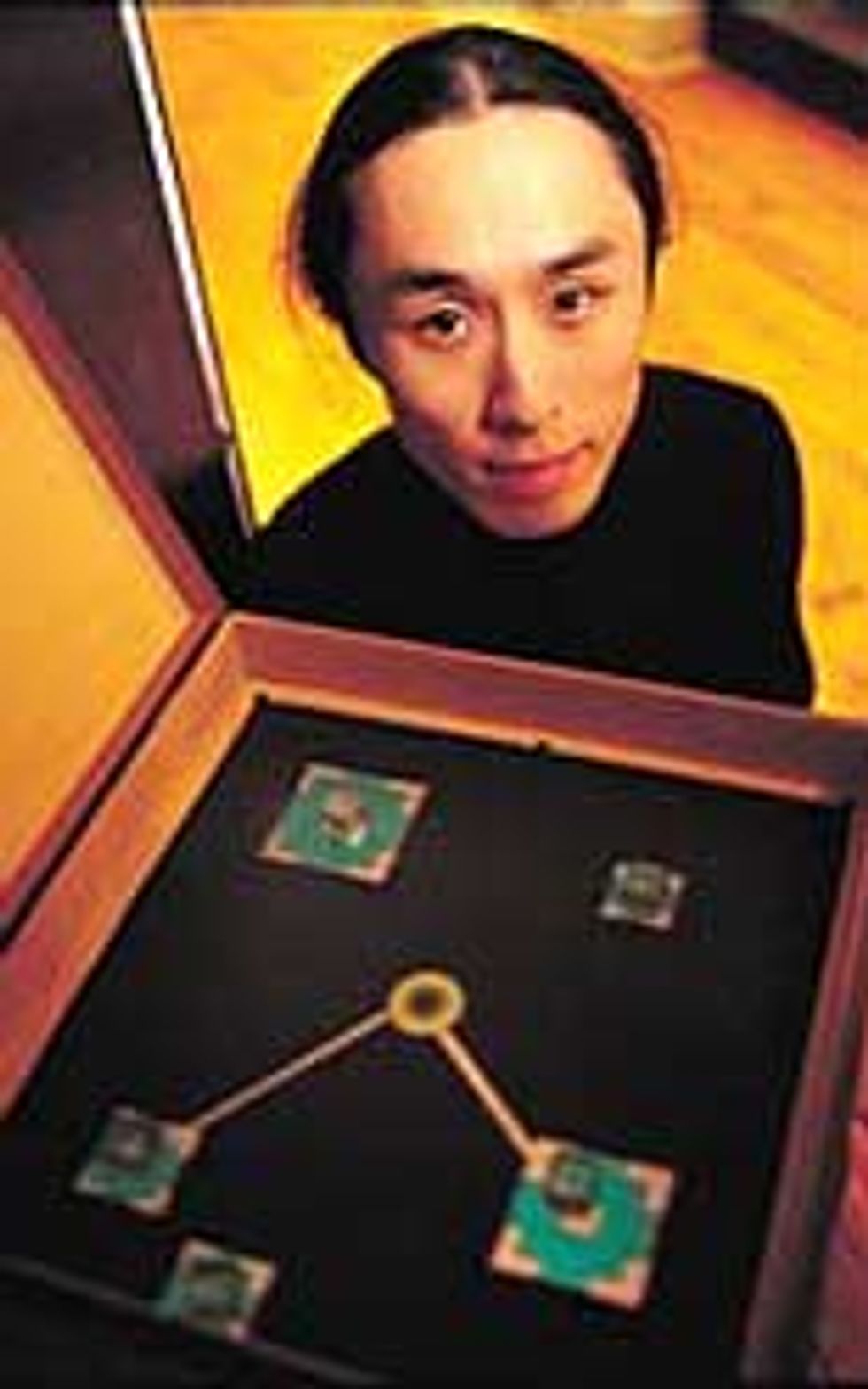

James Tu

At first glance, Imitations is a simple wooden pedestal. Lifting a lid on the top reveals an inky black surface with a pulsing white circle in the middle. Placing one of five small clear plastic cubes onto the surface provokes an immediate response--a multicolored square appears under the cube. A white line streaks from the pulsing center to the square and a note is played. Adding the other cubes creates more colored squares and a short musical sequence, with the pitch of each note determined by the cube's distance from the center. Once the cubes have settled into position, Imitations introduces a second voice, creating canons and other musical transformations from the original notes, until eventually the music dies away, waiting for another throw of the cubes.
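Two of the musical rules described here are easy to state in code: a pitch that depends on a cube's distance from the center, and a canon, in which a second voice repeats the melody after a delay. The sketch assumes that farther cubes sound higher, uses MIDI note numbers over a hypothetical range of 48 to 84, and treats a melody as a list of (start beat, note) pairs; Tu's actual mappings are not given in the article.

```python
import math
from typing import List, Tuple

Note = Tuple[float, int]  # (start time in beats, MIDI note number)


def pitch_for_cube(x: float, y: float, cx: float, cy: float,
                   max_radius: float, low: int = 48, high: int = 84) -> int:
    """Map a cube's distance from the center (cx, cy) to a MIDI note; farther means higher."""
    distance = min(math.hypot(x - cx, y - cy), max_radius)
    return low + round(distance / max_radius * (high - low))


def canon(melody: List[Note], delay: float, transpose: int = 0) -> List[Note]:
    """Add a second voice repeating the melody `delay` beats later, shifted by `transpose` semitones."""
    follower = [(start + delay, note + transpose) for start, note in melody]
    return sorted(melody + follower)
```

For example, `canon([(0, 60), (1, 62)], 2, 12)` adds an octave-higher echo starting two beats later, giving `[(0, 60), (1, 62), (2, 72), (3, 74)]`.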

A camera beneath the playing surface captures images of the surface and transmits them to a computer, which compares what it sees with a reference frame of an empty surface in order to detect motion. Large motion--such as that due to a hand placing the cubes--is discarded. The computer notes the position of any small objects thus detected, and projects a colored square onto the underside of the playing surface while playing the appropriate tone.
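The detection step can be sketched as background subtraction followed by grouping changed pixels into connected regions and keeping only the small ones. This is one plausible reading of the description, not Tu's code: the difference threshold and the minimum and maximum region sizes are hypothetical, and discarding oversized regions is used here as the stand-in for ignoring large motion such as a hand.

```python
from collections import deque
from typing import List, Tuple

Frame = List[List[int]]


def detect_cubes(frame: Frame, reference: Frame, diff_threshold: int = 30,
                 min_area: int = 4, max_area: int = 400) -> List[Tuple[float, float]]:
    """Return (x, y) centroids of small regions that differ from the empty-surface reference."""
    h, w = len(frame), len(frame[0])
    changed = [[abs(frame[y][x] - reference[y][x]) > diff_threshold for x in range(w)]
               for y in range(h)]
    seen = [[False] * w for _ in range(h)]
    objects = []
    for y in range(h):
        for x in range(w):
            if not changed[y][x] or seen[y][x]:
                continue
            # Flood-fill one connected region of changed pixels (4-neighborhood).
            queue = deque([(y, x)])
            seen[y][x] = True
            pixels = []
            while queue:
                py, px = queue.popleft()
                pixels.append((py, px))
                for ny, nx in ((py + 1, px), (py - 1, px), (py, px + 1), (py, px - 1)):
                    if 0 <= ny < h and 0 <= nx < w and changed[ny][nx] and not seen[ny][nx]:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            # Tiny regions are treated as noise; large ones (e.g. a hand) are discarded.
            if min_area <= len(pixels) <= max_area:
                cy = sum(p[0] for p in pixels) / len(pixels)
                cx = sum(p[1] for p in pixels) / len(pixels)
                objects.append((cx, cy))
    return objects
```

Each returned centroid would then give the position at which to project a colored square and, through the distance from the center, the note to play.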

The artist, James Tu [above], was trained as an electrical engineer, but always had a strong musical interest and wanted "to create alternate ways to allow others to play music." He also wanted "a free-form interface...people like that it has a magical quality about it, you move these cubes that have nothing inside them and it actually registers." The musical transformations are computer-selected: "the user is somewhat in control of the composition, but if you gave him or her total control and allowed total randomness, it might not sound as good."

Copper Urchin

Greg Shakar, Tracy Gross, & Katherine Moriwaki

The Copper Urchin is a copper sphere mounted atop a pole. Flexible wires sprout from the surface. Bending one of these wire antennas causes an LED to light up near the antenna's base and a sound to be played that can correspond to a musical note, bird calls, or whatever the artists have chosen.

Each antenna of the Copper Urchin is embedded in a metal cylinder. When an antenna is bent, it makes contact with the cylinder and closes a circuit. Inside the Copper Urchin, 16-channel multiplexers pass the signals to a microcontroller, with one multiplexer channel per antenna. The microcontroller maps each signal to a MIDI (Musical Instrument Digital Interface) command. The note is placed in a software queue and then sent to an external MIDI player for conversion into the desired sound or tone. A MIDI message identifies the note to be played, and a separate instrument (program) number tells the player which sound to synthesize. By changing which sound corresponds to which instrument number, a MIDI device can sound like any instrument (real or imagined).
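In MIDI, a note-on message is three bytes (a status byte of 0x90 combined with the channel number, then a note number and a velocity, each 0 to 127), and a program-change message (status 0xC0 combined with the channel, then a program number) selects the instrument. The sketch below shows how a scan over the antenna channels might turn newly bent antennas into queued note-on messages. The per-antenna note table, the fixed velocity, and the rule of triggering only on a new contact are assumptions rather than details of the Urchin's firmware, and the code is Python rather than microcontroller code.

```python
from collections import deque
from typing import Deque, List, Sequence

NOTE_ON = 0x90         # note-on status byte, OR-ed with the channel (0-15)
PROGRAM_CHANGE = 0xC0  # program-change status byte, OR-ed with the channel


def note_on(channel: int, note: int, velocity: int = 100) -> bytes:
    return bytes([NOTE_ON | (channel & 0x0F), note & 0x7F, velocity & 0x7F])


def program_change(channel: int, program: int) -> bytes:
    return bytes([PROGRAM_CHANGE | (channel & 0x0F), program & 0x7F])


class UrchinScanner:
    """Hypothetical scan loop: one multiplexer channel per antenna, one queued note per new bend."""

    def __init__(self, notes: Sequence[int], channel: int = 0) -> None:
        self.notes: List[int] = list(notes)   # MIDI note assigned to each antenna
        self.channel = channel
        self.was_closed = [False] * len(self.notes)
        self.queue: Deque[bytes] = deque()    # messages waiting to go to the MIDI player

    def scan(self, closed: Sequence[bool]) -> None:
        """closed[i] is True while antenna i touches its cylinder (circuit closed)."""
        for i, is_closed in enumerate(closed):
            if is_closed and not self.was_closed[i]:  # trigger only on a new contact
                self.queue.append(note_on(self.channel, self.notes[i]))
            self.was_closed[i] = is_closed
```

Sending `program_change` once before playback is what lets the same antenna table produce piano notes one day and bird calls the next, since the player decides what each program number sounds like.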

Greg Shakar, one of the creators, talked about the concept behind it: "we decided to create a control surface for sound or music that had a very tactile element to it. The antennas let people get their hands entwined with it and manipulate it."

When the Urchin is programmed for musical notes, Shakar feels it's always "good as a musician to come to something new. A control surface like this allows for expressive ideas you might not have had otherwise."

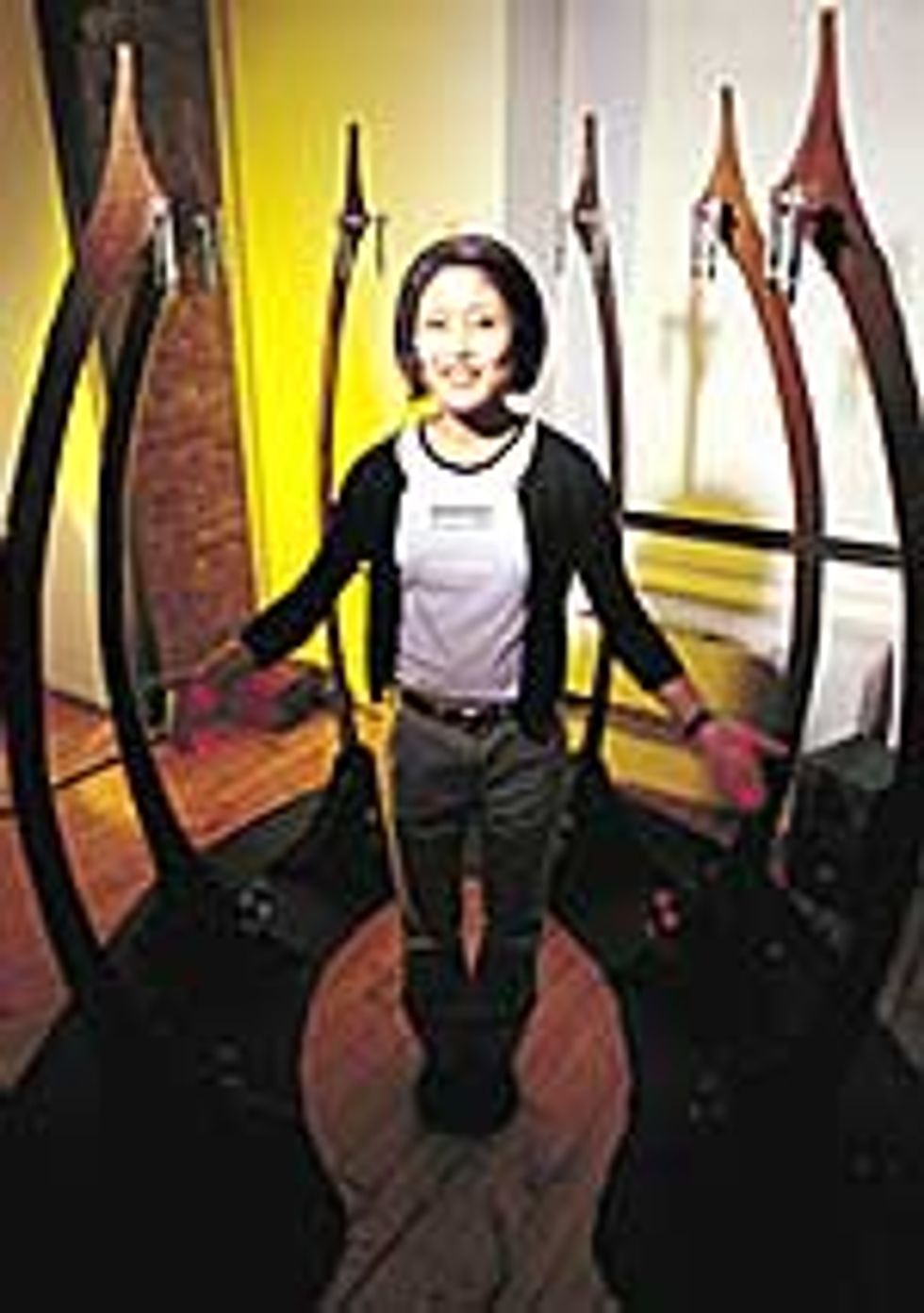

The Ribs

Jen Lewin

The Ribs is composed of six tall, graceful, curving spars. When a performer [right] passes his or her hand through one of two invisible lines that join the top of a spar to its bottom, a musical note is evoked. Varying the speed at which you move your hands or fingers changes the quality of the note, much as it does when plucking a conventional stringed instrument. Shown here in a half-moon formation, The Ribs can be configured into any pattern the performer desires.

The Ribs is organized around pairs of lasers and photocells, two beams per spar. A microcontroller detects when a laser beam is broken and measures how long it remains broken, then passes MIDI (Musical Instrument Digital Interface) commands to a synthesizer. To produce each note, the synthesizer mixes about 10 different sounds together, varying this "sound palette" with the length of time the beam is broken. Currently, the basic pitch of each beam is pre-selected, but a future version will also vary pitch based on the height at which a beam is broken.
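The timing side of The Ribs can be sketched as a small state machine per beam: note when the beam is first broken and, when it is restored, report how long it was blocked. The mapping from duration to a 0-127 value, and from that value to weights for a 10-layer sound palette, is hypothetical (here a quick pass gives a high value and the mix cross-fades between adjacent layers); the article says only that the synthesizer varies its mix with the length of the break.

```python
from typing import List, Optional


class BeamTimer:
    """Track one laser beam and report how long it was broken."""

    def __init__(self) -> None:
        self.broken_since: Optional[float] = None

    def update(self, beam_broken: bool, now: float) -> Optional[float]:
        """Call on every poll; returns the break duration in seconds when the beam is restored."""
        if beam_broken and self.broken_since is None:
            self.broken_since = now
        elif not beam_broken and self.broken_since is not None:
            duration = now - self.broken_since
            self.broken_since = None
            return duration
        return None


def duration_to_value(duration: float, shortest: float = 0.01, longest: float = 0.5) -> int:
    """Map a break duration to 0-127: a quick pass gives a high value, a slow one a low value."""
    d = min(max(duration, shortest), longest)
    return round(127 * (longest - d) / (longest - shortest))


def layer_mix(value: int, layers: int = 10) -> List[float]:
    """Cross-fade weights over `layers` sounds; two adjacent layers share the weight."""
    pos = value / 127 * (layers - 1)
    lo = min(int(pos), layers - 2)
    frac = pos - lo
    weights = [0.0] * layers
    weights[lo] = 1.0 - frac
    weights[lo + 1] = frac
    return weights
```

Reporting the duration when the beam is restored, rather than when it is first broken, mirrors a plucked string, which sounds on release; a real instrument might instead start the note immediately and adjust its timbre afterward.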

Jen Lewin created The Ribs because she wanted to combine interactivity with "an organic sculptural form." The work is intended to be both an instrument and a piece of installation art. As an installation piece, it is "tuned to a set of notes that would make it difficult for a musician to play but sound very nice for non-musicians," but any set of notes or sounds can be assigned to the laser beams that are the heart of the project.

Site Traffic

Jonah Brucker-Cohen

Site Traffic has two components, one physical, the other virtual. The physical component is a large cube-shaped box with nine depressible buttons on the top [top right]. The virtual component is a Web site that allows visitors to compose music and assign their tune to a button on the physical device [bottom left]. The Web site also lets users chat with each other and see, through a Web camera, if anyone is interacting with the physical device. When a song is created, its assigned button lights up, and passersby can press it to hear the new composition, which is also echoed back to all the visitors at the Web site. Web site visitors can also listen to compositions by clicking a virtual button.

The Web site employs the Shockwave multimedia multi-user server. Users compose music in a MIDI environment, which allows them to select notes, the key, and different instruments. When they finish a song, it is sent to the Shockwave server, which in turn sends it to a Macintosh in the physical device that plays the song. This computer is also interfaced with a pair of microcontrollers that illuminate the buttons and detect button presses.
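The bookkeeping behind the nine buttons can be sketched as a small registry. In this hypothetical model, not Brucker-Cohen's implementation, a submitted song is stored under its button number (0 to 8), any button holding a song is lit, and a press plays the song locally and echoes it to Web visitors through two callbacks that stand in for the Macintosh's audio output and the multi-user server.

```python
from typing import Callable, Dict, List, Tuple

Song = List[Tuple[str, int, float]]  # hypothetical: (instrument, MIDI note, duration in beats)


class SiteTrafficBox:
    """Hypothetical model of the nine-button box and its link to the Web site."""

    NUM_BUTTONS = 9

    def __init__(self, play: Callable[[Song], None],
                 broadcast: Callable[[int, Song], None]) -> None:
        self.songs: Dict[int, Song] = {}
        self.play = play            # stands in for the Macintosh's audio output
        self.broadcast = broadcast  # stands in for echoing to Web visitors via the server

    def submit(self, button: int, song: Song) -> None:
        """Store a song composed on the Web site under its assigned button."""
        if not 0 <= button < self.NUM_BUTTONS:
            raise ValueError(f"button must be 0-{self.NUM_BUTTONS - 1}")
        self.songs[button] = song

    def lit_buttons(self) -> List[int]:
        """Buttons that currently hold a song and should be illuminated."""
        return sorted(self.songs)

    def press(self, button: int) -> bool:
        """Handle a physical press: play the song and echo it to the Web site."""
        song = self.songs.get(button)
        if song is None:
            return False
        self.play(song)
        self.broadcast(button, song)
        return True
```

Keeping the playback and broadcast steps behind callbacks separates the button logic, which the microcontrollers and the Macintosh share, from the networking, which belongs to the server.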

Jonah Brucker-Cohen [not pictured], creator of the two-part piece, outlined its rationale. "[I wanted] a way to bring the physical and virtual world together. I was walking down the street when I thought about people who are on-line, what Web sites are they visiting. Maybe they're shopping here or doing something there, but there's no connection between them and me on the street."

Site Traffic is intended to create a physical space that "people who are on-line can transplant themselves into. Then they can interact with people who are [physically present in the space]."

Collaborating with others is an important element of Site Traffic. "The idea is to get people together on-line to share an experience that actually manifests itself somewhere tangible," Brucker-Cohen explained.

Behind the scenes at ITP

Many incoming students at the Interactive Telecommunications Program, having no previous technical experience, are "in awe of computing...and give it a status it ought not to have," said Red Burns, the program's chair. To demystify computing, students are challenged in a Physical Computing class to make something interactive in which the input device is not a mouse or keyboard, and the output device is anything but a screen. Students generally use microcontrollers such as the Basic Stamp made by Parallax Inc., Rocklin, Calif., or the PIC from Microchip Technology Inc., Chandler, Ariz. "Suddenly they have something and don't realize that they've built a computer," continued Burns.

Dan O'Sullivan runs this part of the ITP's curriculum and sees its effect on students. In his normal programming class, "a lot of the students just struggle with it because they came through an art school," he said. "Their minds don't wrap around programming that well." But when students start the Physical Computing class, "even though it's still computing, still programming, somehow because their fingers are involved...the knowledge finds a different route into their brains and it just gets in easier."

Balancing the attention given to the technology and to the arts is difficult. "It's a constant struggle for us to know at what level to teach the technology," said O'Sullivan. In many of the artworks, a microcontroller is used to handle device input/output, and more complicated processing is handed off to a PC.
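This division of labor often comes down to the microcontroller streaming simple text readings over a serial line and the PC parsing them. The `name=value` line format below is a hypothetical example, not a documented ITP convention, and the function covers only the PC side.

```python
from typing import Dict, Iterable


def parse_sensor_lines(lines: Iterable[str]) -> Dict[str, int]:
    """Parse hypothetical 'name=value' lines into the latest reading for each sensor."""
    readings: Dict[str, int] = {}
    for line in lines:
        line = line.strip()
        if not line or "=" not in line:
            continue  # skip blank or partial lines, e.g. one cut off mid-transmission
        name, _, value = line.partition("=")
        try:
            readings[name] = int(value)
        except ValueError:
            continue  # ignore readings that are not whole numbers
    return readings
```

In practice the lines might come from a serial port, for instance through the pyserial library's `readline`, decoded to text first. Keeping the microcontroller's job this small is what lets the heavier processing move between the PC and embedded hardware without changing the sensors.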

The rising tide of technology often makes this approach pay off, because tasks that were originally handled by a PC can later be moved into embedded hardware: "one year's prototype becomes something that can be generally distributed the next year," said O'Sullivan.

To Probe Further

The Interactive Telecommunications Program at New York University has a well-developed Web presence, which includes details of many artworks and projects beyond those featured in this issue, as well as information on how to apply to the program. Visit www.itp.nyu.edu/.