As hard as we’re trying, it’s going to be a very long time before we’re able to build a robotic insect that’s anywhere near as capable or versatile as a real one. So for now, we rely on a cybernetics approach to get real insects to do our bidding instead. Over the past several years, researchers have managed to steer large insects using electrical implants, a sort of brute-force method with limited real-world usefulness.

Now engineers at the R&D company Draper, based in Cambridge, Mass., are hoping to overcome those limitations by creating a cybernetic dragonfly that combines “miniaturized navigation, synthetic biology, and neurotechnology.” To steer the dragonflies, the Draper engineers are developing a way of genetically modifying the nervous system of the insects so they can respond to pulses of light. Once they get it to work, this approach, known as optogenetic stimulation, could enable dragonflies to carry payloads or conduct surveillance, or even help honey bees become better pollinators.

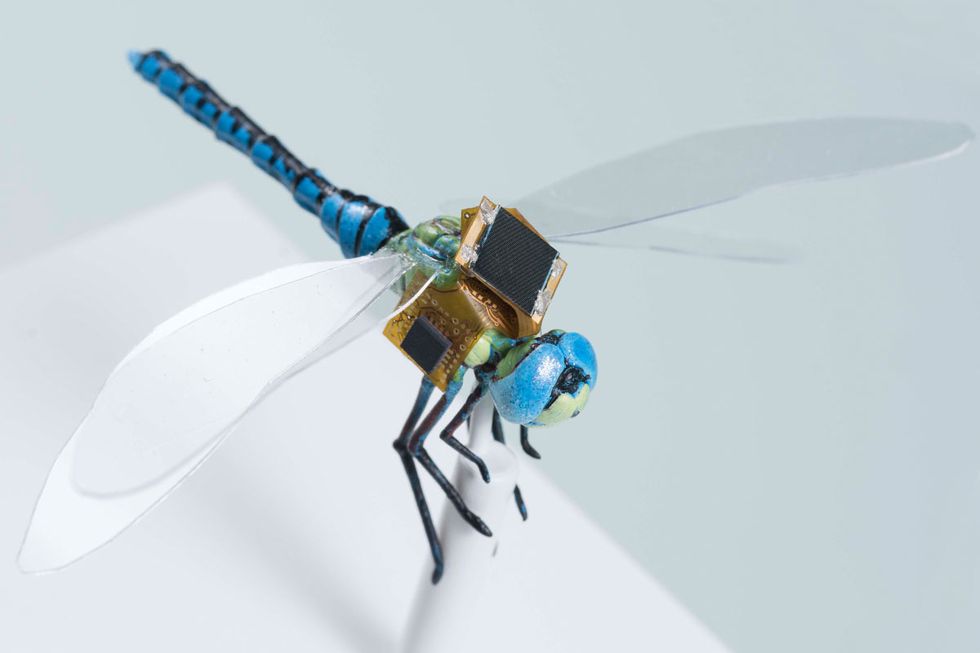

The DragonflEye project is a collaboration between Draper and the Howard Hughes Medical Institute (HHMI) at Janelia Farm. There are several unique technologies that have been implemented here: The group was able to pack all of the electronics into a tiny “backpack,” meaning that small insects (like bees and dragonflies as opposed to large beetles) can fly while wearing it. Some of the size reduction comes from the use of solar panels to harvest energy, minimizing the need for batteries. There are also integrated guidance and navigation systems, so fully autonomous navigation is possible outside of a controlled environment.

Another major advance is that, rather than using electrodes to brute-force the muscles of an insect into doing what you want, the Draper engineers are taking a more delicate approach, using what are called optrodes to activate a special type of “steering” neuron with light pulses. These steering neurons act as a bridge between the dragonfly’s sensors and its muscles, meaning that accessing them provides a much more reliable form of control over how the insect moves.

For more details, we spoke with Jesse J. Wheeler, a senior biomedical engineer at Draper and the principal investigator on the DragonflEye program.

IEEE Spectrum: How is your work different from (or related to) some of the cybernetic insects that have been presented in the past?

Previous attempts to guide insect flight used larger organisms like beetles and locusts so that they could lift relatively large electronics systems that weighed up to 1.3 grams. These systems did not include navigation systems and required wireless commands to guide flight. Two approaches were attempted: Spoofing sensory inputs to trigger flight behaviors, and directly stimulating the neurons and muscles that control the wings. The challenge with spoofing sensory inputs is that organisms often adapt and learn to ignore the sensory information that isn’t consistent with other senses. The challenge with directly controlling wings is that it degrades the insect’s inherently elegant neuromuscular control required for sustained stable flight. These systems also used electrical stimulation, which is imprecise and indiscriminately activates any neurons or muscles near to the electrodes.

Our approach is different because we are using dragonflies, which are smaller and more agile fliers. The DragonflEye backpack is designed to navigate autonomously without wireless control and to harvest energy from the environment for extended operation, and it weighs a fraction as much as earlier systems so that smaller insects can carry it. Our collaborator Anthony Leonardo, a group leader at Janelia Research Campus, has studied how special “steering” neurons in the dragonfly control flight direction. These special steering neurons are a type of interneuron, which is neither sensory nor motor. These interneurons are believed to provide steering commands to downstream neuromuscular circuits that coordinate muscle control of the wings and maintain stable flight. Through optogenetic stimulation, these steering neurons will be precisely activated without inadvertently activating nearby neurons and muscle. This approach will allow us to activate individual neurons with light, which can’t be done with electricity.

How do optrodes work, and what are the advantages of using them to interface with neurons?

Much like an electrode, which creates an electrical interface with neurons, an optrode creates an optical interface, allowing light to be either delivered to neurons for stimulation or captured from light-emitting neurons for monitoring activity. While neurons in the retina are naturally activated by light, which allows you to see, neurons in the rest of the body are not naturally sensitive to light. By inserting genetic material that encodes special light-sensitive proteins, called opsins, neurons can be modified to be activated, or even inhibited, by different colors of light.

In addition, genetic material can be inserted that causes neurons to emit light when they are active. These new optogenetic tools allow optrodes to both monitor and stimulate neurons with far greater specificity than can be achieved by electrodes. A reason for this improved specificity is that electrical fields interact with all neurons in proximity to the electrode, but light will only interact with neurons that have been genetically modified. Additionally, while electric fields are good at activating neurons, it is more difficult to inhibit them. In contrast, different types of opsins can be used to both activate and inhibit neurons simply by changing the color of light passed through the optrode.

Can you describe the components and capabilities of the backpack guidance system in more detail, and why did you decide to mount it on a dragonfly specifically?

The backpack is designed to navigate autonomously, harvest solar energy for extended operation, deliver light pulses through optrodes to control steering neurons, and wirelessly transmit data to an external base station. This is our first-generation system, which will allow us to develop insect guidance using optogenetic stimulation. Next steps will further reduce the size and weight of the DragonflEye system by developing a custom integrated system-on-chip. Further miniaturization will reduce the payload burden and allow the system to be worn by even smaller insects.

Dragonflies are interesting because they are found worldwide and are very robust and agile fliers for their small size. Future work could extend guidance to other insects, including important pollinators.

Why is a cybernetic insect a good idea as opposed to attempting to develop an insect-size flying robot?

Common dragonflies weigh around 600 milligrams, can reach accelerations up to 9 gs, and are known to migrate over great distances. Mechanical fliers of comparable size are far less efficient at producing lift, stabilizing flight, and storing energy. This inefficiency creates a fundamental challenge: Mechanical fliers can carry only very small power sources, which means that they have enough power to fly for only very brief periods of time. The DragonflEye system doesn’t require a power source for flight, only for navigation. It can operate indefinitely due to the insect’s ability to replenish energy from food and the navigation system’s ability to harvest energy from the environment.

Can you expand a bit on your vision for applications for robots like these?

Tracking insects and small animals will enable researchers to better understand behavior in the wild, monitor the influence of environmental changes, and help to guide policies to protect important ecosystems. Beyond tracking, the DragonflEye system offers new miniaturized technology to equip a wide range of insects with environmental sensors and potentially guide important behaviors, like pollination.

What is the current state of this project?

In order to begin guidance of dragonflies, several key technologies needed to be developed. [HHMI] focused on developing gene delivery methods specific to the dragonfly to make special steering neurons sensitive to light. Draper developed a miniaturized backpack for autonomous navigation and a flexible optrode to control the modified neurons by guiding light around the dragonfly’s tiny nerve cord.

Our first-generation system is based upon early mockup backpacks that were fitted to dragonflies to test ergonomics and weight limits. With these new technologies in hand, we will equip dragonflies with the backpack system and begin investigating position tracking, flight control, and optimized optical stimulation.

What are you working on next?

In the first year of the project, we focused on developing core enabling technologies like the backpack, optrode, and synthetic biology tool kit for the dragonfly. As we begin our second year, we are preparing to equip dragonflies with our first-generation backpacks in a motion-capture room that can monitor their precise flight movements as data is captured from the navigation system. This will allow us to develop precise onboard tracking algorithms for autonomous navigation.

Next, we will apply optical stimulation from the backpack to trigger flight behaviors, which will allow us to develop autonomous flight control. In parallel, we are working on our second-generation backpack, which will increase functionality while significantly reducing weight and size.

[ Draper ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.