Do We Want Robot Warriors to Decide Who Lives or Dies?

As artificial intelligence in military robots advances, the meaning of warfare is being redefined

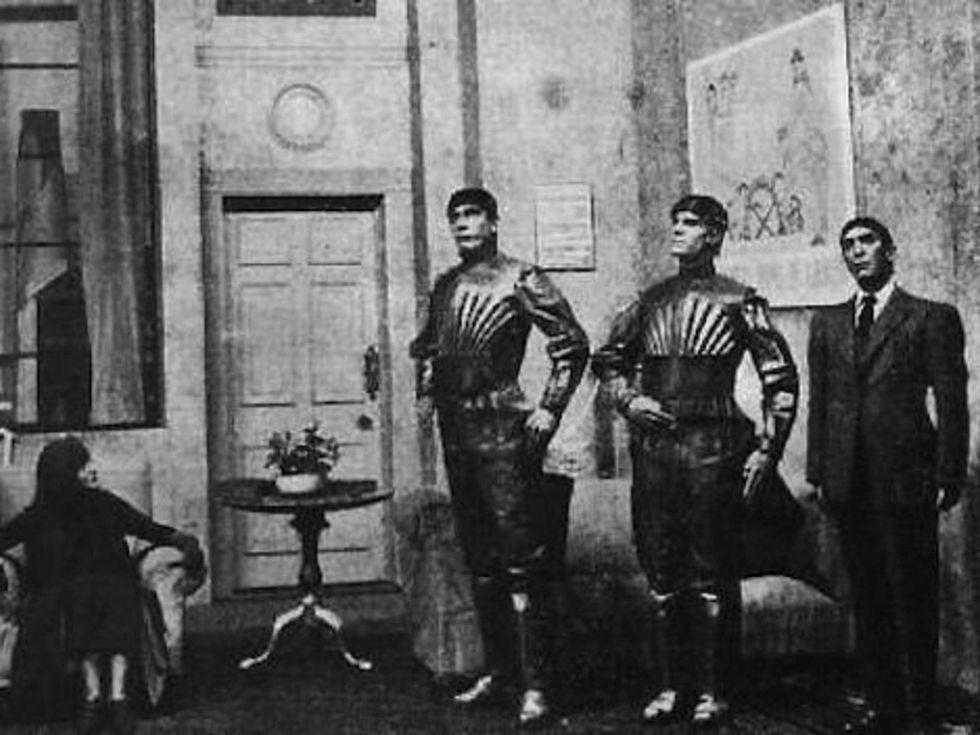

Czech writer Karel Čapek’s 1920 play R.U.R. (Rossum’s Universal Robots), which famously introduced the word robot to the world, begins with synthetic humans—the robots from the title—toiling in factories to produce low-cost goods. It ends with those same robots killing off the human race. Thus was born an enduring plot line in science fiction: robots spiraling out of control and turning into unstoppable killing machines. Twentieth-century literature and film would go on to bring us many more examples of robots wreaking havoc on the world, with Hollywood notably turning the theme into blockbuster franchises like The Matrix, Transformers, and The Terminator.

Lately, fears of fiction turning to fact have been stoked by a confluence of developments, including important advances in artificial intelligence and robotics, along with the widespread use of combat drones and ground robots in Iraq and Afghanistan. The world’s most powerful militaries are now developing ever more intelligent weapons, with varying degrees of autonomy and lethality. The vast majority will, in the near term, be remotely controlled by human operators, who will be “in the loop” to pull the trigger. But it’s likely, and some say inevitable, that future AI-powered weapons will eventually be able to operate with complete autonomy, leading to a watershed moment in the history of warfare: For the first time, a collection of microchips and software will decide whether a human being lives or dies.

Not surprisingly, the threat of “killer robots,” as they’ve been dubbed, has triggered an impassioned debate. The poles of the debate are represented by those who fear that robotic weapons could start a world war and destroy civilization and others who argue that these weapons are essentially a new class of precision-guided munitions that will reduce, not increase, casualties. In December, more than a hundred countries are expected to discuss the issue as part of a United Nations disarmament meeting in Geneva.

Last year, the debate made news after a group of leading researchers in artificial intelligence called for a ban on “offensive autonomous weapons beyond meaningful human control.” In an open letter presented at a major AI conference, the group argued that these weapons would lead to a “global AI arms race” and be used for “assassinations, destabilizing nations, subduing populations and selectively killing a particular ethnic group.”

The letter was signed by more than 20,000 people, including such luminaries as physicist Stephen Hawking and Tesla CEO Elon Musk, who last year donated US $10 million to a Boston-based institute whose mission is “safeguarding life” against the hypothesized emergence of malevolent AIs. The academics who organized the letter—Stuart Russell from the University of California, Berkeley; Max Tegmark from MIT; and Toby Walsh from the University of New South Wales, Australia—expanded on their arguments in an online article for IEEE Spectrum, envisioning, in one scenario, the emergence “on the black market of mass quantities of low-cost, antipersonnel microrobots that can be deployed by one person to anonymously kill thousands or millions of people who meet the user’s targeting criteria.”

The three added that “autonomous weapons are potentially weapons of mass destruction. While some nations might not choose to use them for such purposes, other nations and certainly terrorists might find them irresistible.”

It’s hard to argue that a new arms race culminating in the creation of intelligent, autonomous, and highly mobile killing machines would serve humanity’s best interests. And yet, regardless of the argument, the AI arms race is already under way.

Autonomous weapons have existed for decades, though the relatively few that are out there have been used almost exclusively for defensive purposes. One example is the Phalanx, a computer-controlled, radar-guided gun system installed on many U.S. Navy ships that can automatically detect, track, evaluate, and fire at incoming missiles and aircraft that it judges to be a threat. When it’s in fully autonomous mode, no human intervention is necessary.

A Harop drone destroys a target during a test conducted by Israel Aerospace Industries.

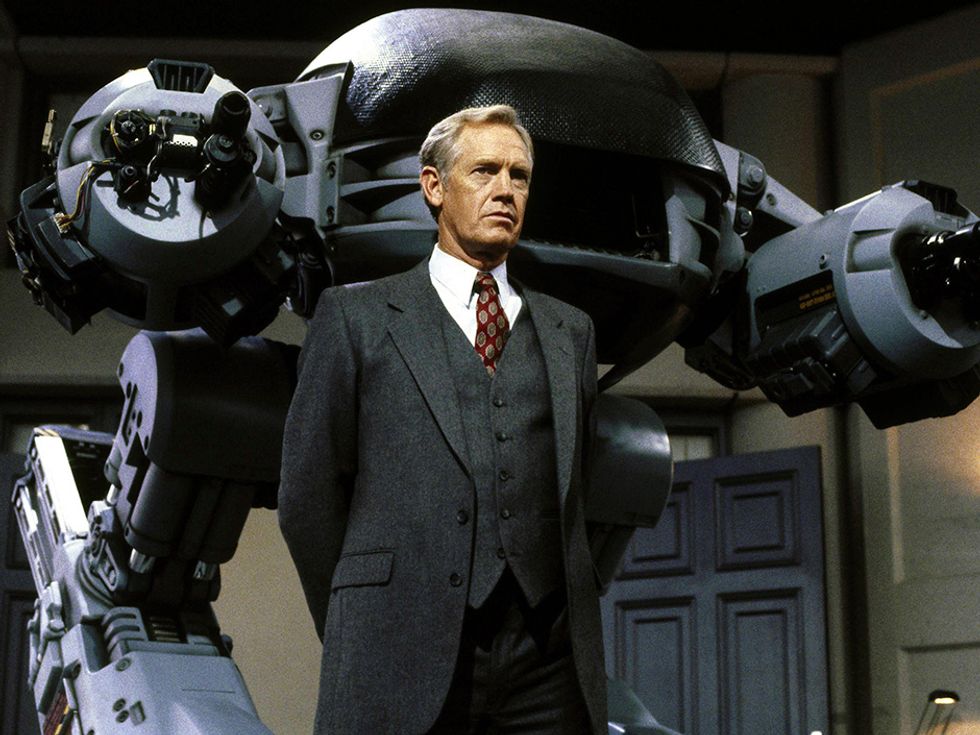

DoDAAM Systems demonstrates the main capabilities of its Super aEgis II sentry robot.

More recently, military suppliers have developed what may be considered the first offensive autonomous weapons. Israel Aerospace Industries’ Harpy and Harop drones are designed to home in on the radio emissions of enemy air-defense systems and destroy them by crashing into them. The company says the drones “have been sold extensively worldwide.”

In South Korea, DoDAAM Systems, a defense contractor, has developed a sentry robot called the Super aEgis II. Equipped with a machine gun, it uses computer vision to autonomously detect and fire at human targets out to a range of 3 kilometers. South Korea’s military has reportedly conducted tests with these armed robots in the demilitarized zone along its border with North Korea. DoDAAM says it has sold more than 30 units to other governments, including several in the Middle East.

Today, such highly autonomous systems are vastly outnumbered by robotic weapons such as drones, which are under the control of human operators almost all of the time, especially when firing at targets. But some analysts believe that as warfare evolves in coming years, weapons will have higher and higher degrees of autonomy.

“War will be very different, and automation will play a role where speed is key,” says Peter W. Singer, a robotic warfare expert at New America, a nonpartisan research group in Washington, D.C. He predicts that in future combat scenarios—like a dogfight between drones or an encounter between a robotic boat and an enemy submarine—weapons that offer a split-second advantage will make all the difference. “It might be a high-intensity straight-on conflict when there’s no time for humans to be in the loop, because it’s going to play out in a matter of seconds.”

The U.S. military has detailed some of its plans for this new kind of war in a road map for unmanned systems, but its intentions on weaponizing such systems are vague. During a Washington Post forum this past March, U.S. deputy secretary of defense Robert Work, whose job is in part making sure that the Pentagon is keeping up with the latest technologies, stressed the need to invest in AI and robotics. The increasing presence of autonomous systems on the battlefield “is inexorable,” he declared.

Asked about autonomous weapons, Work insisted that the U.S. military “will not delegate lethal authority to a machine to make a decision.” But when pressed on the issue, he added that if confronted by a “competitor that is more willing to delegate authority to machines than we are...we’ll have to make decisions on how we can best compete. It’s not something that we’ve fully figured out, but we spend a lot of time thinking about it.”

Vladimir Putin observes a “military cyborg” riding an ATV during a demonstration last year.

Maiden flight of the CH-5 drone developed by China Aerospace Science and Technology Corporation.

Russia and China are following a similar strategy of developing unmanned combat systems for land, sea, and air that are weaponized but, at least for now, rely on human operators. Russia’s Platform-M is a small remote-controlled robot equipped with a Kalashnikov rifle and grenade launchers, a type of system similar to the United States’ Talon SWORDS, a ground robot that can carry an M16 and other weapons (it was tested by the U.S. Army in Iraq). Russia has also built a larger unmanned vehicle, the Uran-9, armed with a 30-millimeter cannon and antitank guided missiles. And last year, the Russians demonstrated a humanoid military robot to a seemingly nonplussed Vladimir Putin. (In video released after the demonstration, the robot is shown riding an ATV at a speed only slightly faster than that of a child on a tricycle.)

China’s growing robotic arsenal includes numerous attack and reconnaissance drones. The CH-4 is a long-endurance unmanned aircraft that resembles the Predator used by the U.S. military. The Divine Eagle is a high-altitude drone designed to hunt stealth bombers. China has also publicly displayed a few machine-gun-equipped robots, similar to Platform-M and Talon SWORDS, at military trade shows.

The three countries’ approaches to robotic weapons, introducing increasing automation while emphasizing a continuing role for humans, suggest a major challenge to the banning of fully autonomous weapons: Such a ban would not necessarily apply to weapons that are nearly autonomous. So militaries could conceivably develop robotic weapons that have a human in the loop, with the option of enabling full autonomy at a moment’s notice in software. “It’s going to be hard to put an arms-control agreement in place for robotics,” concludes Wendell Wallach, an expert on ethics and technology at Yale University. “The difference between an autonomous weapons system and nonautonomous may be just a difference of a line of code,” he said at a recent conference.

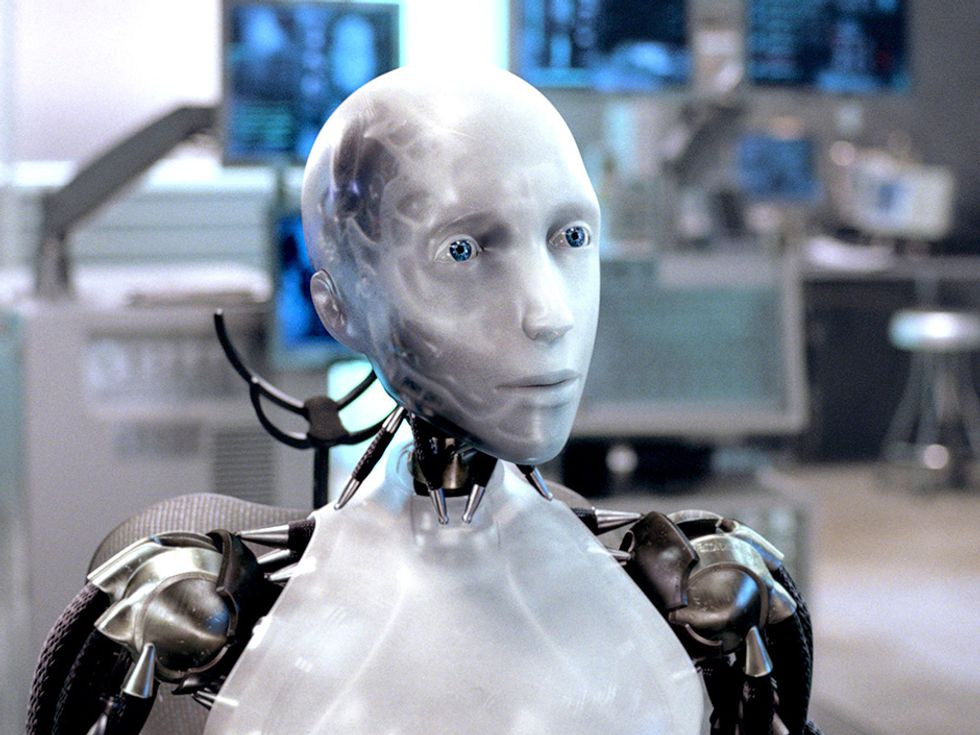

In motion pictures, robots often gain extraordinary levels of autonomy, even sentience, seemingly out of nowhere, and humans are caught by surprise. Here in the real world, though, and despite the recent excitement about advances in machine learning, progress in robot autonomy has been gradual. Autonomous weapons would be expected to evolve in a similar way.

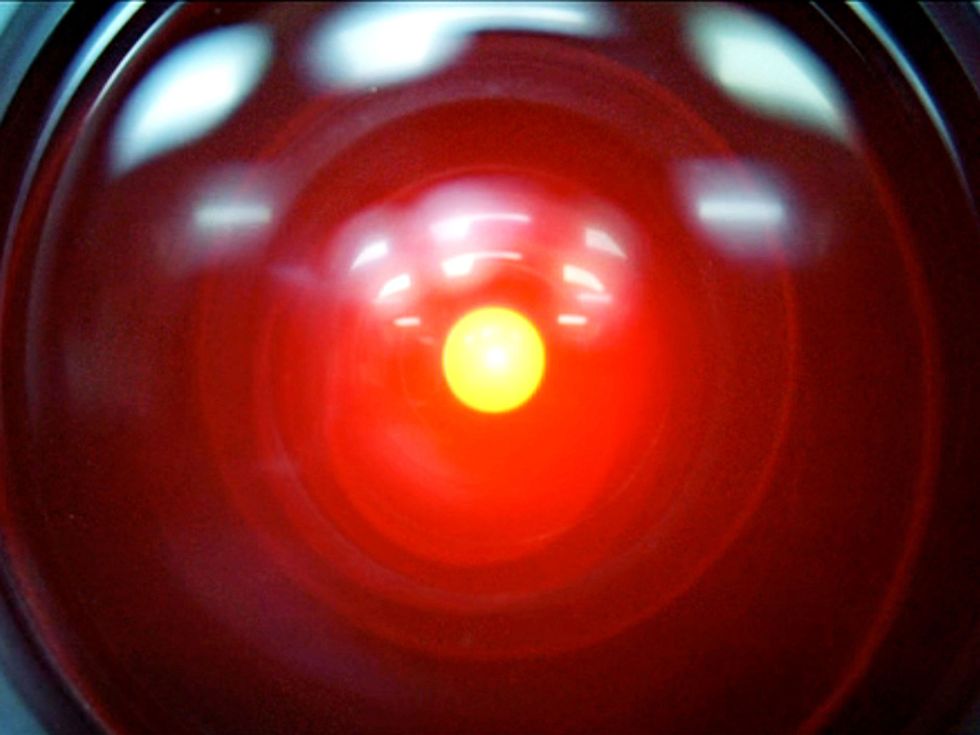

2001: A Space Odyssey (1968): A spaceship computer became one of the most notorious AI villains ever.

“A lot of times when people hear ‘autonomous weapons,’ they envision the Terminator and they are, like, ‘What have we done?,’ ” says Paul Scharre, who directs a future-of-warfare program at the Center for a New American Security, a policy research group in Washington, D.C. “But that seems like probably the last way that militaries want to employ autonomous weapons.” Much more likely, he adds, will be robotic weapons that target not people but military objects like radars, tanks, ships, submarines, or aircraft.

The challenge of target identification—determining whether or not what you’re looking at is a hostile enemy target—is one of the most critical for AI weapons. Moving targets like aircraft and missiles have a trajectory that can be tracked and used to help decide whether to shoot them down. That’s how the Phalanx autonomous gun on board U.S. Navy ships operates, and also how Israel’s “Iron Dome” antirocket interceptor system works. But when you’re targeting people, the indicators are much more subtle. Even under ideal conditions, object- and scene-recognition tasks that are routine for people can be extremely difficult for robots.

A computer can identify a human figure without much trouble, even if that human is moving furtively. But it’s very hard for an algorithm to understand what people are doing, and what their body language and facial expressions suggest about their intent. Is that person lifting a rifle or a rake? Is that person carrying a bomb or an infant?

Scharre argues that robotic weapons attempting to do their own targeting would wither in the face of too many challenges. He says that devising war-fighting tactics and technologies in which humans and robots collaborate will remain the best approach for safety, legal, and ethical reasons. “Militaries could invest in very advanced robotics and automation and still keep a person in the loop for targeting decisions, as a fail-safe,” he says. “Because humans are better at being flexible and adaptable to new situations that maybe we didn’t program for, especially in war when there’s an adversary trying to defeat your systems and trick them and hack them.”

It’s not surprising, then, that DoDAAM, the South Korean maker of sentry robots, imposed restrictions on their lethal autonomy. As currently configured, the robots will not fire until a human confirms the target and commands the turret to shoot. “Our original version had an auto-firing system,” a DoDAAM engineer told the BBC last year. “But all of our customers asked for safeguards to be implemented.... They were concerned the gun might make a mistake.”

For other experts, the only way to ensure that autonomous weapons won’t make deadly mistakes, especially involving civilians, is to deliberately program these weapons accordingly. “If we are foolish enough to continue to kill each other in the battlefield, and if more and more authority is going to be turned over to these machines, can we at least ensure that they are doing it ethically?” says Ronald C. Arkin, a computer scientist at Georgia Tech.

Arkin argues that autonomous weapons, just like human soldiers, should have to follow the rules of engagement as well as the laws of war, including international humanitarian laws that seek to protect civilians and limit the amount of force and types of weapons that are allowed. That means we should program them with some kind of moral reasoning to help them navigate different situations and fundamentally distinguish right from wrong. They will need to have, embodied deep in their software, some sort of ethical compass.

For the past decade, Arkin has been working on such a compass. Using mathematical and logic tools from the field of machine ethics, he began translating the highly conceptual laws of war and rules of engagement into variables and operations that computers can understand. For example, one variable specified how confident the ethical controller was that a target was an enemy. Another was a Boolean variable that was either true or false: lethal force was either permitted or prohibited. Eventually, Arkin arrived at a set of algorithms, and using computer simulations and very simplified combat scenarios—an unmanned aircraft engaging a group of people in an open field, for example—he was able to test his methodology.
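To make the idea of such variables concrete, here is a minimal sketch in Python. It is not Arkin’s software. The class, field names, and the 0.95 threshold are all hypothetical, and the two extra constraints (civilians nearby, not fired upon first) are illustrative assumptions. The only elements taken from the description above are a confidence variable and a Boolean that either permits or prohibits lethal force.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Situation:
    """Hypothetical inputs to a toy ethical controller (all names are illustrative)."""
    enemy_confidence: float  # 0.0 to 1.0: how sure the controller is that the target is an enemy
    civilians_nearby: bool   # assumed to be supplied by some upstream perception step
    fired_upon: bool         # whether the robot was shot at first


# Assumed threshold; the article gives no number.
CONFIDENCE_THRESHOLD = 0.95


def lethal_force_permitted(s: Situation, threshold: float = CONFIDENCE_THRESHOLD) -> bool:
    """Return the Boolean permission: True only if no constraint forbids force."""
    if not 0.0 <= s.enemy_confidence <= 1.0:
        raise ValueError("enemy_confidence must be between 0 and 1")
    if s.enemy_confidence < threshold:
        return False  # not confident enough that the target is an enemy
    if s.civilians_nearby:
        return False  # illustrative constraint: hold fire near civilians
    if not s.fired_upon:
        return False  # illustrative constraint: only return fire
    return True


if __name__ == "__main__":
    print(lethal_force_permitted(Situation(0.99, civilians_nearby=True, fired_upon=True)))
```

The sketch defaults to prohibition: every check can only veto, so force is permitted only when all constraints are satisfied at once. A real controller would face the much harder problem of producing trustworthy values for these inputs in the first place.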

Arkin acknowledges that the project, which was funded by the U.S. military, was a proof of concept, not an actual control-system implementation. Nevertheless, he believes the results showed that combat robots not only could follow the same rules that humans have to follow but also could do better. For example, the robots could use lethal force with more restraint than could human fighters, returning fire only when shot at first. Or, if civilians are nearby, they could completely hold their fire, even if that means being destroyed. Robots also don’t suffer from stress, frustration, anger, or fear, all of which can lead to impaired judgment in humans. So in theory, at least, robot soldiers could outperform human ones, who often and sometimes unavoidably make mistakes in the heat of battle.

“And the net effect of that could be a saving of human lives, especially the innocent that are trapped in the battle space,” Arkin says. “And if these robots can do that, to me there’s a driving moral imperative to use them.”

Needless to say, that’s not at all a consensus view. Critics of autonomous weapons insist that only a preemptive ban makes sense given the insidious way these weapons are coming into existence. “There’s no one single weapon system that we’re going to point to and say, ‘Aha, here’s the killer robot,’ ” says Mary Wareham, an advocacy director at Human Rights Watch and global coordinator of the Campaign to Stop Killer Robots, a coalition of various humanitarian groups. “Because, really, we’re talking about multiple weapons systems, which will function in different ways. But the one thing that concerns us that they all seem to have in common is the lack of human control over their targeting and attack functions.”

The U.N. has been holding discussions on lethal autonomous robots for close to five years, but its member countries have been unable to draw up an agreement. In 2013, Christof Heyns, a U.N. special rapporteur for human rights, wrote an influential report noting that the world’s nations had a rare opportunity to discuss the risks of autonomous weapons before such weapons were fully developed. Today, after participating in several U.N. meetings, Heyns says that “if I look back, to some extent I’m encouraged, but if I look forward, then I think we’re going to have a problem unless we start acting much faster.”

This coming December, the U.N.’s Convention on Certain Conventional Weapons will hold a five-year review conference, and the topic of lethal autonomous robots will be on the agenda. However, it’s unlikely that a ban will be approved at that meeting. Such a decision would require the consensus of all participating countries, and these still have fundamental disagreements on how to deal with the broad spectrum of autonomous weapons expected to emerge in the future.

In the end, the “killer robots” debate seems to be more about us humans than about robots. Autonomous weapons will be like any technology, at least at first: They could be deployed carefully and judiciously, or chaotically and disastrously. Human beings will have to take the credit or the blame. So the question, “Are autonomous combat robots a good idea?” probably isn’t the best one. A better one is, “Do we trust ourselves enough to trust robots with our lives?”

This article appears in the June 2016 print issue as “When Robots Decide to Kill.”