Displays Of A Different Stripe

New image-management techniques provide brightness for half the power, easing the brutal tradeoff in video-rich gadgets

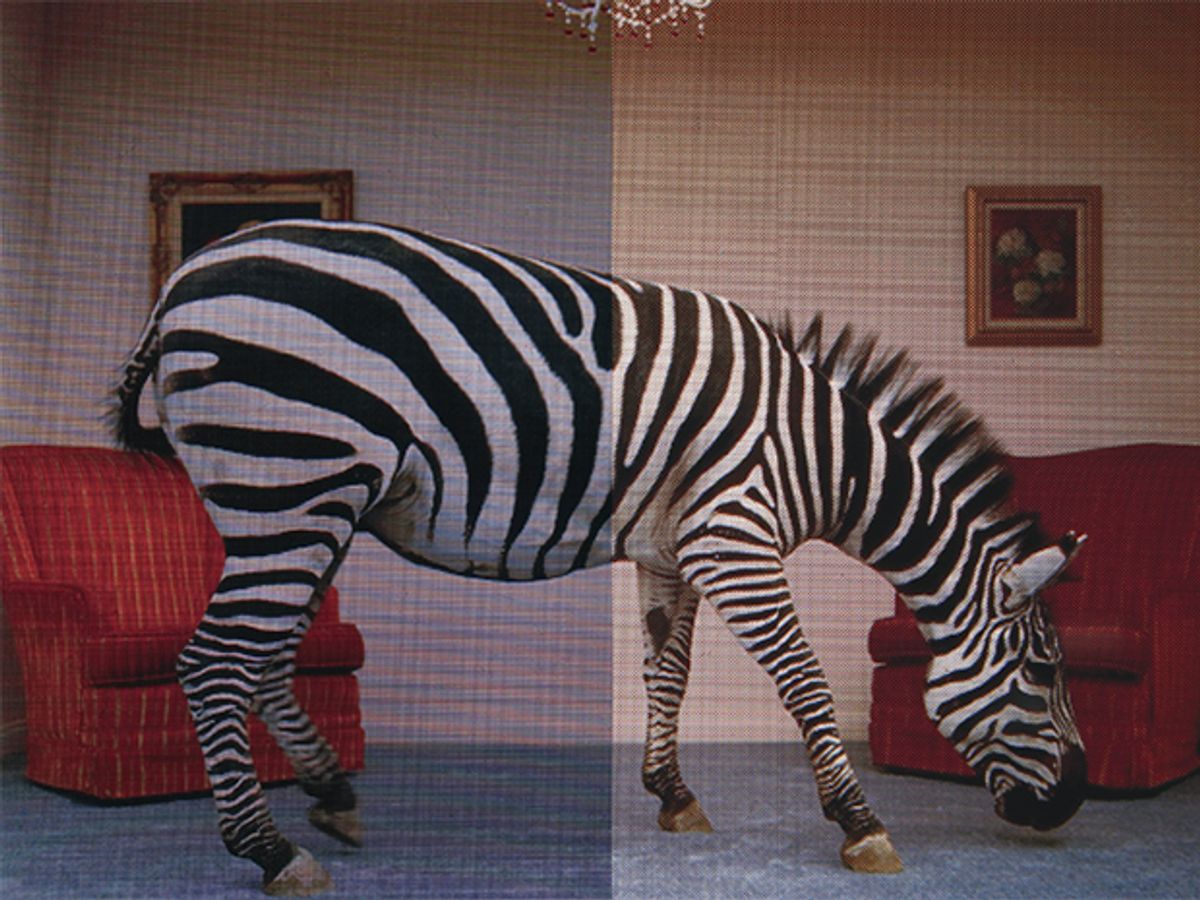

Best By Test: In a comparison of images, at a particular power level, a PenTile red-green-blue-white display (right) will outshine a conventional red-green-blue stripe display (left) hands down, yielding colors that are more vivid and distinct.

Today’s flat-panel displays provide bright, crisp, and vivid images--and they use plenty of power while doing it. It’s a tradeoff that hardly mattered when we rarely watched movies, played games, or surfed the Web on anything other than furniture-size monitors. But that power consumption is a serious engineering constraint today, when more and more of us are getting our visual data on the go, from cellphones, video iPods, and game players like Sony’s PSP. And as serious as the constraint is now, it will soon become downright intolerable as engineers strive to wring far more vivid visual information out of the next generation of portables than can be displayed by anything now on the market.

Fortunately, remarkable power savings--as much as 50 percent--can be achieved by simply redesigning the display to provide no more information than the eye can absorb and the brain can digest. This strategy is called biomimetic, because it deliberately mimics a living system.

Biomimicry has long been used in audio. For many years microphones, amplifiers, and speakers have been designed in sizes and frequency ranges that match the human auditory system. Similarly, the telephone system crams calls through limited carrying capacity by editing the frequencies down to a limited bandwidth. Such compression techniques have also been applied to audio and video software, as seen in the Moving Picture Experts Group (MPEG) and other algorithms. Now it is time to apply biomimicry to displays.

It all begins with the retina, the part of the eye that converts photons into electrochemical signals that are interpreted by the brain as images. The retina’s most discerning photosensitive elements, or photoreceptors, are the cones. Except in a color-blind person, the human eye has three kinds of cones, each having a different type of protein, called a photopigment.

One kind of photopigment is specialized to sense photons in the reddish-yellow band of wavelengths, the second in the greenish-yellow band, and the third in the blue one. Because the typical eye has about 30 red- and green-sensitive cones for every blue one, almost all the work of resolving an image--its luminance, edges, and other structural detail--is done with output from the red and green cones, which also detect color, of course. The blue cones detect only color [see sidebar, " "].

Yet despite the great preponderance of red and green cones in our eyes, most flat-panel displays produced today, like just about every color TV tube produced in the past half century, have a 1:1:1 ratio of red, green, and blue color elements. These elements, called subpixels, are arranged in either a stripe or a delta pattern [see illustration, "Tradeoffs"]. Because the blue subpixels do almost nothing to help the eye resolve images, most of their output goes to waste.

Over the years, researchers have come up with ways to minimize the waste. In the 1970s, Bryce Bayer, of Eastman Kodak Co., devised the Bayer pattern, which has a 1:2:1 ratio of red, green, and blue subpixels, with the green subpixels linked diagonally, as in a checkerboard [see illustration, "Easy on the Eyes"]. That pattern was used by General Electric Co., in Fairfield, Conn., in avionics displays in the late 1980s. It made the displays somewhat more efficient, but it had problems. One was that the ratio of colors in the pixels gave the screens a distinct greenish cast. Another was that the scheme could not, for a given density of pixels, render imagery with the highest possible level of detail [see sidebar, " "].

Basically, what we have done at our company, Clairvoyante Inc., in Cupertino, Calif., is to rectify these shortcomings of the Bayer pattern. First, our pattern of subpixels, called the PenTile Matrix, addresses the Bayer’s color imbalance by going easy on the green. In addition, our pattern is rotated with respect to that of the Bayer by 45 degrees; this rotation has the effect of mapping the conventionally square orientation of the incoming pixel data to the high-luminance green subpixels on a one-to-one basis. We also resize the subpixels with respect to each other, making the green ones a lot smaller, a process that renders information with higher resolution.

Another seemingly slight tweak enhances the display’s performance immensely. By adding a white (or clear) subpixel to form a red-green-blue-white pixel, we dispense with one out of four color filters. We thereby boost efficiency: color filters absorb wavelengths from the backlight, so they sap energy from a display. We call this scheme the PenTile RGBW pattern.

We weren’t the first to come up with an RGBW display; past experimenters did it by simply swapping one of the two green subpixels for a white one in every pixel of a Bayer display. What we did differently was to squeeze the subpixels into long rectangles.

Even so, there is still an inherent inefficiency in using four subpixels instead of three. To get around the problem, we found ways to render a pixel with an average of just two subpixels--two-thirds as many as in the conventional RGB pattern. We do it by using software algorithms to create, in effect, virtual pixels. Basically, the algorithm fools the eye. It defines an edge of an object in an image with the red, green, and white subpixels, and adds the requisite dash of blue off to the side, on the ground that the eye cannot discern the exact location of the blue bits, anyway. Such tricks provide very crisp images with good color.

Another trick enhances the color on a single pixel indirectly. The goal is to minimize the number of color subpixels needed to display an image by getting each one to work as hard as possible in resolving the image. And there is a lot of freedom in doing so. For instance, to enhance a red pixel on a gray background, you can add a dash of white--increasing the luminance of the red--and also turn down the surrounding blue and green. The eye perceives this reduction of the blue and green as an enhancement of the red.

The bottom line is that brightness and color can be conveyed in more than one combination of red, green, blue, and white. Orange, for instance, will look the same to a human eye whether it comes from a single, pure wavelength at 600 nanometers or from the combination of two or more wavelengths from the red and yellow bands of the spectrum.
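To make that point concrete, here is a minimal sketch of how one color can be written as several different red-green-blue-white drive combinations. It assumes an idealized white subpixel whose light is equivalent to equal parts of red, green, and blue, which real panels only approximate. The function names and the 0-255 drive values are hypothetical, and this is a generic white-extraction illustration, not Clairvoyante's rendering algorithm.

```python
def rgb_to_rgbw(r, g, b):
    """Naive white extraction: move the shared gray component onto W."""
    w = min(r, g, b)
    return (r - w, g - w, b - w, w)


def rgbw_to_rgb(r, g, b, w):
    """Idealized inverse: assume W adds equally to red, green, and blue."""
    return (r + w, g + w, b + w)


orange = (200, 120, 40)             # hypothetical 8-bit drive values
full_white = rgb_to_rgbw(*orange)   # all of the shared gray moved to W
half_white = (180, 100, 20, 20)     # only half of the shared gray moved to W

print(full_white)
# Under the idealized assumption, both combinations give the same color.
print(rgbw_to_rgb(*full_white) == rgbw_to_rgb(*half_white) == orange)
```

Because many drive combinations land on the same perceived color in this simplified model, a rendering algorithm has room to pick the one that suits the neighboring subpixels best.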

In tests, our PenTile Matrix pattern did well on many kinds of image files, including video, computer-generated graphics, and still and moving images [see illustration, " "]. The PenTile technology, however, works well only in high-resolution formats. If the resolution is too low--below about 185 dots per inch for near-range devices like cellphones--it can produce artifacts, such as texture in the background. The matrix did especially well displaying the higher-performance versions of the Joint Photographic Experts Group (JPEG) and MPEG-2 compression formats. A version of MPEG-2 is what is used to compress video data so that a whole movie and more can fit on a DVD. Briefly, MPEG-2 has several versions; the kind used on DVDs is known as 4:2:0, which indicates the ratio of the samples used to convey the moving image’s brightness (the 4) and the color (the 2 and the 0). A superior form of MPEG-2 is 4:2:2, because it allows for more detailed color sampling.

Our PenTile Matrix did particularly well with this superior form of MPEG-2. That was encouraging to us, because MPEG-2 4:2:2 is the compression format used for high-definition video, which is growing in popularity with the proliferation of big-screen TVs and the imminent arrival of high-definition video players, such as those offering the competing Blu-ray and HD DVD formats.
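The 4:2:0 and 4:2:2 labels are easier to compare with a little arithmetic. The sketch below uses the conventional J:a:b reading of those labels, which the text above does not spell out and which is therefore an assumption here: in a block J pixels wide and two rows tall, every pixel gets a brightness sample, the first row gets a color samples per color channel, and the second row adds b more. The function name is hypothetical.

```python
def samples_per_block(j, a, b):
    """Count (brightness, color) samples in a J-wide, 2-row block.

    Assumes the conventional J:a:b reading: 'a' color samples in the
    first row and 'b' additional ones in the second, for each of the
    two color-difference channels.
    """
    luma = 2 * j              # one brightness sample per pixel
    chroma = 2 * (a + b)      # two color channels
    return luma, chroma


for label, scheme in [("4:4:4", (4, 4, 4)), ("4:2:2", (4, 2, 2)), ("4:2:0", (4, 2, 0))]:
    luma, chroma = samples_per_block(*scheme)
    print(f"{label}: {luma} brightness samples, {chroma} color samples")
```

On this reading, 4:2:2 carries twice as many color samples as 4:2:0 for the same brightness detail, which is the sense in which it allows more detailed color sampling.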

Vertical black-and-white lines show off the advantages of the PenTile RGBW [see illustration, "Two Do the Job of Three"]. While the conventional pattern must turn three adjacent columns on and three other columns off, our display renders the same detail by turning just two on and two off. Because this scheme requires just two-thirds as many subpixels as the conventional one, fewer transistors and drive lines are needed to control the subpixels, and less of the display’s area needs to be obscured by opaque elements. In other words, the aperture ratio increases, letting more light through. This improvement, together with the white subpixel, provides about twice the brightness for a given draw of power. The savings in manufacturing costs more than balance any increase occasioned by the addition of a fourth, clear, color filter.
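The two-thirds figure follows directly from the subpixel counts. As a rough sketch, assume a hypothetical 320-by-240-pixel panel (a resolution chosen only for illustration, not one given here), with three subpixels per pixel for the conventional stripe and an average of two for the PenTile layout:

```python
from fractions import Fraction


def subpixel_count(width, height, subpixels_per_pixel):
    """Total subpixels needed to render width x height pixels."""
    return width * height * subpixels_per_pixel


WIDTH, HEIGHT = 320, 240  # hypothetical panel resolution

stripe = subpixel_count(WIDTH, HEIGHT, 3)    # conventional RGB stripe
pentile = subpixel_count(WIDTH, HEIGHT, 2)   # average of two per pixel

print(stripe, pentile, Fraction(pentile, stripe))
```

Each subpixel that is eliminated takes its share of transistors and drive lines with it, which is where the gain in aperture ratio comes from.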

Engineers can use the gains to save power or to rev up the brightness. In a cellphone with a 2.8-inch display, you can get a luxurious 350-candela-per-square-meter level of brightness for 475 milliwatts. That’s a power level that would give you a meager 175 cd/m² if you used a conventional pattern. If, however, you are content with the meager brightness, you can make do with 233 mW--and with a backlight having half as many light-emitting diodes.
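The cellphone figures quoted above can be checked with simple arithmetic. The short sketch below uses only those numbers; the variable names are illustrative.

```python
# Figures quoted for a 2.8-inch cellphone display.
PENTILE_CD_M2 = 350        # brightness of the PenTile RGBW panel at 475 mW
CONVENTIONAL_CD_M2 = 175   # brightness of a conventional panel at 475 mW
FULL_POWER_MW = 475
REDUCED_POWER_MW = 233     # PenTile power needed for 175 cd/m2

# Option 1: same power, more light.
brightness_gain = PENTILE_CD_M2 / CONVENTIONAL_CD_M2

# Option 2: same light, less power.
power_saving = 1 - REDUCED_POWER_MW / FULL_POWER_MW

print(f"brightness gain at equal power: {brightness_gain:.1f}x")
print(f"power saving at equal brightness: {power_saving:.0%}")
```

Either way the factor is about two: double the brightness at the same power, or roughly half the power at the same brightness.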

In anticipation of the expected demand for optimized displays, several manufacturers have already begun incorporating these power-efficient subpixel patterns and rendering algorithms. So far, the following LCD companies have publicly demonstrated PenTile display technology: AU Optronics, BOE Hydis, CPT, LG Innotek, Samsung, and Wintek. Silicon Works and Tomato LSI have also developed chips.

Clairvoyante hopes to see a PenTile display in a commercial product by the end of this year.

The art of designing products to conform to the needs of the body has been dignified by a name, ergonomics. The expansion of information technology into new domains means that engineers must now learn to make products that conform to the needs of the mind and the senses, as well.

About the Author

Joel Pollack is president and chief executive officer of Clairvoyante Inc., a display-architecture company in Cupertino, Calif. He has also worked in a variety of research and management jobs in the display businesses of Sharp, Tektronix, and Xerox.

To Probe Further

The advantages of biomimetic displays are discussed in “Reducing Pixel Count Without Reducing Image Quality,” by C.H.B. Elliott, Information Display, December 1999, Vol. 15, pp. 22–25.

Check out the blog by Greg Hitchcock and Bert Keely, inventors of Microsoft’s ClearType, at https://blogs.msdn.com/fontblog/default.aspx?p=2.

For a discussion on the total list of processing operations required to view a pixel, see “What Is a Pixel?” by J.F. Blinn, in IEEE Computer Graphics and Applications, September-October 2005, Vol. 25, no. 5, pp. 82–87.

More information on subpixel patterns is available in “Subpixel Rendering on Nonstriped Colour Matrix Displays,” by D.S. Messing et al. in Proceedings of the 2003 International Conference on Image Processing, Vol. 2, pp. 949–52.