Inside DARPA’s Subterranean Challenge

What SubT means for the future of autonomous robots

An ANYmal robot from Team Cerberus autonomously explores a cave on DARPA’s Subterranean Challenge course.

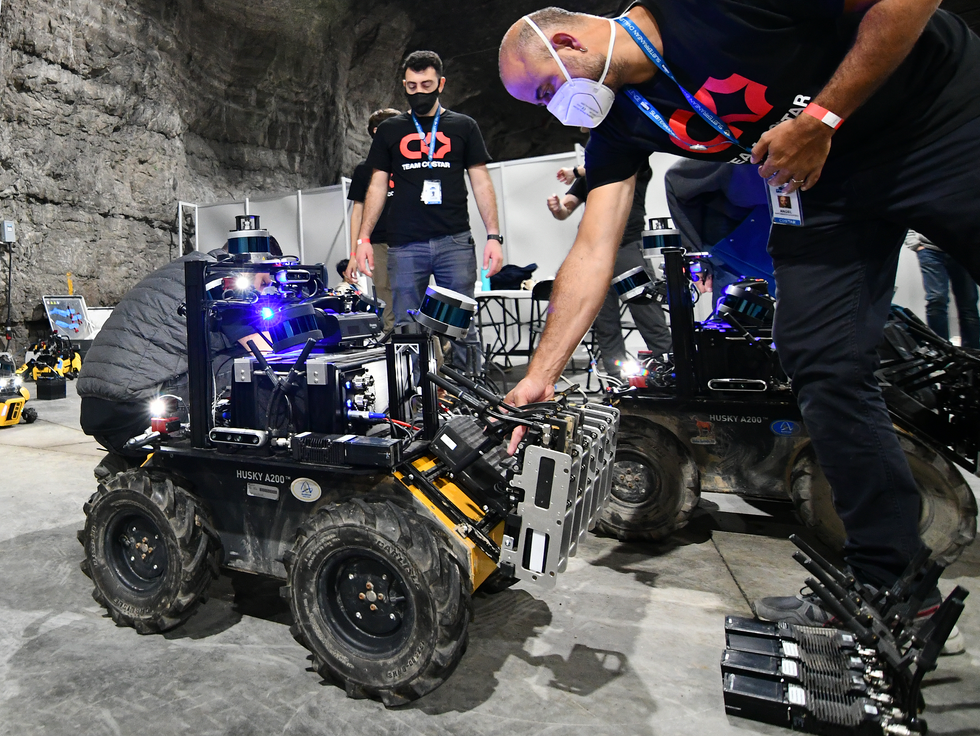

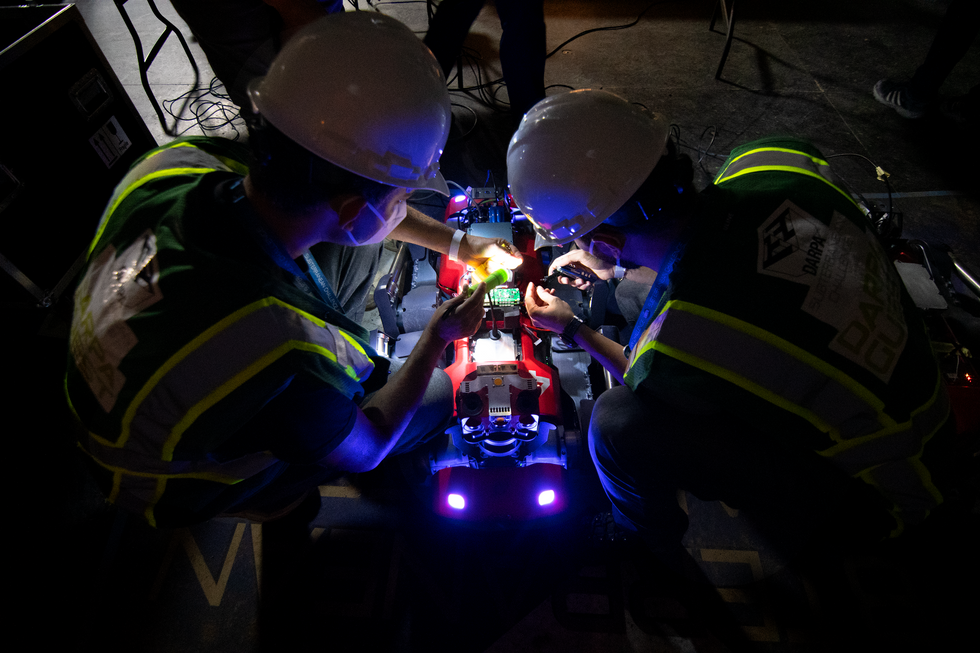

Deep below the Louisville, Ky., zoo lies a network of enormous caverns carved out of limestone. The caverns are dark. They’re dusty. They’re humid. And during one week in September 2021, they were full of the most sophisticated robots in the world. The robots (along with their human teammates) were there to tackle a massive underground course designed by DARPA, the Defense Advanced Research Projects Agency, as the culmination of its three-year Subterranean Challenge.

SubT was first announced in early 2018. DARPA designed the competition to advance practical robotics in extreme conditions, built around three distinct underground environments: human-made tunnels, the urban underground, and natural caves. To do well, the robots would have to work in teams to traverse and map completely unknown areas spanning kilometers, search out a variety of artifacts, and identify their locations with pinpoint accuracy under strict time constraints. To more closely mimic the scenarios in which first responders might use autonomous robots, DARPA subjected the robots to darkness, dust, and smoke, and even to controlled rockfalls that occasionally blocked their progress.

With direct funding plus prize money that reached into the millions, DARPA encouraged international collaborations among top academic institutions as well as industry. A series of three preliminary circuit events would give teams experience with each environment.

During the Tunnel Circuit event, which took place in August 2019 in the National Institute for Occupational Safety and Health’s experimental coal mine, on the outskirts of Pittsburgh, many teams lost communication with their robots after the first bend in the tunnel. Six months later, at the Urban Circuit event, held at an unfinished nuclear power station in Satsop, Wash., teams beefed up their communications with everything from a straightforward tethered Ethernet cable to battery-powered mesh network nodes that robots would drop like breadcrumbs as they went along, ideally just before they passed out of communication range. The Cave Circuit, scheduled for the fall of 2020, was canceled due to COVID-19.
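To make the breadcrumb idea concrete, here is a minimal Python sketch of one way a robot might decide when to drop a relay node. It assumes the robot can measure the received signal strength (RSSI) of its link to the most recently placed node, and that it drops a new node once that signal falls within a safety margin of the level at which the link would fail. The `BreadcrumbPlanner` class, its thresholds, and the linear signal model in `simulate` are hypothetical illustrations, not any team's actual method.

```python
from dataclasses import dataclass, field


@dataclass
class BreadcrumbPlanner:
    """Hypothetical policy for deciding when to drop a mesh-radio relay node."""
    link_loss_dbm: float = -90.0   # assumed signal level at which the link fails
    margin_db: float = 10.0        # assumed safety margin: drop a node before reaching link loss
    nodes_remaining: int = 3       # relay nodes the robot is carrying
    dropped_at: list = field(default_factory=list)  # positions (m) where nodes were dropped

    def update(self, position_m: float, rssi_dbm: float) -> bool:
        """Return True (and record the drop) if a node should be dropped here."""
        if self.nodes_remaining == 0:
            return False
        if rssi_dbm < self.link_loss_dbm + self.margin_db:
            self.nodes_remaining -= 1
            self.dropped_at.append(position_m)
            return True
        return False


def simulate(planner: BreadcrumbPlanner, path_length_m: float, step_m: float = 10.0) -> list:
    """Drive a robot down a straight tunnel using a toy, hypothetical signal model."""
    last_node_m = 0.0  # the base station sits at the tunnel entrance
    pos = 0.0
    while pos <= path_length_m:
        # Toy linear path loss measured from the most recently placed node.
        rssi = -40.0 - 0.5 * (pos - last_node_m)
        if planner.update(pos, rssi):
            last_node_m = pos  # the new node becomes the robot's nearest link
        pos += step_m
    return planner.dropped_at


print(simulate(BreadcrumbPlanner(), 400.0))  # [90.0, 180.0, 270.0]
```

With these assumed numbers, a robot carrying three nodes down a 400-meter straight tunnel drops them at 90, 180, and 270 meters. Real tunnels are not straight, and as the Tunnel Circuit showed, a single bend can cut a link far more abruptly than distance alone.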

By the time teams reached the SubT Final Event in the Louisville Mega Cavern, the focus was on autonomy rather than communications. As in the preliminary events, humans weren’t permitted on the course, and only one person from each team was allowed to interact remotely with the team’s robots, so direct remote control was impractical. It was clear that teams of robots able to make their own decisions about where to go and how to get there would be the only viable way to traverse the course quickly.

DARPA outdid itself for the final event, constructing an enormous kilometer-long course within the existing caverns. Shipping containers connected end-to-end formed complex networks, and many of them were carefully sculpted and decorated to resemble mining tunnels and natural caves. Offices, storage rooms, and even a subway station, all built from scratch, made up the urban segment of the course. Teams had one hour to find as many of the 40 artifacts as possible. To score a point, a robot had to report the artifact’s location back to the base station at the course entrance, which was a challenge in the far reaches of the course, where direct communication was impossible.

Eight teams competed in the SubT Final, and most brought a carefully curated mix of robots designed to work together. Wheeled vehicles offered the most reliable mobility, but quadrupedal robots proved surprisingly capable, especially over tricky terrain. Drones allowed complete exploration of some of the larger caverns.

By the end of the final competition, two teams had each found 23 artifacts: Team Cerberus—a collaboration of the University of Nevada, Reno; ETH Zurich; the Norwegian University of Science and Technology; the University of California, Berkeley; the Oxford Robotics Institute; Flyability; and the Sierra Nevada Corp.—and Team CSIRO Data61—consisting of CSIRO’s Data61; Emesent; and Georgia Tech. The equal scores triggered a tie-breaker rule: Which team had been the quickest to its final artifact? That gave first place to Cerberus, which had been just 46 seconds faster than CSIRO.
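The tie-breaker amounts to a two-key sort: most artifacts first, then the earliest time to the final artifact. The Python sketch below illustrates that ordering; the `Result` type, the team names, and the times are hypothetical and are not the official SubT scores or DARPA's scoring software.

```python
from typing import List, NamedTuple


class Result(NamedTuple):
    team: str
    artifacts: int          # artifacts scored
    last_artifact_s: float  # seconds into the run when the final artifact was scored


def rank(results: List[Result]) -> List[Result]:
    """Order by most artifacts, breaking ties by the earliest final artifact."""
    return sorted(results, key=lambda r: (-r.artifacts, r.last_artifact_s))


# Hypothetical results for illustration only.
results = [
    Result("Team B", 23, 3046.0),
    Result("Team C", 20, 1900.0),
    Result("Team A", 23, 3000.0),
]
print([r.team for r in rank(results)])  # ['Team A', 'Team B', 'Team C']
```

Because Python compares the key tuples element by element, the time only matters when artifact counts are equal, which is the situation the rule resolved in the final.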

Despite coming in second, Team CSIRO’s robots achieved the astonishing feat of creating a map of the course that differed from DARPA’s ground-truth map by less than 1 percent, effectively matching what a team of expert humans spent many days creating. That’s the kind of tangible, fundamental advance SubT was intended to inspire, according to Tim Chung, the DARPA program manager who ran the challenge.

“There’s so much that happens underground that we don’t often give a lot of thought to, but if you look at the amount of infrastructure that we’ve built underground, it’s just massive,” Chung told IEEE Spectrum. “There’s a lot of opportunity in being able to perceive, understand, and navigate in subterranean environments—there are engineering integration challenges, as well as foundational design challenges and theoretical questions that we have not yet answered. And those are the questions DARPA is most interested in, because that’s what’s going to change the face of robotics in 5 or 10 or 15 years, if not sooner.”

This point cloud assembled by Team CSIRO Data61 shows a robotic view of nearly the entire SubT course, with each dot in the cloud representing a point in 3D space measured by a sensor on a robot. Team CSIRO’s point cloud differed from DARPA’s official map by less than 1 percent.

CSIRO DATA61

IEEE Spectrum was in Louisville to cover the Subterranean Final, and we spoke recently with Chung, as well as with CSIRO Data61 team lead Navinda Kottege and Cerberus team lead Kostas Alexis, about their SubT experience and the influence the event is having on the future of robotics.

DARPA has hundreds of programs, but most of them don’t involve multiyear international competitions with million-dollar prizes. What was special about the Subterranean Challenge?

Tim Chung: Every now and then, one of DARPA’s concepts warrants a different model for seeking out innovation. It’s when you know you have an impending breakthrough in a field, but you don’t know exactly how that breakthrough is going to happen, and where the traditional DARPA program model, with a broad announcement followed by proposal selection, might restrict innovation. DARPA saw the SubT Challenge as a way of attracting the robotics community to solving problems that we anticipate being impactful, like resiliency, autonomy, and sensing in austere environments. And one place where you can find those technical challenges coming together is underground.

The skill that these teams had at autonomously mapping their environments was impressive. Can you talk about that?

T.C.: We brought in a team of experts with professional survey equipment who spent many days making a precisely calibrated ground-truth map of the SubT course. And then during the competition, we saw these robots delivering nearly complete coverage of the course in under an hour—I couldn’t believe how beautiful those point clouds were! I think that’s really an accelerant. When you can trust your map, you have so much more actionable situational awareness. It’s not a solved problem, but when you can attain the level of fidelity that we’ve seen in SubT, that’s a gateway technology with the potential to unlock all sorts of future innovation.

Autonomy was a necessary part of SubT, but having a human in the loop was critical as well. Do you think that humans will continue to be a necessary part of effective robotic teams, or is full autonomy the future?

T.C.: Early in the competition, we saw a lot of hand-holding, with humans giving robots low-level commands. But teams quickly realized that they needed a more autonomous approach. Full autonomy is hard, though, and I think humans will continue to play a pretty big role, just a role that needs to evolve and change into something that focuses on what humans do best.

I think that progressing from human operators to human supervisors will enhance the types of missions that human-robot teams will be able to conduct. In the final event, we saw robots on the course exploring and finding artifacts, while the human supervisor was focused on other stuff and not even paying attention to the robots. That was so cool. The robots were doing what they needed to do, leaving the human free to make high-level decisions. That’s a big change: from what was basically remote teleoperation to “you robots go off and do your thing and I’ll do mine.” And it’s incumbent on the robots to become even more capable so that the transition [of the human] from operator to supervisor can occur.

What are some remaining challenges for robots in underground environments?

T.C.: Traversability analysis and reasoning about the environment are still a problem. Robots will be able to move through these environments at a faster clip if they can understand a little bit more about where they’re stepping or what they’re flying around. So, despite the fact that they were one to two orders of magnitude faster than humans for mapping purposes, the robots are still relatively slow. Shaving off another order of magnitude would really help change the game. Speed would be the ultimate enabler and have a dramatic impact on first-response scenarios, where every minute counts.

What difference do you think SubT has made, or will make, to robotics?

T.C.: The fact that many of the technologies used in the SubT Challenge are now being productized and commercialized means that the time horizon for robots to make it into the hands of first responders has been shortened considerably, in my opinion. That has already happened, and was happening even during the competition itself, and that’s a really great impact.

What’s difficult and important about operating robots underground?

Navinda Kottege: The fact that we were in a subterranean environment was one aspect of the challenge, and a very important aspect, but if you break it down, what the SubT Challenge meant was that we were in a GPS-denied environment, where you can’t rely on communications, with very difficult mobility challenges. There are many other scenarios where you might encounter these things. The Fukushima nuclear disaster, for example, wasn’t underground, but communication was a massive issue for the robots that were sent in. The Amazon rainforest is another example where you’d encounter similar difficulties in communication and mobility. So we saw how each of the component technologies that we would have to develop and mature would have applications in many other domains beyond the subterranean.

Where is the right place for a human in a human-robot team?

N.K.: There are two extremes. One is that you push a button and the robots go and do their thing. The other is what we call “human in the loop,” where it’s essentially remote control through high-level commands. But if the human is taken out of the loop, the loop breaks and the system stops, and we were experiencing that with brittle communications. The middle ground is a “human on the loop” concept, where you have a human supervisor who sets mission-level goals, but if the human is taken off of the loop, the loop can still run. The human added value because they had a better overview of what was happening across the whole scenario, and that’s the sort of thing that humans are super, super good at.
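Kottege's distinction can be illustrated with a toy Python comparison of the two control styles. In this sketch, a `None` in the command stream stands for a moment when communication with the human is lost; the function names and the fallback behavior `FALLBACK_GOAL` are hypothetical, chosen only to show what happens to each loop when the human drops out.

```python
from typing import List, Optional

FALLBACK_GOAL = "explore nearest frontier"  # hypothetical autonomous behavior


def human_in_the_loop(commands: List[Optional[str]]) -> List[str]:
    """Robot executes only operator commands; a lost link (None) halts the mission."""
    actions = []
    for cmd in commands:
        if cmd is None:
            actions.append("halt")  # the loop breaks and the system stops
            break
        actions.append(cmd)
    return actions


def human_on_the_loop(commands: List[Optional[str]]) -> List[str]:
    """Supervisor goals take priority, but the robot keeps working when the link drops."""
    return [cmd if cmd is not None else FALLBACK_GOAL for cmd in commands]


commands = ["search tunnel A", None, None, "return to base"]
print(human_in_the_loop(commands))  # ['search tunnel A', 'halt']
print(human_on_the_loop(commands))
# ['search tunnel A', 'explore nearest frontier', 'explore nearest frontier', 'return to base']
```

In the first style, the mission ends as soon as the link does; in the second, the supervisor's goals still win whenever they arrive, but the robot keeps working through the gaps.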

How did SubT advance the field of robotics?

N.K.: For field robots to succeed, you need multiple things to work together. And I think that’s what was forced upon us by the level of complexity of the SubT Challenge. This whole notion of being able to reliably deploy robots in real-world scenarios was, to me, the key thing. Looking back at our team, three years ago we had some cool bits and pieces of technology, but we didn’t have robot systems that could reliably work for an hour or more without a human having to go and fix something. That was one of the biggest advances we had, because now, as we continue this work, we don’t even have to think twice about deploying our robots and whether they’ll destroy themselves if we leave them alone for 10 minutes. It’s that level of maturity that we’ve achieved, thanks to the robustness and reliability that we had to engineer into our systems to be successful at SubT, and now we can start focusing on the next step: What can you do when you have a fleet of autonomous robots that you can rely on?

Your team of robots created a map of the course that matched DARPA’s official map to within 1 percent. That’s amazing.

N.K.: I got contacted immediately after the final event by the company that DARPA brought in to do the ground-truth mapping of the SubT course. They’d spent 100 person-hours using very expensive equipment to make their map, and they wanted to know how in the world we got our map in under an hour with a bunch of robots. It’s a good question! But the context is that our one hour of mapping took us 15 years of development to get to that stage.

There’s a difference between what’s theoretically possible and what actually works in the real world. In its early stages, our software worked, in that it hit all of the theoretical milestones it was supposed to. But then we started taking it out to the real world and testing it in very difficult environments, and that’s where we started finding all the edge cases of where it breaks. Essentially, for the last 10-plus years, we were trying to break our mapping system as much as possible, and that turned it into a really well-engineered solution. Honestly, whenever we see the results of our mapping system, it still surprises us!

What made you decide to participate in the SubT Challenge?

Kostas Alexis: What motivated everyone was the understanding that for autonomous robots, this challenge was extremely difficult and relevant. We knew that robotic systems could operate in these environments if humans accompanied them or teleoperated them, but we also knew that we were very far away from enabling autonomy. And we understood the value of being able to send robots instead of humans into danger. It was this combination of societal impact and technical challenge that was appealing to us, especially in the context of a competition where you can’t just do work in the lab, write a paper, and call it a day. You have to develop something that will work all the way through the finals.

What was the most challenging part of SubT for your team?

K.A.: We are at the stage where we can navigate robots in normal officelike environments, but SubT had many challenges. First, relying on communications with our robots was not possible. Second, the terrain was not easy. Typically, even terrain that is hard for robots is easy for humans, but the natural cave section is the only time I’ve felt that the terrain was a challenge for humans too. And third, there’s the scale of kilometer-size environments. The robots had to demonstrate a level of robustness and resourcefulness in their autonomy and functionality that the current state of the art in robotics could not demonstrate. The great thing about the SubT Challenge was that DARPA started it knowing that robotics did not have that capacity, but asked us to deliver a competitive team of robots three years down the road. And I think that approach went well for all the teams. It was a great push that accelerated research.

As robots get more autonomous, where will humans fit in?

K.A.: It is a fact now that we can have very good maps from robots, and it is a fact that we have object detection, and so on. However, we do not have a way of correlating all the objects in the environment and their possible interactions. So, although we can create awesome, beautiful, accurate maps, we are not equally good at reasoning.

This is really about time. If we were performing a mission where we wanted to guarantee full exploration and coverage of a place with no time limit, we likely wouldn’t need a human in the loop—we can automate this fully. But when time is a factor and you want to explore as much as you can, then the human ability to reason through data is very valuable. And even if we can make robots that sometimes perform as well as humans, that doesn’t necessarily translate to novel environments.

The other aspect is societal. We make robots to serve us, and in all of these critical operations, as a roboticist myself, I would like to know that there is a human making the final calls.

Do you think SubT was able to solve any significant challenges in robotics?

K.A.: One thing, of which I’m very proud for my team, is that SubT established that legged robotic systems can be deployed under the most arbitrary of conditions. [Team Cerberus deployed four ANYmal C quadrupedal robots from Swiss robotics company ANYbotics in the final competition.] We knew before SubT that legged robots were magnificent in the research domain, but now we also know that if you have to deal with complex environments on the ground or underground, you can take legged robots combined with drones and you should be good to go.

When will we see practical applications of some of the developments made through SubT?

K.A.: I think commercialization will happen much faster through SubT than what we would normally expect from a research activity. My opinion is that the time scale is counted in terms of months—it might be a year or so, but it’s not a matter of multiple years, and typically I’m conservative on that front.

In terms of disaster response, now we’re talking about responsibility. We’re talking about systems with virtually 100 percent reliability. This is much more involved, because you need to be able to demonstrate, certify, and guarantee that your system works across so many diverse use cases. And the key question: Can you trust it? This will take a lot of time. With SubT, DARPA created a broad vision. I believe we will find our way toward that vision, but before disaster response, we will first see these robots in industry.

This article appears in the May 2022 print issue as “Robots Conquer the Underground.”
