Could AI inspire your next ugly holiday sweater?

As odd as it may sound, recent advancements in machine learning have made it possible. CALA, an “operating system for fashion” that helps designers sketch, prototype, and produce new products, is the first service to implement OpenAI’s DALL-E API. Its new generative AI tool is live and free to try.

“The use case is enabling anyone to get their idea across without a full sketch or 3D renders, by having DALL-E generate ideas via text inputs,” says Andrew Wyatt, cofounder and CEO at CALA. “It’s a continuance of us democratizing access in an industry that’s historically been very insular.”

DALL-E for e-fashion?

Founded in 2016, CALA is a fashion platform built for designers looking for an accessible way to turn ideas into tangible products. The service is available through both its website and a mobile app. Anyone can sign up and try the platform for free—so I did.

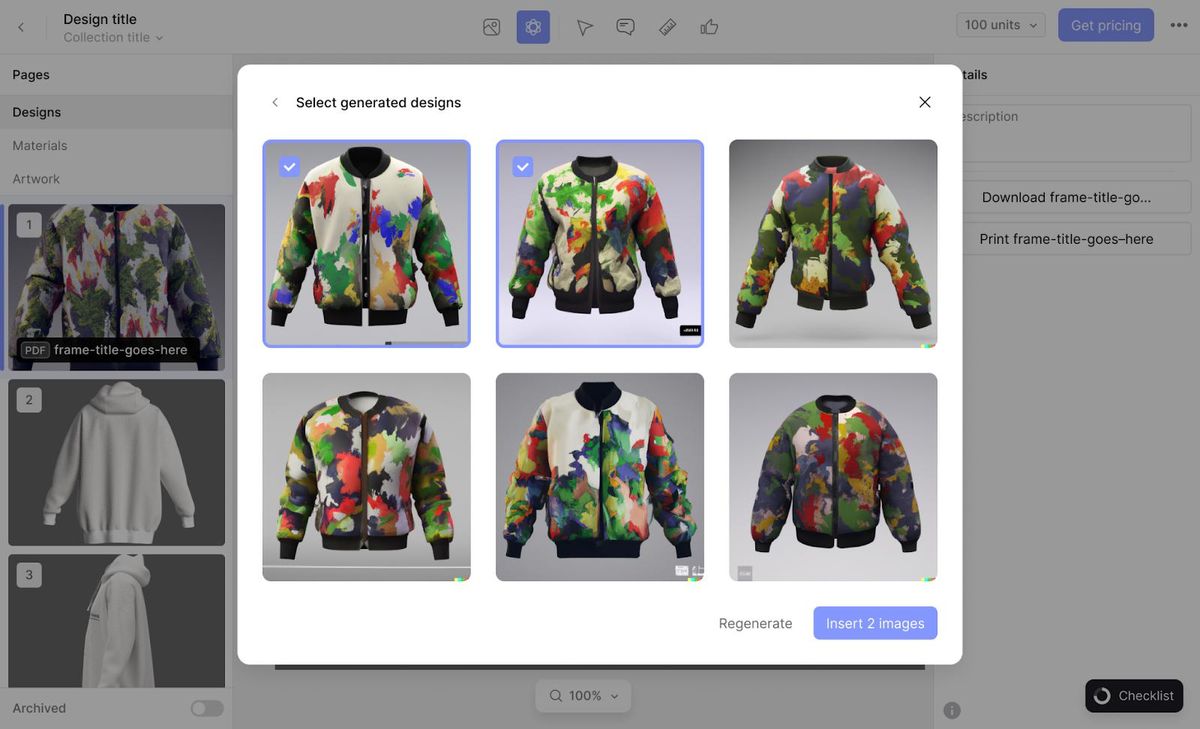

CALA’s tool is broadly similar to AI art generators such as DALL-E 2 and Stable Diffusion, but it is customized to fit CALA’s platform. Instead of entering a single, long text prompt, designers are guided to first select a base style, such as a sweater, blouse, or tote, from a list of 25 options. They then use generative AI to modify that style through two text prompts. The first describes the design with adjectives and materials, while the second describes desired trims and features such as cuffs or zippers.

Wyatt believes this alternative user interface will help designers zero in on important features and avoid duds. “What we’re kind of doing here is we built a UI on top of the prompt engineering. Our goal here is to get people to a meaningful result as quickly as possible.” This, Wyatt hopes, will nudge designers away from dead-end or unappealing results. “We want to prevent the situation where someone comes in, they type in ‘brown shirt,’ and they’re, like, this sucks.”
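CALA hasn’t said how its interface turns these fields into a prompt. As a rough illustration of a “UI on top of the prompt engineering,” the Python sketch below assembles one text prompt from a base style picked off a fixed menu plus the two free-text fields. The style list, the template, and the names `DesignRequest` and `build_prompt` are all hypothetical, and the sketch deliberately stops before any call to an image model.

```python
from dataclasses import dataclass

# Hypothetical subset of base styles: the article names sweater, blouse,
# and tote among CALA's 25 options; the full list isn't published.
BASE_STYLES = {"sweater", "blouse", "tote"}


@dataclass
class DesignRequest:
    base_style: str   # picked from a fixed menu rather than typed freely
    description: str  # first prompt: adjectives and materials
    trims: str        # second prompt: trims and features, e.g. cuffs or zippers


def build_prompt(req: DesignRequest) -> str:
    """Combine the guided fields into one text prompt (hypothetical template)."""
    style = req.base_style.strip().lower()
    if style not in BASE_STYLES:
        # Restricting the base style is what keeps users off vague prompts.
        raise ValueError(f"unknown base style: {req.base_style!r}")
    parts = [req.description.strip(), style]
    prompt = " ".join(p for p in parts if p)
    trims = req.trims.strip()
    if trims:
        prompt += f" with {trims}"
    return prompt


if __name__ == "__main__":
    req = DesignRequest("Sweater", "spooky orange wool", "ribbed cuffs and a zipper")
    print(build_prompt(req))  # spooky orange wool sweater with ribbed cuffs and a zipper
```

The point of the structure is that the user never writes the whole prompt: the menu guarantees a concrete garment, and the two fields nudge them toward the kind of detail that a bare “brown shirt” lacks.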

I saw the results of this tactic in my own messy effort to make a Halloween sweater. Fashion design is, admittedly, well out of my comfort zone, but I found the tool approachable. The entire process, including the time spent waiting for results to appear, took less than a minute. CALA presents six results at a time, any of which can then be inserted into the design platform for further iteration.

CALA’s implementation shouldn’t be misunderstood as a one-click design tool. Designers still need to bring their own skills and learn how to use CALA’s platform. However, Wyatt hopes AI will significantly reduce the barrier to entry for new designers and give veteran designers a way to overcome creative roadblocks.

“We want to let people take an idea and just follow the rabbit trail, through variation after variation after variation,” says Wyatt. “We think it’s going to help people come up with way crazier and different concepts.”

Ease of use could drive DALL-E’s surge

CALA’s tool is the first live, public implementation of OpenAI’s DALL-E API by a third party. The API is not currently available to the public and doesn’t have a release date.

This isn’t OpenAI’s first rodeo. GPT-3, the company’s deep learning language model, was released as an API in 2020 and was quickly adopted by third parties. GPT-3 is now used by dozens of companies and organizations including Copysmith and MessageBird. Microsoft acquired a license to use the GPT-3 model for Microsoft Power Apps and the Azure OpenAI Service.

Luke Miller, product manager at OpenAI, says the company learned valuable lessons from GPT-3’s rollout. “Each deployment teaches us more about safety, engineering, and, ultimately, how our technology can create value in the world,” says Miller. “Since releasing the GPT-3 API, we’ve made a number of improvements to our safeguards. For example, we announced an updated moderation endpoint in August and we’re continuing to find ways we can improve.”

CALA’s experience with the DALL-E API hints that ease of use will prove the key driver of the API’s adoption once it’s made available to the public. Wyatt says his company’s engineers put the API to use in just a few weeks.

“We sort of did some high-res concepting that we passed off to [OpenAI] for feedback about eight weeks ago. Then the total build and polish was less than a month,” says Wyatt. “I could see this being a meaningful integration in a multitude of different products.”

In fact, the flood of tools based on DALL-E has already begun. Shutterstock, which offers stock photos, images, and videos, plans to implement the DALL-E API “in the coming months.” Shutterstock paired this announcement with a framework to compensate artists on the platform when their work is used to train AI models. Microsoft is bringing DALL-E to its Azure OpenAI Service, too, though access is currently invite-only.

“We’ve always felt the future, especially within fashion, is kind of moving towards AI-powered design and automated production,” says Wyatt. “We just thought it was going to be, you know, five years from now. Over the last six months, just seeing the amount of progress...[we] think there’s just going to be tremendous innovation over the next couple of years.”

Matthew S. Smith is a freelance consumer-tech journalist. An avid gamer, he is a former staff editor at Digital Trends and is particularly fond of wearables, e-bikes, all things smartphone, and CES, which he has attended every year since 2009.