Inside the Development of Light, the Tiny Digital Camera That Outperforms DSLRs

The creator of the new Light digital camera explains how he made it work

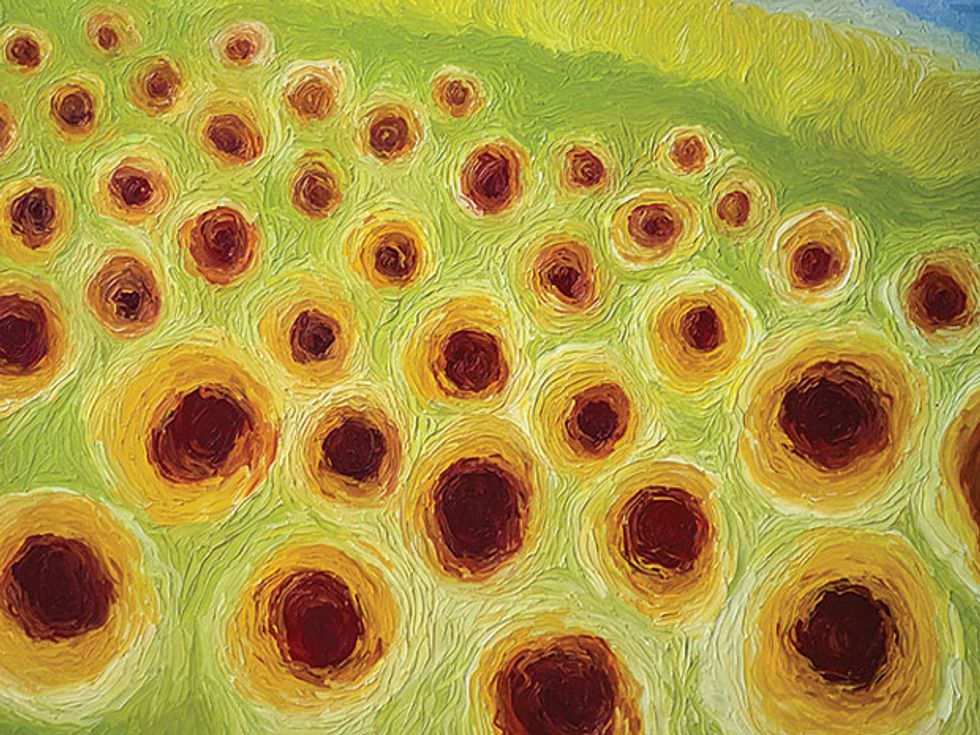

The best digital cameras today are SLRs (single-lens reflex cameras), which use a movable mirror to guide the same light rays that fall on the sensor into the viewfinder. These cameras normally have precisely ground glass lenses and large, high-quality image sensors. In the right hands, they can shoot amazing pictures, with brilliant colors and pleasing lighting effects, often showing a crisply focused subject and an aesthetically blurred background.

But these cameras are big, heavy, and expensive: A good digital SLR (DSLR) with a decent set of lenses—including a standard 50 mm, a wide angle, and a telephoto, for example—can easily set you back thousands of dollars.

So most photos today aren’t being shot with DSLRs but with the tiny camera modules built into mobile phones. Nobody pretends these pictures match the quality of a photograph taken by a good DSLR; they tend to be grainy, and the camera allows very little artistic control. But smartphone cameras certainly are easy to carry around.

Can’t we have it both ways? Couldn’t a high-quality yet still-tiny camera somehow be fit into a mobile device?

That’s the question I asked myself five years ago. And the very positive answer, announced last October and shipping early in 2017, is coming from a company I started: Light.

The Light camera starts with a collection of inexpensive plastic-lens camera modules and mechanically driven mirrors. We put them in a device that runs the standard Android operating system along with some smart algorithms. The result is a camera that can do just about everything a DSLR can—and one thing it can’t: fit in your pocket. More on how it works later. First, let me tell you a little bit about my background, because that helps explain how—and why—I came up with this approach.

In 2011, I was looking for my next challenge. I had just left Flarion Technologies, a company I had founded and later sold. My engineering career—as a researcher at Lucent Technologies’ Bell Labs, before I started Flarion—had been in communications and information theory, so I’d expected to stay involved in those technologies. But I found myself instead thinking more and more about cameras.

I had taken photographs with film cameras as a child in India, but I never had any particularly good equipment. I was intrigued when digital photography started taking off, but I didn’t buy my first digital camera until 1999, when my daughter was born. I took a lot of pictures with that Kodak camera, then moved on to various Sony digital cameras. Eventually, when DSLRs came out, I went all out, purchasing a couple of DSLR cameras, a bunch of Canon lenses, and all sorts of other equipment—three camera bags’ worth. And I took lots of pictures with this gear.

Ten-plus years later, I got an iPhone and also started taking pictures with it, not because the quality was really there but because of the convenience: I had to plan ahead if I wanted to take pictures with one of my good cameras, which I did less and less. As I talked with other avid photographers, I discovered I wasn’t the only one who had expensive camera gear gathering dust. It’s not that any of us were happy with the quality of the pictures we were taking with our phones—indeed, we were all frustrated by it. But at the end of the day, convenience always won out.

So when I was looking around for a new professional challenge, I realized I could attack a problem I was dealing with myself. But I didn’t start immediately; I didn’t know optics well, and I assumed that if there was to be a technical solution it would come from somebody who did.

But I kept investigating, and I soon discovered that most experts in optics don’t really understand digital-image processing, and electrical engineers and computer scientists who understand digital images don’t generally know much about optics. The solution, I later realized, straddled both the digital and the optical worlds. And I had the luxury of being able to sit at home and teach myself optics for a year.

I had only a rough idea of how the problem might be solved at that point. But as I dug in, I realized not only that there might be a technical solution but also that this was the perfect time to try to build a different kind of commercially viable camera.

I knew that cellphone camera lenses were molded out of plastic—that’s why they were so inexpensive. And thanks to cellphones, molded plastic lens technology had been nearly perfected over the previous five years to the point where these lenses were “diffraction limited”—that is, for their size, they were as good as the fundamental physics would ever allow them to be. Meanwhile, the cost had dropped dramatically: A five-element smartphone camera lens today costs only about US $1 when purchased in volume. (Elements are the thin layers that make up a plastic lens.) And sensor prices had plummeted as well: A high-resolution (13-megapixel) camera sensor now costs just about $3 in volume.

While smartphone lenses have become extremely good, the quality of the smartphone camera today is nowhere near comparable to that of a high-end DSLR. There are four main reasons: First, the lenses of smartphone cameras are small and collect very little light. And you can’t produce good pictures without capturing enough light energy. So smartphone photos will often be “noisy” or grainy, particularly in low light. Second, small sensors and a high pixel count mean that the individual pixels are tiny (approximately 1 micrometer across), and therefore hit a saturation point after receiving just a little bit of light. This results in pictures with very limited dynamic range (limited differences between the darkest darks and the lightest lights). Third, smartphone-camera lenses have a fixed focal length and so can’t zoom. Finally, because of the small lens aperture, smartphone pictures have a very large depth of field; that is, they are sharp over a very large range of subject distances from the camera. That might sound like a good thing, but it’s not, because controlling the depth of field is essential for artistic photography.

I thought that all these shortcomings of smartphone cameras might be overcome by using multiple smartphone camera modules to take multiple pictures simultaneously, which could then be combined digitally. By using many modules, the camera could capture more light energy. The effective size of each pixel would also increase because each object in the scene would be captured in multiple pictures, increasing the dynamic range and reducing graininess. By using camera modules with different focal lengths, the camera would also gain the ability to zoom in and out. And if we arranged the multiple camera modules to create what was effectively a larger aperture, the photographer could control the depth of field of the final image.
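The noise benefit of combining several captures can be illustrated with a small simulation. This is only a sketch under stated assumptions, not Light's fusion algorithm: it assumes every module sees the same true brightness with independent Gaussian noise, and all function names and numbers are made up for illustration. Under those assumptions, averaging N readings should cut the noise by roughly the square root of N.

```python
import random
import statistics


def noisy_reading(true_value, noise_sigma, rng):
    """One simulated pixel reading: the true brightness plus Gaussian noise."""
    return rng.gauss(true_value, noise_sigma)


def fused_reading(true_value, noise_sigma, n_modules, rng):
    """Average the readings of n_modules independent (hypothetical) modules."""
    readings = [noisy_reading(true_value, noise_sigma, rng) for _ in range(n_modules)]
    return sum(readings) / n_modules


def measured_noise(n_modules, trials=20000, true_value=100.0, noise_sigma=10.0, seed=0):
    """Estimate the standard deviation of the fused pixel over many trials."""
    rng = random.Random(seed)
    samples = [fused_reading(true_value, noise_sigma, n_modules, rng) for _ in range(trials)]
    return statistics.pstdev(samples)


if __name__ == "__main__":
    for n in (1, 5, 10):
        print(f"{n:2d} module(s): noise ~ {measured_noise(n):.2f}")
```

Real sensor noise is not purely Gaussian, and real modules see the scene from slightly different positions, so the images must be aligned before they can be averaged. The sketch ignores both complications.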

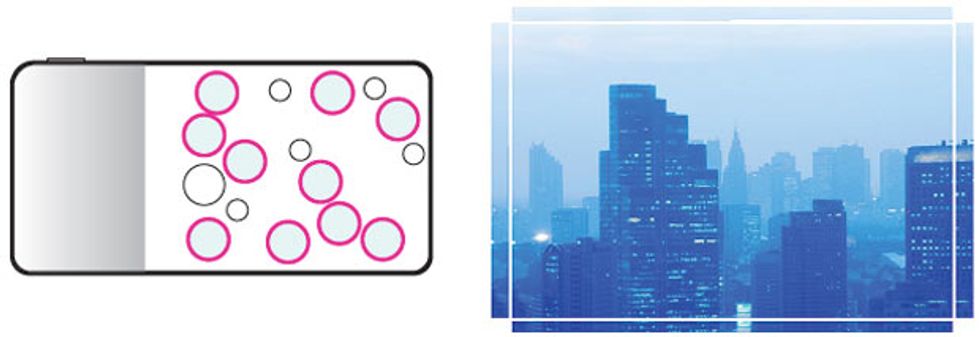

Choices, Choices

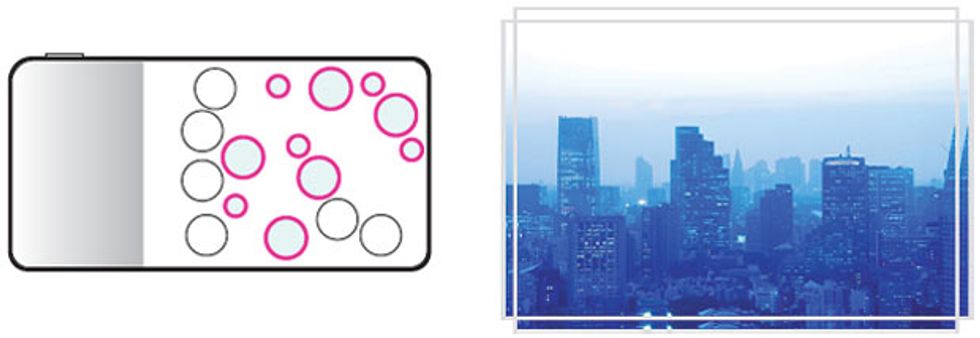

Though the individual camera modules have fixed focal lengths and aperture sizes, combining the images from the lenses in different ways allows photographers to change the depth of field and zoom using the focal-length equivalents of 28 mm through 150 mm.

While at that point I knew it was theoretically possible to use multiple modules to overcome the shortcomings of a small camera, I needed to confirm that such a strategy was truly practical. So I figured I’d better check with some optics experts. In the first half of 2013, I cold-called Julie Bentley, a lens-design expert at the University of Rochester, in New York, and drove 5 hours from where I was living in New Jersey to meet with her.

It started as a contentious conversation: She basically told me I was crazy. But she kept asking questions. I answered them, apparently convincingly, because after about 45 minutes, she became supportive of the approach I was proposing and sent me to Moondog Optics in Fairport, N.Y., a company that does optical design. I met with the CEO, Scott Cahall, who thought what I was suggesting was doable.

And so later in 2013, I joined with Dave Grannan, who had just left Nuance Communications, and officially started Light.

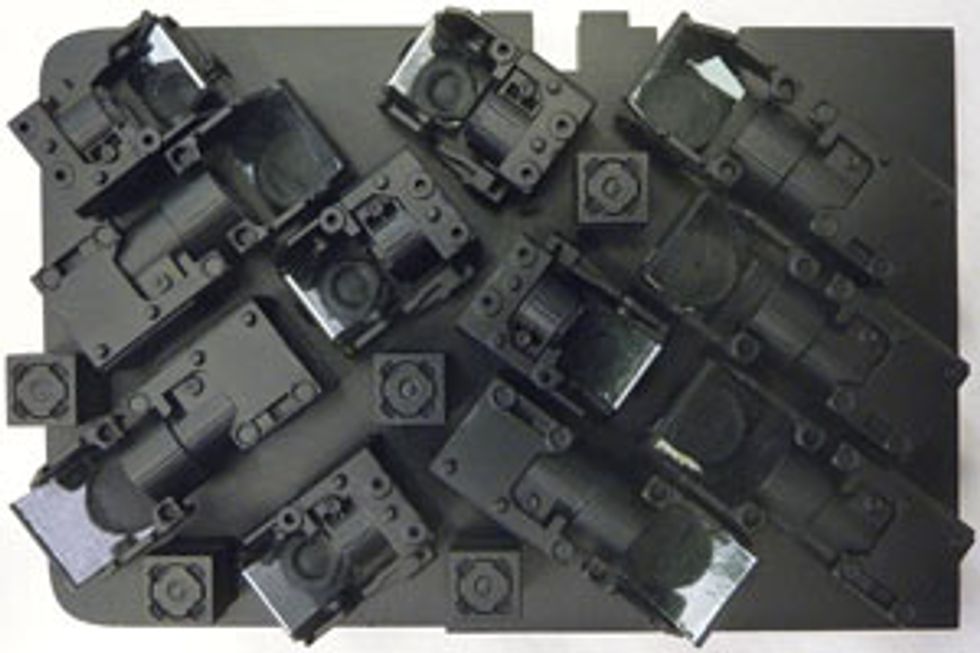

The first and current version of the Light camera, called the L16, has 16 individual camera modules with lenses of three different focal lengths: five are 28-mm equivalent, five are 70-mm equivalent, and six are 150-mm equivalent.

“Equivalent” means that the lens achieves the same field of view as a lens of the specified focal length in a conventional 35-mm film camera. For a simple single-lens element, when light comes from far away and hits the lens, it converges at a point. The focal length represents the distance of that point from the lens. For example, the equivalent focal length of a human eye is around 50 mm. A lens with a longer focal length has a higher magnification and makes the subjects appear larger.
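To make the link between equivalent focal length and field of view concrete, here is a minimal sketch. It assumes an ideal rectilinear lens focused at infinity and the usual 36-mm-wide 35-mm film frame as the reference format; that frame width and the function name are assumptions for illustration, not details taken from the L16.

```python
import math

# Width of a 35 mm film frame in millimeters (assumed reference format
# for "equivalent" focal lengths).
FULL_FRAME_WIDTH_MM = 36.0


def horizontal_fov_degrees(equiv_focal_length_mm, frame_width_mm=FULL_FRAME_WIDTH_MM):
    """Horizontal field of view of an ideal rectilinear lens focused at infinity."""
    half_angle = math.atan(frame_width_mm / (2.0 * equiv_focal_length_mm))
    return math.degrees(2.0 * half_angle)


if __name__ == "__main__":
    for f in (28, 70, 150):
        print(f"{f:3d} mm equivalent: {horizontal_fov_degrees(f):.1f} degrees wide")
```

The longer the equivalent focal length, the narrower the angle the lens takes in, which is why the 150-mm modules see a much smaller slice of the scene than the 28-mm modules.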

All the lenses in the L16 are molded plastic, and all the camera modules capture images using standard CMOS sensors, similar to those used in smartphone cameras. Each camera module has a lens, an image sensor, and an actuator for moving the lens to focus the image. Each lens has a fixed aperture of F2.4; that is, the focal length of the lens divided by the diameter of its aperture is equal to 2.4. (A lens with a lower F number has a larger aperture and so lets in more light.)
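The F-number relationship can be written out in a few lines. This is a sketch, with hypothetical function names; the article gives only equivalent focal lengths, so any physical focal length passed in (such as 4 mm) is an illustrative assumption rather than an L16 specification.

```python
def aperture_diameter_mm(focal_length_mm, f_number):
    """Diameter of the aperture: focal length divided by F number."""
    return focal_length_mm / f_number


def relative_light(f_number, reference_f_number):
    """Light gathered per unit sensor area, relative to a reference F number.

    Gathered light scales with aperture area, i.e. with 1 / f_number**2.
    """
    return (reference_f_number / f_number) ** 2


if __name__ == "__main__":
    # Hypothetical 4 mm physical focal length at F2.4.
    print(f"aperture diameter: {aperture_diameter_mm(4.0, 2.4):.2f} mm")
    # Halving the F number quadruples the light reaching each unit of sensor area.
    print(f"F1.2 vs F2.4: {relative_light(1.2, 2.4):.1f}x the light")
```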

Five of these camera modules capture images at what we think of as a 28-mm field of view; that’s a wide-angle lens on a standard SLR. These camera modules point straight out. Five other modules provide the equivalent of 70-mm telephoto lenses, and six work as 150-mm equivalents. These 11 modules point sideways, but each has a mirror in front of the lens, so they, too, take images of objects in front of the camera. A linear actuator attached to each mirror can adjust it slightly to move the center of its field of view.

Each image sensor has a 13-megapixel resolution. When the user takes a picture, depending on the zoom level, the camera normally selects 10 of the 16 modules and simultaneously captures 10 separate images. Proprietary algorithms are then used to combine the 10 views into one high-quality picture with a total resolution of up to 52 megapixels. The image fusion can be done either in the camera or on another computer.

When you press the shutter button to take a 28-mm image, all five 28-mm modules fire simultaneously, recording five images of the same thing but from slightly different perspectives. All five 70-mm modules also record images. Normally, a 70-mm lens can capture approximately a quarter of the scene that a 28-mm lens takes. But our camera adjusts the mirrors in front of four of those lenses so that different modules point at each of the four quadrants of the 28-mm frame we’re trying to take, so these four 70-mm images effectively end up covering most of the 28-mm frame.

Because four 70-mm images are recorded, each at a 13-megapixel resolution, the camera captures 52 megapixels of information. The fifth 70-mm module points at the center of the 28-mm frame to ensure the best picture quality at the center of the final image. The software in the camera uses information from the 28-mm modules to precisely stitch together the 70-mm images and then combines all the data into one high-resolution, high-quality picture. It’s easy to see how this process lets us capture much more light than if we’d just used one camera module.
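The tiling arithmetic described above can be sketched as follows. The coordinate convention and function names are hypothetical, and the aim points are idealized quadrant centers; the real camera's mirror positions and the overlap between tiles are not given in this article.

```python
MODULE_MEGAPIXELS = 13  # per-module sensor resolution stated in the article


def quadrant_centers():
    """Aim points for four telephoto modules, one per quadrant of the wide frame.

    Coordinates are fractions of the wide frame: (0, 0) is the top-left corner
    and (1, 1) the bottom-right corner.
    """
    return [(x, y) for y in (0.25, 0.75) for x in (0.25, 0.75)]


def aim_points():
    """Four quadrant aim points plus a fifth module aimed at the frame center."""
    return quadrant_centers() + [(0.5, 0.5)]


def tiled_megapixels(n_tiles=4, megapixels_per_module=MODULE_MEGAPIXELS):
    """Upper bound on captured resolution when the tiles do not overlap."""
    return n_tiles * megapixels_per_module


if __name__ == "__main__":
    print("aim points:", aim_points())
    print("captured megapixels:", tiled_megapixels())
```

Four non-overlapping 13-megapixel tiles give the 52-megapixel figure; any overlap between tiles, which stitching needs in practice, would reduce the number of distinct pixels.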

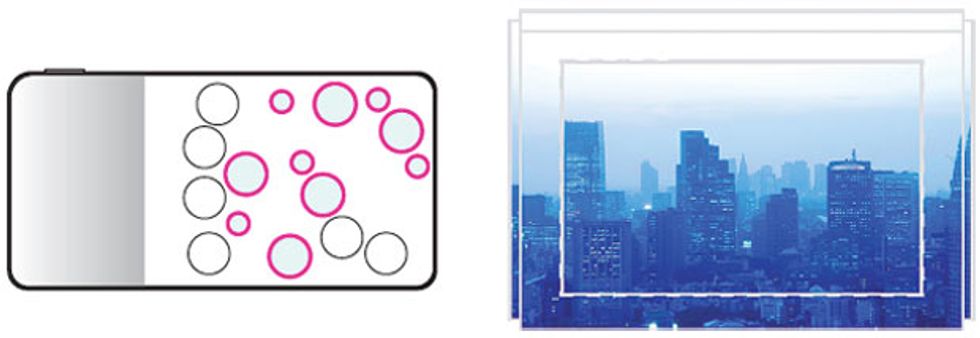

Zoom, Zoom

To shoot an image with the equivalent of a 28-mm focal length, the Light camera’s 28-mm and 70-mm camera modules get into the act; the resulting 52-megapixel image is a combination of the data. To shoot at 70 mm, the 70-mm and 150-mm camera modules go to work and the 28-mm modules rest. The Light camera doesn’t contain any 50-mm modules, so taking a 50-mm image involves the 28-mm and 70-mm modules (the former slightly cropped and the latter slightly overlapped), resulting in a 40-megapixel image.

To take pictures at 70 mm, we move the mirrors so that the five 70-mm modules now point straight out from the camera; all of them cover approximately the same field of view but from slightly different perspectives. We now enlist the 150-mm modules as well, adjusting their mirrors so that they capture four images that tile the 70-mm modules’ field of view. And once again, we combine the many images digitally to provide a better picture than a single-module camera could possibly take, one that rivals a DSLR image.

We can even use our technology to zoom anywhere in the range of 28 to 150 mm. Traditional zoom lenses change focal length by physically moving the lens elements with respect to one another when you rotate the zoom-control ring. Our modules are too small to have either the space or the mechanical precision to accomplish this synchronously across multiple camera modules. So we took a systems approach to solving the problem, using fixed-focal-length lenses.

Suppose you wanted to capture an image with a 50-mm field of view, smaller than what a 28-mm lens captures but larger than that of a 70-mm lens. We activate all the 28-mm camera modules and crop each of the images to the 50-mm frame. (Cropping is not ideal because we lose some sensor area and light.) We also simultaneously use the 70-mm modules. But before we do that, we move the mirrors on four of the 70-mm modules so the captured 70-mm images overlap enough to cover only the 50-mm frame. This way, we retain all of the light collected by the 70-mm modules.
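How much of a 28-mm image survives a crop to the 50-mm frame can be estimated with simple geometry. This is a sketch that assumes ideal rectilinear lenses, for which image scale is proportional to focal length; the function names are hypothetical, and the result says nothing about how the camera's software actually weights the cropped data.

```python
def crop_fraction(module_focal_mm, target_focal_mm):
    """Fraction of each image dimension kept when cropping to a longer focal length.

    For an ideal rectilinear lens, image scale is proportional to focal length,
    so a module_focal_mm image cropped to a target_focal_mm field of view keeps
    module_focal_mm / target_focal_mm of its width and of its height.
    """
    if target_focal_mm < module_focal_mm:
        raise ValueError("cannot crop to a wider field of view than the module captures")
    return module_focal_mm / target_focal_mm


def cropped_megapixels(module_focal_mm, target_focal_mm, sensor_megapixels=13.0):
    """Pixels that survive the crop; area scales with the square of the linear fraction."""
    return sensor_megapixels * crop_fraction(module_focal_mm, target_focal_mm) ** 2


if __name__ == "__main__":
    print(f"linear fraction kept: {crop_fraction(28, 50):.2f}")
    print(f"megapixels kept per 28-mm module: {cropped_megapixels(28, 50):.1f}")
```

Under these assumptions a 28-mm module keeps 56 percent of each dimension, or about 4 of its 13 megapixels, which illustrates why the cropped wide-angle images lose sensor area and light.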

There are other advantages to having multiple lenses. Because the different lenses are set slightly apart from one another, just as your eyes are, the Light camera can obtain images from multiple viewpoints and use them to generate a depth map of the scene. That’s valuable because it allows the software to produce any desired depth of field by appropriately blurring those portions of the scene that are outside a selected range of depths.
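The principle behind a depth map can be shown with the textbook two-camera relation. This is a minimal sketch of the standard pinhole stereo model for two parallel cameras, not a description of Light's depth algorithm; the function name and the example numbers are hypothetical.

```python
def depth_from_disparity(focal_length_px, baseline_m, disparity_px):
    """Distance to a point seen by two parallel cameras (pinhole stereo model).

    focal_length_px: focal length expressed in pixels
    baseline_m:      separation between the two camera centers, in meters
    disparity_px:    horizontal shift of the point between the two images, in pixels
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive; zero means the point is at infinity")
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical numbers: 3000 px focal length, lenses 5 cm apart.
    for disparity in (150, 50, 10):
        depth = depth_from_disparity(3000, 0.05, disparity)
        print(f"disparity {disparity:3d} px -> depth {depth:.1f} m")
```

Nearby objects shift a lot between viewpoints and distant ones shift very little, so measuring that shift for every part of the scene yields a depth estimate for each.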

The software can also change what is called the bokeh, which refers to the aesthetic quality of the blur that appears in the out-of-focus parts of an image. Traditional cameras adjust their lens apertures by opening and closing an iris of sorts made of plastic leaves, overlapped to try to mimic a circular opening. As a result, small, bright, out-of-focus objects appear as regular polygons or circular disks. This is the effect most people are used to. Many photographers consider the ideal bokeh as having a very gentle roll-off, with no sharp edges defining the circle—a Gaussian blur.

Photographers will pay a lot of money for a lens with the right bokeh. In our design, the camera uses software to add blur with the right bokeh to those parts of the scene that are outside the selected depth of field. This approach means that users can get whatever bokeh they want. They can choose the conventional disk-shaped bokeh or one with a Gaussian blur. Or they can get creative—for instance, picking a star-shaped bokeh for use in holiday photos, making small decorative lights appear as stars.
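The difference between a disk-shaped and a Gaussian bokeh comes down to the shape of the blur kernel applied to out-of-focus regions. The sketch below builds both kernels in plain Python; it illustrates the idea only, with hypothetical function names, and is not the camera's rendering code.

```python
import math


def normalize(kernel):
    """Scale a 2-D kernel so its weights sum to 1 (blurring preserves brightness)."""
    total = sum(sum(row) for row in kernel)
    return [[value / total for value in row] for row in kernel]


def disk_kernel(radius):
    """Conventional bokeh: uniform weight inside a circle, zero outside (hard edge)."""
    size = 2 * radius + 1
    return normalize([
        [1.0 if (x - radius) ** 2 + (y - radius) ** 2 <= radius ** 2 else 0.0
         for x in range(size)]
        for y in range(size)
    ])


def gaussian_kernel(radius, sigma):
    """Soft bokeh: weights roll off smoothly from the center with no sharp edge."""
    size = 2 * radius + 1
    return normalize([
        [math.exp(-((x - radius) ** 2 + (y - radius) ** 2) / (2.0 * sigma ** 2))
         for x in range(size)]
        for y in range(size)
    ])
```

A star-shaped bokeh would use the same approach with a star-shaped mask in place of the circle. In a depth-aware renderer the kernel size would presumably grow with distance from the chosen focal plane, but that step is outside this sketch.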

The Light camera also naturally allows for an increased dynamic range. That’s because the modules don’t all have to use the same exposure. We can deliberately overexpose pictures in some modules so that they can image the dark areas with less noise, while underexposing others to capture the highlights perfectly. Because the camera records redundant images, it still has all the information to reconstruct the final picture, but with a much larger dynamic range. Apple iPhone cameras can do this today in their HDR (high dynamic range) mode, but they achieve that by taking a sequence of pictures in time, which can cause motion artifacts.
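Merging differently exposed captures can be sketched for a single pixel. This is an illustrative approach only, not Light's method: it assumes a linear sensor response, perfectly aligned images, and hypothetical thresholds for what counts as too dark or clipped.

```python
def merge_exposures(pixel_values, exposure_times, low=0.05, high=0.95):
    """Estimate scene brightness for one pixel from several exposures.

    pixel_values:   sensor readings scaled to 0.0-1.0, one per exposure
    exposure_times: relative exposure of each reading (same order)
    Readings near 0 (noisy shadows) or near 1 (clipped highlights) are ignored
    when at least one well-exposed reading exists.
    """
    estimates = [value / time for value, time in zip(pixel_values, exposure_times)]
    usable = [
        estimate
        for estimate, value in zip(estimates, pixel_values)
        if low <= value <= high
    ]
    chosen = usable if usable else estimates  # fall back to all readings
    return sum(chosen) / len(chosen)


if __name__ == "__main__":
    exposures = [0.25, 1.0]  # one underexposed module, one normally exposed
    print("highlight:", merge_exposures([0.5, 1.0], exposures))   # long exposure clipped
    print("shadow:   ", merge_exposures([0.025, 0.1], exposures))  # short exposure too dark
```

Because the modules fire at the same instant, the readings being merged describe the same moment, which is what avoids the motion artifacts of sequential HDR capture.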

Recording all these images and then properly combining them into a composite image takes a lot of processing power. We’re using the Qualcomm Snapdragon 820 processor, which today is the state of the art in mobile processing. We also have a custom integrated circuit that enables us to interface 16 cameras with the Snapdragon processor. This allows near real-time processing of images in the camera at a resolution of about three megapixels, which is good enough for sharing on social media.

We expect that most of our users will render full-resolution images on their computers, though. That will be faster and won’t eat up the battery of the mobile unit. In the next generation of our camera, we will build in hardware acceleration of our processing algorithms, which will then enable the camera to process full-resolution images and manipulate the depth of field without unduly taxing the battery or the user’s patience.

The rest of our camera hardware is a standard Android package, which can run Android apps; it’s about the same length and width as a smartphone, but at 21 mm it’s about two to three times as thick. Building on Android lets us easily write a user-friendly camera app to simplify the interface and make it less intimidating to use the camera’s advanced features.

The Light camera can run in either auto or manual mode. The auto mode will allow you to tell the camera what you want to do—take a portrait, say, in which case the camera will activate the flash if it’s dark, select a shallow depth of field to highlight the subject, and adjust the exposure. The manual mode will offer more controls, but we’re not going to ask users to set 10 different exposures on 10 different camera modules. We will likely allow them to set everything that they could adjust on a DSLR—flash, aperture, shutter speed, ISO, and exposure—and let the camera’s software figure out the details.

Our first-generation L16 camera will start reaching consumers early next year, for an initial retail price of $1,699. Meanwhile, we have started thinking about future versions. For example, we can improve the low-light performance. Because we are capturing so many redundant images, we don’t need to have every one in color. With the standard sensors we are using, every pixel has a filter in front of it to select red, green, or blue light. But without such a filter we can collect three times as much light, because we don’t filter two-thirds of the light out. So we’d like to mix in camera modules that don’t have the filters, and we’re now working with On Semiconductor, our sensor manufacturer, to produce such image sensors.

But beyond tweaking our current technology to improve performance, I have a more ambitious goal. I want to use this technology to build a camera with a 600-mm lens equivalent in something the size of a tablet computer, perhaps a little thicker. Today, a 600-mm lens is bigger than a rolling pin, weighs more than 4 kilograms, and costs upwards of $12,000. Only professional wildlife or sports photographers would ever buy one. But if consumers could afford a 600-mm camera, travel with it easily, and take high-quality pictures with it, that would be incredibly cool.

That’s my next challenge. For now, however, I’m looking forward to seeing Light technology migrate into cellphones. Then the cameras we all carry with us everywhere will be as good as the ones we leave sitting at home.

This article appears in the November 2016 print issue as “A Pocket Camera With Many Eyes.”

About the Author

Rajiv Laroia is chief technology officer and cofounder of Light, based in Palo Alto, Calif.