Computer scientists have created algorithms to run all kinds of tests on big hauls of data. Powerful learning algorithms, like those that can predict septic shock, improve crop yields, and filter college and job applications, supposedly remove human error and bias. However, algorithms may be more human than we think.

Computer scientists at the University of Utah, University of Arizona and Haverford College in Pennsylvania created a method to both fish out and fix algorithms that may exhibit unintentional bias based on race, gender, or age.

Algorithms can become biased by picking up on subtleties or trends in data that correlate with a demographic group, even when the data carries no demographic labels. For example, if offers of employment were based on an oral exam score, some minority candidates might be filtered out by an initial algorithmic screening if the scores correlate even slightly with minority status.
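To make the exam example concrete, here is a minimal Python sketch. The scores, the group names "a" and "b", the cutoff of 75, and the helper `selection_rates` are all invented for illustration and do not come from the study. The screening rule looks only at the score, yet the two groups end up with different pass rates.

```python
from collections import defaultdict

def selection_rates(scores, groups, cutoff):
    """Return the fraction of each group whose score meets the cutoff."""
    passed = defaultdict(int)
    total = defaultdict(int)
    for score, group in zip(scores, groups):
        total[group] += 1
        if score >= cutoff:
            passed[group] += 1
    return {g: passed[g] / total[g] for g in total}

# Hypothetical exam scores. The group labels are kept separately and are
# never shown to the screening rule, which only compares score to cutoff.
scores = [82, 75, 91, 68, 88, 79, 64, 71, 85, 60]
groups = ["a", "a", "a", "a", "a", "b", "b", "b", "b", "b"]

rates = selection_rates(scores, groups, cutoff=75)
print(rates)
```

In this made-up data, four of the five candidates in group "a" clear the cutoff but only two of the five in group "b" do, even though the rule itself is blind to group membership.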

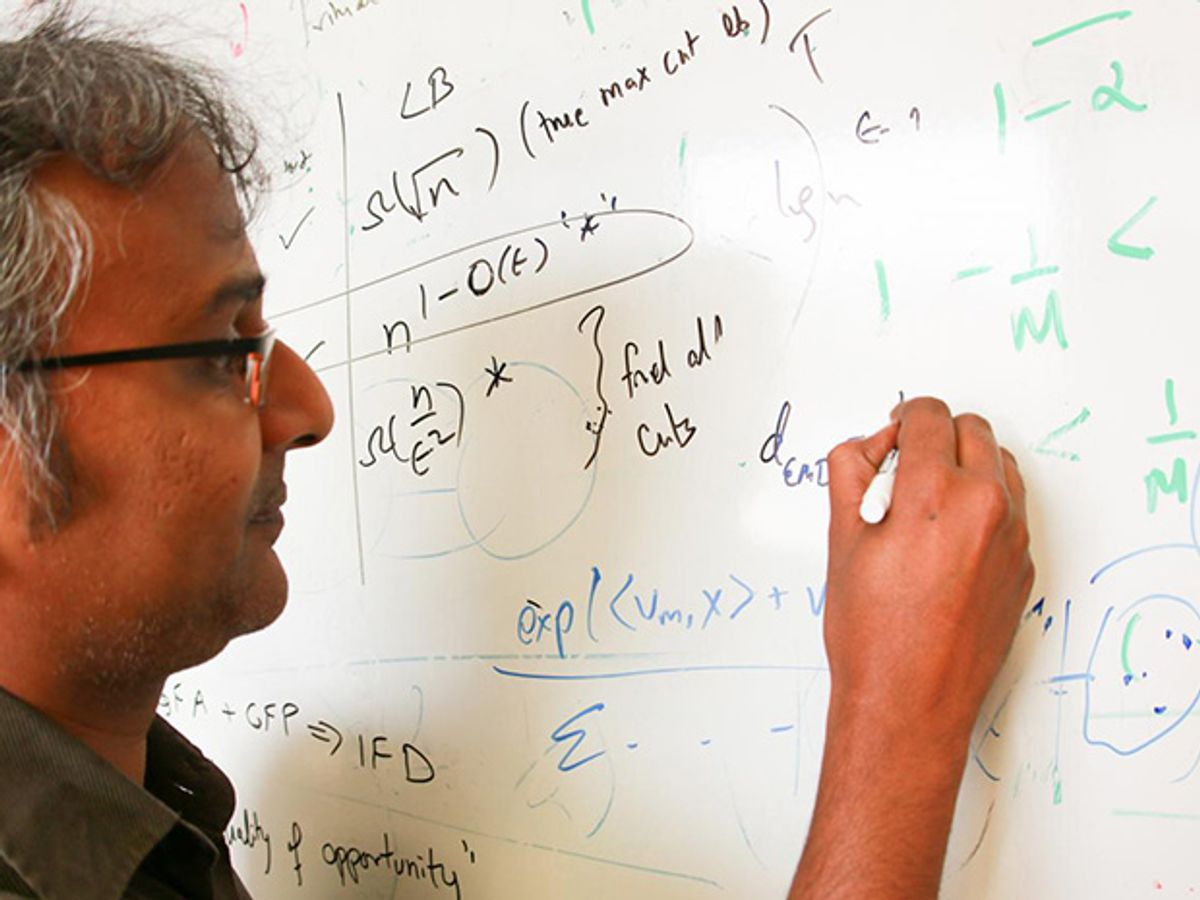

This issue came to the attention of the lead researcher, University of Utah professor Suresh Venkatasubramanian, during a conversation with sociologists about the 1971 U.S. Supreme Court anti-discrimination case Griggs v. Duke Power Co. The court ruled that a business hiring decision was illegal if it was discriminatory along racial, religious, or gender lines or because of disability, regardless of whether the decision was deliberately prejudiced. Venkatasubramanian wanted to know if algorithms succumb to biases unintentionally the way humans do.

Over a conference luncheon, “we ended up doodling on a napkin how to formulize if a machine-learning algorithm was doing what it was supposed to or if it was discriminatory,” says Venkatasubramanian. “The very idea that an algorithm can be biased is difficult for people to draw. It is perhaps the biggest hurdle in the paper itself.”

In the paper, presented on 12 August at the Association for Computing Machinery’s Conference on Knowledge Discovery and Data Mining, the group introduced two new algorithms that work in tandem.

The first implements the disparate-impact rule from the Griggs case and tests whether a particular selection algorithm is discriminatory. If the test shows that the algorithm in question can distinguish a protected attribute, such as whether a data point represents a man or a woman, the algorithm is labeled biased. The second tries to remedy the bias by modifying the data set itself so that any selection algorithm would deliver fair results. It does this by blurring attributes that may be correlated with, say, race or gender.
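The two steps can be sketched in Python, with heavy caveats. The check below assumes the "four-fifths" rule of thumb from U.S. employment guidelines as the threshold: a group is flagged if its selection rate falls below 80 percent of the reference group's. The repair is a simple rank-based blurring written for this illustration. Neither function reproduces the researchers' actual procedures, and the function names, data, and cutoff are hypothetical.

```python
from bisect import bisect_right

def disparate_impact_ratio(selected, groups, protected, reference):
    """Selection rate of the protected group divided by the reference group's.

    Assumes both groups are present and the reference rate is not zero.
    """
    def rate(g):
        picks = [s for s, grp in zip(selected, groups) if grp == g]
        return sum(picks) / len(picks)
    return rate(protected) / rate(reference)

def repair_scores(scores, groups):
    """Replace each score with the pooled score at the same within-group rank.

    Every group ends up spread over the pooled distribution, so a score by
    itself reveals less about group membership, while the ordering of
    candidates inside each group is preserved.
    """
    pooled = sorted(scores)
    by_group = {}
    for s, g in zip(scores, groups):
        by_group.setdefault(g, []).append(s)
    for ranked in by_group.values():
        ranked.sort()
    repaired = []
    for s, g in zip(scores, groups):
        ranked = by_group[g]
        rank = bisect_right(ranked, s)  # how many group scores are <= s
        # Position in the pooled list at the same quantile (integer math).
        idx = rank * len(pooled) // len(ranked) - 1
        repaired.append(pooled[idx])
    return repaired

# Hypothetical data: exam scores and a group label the selector never sees.
scores = [82, 75, 91, 68, 88, 79, 64, 71, 85, 60]
groups = ["a", "a", "a", "a", "a", "b", "b", "b", "b", "b"]
cutoff = 75

before = disparate_impact_ratio([s >= cutoff for s in scores], groups, "b", "a")
repaired = repair_scores(scores, groups)
after = disparate_impact_ratio([s >= cutoff for s in repaired], groups, "b", "a")

FOUR_FIFTHS = 0.8  # assumed threshold, not taken from the article
print("flagged before repair:", before < FOUR_FIFTHS)
print("flagged after repair:", after < FOUR_FIFTHS)
```

On this toy data the raw scores fail the four-fifths check and the repaired scores pass it. The repair changes the data rather than the selector, which matches the article's description of making any downstream selection algorithm behave fairly, though a real repair has to trade fairness off against how much useful signal the blurring destroys.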

To ensure that the new algorithms worked, the researchers ran the test on three sets of data that had previously been analyzed. They found that their technique of detection and repair was more effective than competing methods.

Understanding how an algorithm becomes biased is fascinating, says Venkatasubramanian. The bias germinates innocently enough within a simple processing system and develops in a carefully controlled setting. But the self-improving nature of learning algorithms raises concern. Venkatasubramanian and his colleagues wonder whether we can ever trust the fairness of algorithms. To that end, the researchers have begun stockpiling relevant information about where and how algorithms go wrong. Venkatasubramanian also hopes that the study will help lawmakers understand how algorithms and big data should be treated in a legal case.

Many people believe that an algorithm is just code, but that view is no longer valid, says Venkatasubramanian. “An algorithm has experiences, just as a person comes into life and has experiences.”