Almost from the moment Cerebras Systems announced a computer based on the largest single computer chip ever built, the Silicon Valley startup declared its intention to build an even heftier processor. Today, the company announced that its next-gen chip, the Wafer Scale Engine 2 (WSE 2), will be available in the third quarter of this year. WSE 2 is just as big physically as its predecessor, but it has enormously increased amounts of, well, everything. The goal is to keep ahead of the ever-increasing size of neural networks used in machine learning.

“In AI compute, big chips are king, as they process information more quickly, producing answers in less time, and time is the enemy of progress in AI,” Dhiraj Malik, vice president of hardware engineering, said in a statement.

Cerebras has always been about taking a logical solution to the problem of machine learning to the extreme. Training neural networks took too long when Andrew Feldman cofounded the company in 2015: weeks for the big ones. The biggest bottleneck was that data had to shuttle back and forth between the processor and external DRAM, eating up both time and energy. The inventors of the original Wafer Scale Engine figured that the answer was to make the chip big enough to hold all the data it needed right alongside its AI processor cores. With gigantic networks for natural language processing, image recognition, and other tasks on the horizon, you'd need a really big chip. How big? As big as possible, meaning the size of an entire wafer of silicon (with the round bits cut off), or 46,225 square millimeters.

| | WSE 2 | WSE | Nvidia A100 |
|---|---|---|---|
| Size | 46,225 mm² | 46,225 mm² | 826 mm² |
| Transistors | 2.6 trillion | 1.2 trillion | 54.2 billion |
| Cores | 850,000 | 400,000 | 7,344 |
| On-chip memory | 40 gigabytes | 18 GB | 40 megabytes |
| Memory bandwidth | 20 petabytes/s | 9 PB/s | 155 GB/s |
| Fabric bandwidth | 220 petabits/s | 100 Pb/s | 600 gigabytes/s |
| Fabrication process | 7 nm | 16 nm | 7 nm |

That wafer size is one of the few stats that hasn't changed from the WSE to the new WSE 2, as you can see in the table above. (For comparison with a more conventional AI processor, Cerebras uses Nvidia's AI-chart-topping A100.)
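To put the table in proportion, the ratios can be worked out directly. The following is a minimal sketch that uses only the figures from the table above; the dictionary layout and the helper names `ratio` and `density` are illustrative choices, not anything published by Cerebras. Note that fabric bandwidth is quoted in bits per second for the two Wafer Scale Engines and in bytes per second for the A100, so the A100 figure is converted to bits before comparing.

```python
# Figures copied from the comparison table, normalized to base units.
PETA, GIGA, MEGA = 1e15, 1e9, 1e6

area_mm2 = {"WSE 2": 46_225, "WSE": 46_225, "A100": 826}

specs = {
    "transistors": {"WSE 2": 2.6e12, "WSE": 1.2e12, "A100": 54.2e9},
    "cores": {"WSE 2": 850_000, "WSE": 400_000, "A100": 7_344},
    "on_chip_memory_bytes": {"WSE 2": 40 * GIGA, "WSE": 18 * GIGA, "A100": 40 * MEGA},
    "memory_bw_bytes_per_s": {"WSE 2": 20 * PETA, "WSE": 9 * PETA, "A100": 155 * GIGA},
    # The table gives the A100 fabric figure in bytes/s; multiply by 8 for bits/s.
    "fabric_bw_bits_per_s": {"WSE 2": 220 * PETA, "WSE": 100 * PETA, "A100": 600 * GIGA * 8},
}


def ratio(metric, chip_a, chip_b):
    """Return how many times larger chip_a's figure is than chip_b's."""
    return specs[metric][chip_a] / specs[metric][chip_b]


def density(chip):
    """Return transistors per square millimeter for the given chip."""
    return specs["transistors"][chip] / area_mm2[chip]


for metric in specs:
    gen = ratio(metric, "WSE 2", "WSE")
    vs_gpu = ratio(metric, "WSE 2", "A100")
    print(f"{metric:24s} WSE 2 / WSE = {gen:5.2f}x   WSE 2 / A100 = {vs_gpu:12,.0f}x")

for chip in area_mm2:
    print(f"{chip:6s} {density(chip) / 1e6:6.1f} million transistors per mm^2")
```

Because the two wafers have the same area, the generational gain in transistor density equals the gain in transistor count. Every generational ratio in the table falls between roughly 2.1 and 2.2.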

What Made It Happen?

The most obvious and consequential driver is the move from TSMC's 16-nanometer manufacturing process, which was more than five years old by the time WSE came out, to the megafoundry's 7-nm process, leapfrogging the 10-nanometer process. A jump like that roughly doubles transistor density. The process change should also result in about a 40 percent speed improvement and a 60 percent reduction in power, according to TSMC's description of its technologies.

“There are always physical design challenges when you change nodes,” says Feldman. “All sorts of things are geometry dependent. Those were really hard, but we had an extraordinary partner in TSMC.”

The move to 7-nm alone would spell a big improvement, but according to Feldman, the company has also made improvements to the microarchitecture of its AI cores. He wouldn't go into details, but says that after more than a year working with customers, Cerebras has learned some lessons and incorporated them into the new cores.

Which brings us to the next thing that's driving the changes between WSE and WSE 2: the customers. Although Cerebras had some when it launched WSE (all undisclosed at the time), it has a much longer list now and much more experience serving them. The customer list is heavy on scientific computing:

| Customer | Use |
|---|---|
| Argonne National Lab | Cancer therapeutics, COVID-19 research, gravity wave detection, materials science and materials discovery |
| Edinburgh Parallel Computing Centre | Natural language processing, genomics, COVID-19 research |
| GlaxoSmithKline | Drug discovery, multi-lingual research synthesis |
| Lawrence Livermore National Lab | Integrated into Lassen, the 8th most powerful supercomputer; used for fusion simulation, traumatic brain injury research, cognitive simulation |
| Pittsburgh Supercomputing Center | Component of a new research supercomputer called Neocortex |
| Unnamed others in heavy manufacturing, pharmaceuticals, biotech, military, and intelligence | We may never know |

Finally, there's the big increase in the size of the company. IEEE Spectrum visited Cerebras in 2019, when it had one small building in Sunnyvale. “The team has basically doubled in size,” says Feldman. The company now has about 300 engineers in Silicon Valley, San Diego, Toronto, and Tokyo and more than a dozen open positions listed on its website.

What hasn't changed (much)?

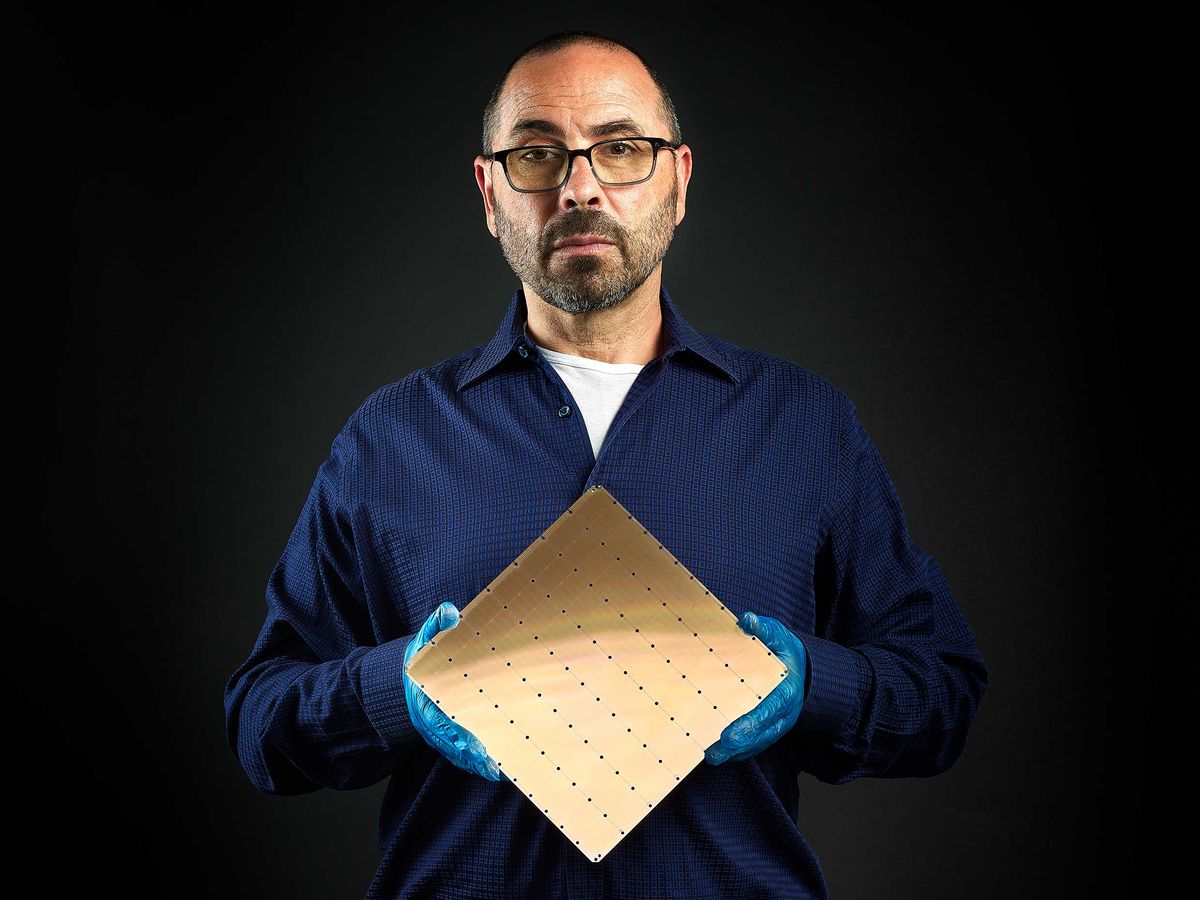

For fairly obvious reasons, the size of the chip itself hasn't changed. Three hundred millimeters is still the maximum wafer diameter in mass production, so the chip's outer dimensions can't change. And despite having twice as many AI cores, the WSE 2 looks just like WSE to the naked eye. It's still divided into a 7 x 12 grid of rectangles, but that's just an artifact of the chip-making process.

The computer system that hosts the WSE 2, called the CS-2, hasn't really changed much either. “We were able to carry forward significant portions of the physical design,” says Feldman.

The CS-2 still takes up one-third of a standard rack, consumes about 20 kilowatts, relies on a closed-loop liquid cooling system, and has some pretty big cooling fans. Heat had been one of the biggest issues when developing a host system for the original WSE. That chip needed some 20,000 amps of current fed to it from one million copper connections to a fiberglass circuit board atop the wafer. Keeping all that aligned as heat expanded the wafer and the circuit board meant inventing new materials and took more than a year of development. While the CS-2 required some new engineering, it didn't need that degree of wholesale invention, according to Feldman. (With all the things that didn't change, the deep dive we did on Cerebras' CS-1 is still pretty relevant. It details some of the many things that had to be invented to bring that computer to life.)

Another holdover is how CS-2 uses all those hundreds of thousands of cores to train a neural network. The software allows users to write their machine learning models using standard frameworks such as PyTorch and TensorFlow. Then its compiler devotes variously sized, physically contiguous portions of the WSE 2 to different layers of the specified neural network. It does this by solving a “place and route” optimization problem that ensures that the layers all finish their work at roughly the same pace, so information can flow through the network without stalling. Cerebras had to make sure the “software was sufficiently robust to compile not just 400,000 cores but 850 thousand cores… to do place and route on things that are 2-2.3 times larger,” says Feldman.
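The balancing idea behind that optimization can be illustrated with a toy example. The sketch below is not Cerebras's compiler and ignores the hard parts of real place and route, such as keeping each region physically contiguous and routing data between regions. It only shows the load-balancing step: giving each layer a share of cores proportional to its work, so that every layer takes about the same time per sample. The function name `allocate_cores` and the per-layer workloads are hypothetical, chosen purely for illustration.

```python
def allocate_cores(layer_work, total_cores):
    """Split total_cores across layers in proportion to each layer's work.

    layer_work holds positive integers (arbitrary units of compute per sample).
    Returns a list of core counts, one per layer, that sums to total_cores.
    """
    n = len(layer_work)
    if total_cores < n:
        raise ValueError("need at least one core per layer")
    if any(w <= 0 for w in layer_work):
        raise ValueError("layer work must be positive")

    total_work = sum(layer_work)
    spare = total_cores - n  # cores left after reserving one per layer

    # Integer arithmetic keeps the proportional shares exact and never over-allocates.
    cores = [1 + (spare * w) // total_work for w in layer_work]

    # Rounding down leaves fewer than n cores unassigned; give each one
    # to whichever layer is currently the slowest.
    for _ in range(total_cores - sum(cores)):
        slowest = max(range(n), key=lambda i: layer_work[i] / cores[i])
        cores[slowest] += 1
    return cores


# Hypothetical five-layer network; the work figures are made up.
layer_work = [120, 480, 960, 240, 40]
cores = allocate_cores(layer_work, 850_000)
times = [w / c for w, c in zip(layer_work, cores)]

for i, (c, t) in enumerate(zip(cores, times)):
    print(f"layer {i}: {c:7d} cores, relative time per sample {t:.6f}")
```

With the work spread this way, no layer sits idle waiting for a slower neighbor, which is the property the article describes as letting information flow through the network without stalling.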

This article appears in the July 2021 print issue as “Supersize AI."

Samuel K. Moore is the senior editor at IEEE Spectrum in charge of semiconductors coverage. An IEEE member, he has a bachelor's degree in biomedical engineering from Brown University and a master's degree in journalism from New York University.