A growing fleet of smart cars may add their street camera views to those of the surveillance camera networks already covering many major cities. That could open the door for a new technology that enables different video cameras to “talk” with one another and track the same person across many camera views, possibly giving rise to Google Earth-style maps that display pedestrian and vehicle traffic.

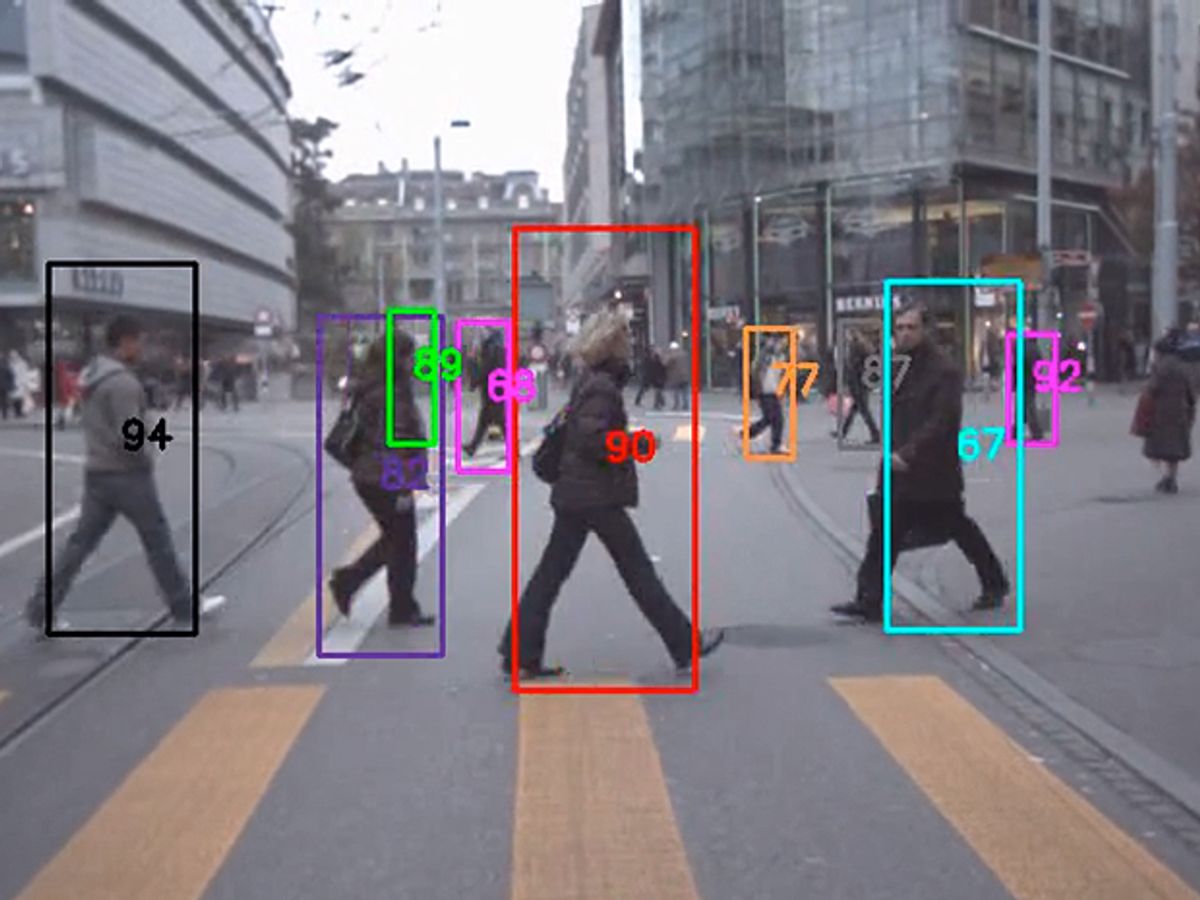

The technology is based on a computer algorithm that compares different camera views and learns to recognize the same individual across them by focusing on body color, texture and movement. Researchers envision a large-scale version of the system tracking pedestrian traffic on a virtual map, perhaps displayed on a car’s GPS screen, or enabling police to easily track fleeing suspects across multiple surveillance camera views.
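To make the matching idea concrete, here is a minimal sketch of recognizing a person across two camera views by color alone. It is not the researchers' algorithm: it ignores texture and movement, assumes each person has already been cropped out of the frame as a list of RGB pixels, and uses a simple histogram-intersection score. The function names (`color_histogram`, `similarity`, `best_match`) and the sample pixel values are hypothetical.

```python
from collections import Counter


def color_histogram(pixels, bins=4):
    """Quantize RGB pixels into coarse buckets and return normalized frequencies."""
    step = 256 // bins
    counts = Counter((r // step, g // step, b // step) for r, g, b in pixels)
    total = sum(counts.values())
    return {bucket: n / total for bucket, n in counts.items()}


def similarity(hist_a, hist_b):
    """Histogram intersection: 1.0 for identical distributions, 0.0 for disjoint ones."""
    return sum(min(p, hist_b.get(bucket, 0.0)) for bucket, p in hist_a.items())


def best_match(query_pixels, candidates):
    """Return the id of the candidate whose color histogram best matches the query."""
    query = color_histogram(query_pixels)
    return max(
        candidates,
        key=lambda cid: similarity(query, color_histogram(candidates[cid])),
    )


# Hypothetical data: camera A sees someone in a red top and dark blue trousers.
seen_by_a = [(200, 30, 30)] * 80 + [(20, 20, 120)] * 20

# Camera B sees two people; the first looks similar under slightly different lighting.
seen_by_b = {
    "person_1": [(210, 40, 35)] * 75 + [(25, 25, 110)] * 25,
    "person_2": [(30, 180, 40)] * 70 + [(20, 20, 20)] * 30,
}

print(best_match(seen_by_a, seen_by_b))
```

Coarse color buckets make the comparison tolerant of small lighting differences between cameras, which is one reason a real system would likely combine color with other cues such as the texture and movement mentioned above.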

“Our idea is to enable the dynamic visualization of the realistic situation of humans walking on the road and sidewalks, so eventually people can see the animated version of the real-time dynamics of city streets on a platform like Google Earth,” said Jenq-Neng Hwang, a professor of electrical engineering at the University of Washington, in a news release.

Hwang’s team of researchers tested the algorithm with cameras placed on moving cars. They trained individual cameras to work together in pairs using a set of test footage. Such testing led to the new work, which was presented at the Intelligent Transportation Systems Conference sponsored by IEEE and held in Qingdao, China.

But the technology does not have to work with only car-mounted cameras. The team has experimented with using their algorithm on cameras carried by flying drones. There is also no reason such an algorithm couldn’t eventually extend to existing surveillance camera systems in big cities such as New York City and London.

The algorithm could also aid law enforcement in tracking individuals through multiple surveillance cameras scattered across a city. Such capability could have come in handy for U.S. detectives hunting the Boston Marathon bombing suspects in 2013.

Hwang even describes how the algorithm could allow retail stores to track individual customers walking around and deduce their shopping preferences—perhaps enabling stores to quickly send a coupon for certain items to the shopper’s smartphone.

The number of cameras usable by the algorithm will likely only grow in the near future as flying drones fill the skies, car-mounted cameras continue to proliferate, and more pedestrians walk around with smartphones in hand or smart glasses on their faces.

A technology that lets cameras more easily track individual people could raise new privacy concerns. Hwang acknowledged such concerns in a University of Washington video, but emphasized the benefits for public safety. Just how individual communities choose to weigh that tradeoff in deploying such technology remains to be seen.

Jeremy Hsu has been working as a science and technology journalist in New York City since 2008. He has written on subjects as diverse as supercomputing and wearable electronics for IEEE Spectrum. When he’s not trying to wrap his head around the latest quantum computing news for Spectrum, he also contributes to a variety of publications such as Scientific American, Discover, Popular Science, and others. He is a graduate of New York University’s Science, Health & Environmental Reporting Program.