"When building a completely new system or test bed, the risks of designing or developing a fully custom radio from a circuit board are too great to take on because the development stage may require plenty of redesigning. Also, the system may need various adjustments after it has started going through complete runs or during the experiment. Choosing a COTS solution has significant advantages to easily performing measurements while making those changes."

- Masayuki Ohdo (大堂 雅之), National Institute of Information and Communications Technology (国立研究開発法人 情報通信研究機構)

The Challenge:

We aimed to develop wireless communication technology that could remarkably reduce the latency caused by a series of communication-related processes while multiple mobile phones, also called user equipment (UE), simultaneously connect to a single base station. To achieve this, we needed to design a completely new wireless communication method from scratch, build a high-performing test bed within a short time period, and obtain experimental results to prove the validity of that method.

The Solution:

We used commercial off-the-shelf (COTS) software defined radio (SDR) solutions that offer flexible software support for various specifications and functions to build a high-performing system in a short period, while avoiding the risks inherent in a new design. We created an environment to prove the validity of the new communication method based on real-world radio propagation by using USRP RIO solutions, which emulate UEs, and combining NI’s PXI products and FlexRIO devices to emulate a base station.

Author(s):

Masayuki Ohdo (大堂 雅之) - National Institute of Information and Communications Technology (国立研究開発法人 情報通信研究機構)

Masafumi Moriyama (森山 雅文) - National Institute of Information and Communications Technology (国立研究開発法人 情報通信研究機構)

Hayato Tezuka (手塚 隼人) - National Institute of Information and Communications Technology (国立研究開発法人 情報通信研究機構)

Takuji Sasoh (笹生 拓児) - Dolphin System Co., Ltd.

Background

The wireless communication industry expects 5G to be the core technology for the Internet of Things (IoT), which makes 5G an important topic for R&D. The number of applications built on 5G is expected to increase dramatically in the future. It is clear that we need new wireless access technologies to accommodate an even greater number of UEs. In terms of the mobile communications market, businesses and research organizations around the world are investigating new technologies. The 3rd Generation Partnership Project (3GPP) is formulating standards using some of those technologies as a basis. In Japan, the Ministry of Internal Affairs and Communications is boosting the R&D of technology geared toward expanding radio wave resources. It is promoting businesses and organizations that focus on improving wireless technologies and supplying them with funds so that they can find and verify new ideas. One of the R&D cases created by this Ministry is called “High-Efficiency Communication Method Research and Development Concerning Mobile Communication Networks That Store Multiple Devices.” Our group, the Wireless System Research Lab of the Wireless Network Research Center, is part of the National Institute of Information and Communications Technology (NICT). We are one of the institutions entrusted with this project.

This project has required the development of technology that can achieve simultaneous connections from multiple UEs to a single base station, as well as technology that reduces latency caused by various processes that accompany communication. We regard 5G as a great research topic, so we wanted to address this issue by targeting the physical layer.

What does it mean to achieve simultaneous connections from multiple UEs? Consider the uplink from the UEs to the base station: even when multiple UEs transmit at the same frequency and at the same time to the same base station, the base station can recover the individual data sent from each device. This technique uses frequency resources efficiently and relates directly to accommodating many UEs. The other technique, latency reduction, is indispensable in making 5G a reality, especially for applications that require real-time control (such as controls for drones and autonomous vehicles). Both are important, but they are mostly discussed as separate issues. Even in 3GPP, simultaneous connections from many UEs and low latency are treated as two different use cases: massive machine-type communication (mMTC) and Ultra-Reliable and Low Latency Communications (URLLC), respectively. Given how 3GPP handles them, achieving both simultaneous connections from multiple UEs and low latency might lie even further in the future than 5G. We ventured to create a solution that balances the two. We proposed a method that achieves simultaneous connections from five UEs with a latency of less than 5 ms, which goes beyond 3GPP's current latency target. For reference, the target latency for small-packet (20 byte) transmissions assumed in the usage scenarios for 3GPP's mMTC is 10 s (3GPP TR 38.913). The target number of simultaneously connected UEs will be discussed in the future.

The Issue

As previously stated, in simultaneous connections multiple UEs send radio waves to one base station at the same frequency and at the same time. In other words, the radio waves reach the base station overlapping one another. In the context of wireless communication, this kind of situation is called a non-orthogonal state. The base station must make full use of signal processing technology to separate and detect the signal from each device. To achieve this, we first configured the wireless frame sent by the transmitter with a data signal and a reference signal. The data signal carries the payload, such as voice or any other data one wishes to send. The reference signal carries information that identifies the UE, and it also allows the base station to identify the characteristics of the transmission path from that UE to the base station. The latter information is used to suppress or eliminate the interference between the data signals of different UEs. Based on this information, the base station separates the received signals and runs the processing that corresponds to each UE.
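
The role of the reference signal can be illustrated with a small simulation. This article does not specify the separation algorithm that runs on the base station, so the sketch below swaps in a textbook approach and should be read only as an illustration: it assumes a base station with several receive antennas, flat (single-tap) channels, orthogonal DFT sequences as reference signals, least-squares channel estimation, and zero-forcing separation. The function name, parameters, and all numeric values are hypothetical.

```python
import numpy as np


def separate_ues(n_ues=5, n_rx=8, n_data=200, snr_db=20.0, seed=0):
    """Toy uplink: all UEs transmit at once on the same frequency.

    Returns the bit error rate after the base station separates the UEs.
    Every modeling choice here is an assumption made for illustration.
    """
    rng = np.random.default_rng(seed)

    # Hypothetical reference signals: one orthogonal DFT sequence per UE.
    n_ref = n_ues
    k = np.arange(n_ref)
    ref = np.exp(-2j * np.pi * np.outer(k, k) / n_ref)  # shape (n_ues, n_ref)

    # Random QPSK data signal for each UE.
    bits = rng.integers(0, 2, size=(n_ues, n_data, 2))
    data = ((1 - 2 * bits[..., 0]) + 1j * (1 - 2 * bits[..., 1])) / np.sqrt(2)

    # Each frame is a reference signal followed by a data signal.
    tx = np.hstack([ref, data])

    # Assumed flat channel from every UE to every receive antenna.
    h = (rng.standard_normal((n_rx, n_ues))
         + 1j * rng.standard_normal((n_rx, n_ues))) / np.sqrt(2)

    # The base station sees the overlapped (non-orthogonal) sum plus noise.
    noise_std = 10 ** (-snr_db / 20)
    noise = noise_std * (rng.standard_normal((n_rx, tx.shape[1]))
                         + 1j * rng.standard_normal((n_rx, tx.shape[1]))) / np.sqrt(2)
    rx = h @ tx + noise

    # Least-squares channel estimate; valid because ref @ ref^H = n_ref * I.
    h_hat = rx[:, :n_ref] @ ref.conj().T / n_ref

    # Zero-forcing separation of the overlapped data signals.
    est = np.linalg.pinv(h_hat) @ rx[:, n_ref:]

    # QPSK decisions: a negative real/imaginary part maps to bit 1.
    bits_hat = np.stack([est.real < 0, est.imag < 0], axis=-1).astype(int)
    return float(np.mean(bits_hat != bits))


if __name__ == "__main__":
    print("bit error rate:", separate_ues())
```

The reference part of the frame is what makes separation possible in this sketch: without the per-UE channel estimate, the receiver has no way to tell the overlapped data signals apart.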

Another issue is the reduction of latency. Latency here is the sum of three parts: the processing time at the UE (for example, from when transmission data is generated until that data is turned into a wireless signal), the time required for radio propagation (the latency of the wireless section), and the processing time at the base station from when the wireless signal is received until the data of each UE is output. To reduce latency, we decided to adopt grant-free transmission and to shorten the transmission time interval (TTI). With grant-free transmission, when a request for data communication is generated at the UE, the UE can transmit data immediately without the base station granting permission beforehand. The preprocessing between the base station and the UE is simple, which curbs latency. Shortening the TTI lowers the latency of the wireless section: the smallest transmission unit, which comprises a reference signal and a data signal, is reduced from the 1 ms of the existing LTE method to 0.5 ms.
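
A back-of-the-envelope budget shows how the two measures combine. In the sketch below, only the TTI values (1 ms and 0.5 ms) and the 5 ms target come from this article. The processing times, the propagation time, and the rule that a grant handshake costs two TTIs are placeholder assumptions chosen purely to make the arithmetic concrete.

```python
from dataclasses import dataclass


@dataclass
class LatencyBudget:
    tti_ms: float            # smallest transmission unit (reference + data signal)
    ue_processing_ms: float  # data generated -> wireless signal (assumed value)
    propagation_ms: float    # radio propagation time (assumed value)
    bs_processing_ms: float  # signal received -> per-UE data output (assumed value)
    grant_round_trips: int   # 0 for grant-free transmission

    def total_ms(self) -> float:
        # Assumption: each grant handshake costs two TTIs
        # (one request uplink, one grant downlink).
        handshake = 2 * self.grant_round_trips * self.tti_ms
        return (handshake + self.ue_processing_ms + self.tti_ms
                + self.propagation_ms + self.bs_processing_ms)


# Placeholder processing and propagation times, identical in both cases.
common = dict(ue_processing_ms=1.0, propagation_ms=0.001, bs_processing_ms=2.0)

lte_like = LatencyBudget(tti_ms=1.0, grant_round_trips=1, **common)
grant_free = LatencyBudget(tti_ms=0.5, grant_round_trips=0, **common)

print(f"grant-based, 1 ms TTI:  {lte_like.total_ms():.3f} ms")
print(f"grant-free, 0.5 ms TTI: {grant_free.total_ms():.3f} ms")
```

With these placeholder numbers, removing the grant handshake saves more than halving the TTI does, but real figures depend entirely on the measured processing times.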

This project started without even the basic communication specifications. We therefore had to design everything from scratch, including the frequency band and bandwidth used and the signal frame format. After determining these specifications, we described the functions that enable simultaneous connections from multiple UEs and low latency as a program and verified them through computer simulation. The project also required us not only to construct a theory on paper but also to build a system and verify the algorithm using real-world signals. In the end, we had to conduct a field test and obtain results proving that the wireless communication algorithm was effective. So, in parallel with constructing the theory, we looked for a system we could use to verify it with over-the-air signals. However, there were various gaps between the simulation and an actual prototyping system, as well as many uncertain elements, and we had no slack at all in our schedule. All things considered, building a fully customized system from scratch was not a realistic solution.

Solutions and Effects

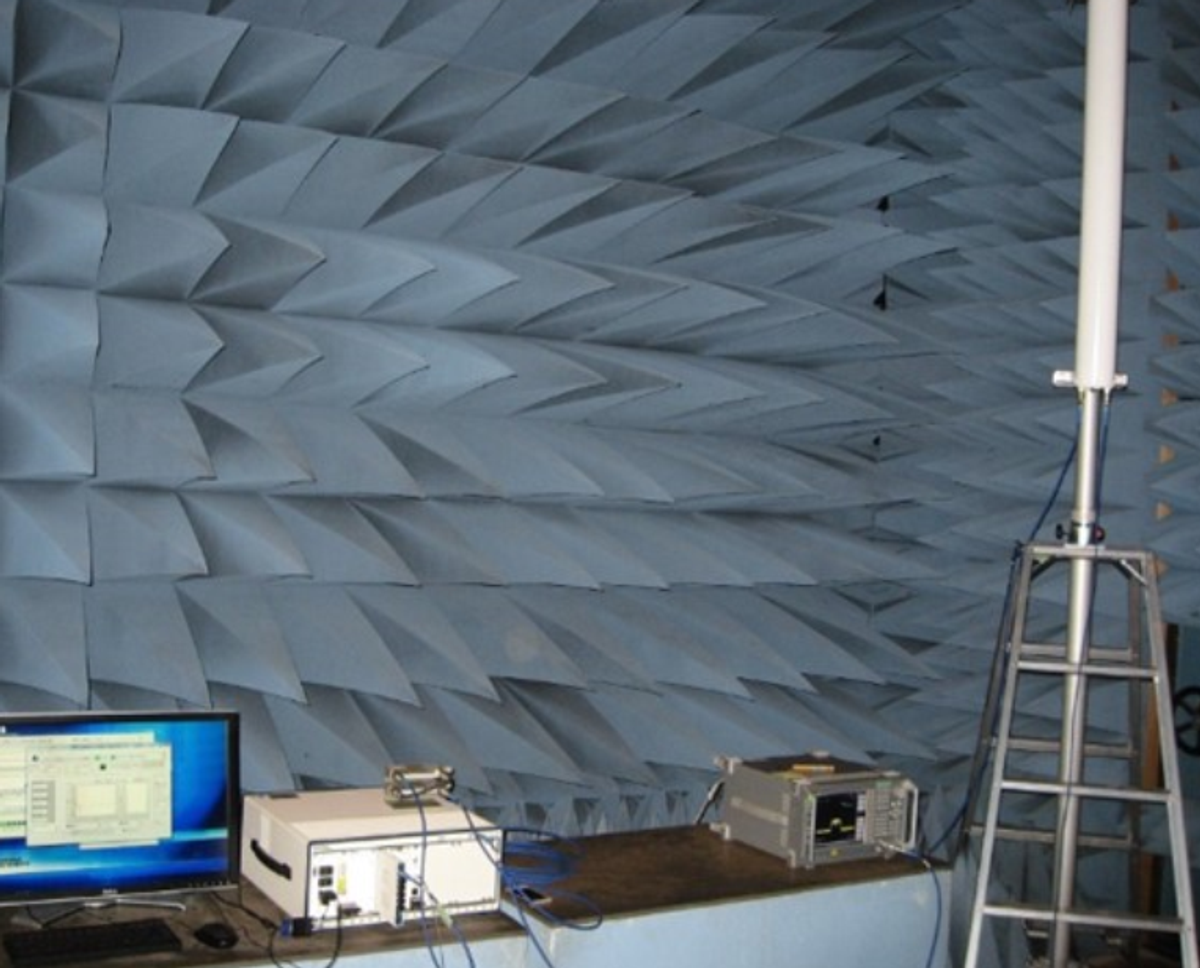

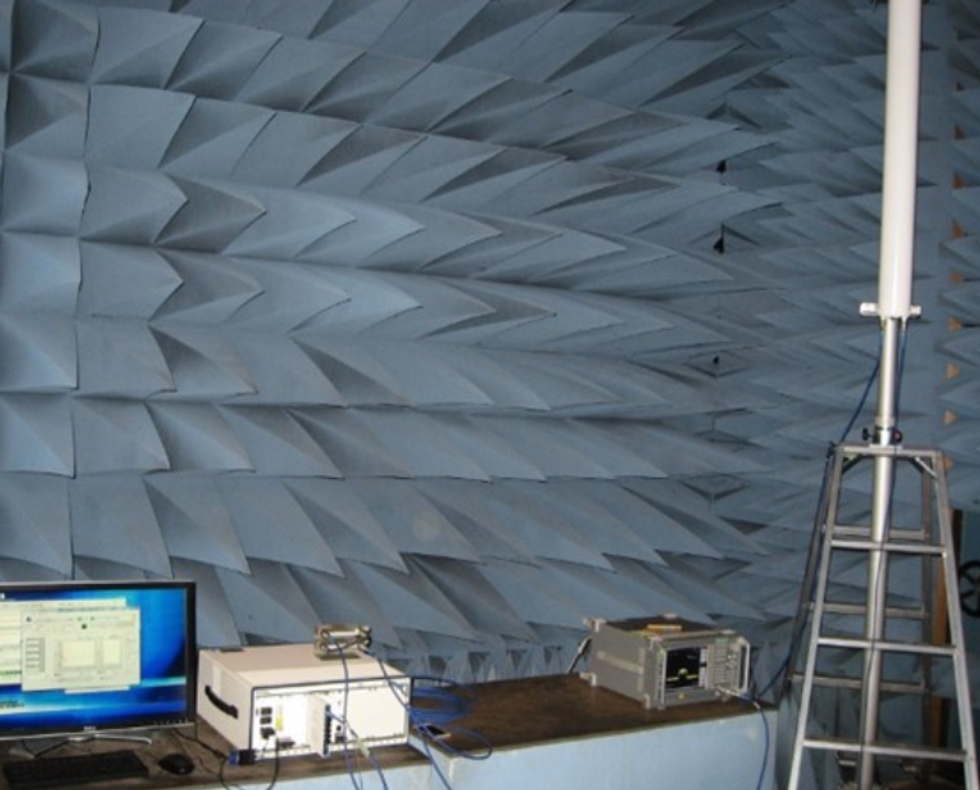

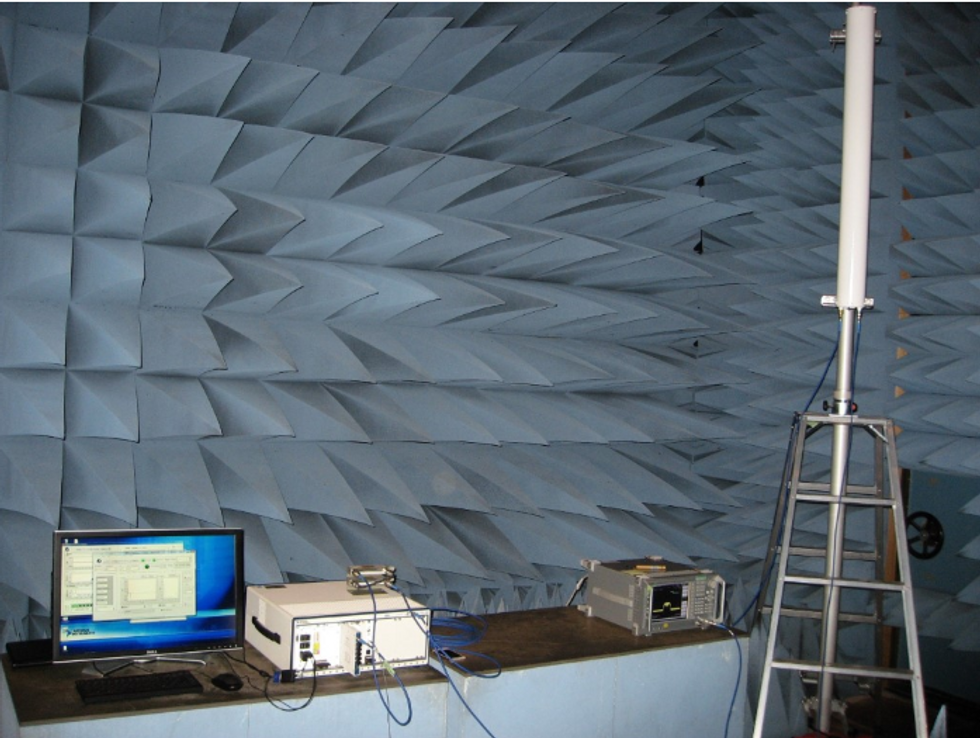

We decided to use COTS SDR products to resolve this issue. We used the USRP RIO SDR device for the UE (Figure 1). This product adapts to various wireless communication methods just by changing the software in accordance with system specifications without altering the hardware. For the base station, we used a combination of NI’s PXI products such as FlexRIO and the NI-5791 RF I/O module (Figure 2). Also, we decided to ask an NI partner, Dolphin System, to implement all the functions designed for the base station side while we focused on the UE.

Figure 1. Equipment for the UE side, in which five USRP RIO devices emulate five UEs. Each USRP RIO is combined with an external power amplifier. Transmission signals from the USRP RIO devices are amplified and then emitted from the antenna.

Figure 2. Equipment for the base station, built from PXI and FlexRIO hardware. The monitor to the left of the PXI system displays the user interface of the base station software that Dolphin System developed.

We provided the system specifications and the simulation code, written in The MathWorks, Inc. MATLAB® software, to Dolphin System. Dolphin System rewrote the code in LabVIEW. Another company implemented the signal separation algorithm that runs at the base station and provided it to us as register-transfer level (RTL) code for an FPGA. Dolphin System then implemented the RTL code on the FlexRIO FPGA-based hardware along with the other digital processing algorithms for the base station, such as frame synchronization and demodulation, which were written in LabVIEW and LabVIEW FPGA.

Computer simulation is an effective method, but it is extremely difficult to reproduce the real-world environment completely in a simulation. Implementing an algorithm on a prototype therefore almost always requires changing the conditions and some part of the algorithm. We also ran into multiple situations where what worked in simulation did not work on the prototype, and we dealt with those problems in cooperation with Dolphin System. For example, there were instances in which we could not attain the desired communication quality. Using the flexible I/O functions for synchronization timing signals and trigger signals that come with PXI, FlexRIO, and USRP (Universal Software Radio Peripheral), we isolated the problems caused by timing and could easily identify the cause. Under normal circumstances, the base station and each UE operate from their own internal clocks. When we connected the base station and the multiple UEs with a cable and synchronized their timing signals, the performance improved greatly, which immediately showed that the lack of timing synchronization between the base station and the UEs had caused the problem. Furthermore, when an IP block on the FPGAs lacked a function or did not match a specification, we came up with workarounds together with Dolphin System. Dolphin System had also already implemented functions that down-convert the radio signals and record the I/Q waveform data onto a hard disk. When problems such as the one above occurred, we could analyze this recorded I/Q waveform data as part of troubleshooting instead of running the normal reception processing at the base station.
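
Offline analysis of recorded I/Q data often starts by locating the frame boundary. As a minimal sketch of that idea, and not the frame synchronization actually implemented on the FlexRIO, the example below cross-correlates a capture with a known reference sequence and takes the strongest peak as the frame start. The Zadoff-Chu reference, its length, the noise level, and the helper names are all assumptions made for illustration.

```python
import numpy as np


def find_frame_start(iq, reference):
    """Return the sample index where `reference` best aligns within `iq`."""
    # np.correlate conjugates its second argument, so this acts as a
    # matched filter for the known reference sequence.
    corr = np.correlate(iq, reference, mode="valid")
    return int(np.argmax(np.abs(corr)))


def make_test_capture(offset=137, total=1000, noise_std=0.3, seed=1):
    """Build a synthetic capture: noise plus one reference at `offset`."""
    rng = np.random.default_rng(seed)
    n = np.arange(63)
    # Hypothetical reference: length-63 Zadoff-Chu sequence with root 5.
    reference = np.exp(-1j * np.pi * 5 * n * (n + 1) / 63)
    iq = noise_std * (rng.standard_normal(total)
                      + 1j * rng.standard_normal(total)) / np.sqrt(2)
    iq[offset:offset + reference.size] += reference
    return iq, reference


if __name__ == "__main__":
    iq, reference = make_test_capture()
    print("estimated frame start:", find_frame_start(iq, reference))
```

On a real recording, comparing the estimated frame start across successive frames is one way to see timing drift between unsynchronized clocks.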

We finished a system that could be used in experiments (the Phase 1 prototype) after about two to three months of development. Using SDR products such as USRP RIO and FlexRIO eliminated the need to build custom radio equipment. Furthermore, with LabVIEW we could use a graphical, intuitive approach to program not only the CPU of a PC but also the FPGA of the SDR hardware, so we could treat the hardware as wireless communication processing units. LabVIEW also let us develop and fix the system quickly. We had originally intended to build a fully custom system, but finishing development in such a short period that way seemed impossible.

When building a completely new system or test bed, the risks of designing or developing a fully custom radio from a circuit board are too great to take on because the development stage may require plenty of redesigning. Also, the system may need various adjustments after it has started going through complete runs or during the experiment. Choosing a COTS solution has significant advantages to easily performing measurements while making those changes. If adjustments are anticipated, the costs should be much more manageable than with a fully custom design. Moreover, for this system, it was essential that the base station execute the signal separation algorithm in real time. This signal processing algorithm has an extremely high computational load and cannot be executed in real time on the CPU of a PC. In that sense, an FPGA, which users can program freely, was very useful.

Future Development

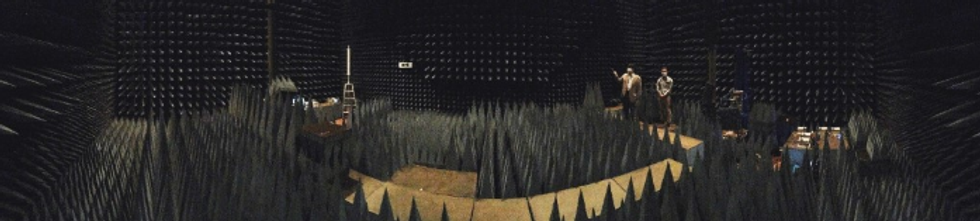

At this time, construction of the system (Phase 2 prototype) has already been completed. We were able to implement a function that verifies whether the data can be separated with a probability of X percent when signals are sent from multiple UEs to the base station. Although a few conditions need to be fulfilled, we verified that we could achieve simultaneous connections from our target of five UEs with a latency of 5 ms or less. Furthermore, the tests in the anechoic chamber (Figure 3) and the outdoor tests went smoothly.

Figure 3. The experiment in the anechoic chamber, with the PXI system acting as the base station on the left and the five USRP RIO devices acting as the UEs on the right.

We have presented at international and domestic conferences on our successful creation of a real system based on a theoretical study. In the future, we want to publish our outdoor test results in articles and submit them to 3GPP.

As for future development, we have decided to increase the number of simultaneous connections to ten UEs. However, there is a trade-off between the number of UEs and latency: as the number of connected devices increases, the separation processing takes longer. For the ten-UE case, we have discussed relaxing the latency requirement to more than 5 ms. Future initiatives will probably focus on increasing the number of UEs that can connect simultaneously and on improving reliability.

This project assumed the existence of a mobile communication system. However, at NICT, we focus on communication technology suited for IoT without being limited to 5G. Naturally, 5G can be used as the core technology of IoT. On the other hand, there are probably cases that call for purpose-built communication systems using the industrial, scientific, and medical (ISM) bands. We look forward to extending the technology we developed to those applications as well. We are eager to see the technology we worked on in R&D put to use in society, and we hope that it will serve as a system component technology beyond mobile phones.

Author Information:

Masayuki Ohdo (大堂 雅之)

National Institute of Information and Communications Technology (国立研究開発法人 情報通信研究機構)

3-4, Hikarino-Oka, Yokosuka

Kanagawa 239-0847

Japan

Tel: +81 46 847 5050