There's something persistently appealing about 8-bit computing: You can put together a self-contained system that's powerful enough to be user friendly but simple enough to build and program all by yourself. Most 8-bit machines built by hobbyists today are powered by a classic CPU from the heroic age of home computers in the 1980s, when millions of spare TVs were commandeered as displays. I'd built one myself, based on the Motorola 6809. I had tried to use as few chips as possible, yet I still needed 13 supporting ICs to handle things such as RAM or serial communications. I began to wonder: What if I ditched the classic CPU for something more modern yet still 8-bit? How low could I get the chip count?

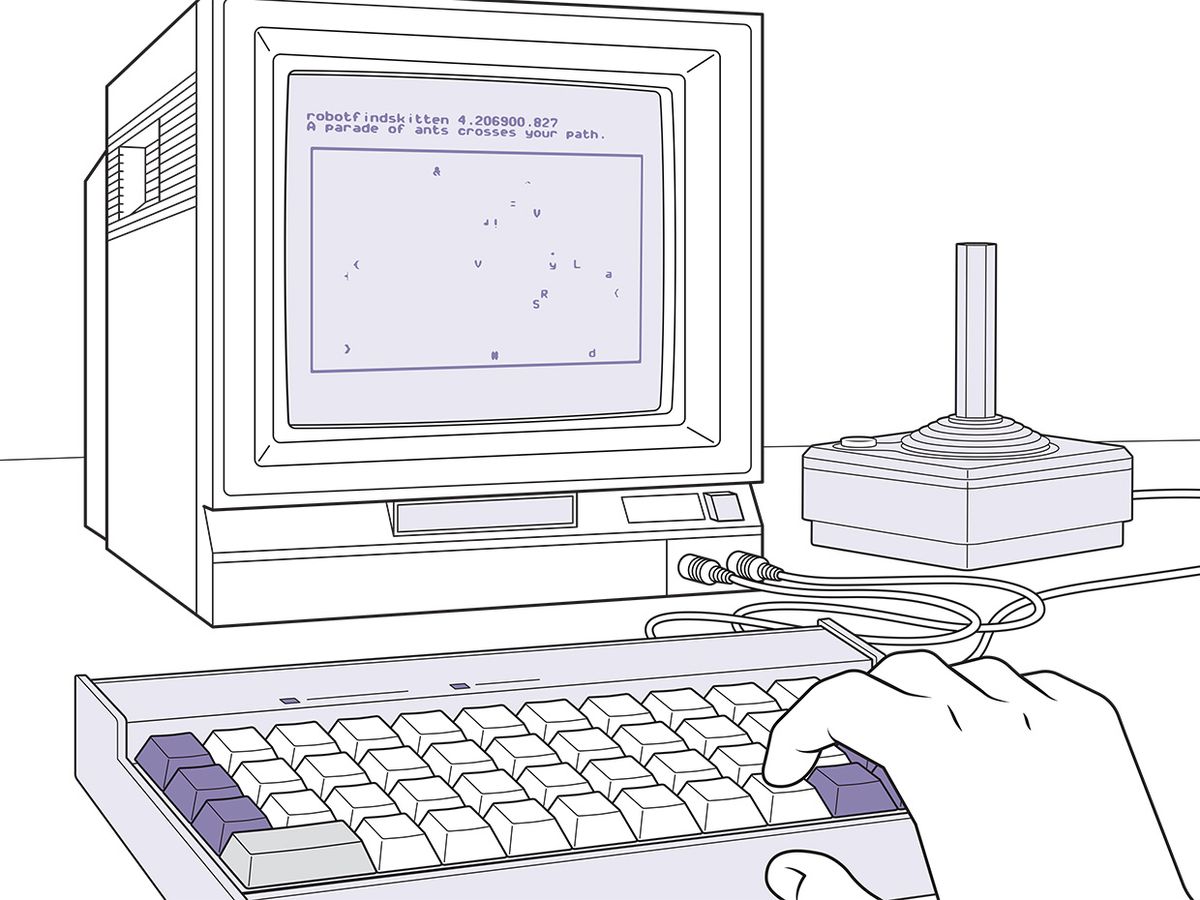

The result was the Amethyst. Just like a classic home computer, it has an integrated keyboard and can generate audio and video. It also has a built-in high-level programming language for users to write their own programs. And it uses just six chips—an ATMEGA1284P CPU, a USB interface, and four simple integrated circuits.

The ATMEGA1284P (or 1284P), introduced around 2008, has 128 kilobytes of flash memory for program storage and 16 kB of RAM. It can run at up to 20 megahertz, comes with built-in serial-interface controllers, and has 32 digital input/output pins.

Thanks to the onboard memory and serial interfaces, I could eliminate a whole slew of supporting chips. I could generate basic audio directly by toggling an I/O pin on and off again at different frequencies to create tones, albeit with the characteristic harshness of a square wave. But what about generating an analog video signal? Surely that would require some dedicated hardware?
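As a rough illustration of the tone-generation idea, here is a sketch that picks settings for an 8-bit hardware timer that flips a pin on every compare match. This is one common way to make square-wave tones on AVR chips, but whether the Amethyst uses a timer this way or toggles the pin in software is an assumption, as are the helper name `tone_settings` and the list of prescalers. The frequency formula is the AVR clear-timer-on-compare (CTC) toggle relation f = f_clk / (2 N (1 + OCR)).

```python
# Sketch (hypothetical helper): choose 8-bit timer settings so that toggling
# a pin on every compare match yields a square wave near a target pitch.
# Assumes the AVR CTC toggle relation f = f_clk / (2 * N * (1 + OCR)).

F_CLK = 14_318_180                  # Hz; the article's 14.318-MHz clock
PRESCALERS = (1, 8, 64, 256, 1024)  # assumed available prescaler values (N)


def tone_settings(freq_hz: float, f_clk: float = F_CLK) -> tuple[int, int, float]:
    """Return (prescaler, compare_value, actual_freq_hz) for an 8-bit timer."""
    for n in PRESCALERS:
        # Each compare match toggles the pin, so one full period takes two matches.
        ocr = round(f_clk / (2 * n * freq_hz)) - 1
        if 0 <= ocr <= 255:  # must fit in an 8-bit compare register
            return n, ocr, f_clk / (2 * n * (1 + ocr))
    raise ValueError("frequency out of range for an 8-bit timer")
```

For a 440-Hz tone at this clock, the sketch picks a prescaler of 64 and a compare value of 253, giving about 440.4 Hz. Trying the smallest prescaler first keeps the compare value as large as possible, which gives the finest pitch resolution.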

Then, toward the end of 2018, I came across the hack that Steve Wozniak used in the 1970s to give the Apple II its color-graphics capability. This hack was known as NTSC artifact color, and it relied on the fact that U.S. color TV broadcasting was itself a hack of sorts, one that dated back to the 1950s.

Originally, U.S. broadcast television was black and white only, using a fairly straightforward standard called NTSC (for National Television System Committee). Television cathode-ray tubes scanned an electron beam across the surface of a screen, row after row. The amplitude of the received video signal dictated the luminance of the beam at any given spot along a row. Then in 1953, NTSC was upgraded to support color television while remaining intelligible to existing black-and-white televisions.

Compatibility was achieved by encoding color information in the form of a high-frequency sinusoidal signal. The phase of this signal at a given point, relative to a reference signal (the “colorburst”) transmitted before each row began, determined the color's underlying hue. The amplitude of the signal determined how saturated the color was. This high-frequency color signal was then added to the relatively low-frequency luminance signal to create so-called composite video, still used today as an input on many TVs and cheaper displays for maker projects.
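To make the encoding concrete, the sketch below computes a simplified composite level as luminance plus a subcarrier sinusoid whose amplitude sets saturation and whose phase, measured against the colorburst, sets hue. It is only an idealized model: the function name is hypothetical, levels are normalized to the range 0 to 1, and sync pulses, blanking, the burst itself, and the details of real NTSC color modulation are all left out. The subcarrier frequency, 315/88 MHz (about 3.58 MHz), is the standard NTSC value.

```python
import math

F_SC = 315e6 / 88  # NTSC color subcarrier in Hz (about 3.579545 MHz)


def composite_level(t: float, luma: float, saturation: float, hue_deg: float) -> float:
    """Idealized composite signal at time t (seconds) for one flat-colored span.

    Luminance is the low-frequency part; the color rides on top as a sinusoid
    at F_SC whose amplitude encodes saturation and whose phase (relative to the
    colorburst, taken here as phase 0 at t = 0) encodes hue.
    """
    phase = 2 * math.pi * F_SC * t + math.radians(hue_deg)
    return luma + saturation * math.sin(phase)


# Four samples per subcarrier cycle, i.e. sampling at 4 * F_SC (about 14.318 MHz).
samples = [composite_level(k / (4 * F_SC), 0.5, 0.2, 90.0) for k in range(4)]
```

Averaging the four samples recovers the luminance (0.5 here); the fast up-and-down swing around that average is the part a color set decodes into hue and saturation.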

To a black-and-white TV, the color signal looks like noise and is largely ignored. But a color TV can separate the color signal from the luminance signal with filtering circuitry.

In the 1970s, engineers realized that this filtering circuitry could be used to great advantage by consumer computers because it permitted a digital, square-wave signal to duplicate much of the effect of a composite analog signal. A stream of 0s sent by a computer to a television as the CRT scanned along a row would be interpreted by the TV as a constant low analog voltage, representing black. A stream of all 1s would be seen as a constant high voltage, producing pure white. But at a sufficiently fast bit rate, more-complex binary patterns would be picked up by the TV's filtering circuits as a color signal. This trick allowed the Apple II to display up to 16 colors.
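The trick can be illustrated by asking what a color decoder would extract from a short bit pattern repeated once per subcarrier cycle. In the sketch below (an idealized model with a hypothetical function name, not the Apple II's or the Amethyst's actual circuitry), the average of the bits stands in for luminance, and a single-bin discrete Fourier transform at the subcarrier frequency gives the amplitude (saturation) and phase (hue) of the color component. Hue is measured relative to the start of the pattern rather than to a real colorburst.

```python
import cmath
import math
from collections.abc import Sequence


def artifact_color(bits: Sequence[int]) -> tuple[float, float, float]:
    """Return (luma, saturation, hue_deg) for a bit pattern that repeats
    exactly once per color-subcarrier cycle."""
    n = len(bits)
    luma = sum(bits) / n  # low-frequency content: the average level
    # Fourier coefficient at one cycle per pattern, i.e. at the subcarrier.
    chroma = 2 / n * sum(
        b * cmath.exp(-2j * math.pi * k / n) for k, b in enumerate(bits)
    )
    saturation = abs(chroma)
    # A pattern with no subcarrier component is a gray; its hue is undefined.
    hue = math.degrees(cmath.phase(chroma)) % 360 if saturation > 1e-9 else 0.0
    return luma, saturation, hue


# Four bits per cycle (a 14.318-MHz bit rate) gives 2**4 = 16 patterns.
for pattern in ["0000", "1000", "1100", "0110", "1010", "1111"]:
    print(pattern, artifact_color([int(c) for c in pattern]))
```

In this model, rotating a pattern by one bit (1100 to 0110) keeps luminance and saturation but shifts the hue by 90 degrees, while 1010 has no component at the subcarrier and so comes out gray. With only two bits per cycle, the same function yields just four results: black (00), white (11), and two hues 180 degrees apart (10 and 01).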

At first I planned to toggle an I/O pin very quickly to generate the video signal directly. I soon realized, however, that with my 1284P running at a clock speed of 14.318 MHz, I could not switch a pin fast enough to display more than four colors. Even the built-in serial interfaces take two clock cycles to send each bit, which limited my bit rate to 7.159 MHz. Because 14.318 MHz is exactly four times the 3.58-MHz NTSC color frequency, that rate yields only two bits per color cycle, and so only four possible patterns. (The Apple II used fast direct memory access to connect its external memory chips to the video output while its CPU was busy doing internal processing, but as my computer's RAM is integrated into the chip, this approach wasn't an option.)

So I looked in my drawers and pulled out four 7400-series logic chips: two multiplexers and two parallel-to-serial shift registers. I could set eight pins of the 1284P at once and feed those bits in parallel to the multiplexers and shift registers, which would convert them into a high-speed serial bitstream. In this way I can generate bits fast enough to produce some 215 distinct colors on screen. The cost is that keeping up with the video scan line absorbs a lot of computing capacity: Only about 25 percent of the CPU's time is available for other tasks.
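The bit-rate arithmetic, and the job the shift registers do, can be sketched in a few lines. The model below is a simplification with hypothetical names: it treats a shift register as a generic parallel-in, serial-out part that latches eight bits at once and shifts them out most-significant bit first (an assumption), and it ignores the multiplexers and all timing details of the real board.

```python
F_SC = 315e6 / 88   # NTSC color subcarrier, about 3.579545 MHz
F_CLK = 4 * F_SC    # about 14.318 MHz, four times the subcarrier


def bits_per_color_cycle(bit_rate_hz: float) -> float:
    """How many bits fit into one cycle of the color subcarrier."""
    return bit_rate_hz / F_SC


def max_patterns(bit_rate_hz: float) -> int:
    """Number of distinct bit patterns that fit into one color cycle."""
    return 2 ** round(bits_per_color_cycle(bit_rate_hz))


def shift_out(byte: int) -> list[int]:
    """Model a parallel-in, serial-out shift register (assumed MSB first):
    eight bits are latched in one step, then emitted one per shift clock."""
    register = byte & 0xFF
    bits = []
    for _ in range(8):
        bits.append((register >> 7) & 1)   # current output bit
        register = (register << 1) & 0xFF  # shift the next bit toward the output
    return bits


# The on-chip serial interface needs two CPU clocks per bit.
print(max_patterns(F_CLK / 2))   # prints 4: two bits per color cycle
print(shift_out(0b1100_1100))    # one parallel byte becomes a serial bitstream
```

The four-color limit falls out directly: two bits per cycle allow only 2² = 4 patterns. Latching a whole byte from eight CPU pins in one step, and letting external logic do the shifting, is what frees the output bit rate from the two-clocks-per-bit limit of the on-chip serial hardware.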

Consequently, I needed a lightweight programming environment for users, which led me to choose Forth over the traditional Basic. Forth is an old language often used in embedded systems, and it has the nice feature of being both interactive and able to compile code efficiently. You can do a lot in a very small amount of space. Because the 1284P cannot execute machine code directly from its RAM (like other AVR microcontrollers, it fetches instructions only from flash), a user's code is instead compiled to an intermediate bytecode. This bytecode is then fed as data to a virtual machine running from the 1284P's flash memory. The virtual machine was written in assembly language and hand-tuned to make it as fast as possible.
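The bytecode approach can be illustrated with a toy stack machine. Everything here is hypothetical: the opcode set, its numbering, the 16-bit cell width, and the `run` function are illustrative assumptions, not the Amethyst's actual bytecode or its virtual machine (which is written in AVR assembly). The point is only that compiled Forth can be stored as plain data and interpreted by a fixed loop that itself lives in flash.

```python
from enum import IntEnum


class Op(IntEnum):
    """Hypothetical opcode set for a tiny Forth-style stack machine."""
    LIT = 0   # push the next byte as a literal
    ADD = 1   # ( a b -- a+b )
    SUB = 2   # ( a b -- a-b )
    MUL = 3   # ( a b -- a*b )
    DUP = 4   # ( a -- a a )
    DROP = 5  # ( a -- )
    JZ = 6    # ( a -- ) jump to the address in the next byte if a == 0
    JMP = 7   # jump to the address in the next byte
    HALT = 8  # stop and return the data stack


MASK = 0xFFFF  # assume 16-bit cells, as is common for Forth on 8-bit CPUs


def run(code: bytes) -> list[int]:
    """Fetch-decode-execute loop: the bytecode is just data being read."""
    stack: list[int] = []
    pc = 0
    while True:
        op = code[pc]
        pc += 1
        if op == Op.LIT:
            stack.append(code[pc])
            pc += 1
        elif op in (Op.ADD, Op.SUB, Op.MUL):
            b, a = stack.pop(), stack.pop()
            result = a + b if op == Op.ADD else a - b if op == Op.SUB else a * b
            stack.append(result & MASK)  # wrap around like a 16-bit cell
        elif op == Op.DUP:
            stack.append(stack[-1])
        elif op == Op.DROP:
            stack.pop()
        elif op == Op.JZ:
            target = code[pc]
            pc = target if stack.pop() == 0 else pc + 1
        elif op == Op.JMP:
            pc = code[pc]
        elif op == Op.HALT:
            return stack
        else:
            raise ValueError(f"unknown opcode {op} at address {pc - 1}")


# "2 3 + 4 *" compiled by hand into bytecode.
program = bytes([Op.LIT, 2, Op.LIT, 3, Op.ADD, Op.LIT, 4, Op.MUL, Op.HALT])
print(run(program))  # prints [20]
```

A real implementation would also need a return stack for calling user-defined words, and on the 1284P the dispatch loop would be hand-written assembly rather than a chain of comparisons.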

As an engineer at Glowforge, I have access to advanced laser-cutting machines, so it was a simple matter to design and build a wooden case (something of a homage to the wood-grain finish of the Atari 2600). The mechanical keyboard switches are soldered directly onto the Amethyst's single printed circuit board. One peculiarity of the keyboard is that it has no space bar; instead, a space button sits above the Enter key.

Complete schematics, PCB files, and system code are available in my GitHub repository, so you can build an Amethyst of your own or improve on my design. Can you shave a chip or two off the count?

This article appears in the April 2020 print issue as “8 Bits, 6 Chips.”