Artificial intelligence systems based on neural networks have had quite a string of recent successes: One beat human masters at the game of Go, another made up beer reviews, and another made psychedelic art. But taking these supremely complex and power-hungry systems out into the real world and installing them in portable devices is no easy feat. This February, however, at the IEEE International Solid-State Circuits Conference in San Francisco, teams from MIT, Nvidia, and the Korea Advanced Institute of Science and Technology (KAIST) brought that goal closer. They showed off prototypes of low-power chips that are designed to run artificial neural networks that could, among other things, give smartphones a bit of a clue about what they are seeing and allow self-driving cars to predict pedestrians’ movements.

Until now, neural networks—learning systems that operate analogously to networks of connected brain cells—have been much too energy intensive to run on the mobile devices that would most benefit from artificial intelligence, like smartphones, small robots, and drones. The mobile AI chips could also improve the intelligence of self-driving cars without draining their batteries or compromising their fuel economy.

Smartphone processors are on the verge of running some powerful neural networks as software. Qualcomm is sending its next-generation Snapdragon smartphone processor to handset makers with a software-development kit to implement automatic image labeling using a neural network. This software-focused approach is a landmark, but it has its limitations. For one thing, the phone’s application can’t learn anything new by itself; it can only be trained by much more powerful computers. And neural-network experts think that more sophisticated functions will be possible if they can bake neural-net–friendly features into the circuits themselves.

The larger a neural network is, the more computational layers it has, and the more energy it takes to run, says Vivienne Sze, an electrical engineering professor at MIT. No matter the application, the main drain on power is the transfer of data between processor and memory. This is a particular problem for convolutional neural networks, which are used for image analysis. (The “convolutional” in the name refers to the operation at their core: sliding small filters across an image and computing a weighted sum at every position, which adds up to a great many steps.)
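To see why data movement matters so much, consider a single convolutional layer written as plain loops. The sketch below is a generic textbook convolution in Python with NumPy, not Eyeriss's or any other chip's dataflow; the function names `conv2d_naive` and `count_input_reads` and the toy 6×6 image are hypothetical. It counts the multiply-accumulate (MAC) operations and how often each input pixel is needed.

```python
from typing import Tuple

import numpy as np


def conv2d_naive(image: np.ndarray, kernel: np.ndarray) -> Tuple[np.ndarray, int]:
    """'Valid' 2D convolution (no padding) written as explicit loops.

    Like most CNN frameworks, this computes cross-correlation (the kernel
    is not flipped). Returns the output map and the number of MACs.
    """
    ih, iw = image.shape
    kh, kw = kernel.shape
    oh, ow = ih - kh + 1, iw - kw + 1
    out = np.zeros((oh, ow))
    macs = 0
    for y in range(oh):
        for x in range(ow):
            acc = 0.0
            for dy in range(kh):
                for dx in range(kw):
                    acc += image[y + dy, x + dx] * kernel[dy, dx]  # one MAC
                    macs += 1
            out[y, x] = acc
    return out, macs


def count_input_reads(image_shape: Tuple[int, int],
                      kernel_shape: Tuple[int, int]) -> np.ndarray:
    """How many times each input pixel is used by the loops above."""
    ih, iw = image_shape
    kh, kw = kernel_shape
    reads = np.zeros((ih, iw), dtype=int)
    for y in range(ih - kh + 1):
        for x in range(iw - kw + 1):
            reads[y:y + kh, x:x + kw] += 1  # every pixel under the window is used once
    return reads


image = np.arange(36, dtype=float).reshape(6, 6)  # toy 6x6 "image"
kernel = np.ones((3, 3))                          # toy 3x3 filter
out, macs = conv2d_naive(image, kernel)
reads = count_input_reads(image.shape, kernel.shape)
print(macs, int(reads.sum()), image.size)  # 144 144 36
```

Each of the 144 MACs needs an input pixel, but there are only 36 distinct pixels, and interior ones are used up to nine times. A design that fetched every operand from main memory would make 144 trips; one that keeps recently used values in a small local buffer could, in this toy case, fetch each pixel once. Real layers apply many filters to the same inputs, so the available reuse is typically much larger, though how much a chip captures depends on its dataflow.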

For the human brain, drawing on memories to make associations comes naturally. A 3-year-old child can easily tell you that a photo shows a cat lying on a bed. Convolutional neural networks can also label all the objects in an image. First, a system like the image-recognition champ AlexNet might find the edges of objects in the photo, then begin to recognize those objects one by one—cat, bed, blanket—and finally deduce that the scene is taking place indoors. Yet even doing this kind of simple labeling is very energy intensive.

Neural networks, particularly those used for image analysis, are typically run on graphics processing units (GPUs), and that’s what Snapdragon will use for its Scene Detect feature. GPUs are already specialized for image processing, but much more can be done to make circuits that run neural networks efficiently, says Sze.

Sze, working with Joel Emer, also an MIT computer science professor and senior distinguished research scientist at Nvidia, developed Eyeriss, the first custom chip designed to run a state-of-the-art convolutional neural network. They showed they could run AlexNet, a particularly demanding algorithm, using less than one-tenth the power of a typical mobile GPU: Instead of consuming 5 to 10 watts, Eyeriss used 0.3 W.
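The wattages quoted above bear out the “less than one-tenth” comparison:

$$
\frac{0.3\ \text{W}}{10\ \text{W}} = 0.03, \qquad \frac{0.3\ \text{W}}{5\ \text{W}} = 0.06
$$

That puts Eyeriss at roughly 3 to 6 percent of a typical mobile GPU's power draw, or about one-thirty-third to one-seventeenth. Energy per image would also depend on how quickly each processor finishes the job.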

Sze and Emer’s chip saves energy by placing a dedicated memory bank near each of its 168 processing engines. The chip fetches data from a larger primary memory bank as seldom as possible. Eyeriss also compresses the data it sends and uses statistical tricks to skip certain steps that a GPU would normally do.
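The article doesn't spell out these statistical tricks. Published descriptions of Eyeriss report that it exploits the many zeros neural-network layers produce (the common ReLU activation turns every negative value into zero): it compresses runs of zeros before moving data off-chip and skips arithmetic whose input is zero. The Python sketch below illustrates both ideas under that assumption. The encoding format and the names `relu`, `rle_encode_zeros`, `rle_decode`, and `zero_skipping_dot` are hypothetical simplifications, not the chip's actual circuits.

```python
from typing import List, Sequence, Tuple


def relu(xs: Sequence[int]) -> List[int]:
    """ReLU activation: negative values become zero."""
    return [max(0, x) for x in xs]


def rle_encode_zeros(values: Sequence[int]) -> List[Tuple[int, int]]:
    """Encode as (number of zeros skipped, next nonzero value) pairs.

    Trailing zeros are implied by the original length, which the
    decoder is given separately.
    """
    pairs: List[Tuple[int, int]] = []
    zeros = 0
    for v in values:
        if v == 0:
            zeros += 1
        else:
            pairs.append((zeros, v))
            zeros = 0
    return pairs


def rle_decode(pairs: Sequence[Tuple[int, int]], length: int) -> List[int]:
    """Inverse of rle_encode_zeros."""
    out: List[int] = []
    for zeros, v in pairs:
        out.extend([0] * zeros)
        out.append(v)
    out.extend([0] * (length - len(out)))  # restore trailing zeros
    return out


def zero_skipping_dot(activations: Sequence[int],
                      weights: Sequence[int]) -> Tuple[int, int]:
    """Dot product that performs a MAC only when the activation is nonzero.

    Returns (result, number of MACs actually performed).
    """
    total = 0
    macs = 0
    for a, w in zip(activations, weights):
        if a == 0:
            continue  # product would be zero, so skip the multiply-accumulate
        total += a * w
        macs += 1
    return total, macs


acts = relu([-2, -1, 3, -4, -1, -3, 5, -6])  # [0, 0, 3, 0, 0, 0, 5, 0]
weights = [1, 2, 3, 4, 5, 6, 7, 8]
packed = rle_encode_zeros(acts)              # [(2, 3), (3, 5)]
print(zero_skipping_dot(acts, weights))      # (44, 2): 2 MACs instead of 8
```

Eight activations shrink to two pairs, and only two of eight multiplications are performed. The savings scale with how sparse the data actually is, which is why such tricks are described as statistical.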

Lee-Sup Kim, a professor at KAIST and head of its Multimedia VLSI Laboratory, says these circuits built for neural-network–driven image analysis will also be useful in airport face-recognition systems and robot navigation. At the conference, Kim’s lab demonstrated a chip designed to be a general visual processor for the Internet of Things. Like Eyeriss, the KAIST design minimizes data movement by bringing memory and processing closer together. It consumes just 45 milliwatts, though to be fair it runs a less-complex network than Eyeriss does. It saves energy both by limiting data movement and by lightening the computational load. Kim’s group observed that 99 percent of the numbers used in a key calculation require only 8 bits, so they were able to limit the resources devoted to it.
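The article doesn't say which calculation Kim's group narrowed or how. One common way to exploit such an observation, assumed here purely for illustration, is to give the hardware a cheap 8-bit datapath for the common case and a wider fallback for the rare values that don't fit. The Python sketch below (hypothetical names `fits_in_int8` and `mixed_width_dot`; integer inputs assumed) emulates that split in software and reports what fraction of products took the narrow path.

```python
import numpy as np

INT8_MIN, INT8_MAX = -128, 127  # range of a signed 8-bit integer


def fits_in_int8(values: np.ndarray) -> np.ndarray:
    """Boolean mask of entries representable in 8 signed bits."""
    return (values >= INT8_MIN) & (values <= INT8_MAX)


def mixed_width_dot(a, b):
    """Integer dot product split between an 8-bit path and a wide path.

    Returns (result, fraction of products handled by the 8-bit path).
    """
    a = np.asarray(a, dtype=np.int64)
    b = np.asarray(b, dtype=np.int64)
    narrow = fits_in_int8(a) & fits_in_int8(b)

    # 8-bit x 8-bit products always fit in 16 bits, so a small multiplier suffices.
    narrow_products = a[narrow].astype(np.int16) * b[narrow].astype(np.int16)
    # The rare operands outside the 8-bit range go through a full-width multiplier.
    wide_products = a[~narrow] * b[~narrow]

    result = int(narrow_products.sum(dtype=np.int64) + wide_products.sum())
    return result, float(narrow.mean())


result, narrow_fraction = mixed_width_dot([3, -7, 120, 500], [2, 4, -1, 3])
print(result, narrow_fraction)  # 1358 0.75
```

Under this assumption, the payoff in silicon comes from the narrow multipliers being much smaller and cheaper than full-width ones; the fallback only has to handle the exceptions.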

“It’s a challenge deciding between generality versus efficiency,” says Nvidia’s Emer. Sze, Emer, and Kim are trying to make general-purpose neural-network chips for image analysis, a sort of NNPU, or neural-network processing unit. Another KAIST professor, Hoi-Jun Yoo, head of the System Design Innovation and Application Research Center, favors a more specialized, application-driven approach to making neural-network hardware.

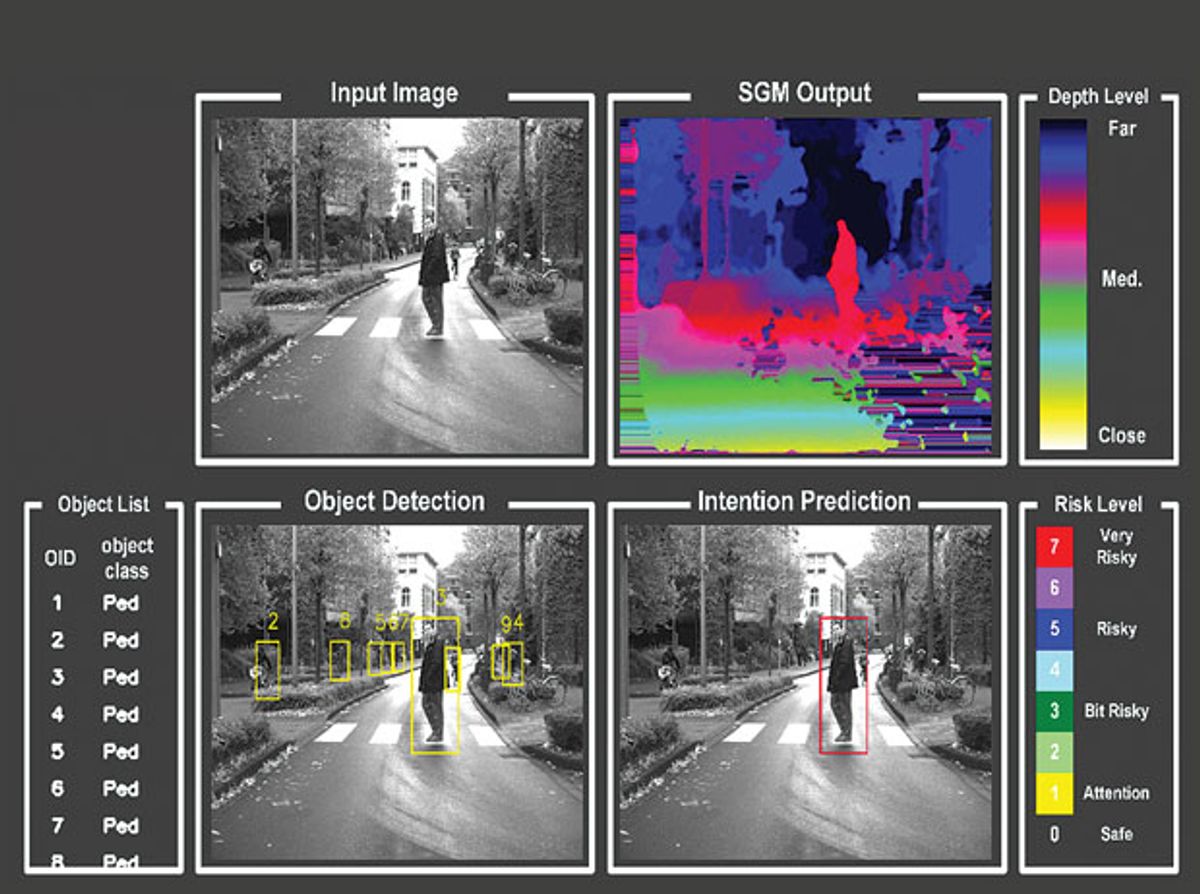

One system Yoo described was meant for self-driving cars. It’s designed to run convolutional networks that identify objects in the visual field and also to run a different type of algorithm, called a recurrent neural network. The “recurrent” refers to connections that feed a network’s earlier state back into it, giving it a kind of memory; that makes such networks good at analyzing video, speech, and other information that changes over time. In particular, Yoo’s group wanted to make a chip that would run a recurrent neural network that tracks a moving object and predicts its intention: Is a pedestrian on the sidewalk going to enter the roadway? The system, which consumes 330 mW, can predict the intention of 20 objects at once, almost in real time, with a lag of just 1.24 milliseconds.
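The feedback that makes a network recurrent fits in a few lines. The sketch below is a generic, textbook (Elman-style) recurrent layer in Python with NumPy. The article doesn't describe the architecture of Yoo's Intention Prediction Processor, and the weights here are random and untrained, so the example shows only how the hidden state carries history, not how intention is predicted. The function name `rnn_forward` and the toy position tracks are hypothetical.

```python
import numpy as np


def rnn_forward(xs: np.ndarray, W_x: np.ndarray, W_h: np.ndarray,
                b: np.ndarray) -> np.ndarray:
    """Run a simple recurrent layer over a sequence.

    xs has shape (T, input_dim); returns hidden states of shape (T, hidden_dim).
    """
    h = np.zeros(W_h.shape[0])
    states = []
    for x in xs:
        # The new state mixes the current input with the previous state;
        # this feedback loop is what makes the layer "recurrent".
        h = np.tanh(W_x @ x + W_h @ h + b)
        states.append(h)
    return np.stack(states)


rng = np.random.default_rng(0)
input_dim, hidden_dim = 2, 4  # input: hypothetical (x, y) position of a tracked object
W_x = rng.normal(scale=0.5, size=(hidden_dim, input_dim))  # untrained, random
W_h = rng.normal(scale=0.5, size=(hidden_dim, hidden_dim))
b = np.zeros(hidden_dim)

# Two toy tracks that end at the same position but got there differently.
moving = np.array([[0.0, 0.0], [0.5, 0.0], [1.0, 0.0]])
still = np.array([[1.0, 0.0], [1.0, 0.0], [1.0, 0.0]])
h_moving = rnn_forward(moving, W_x, W_h, b)
h_still = rnn_forward(still, W_x, W_h, b)
print(np.abs(h_moving[-1] - h_still[-1]).max())  # nonzero: the state remembers the path
```

Because the final input is identical in both tracks, any difference in the last hidden state comes entirely from the earlier inputs, which is the property a trained network would use to judge where an object is heading.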

Another difference between Yoo’s system and the MIT chip is that his hardware, which he calls an Intention Prediction Processor, continues its training while on the road. Yoo’s designs integrate what he calls a deep-learning core, a circuit that’s designed to add to the neural nets’ training. For convolutional neural networks, this deep-learning training is typically done on powerful computers. But Yoo says that our devices should adapt to us and learn on the go. “It’s impossible to preprogram all events. The real world is diverse and almost impossible to predict,” says Yoo.

This article appears in the March 2016 print issue as “Neural Networks on the Go.”