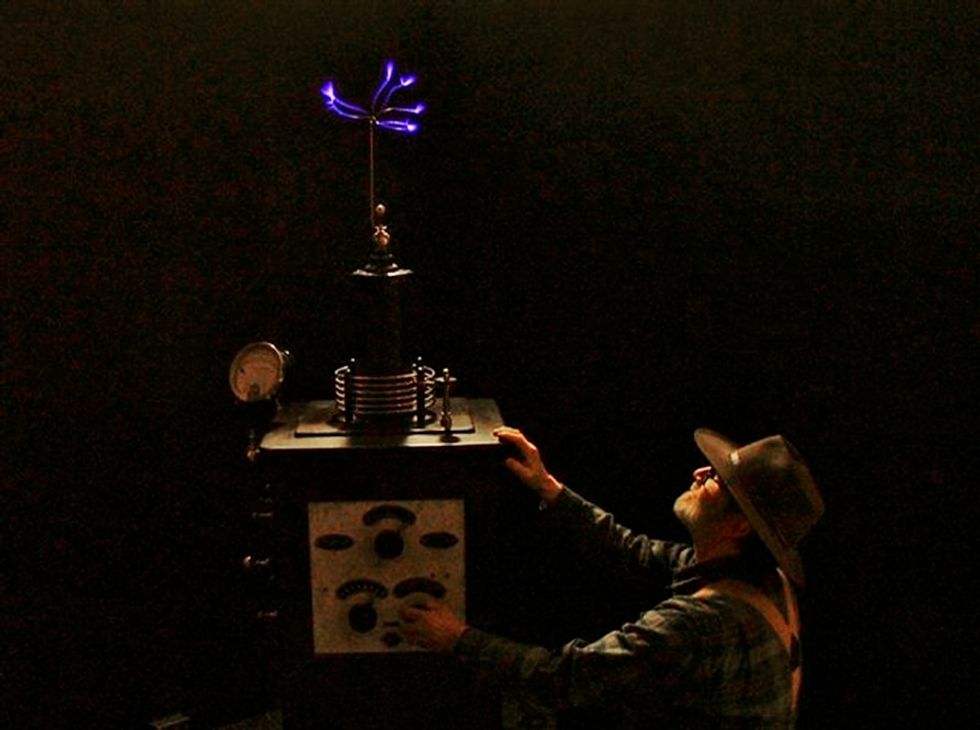

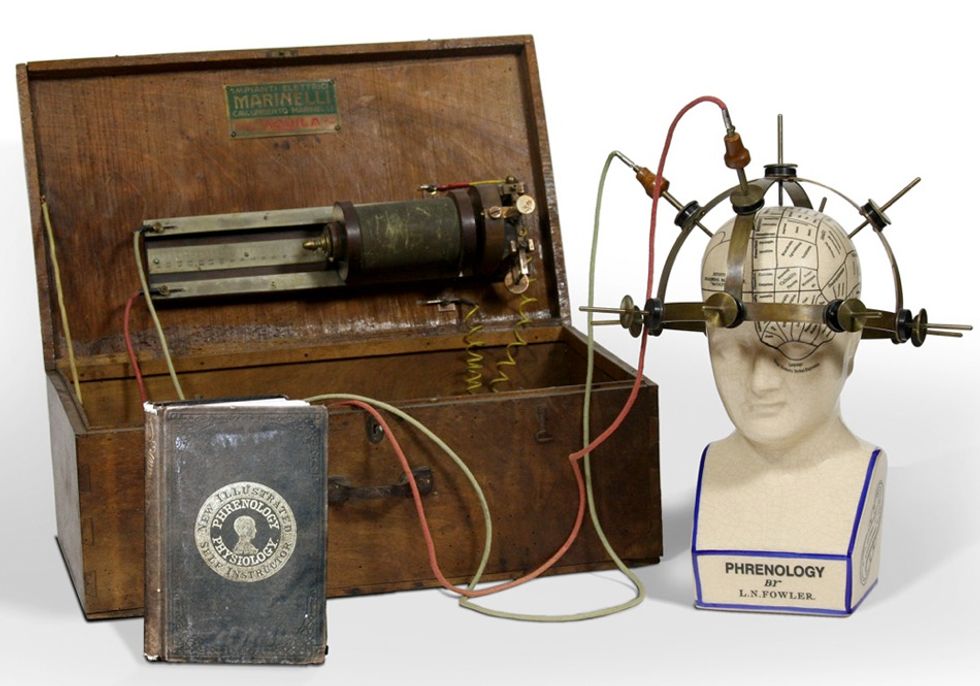

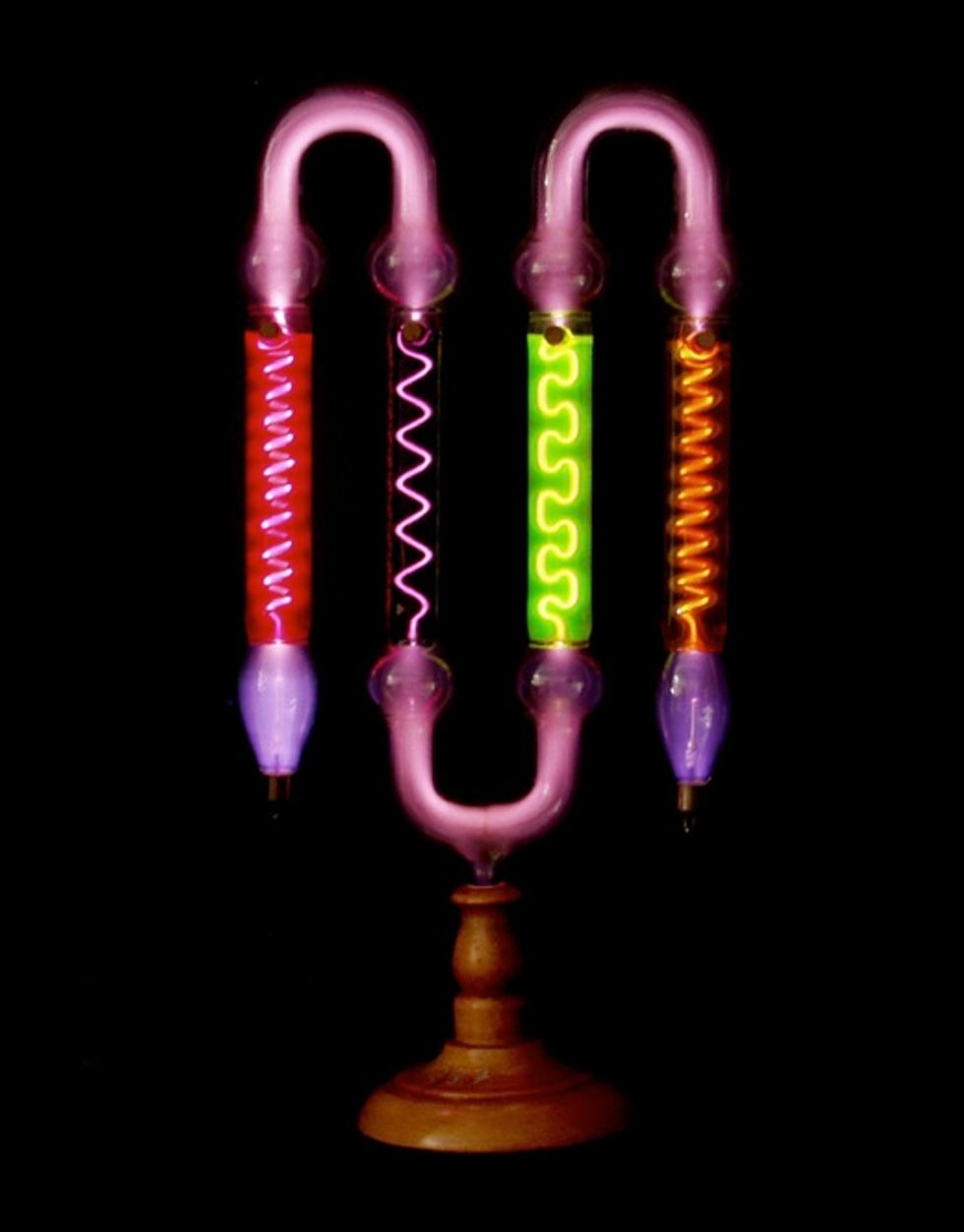

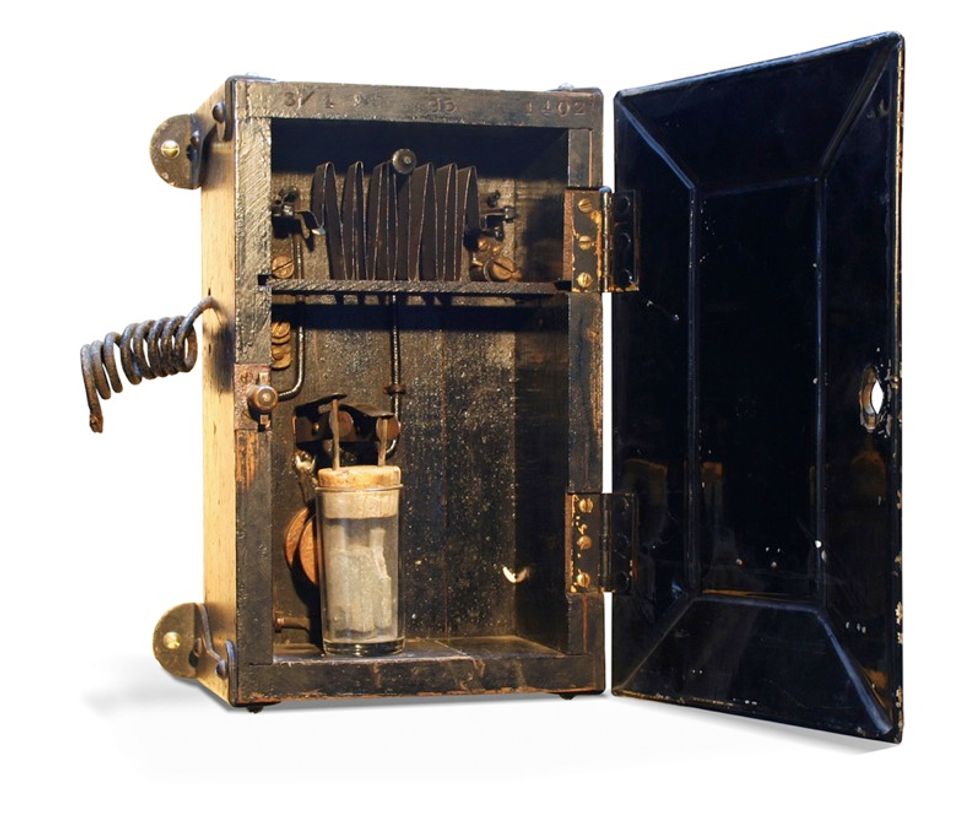

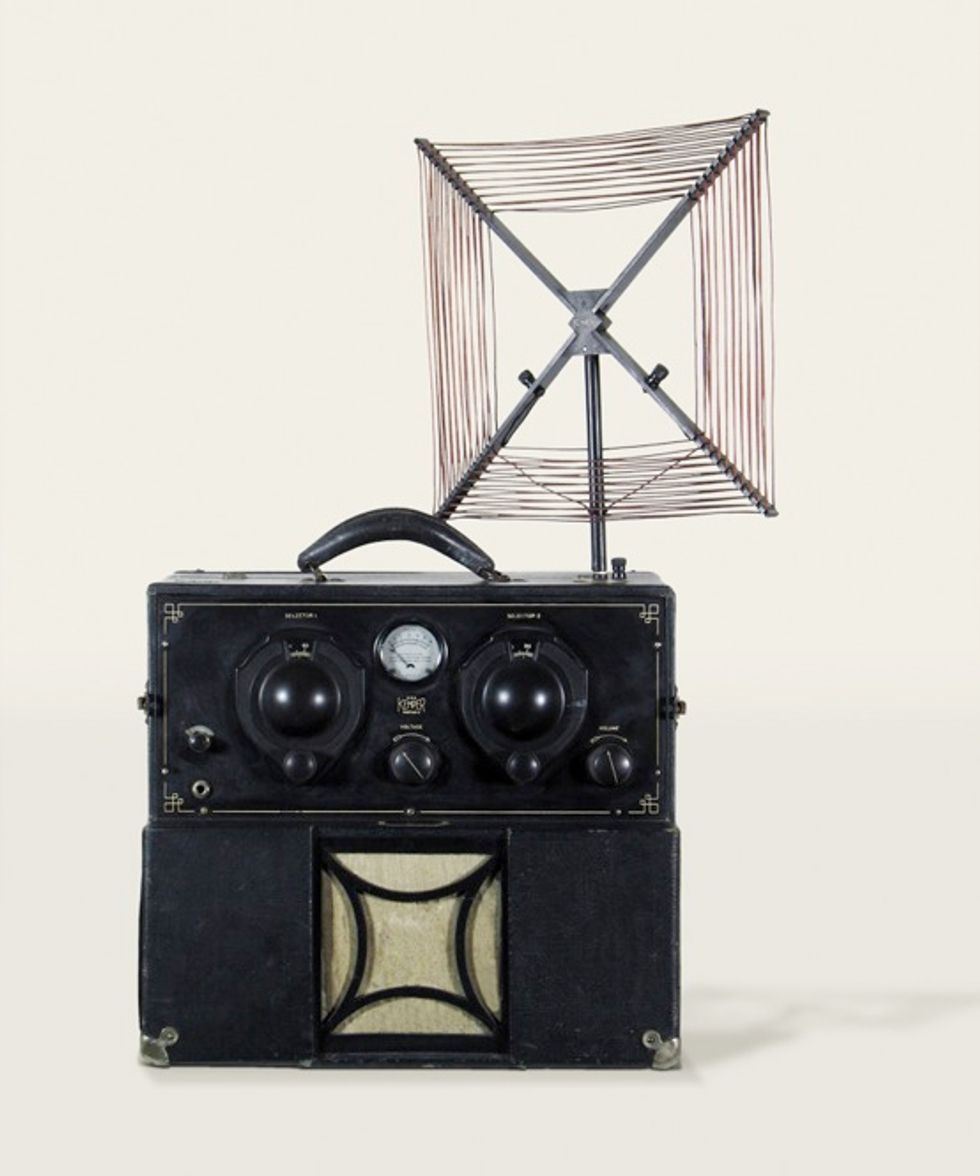

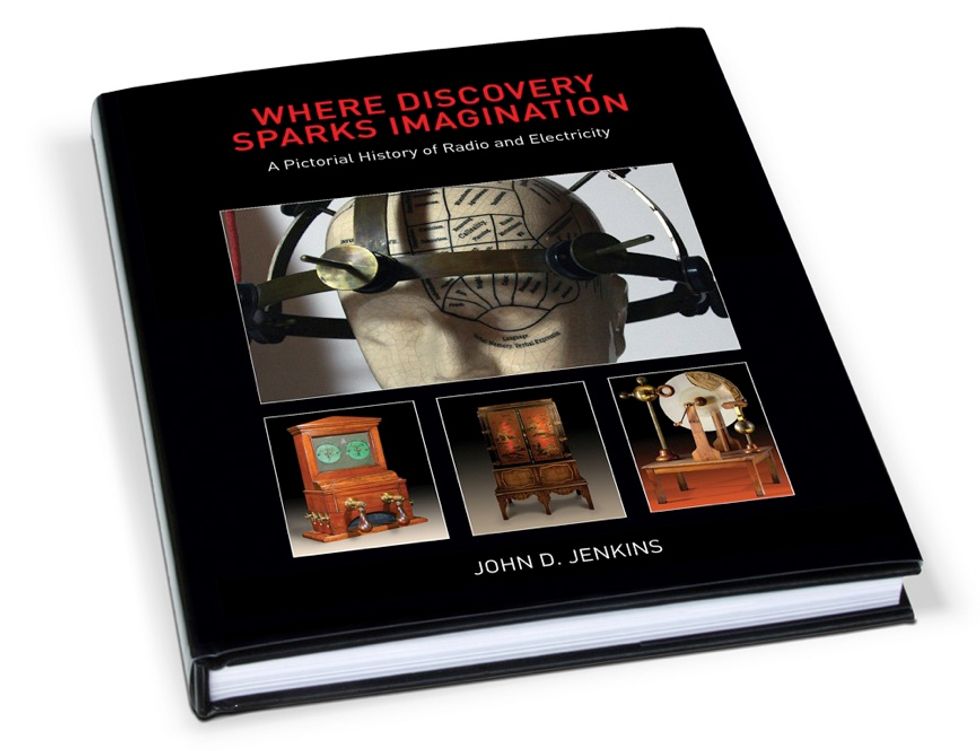

RADIO DAYS: The American Museum of Radio and Electricity, located in Bellingham, Wash., contains a unique collection of interactive galleries and artifacts. Here, curator Jonathan Winter adjusts the high-frequency electrostatic display on a rare combination Oudin/Tesla coil. These photographs are from the museum’s new book: Where Discovery Sparks Imagination: A Pictorial History of Radio and Electricity.

The Conversation (0)