The health care industry may seem the ideal place to deploy artificial intelligence systems. Each medical test, doctor’s visit, and procedure is documented, and patient records are increasingly stored in electronic formats. AI systems could digest that data and draw conclusions about how to provide better and more cost-effective care.

Plenty of researchers are building such systems: Medical and computer science journals are full of articles describing experimental AIs that can parse records, scan images, and produce diagnoses and predictions about patients’ health. However, few—if any—of these systems have made their way into hospitals and clinics.

So what’s the holdup? It’s not technical, says Shinjini Kundu, a medical researcher and physician at the University of Pittsburgh School of Medicine. “The barrier is the trust aspect,” she says. “You may have a technology that works, but how do you get humans to use it and rely on it?”

Most medical AI systems operate as “black boxes” that take in data and spit out answers. Doctors are understandably wary about basing treatments on reasoning they don’t understand, so researchers are trying a variety of techniques to create systems that show their work.

Paint Us a Picture

Kundu, who described her research at the United Nations’ recent AI for Good conference, is working on AI that analyzes medical images and then explains what it sees. Her system starts with a machine-learning component that examines images such as MRI scans and discovers patterns of interest to doctors.

In Kundu’s most recent experiments, the AI analyzed knee MRIs and predicted which knees would develop osteoarthritis within three years. Then, using a technique called “generative modeling,” the AI created a new image—its version of an MRI scan showing a knee that was guaranteed to develop that condition. “We enabled a black box classifier to generate an image that demonstrates the patterns it’s seeing as it makes its diagnosis,” Kundu explains.

Photos: Top: Osteoarthritis Initiative (2); Bottom: University of Pittsburgh School of Medicine

The Power to Predict: Human eyes can’t tell the difference between MRI scans of those patients who won’t develop osteoarthritis in their knees within three years and those who will. But an AI program found subtle differences in the patterns of cartilage, which it showed to researchers.

The AI’s generated image revealed that it was basing its predictions on subtle changes to the cartilage shown in the MRI scans, which human doctors hadn’t noticed. “That was another powerful aspect of this work,” says Kundu. “It helped humans understand what the early developmental process of arthritis might be.”

Now What Do You See?

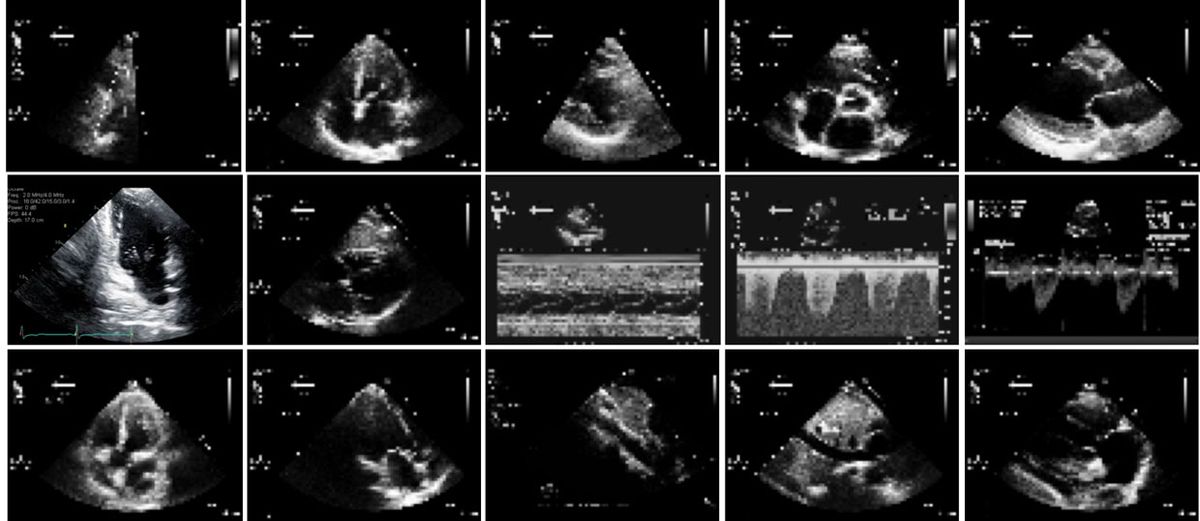

Rima Arnaout, an assistant professor and practicing cardiologist at the University of California, San Francisco, trained a neural network to classify echocardiograms, the ultrasound scans crucial for diagnosing heart ailments. The first version of her AI, described in the journal NPJ Digital Medicine in March, was more accurate than human cardiologists at sorting tiny, low-resolution images by their angle of perspective on the heart. The next version will use this information to identify the anatomical structures in view and diagnose cardiac diseases and defects.

But such a diagnostic system isn’t likely to be used: “I’m never going to make a diagnosis that doesn’t sit well with me, and say, ‘The computer made me do it,’ ” Arnaout says. So she used two techniques to understand how her classifier was making decisions. In occlusion experiments, she covered up parts of test images to see how that changed the AI’s answers; with saliency mapping, she traced the neural network’s final answers back to the original image to discover which pixels carried the most weight.

Both techniques showed which parts of the image the AI relied on to make decisions. Encouragingly, the structures that contributed most to the AI’s decisions were also those that human experts judged important.
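For readers curious what an occlusion experiment looks like in practice, here is a minimal sketch in Python. It is not Arnaout’s code: the `toy_classifier` function is a hypothetical stand-in for a trained neural network, and the patch size and fill value are assumptions chosen for illustration. The idea is simply to blank out one patch of the image at a time and record how much the classifier’s score drops.

```python
import numpy as np


def toy_classifier(image):
    """Hypothetical stand-in for a trained model.

    It scores an image by the mean brightness of one fixed region,
    so we know in advance which pixels it "looks at".
    """
    return float(image[2:4, 2:4].mean())


def occlusion_map(image, score_fn, patch=2, fill=0.0):
    """Return a heat map of how much the score drops when each patch is hidden."""
    baseline = score_fn(image)
    height, width = image.shape
    heat = np.zeros((height, width))
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            occluded = image.copy()
            # Cover one patch with a constant value.
            occluded[top:top + patch, left:left + patch] = fill
            # A large drop means the model relied on the covered pixels.
            heat[top:top + patch, left:left + patch] = baseline - score_fn(occluded)
    return heat


image = np.ones((6, 6))  # synthetic test image, not medical data
heat = occlusion_map(image, toy_classifier)
print(heat)
```

In this toy case the heat map is nonzero only over the region the stand-in classifier actually reads. With a real network, the same loop would highlight the anatomical structures that drive its answer, which can then be compared with what human experts consider important.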

Moving Beyond Correlation

At Microsoft Research in Redmond, Wash., principal researcher Rich Caruana has been on a mission for decades to make machine-learning models that aren’t just intelligent but also intelligible. His AI uses electronic health records from hospitals to make predictions about patient outcomes. But he has found that even models that appear highly accurate can hide serious flaws.

He cites his ongoing research using a data set of pneumonia patients. In one study, he trained a machine-learning model to distinguish between high-risk patients, who should be admitted to the hospital, and low-risk patients, who could safely stay home to recuperate. The model found that people with heart disease were less likely to die of pneumonia and confidently asserted that these patients were low risk.

Caruana explains that heart disease patients who are diagnosed with pneumonia have better outcomes—not because they’re low risk but because they typically go to the emergency room at the first sign of breathing problems and therefore get immediate diagnosis and treatment. “The correlation the model found is true,” Caruana says, “but if we used it to guide health care interventions, we’d actually be injuring—and possibly killing—some patients.” Based on his troubling discoveries, he’s now working on machine-learning models that clearly show the relationship between variables, letting him judge whether the model is not only statistically accurate but also clinically useful.
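One simple way to picture a model that shows the relationship between variables is an additive risk score, in which each input contributes a separate, readable term. The Python sketch below is an illustration only and should not be read as Caruana’s method: the feature names, weights, and bias are hypothetical values invented for this example, not figures from any study.

```python
import math

# Hypothetical weights for illustration only; not taken from any real data set.
WEIGHTS = {
    "age_over_65": 0.8,
    "low_blood_oxygen": 1.2,
    "heart_disease": -0.6,  # a negative weight like this would warrant clinical review
}
BIAS = -2.0


def explain(patient):
    """Return each feature's contribution to the score and the resulting risk."""
    contributions = {name: WEIGHTS[name] * patient[name] for name in WEIGHTS}
    logit = BIAS + sum(contributions.values())
    risk = 1.0 / (1.0 + math.exp(-logit))  # logistic function maps the score to 0..1
    return contributions, risk


patient = {"age_over_65": 1, "low_blood_oxygen": 0, "heart_disease": 1}
contributions, risk = explain(patient)

# Features that lower the predicted risk are the ones a clinician should question.
suspicious = [name for name, value in contributions.items() if value < 0]
print(contributions, round(risk, 3), suspicious)
```

Because every term is visible, a clinician reviewing the output can spot that heart disease is lowering the predicted risk and ask whether that reflects biology or, as in the pneumonia example, the way such patients are treated.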

This article appears in the August 2018 print issue as “Making Medical AI Trustworthy.”