Processors that use light instead of electricity show promise as a faster and more energy-efficient way to implement AI. So far they’ve only been used to run models that have already been trained, but new research has demonstrated the ability to train AI on an optical chip for the first time.

As AI models get ever larger, there is growing concern about the amount of energy they consume, due to both ballooning costs and the potential impact on the environment. This is spurring interest in new approaches that can reduce AI’s energy bills, with photonic processors emerging as a leading candidate.

These chips replace the electrons found in conventional processors with photons and use optical components like waveguides, filters, and light detectors to create circuits that can carry out computational tasks. They are particularly promising for running AI because they are very efficient at carrying out matrix multiplications—a key calculation at the heart of all deep-learning models. Companies like Boston-based Lightmatter and Lightelligence in Cambridge, Mass., are already working to commercialize photonic AI chips.


So far, though, these devices have been used only for inference, which is when an AI model that has already been trained makes predictions about new data. That’s because these chips have struggled to implement backpropagation, a crucial algorithm used to train neural networks. But in a new paper in Science, a team from Stanford University has described the first implementation of the training approach on a photonic chip.

“Our experiment is the first to demonstrate that in situ backpropagation can train photonic neural networks to solve a task, suggesting a new energy-efficient route for training neural networks,” says Sunil Pai, who led the research at Stanford but now works at California-based PsiQuantum, which is building photonic quantum computers.

Backpropagation involves repeatedly feeding training examples into a neural network and asking it to make predictions about the data. Each time, the algorithm measures how far off the predictions are, and this error signal is then fed backward through the network. This is used to adjust the strength of connections, or weights, between neurons to improve prediction performance. This process is repeated many times until the network is able to solve whatever task it’s been set.
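The loop described above can be sketched on a conventional computer in a few lines. This is a minimal illustration only, assuming a tiny two-layer network with a tanh hidden layer, a squared-error loss, and a single training example; the names `forward`, `loss_and_grads`, and `train` are made up for this sketch and do not come from the Stanford work.

```python
import numpy as np

def forward(params, x):
    W1, W2 = params
    h = np.tanh(W1 @ x)   # hidden-layer activations
    y = W2 @ h            # linear output layer
    return h, y

def loss_and_grads(params, x, target):
    W1, W2 = params
    h, y = forward(params, x)
    err = y - target                  # how far off the prediction is
    loss = 0.5 * float(err @ err)
    grad_W2 = np.outer(err, h)        # gradient for the output weights
    back = W2.T @ err                 # error signal fed backward through W2
    grad_W1 = np.outer(back * (1 - h ** 2), x)  # tanh'(z) = 1 - tanh(z)^2
    return loss, [grad_W1, grad_W2]

def train(params, x, target, lr=0.1, steps=200):
    losses = []
    for _ in range(steps):
        loss, grads = loss_and_grads(params, x, target)
        losses.append(loss)
        # Adjust each weight a little in the direction that reduces the error.
        params = [W - lr * g for W, g in zip(params, grads)]
    return params, losses

# Assumed toy setup: 2 inputs, 3 hidden neurons, 1 output.
rng = np.random.default_rng(0)
params = [rng.normal(scale=0.5, size=(3, 2)),
          rng.normal(scale=0.5, size=(1, 3))]
x = np.array([0.5, -1.0])
target = np.array([0.25])

trained, losses = train(params, x, target)
print(f"loss before: {losses[0]:.6f}, loss after: {losses[-1]:.6f}")
```

Each pass makes a prediction, measures the error, sends it backward to work out a gradient for every weight, and nudges the weights accordingly. A real training run does the same over many examples.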

This approach is hard to implement on a photonic processor, though, says Charles Roques-Carmes, a postdoctoral associate at MIT, because these devices can carry out only a limited palette of operations compared to standard chips. As a result, working out the weights for a photonic neural network typically relies on complex physical simulations of the processor carried out off-chip on a conventional computer.

But in 2018, some of the authors of the new Science paper proposed an algorithm that, in theory, could efficiently carry out this critical step on the chip itself. The scheme involves encoding training data in a light signal, passing it through a photonic neural network and then calculating the error at the output. This error signal is then sent backward through the network and made to optically interfere with the original input signal, the result of which tells you how the network’s connections need to be adjusted to improve predictions. However, the scheme relies on sending light signals both forward and backward through the chip and being able to measure the intensity of light passing through individual chip components, which was not possible with existing designs.
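The idea of reading a gradient from light intensities can be illustrated numerically. The sketch below is a toy model rather than the authors' procedure. It assumes a single layer of tunable phase shifters sandwiched between two fixed linear optical sections (`A` and `B`, random unitaries here), a squared-error loss, and ideal lossless, reciprocal optics. The conjugated backward field stands in for a copy of the error signal that has been arranged to overlap with the forward light. All function names are hypothetical.

```python
import numpy as np

def random_unitary(n, rng):
    # QR decomposition of a random complex matrix gives a unitary Q.
    z = rng.normal(size=(n, n)) + 1j * rng.normal(size=(n, n))
    q, _ = np.linalg.qr(z)
    return q

def loss(theta, A, B, x, t):
    # Forward pass: fixed optics A, phase shifters theta, fixed optics B.
    y = B @ (np.exp(1j * theta) * (A @ x))
    return float(np.sum(np.abs(y - t) ** 2))

def in_situ_gradient(theta, A, B, x, t):
    f = np.exp(1j * theta) * (A @ x)   # forward field just after each phase shifter
    delta = B @ f - t                  # complex error at the output
    a = B.T @ np.conj(delta)           # error sent backward (reciprocal optics: transpose)
    # Three intensity readings per phase shifter, as a tap and camera might record.
    i_forward = np.abs(f) ** 2
    i_backward = np.abs(a) ** 2
    i_interference = np.abs(f - 1j * np.conj(a)) ** 2
    # The interference term left over is the gradient of the loss w.r.t. each phase.
    return i_interference - i_forward - i_backward

def finite_difference_gradient(theta, A, B, x, t, eps=1e-6):
    # Brute-force check that needs two extra forward passes per parameter.
    grad = np.zeros_like(theta)
    for k in range(len(theta)):
        step = np.zeros_like(theta)
        step[k] = eps
        grad[k] = (loss(theta + step, A, B, x, t)
                   - loss(theta - step, A, B, x, t)) / (2 * eps)
    return grad

rng = np.random.default_rng(1)
n = 4
A, B = random_unitary(n, rng), random_unitary(n, rng)
theta = rng.uniform(0, 2 * np.pi, n)
x = rng.normal(size=n) + 1j * rng.normal(size=n)
t = rng.normal(size=n) + 1j * rng.normal(size=n)

measured = in_situ_gradient(theta, A, B, x, t)
reference = finite_difference_gradient(theta, A, B, x, t)
print(np.max(np.abs(measured - reference)))
```

In this toy model the three intensity readings reproduce the gradient that brute-force differentiation gives, which is why being able to measure light intensity at individual components matters for the scheme.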


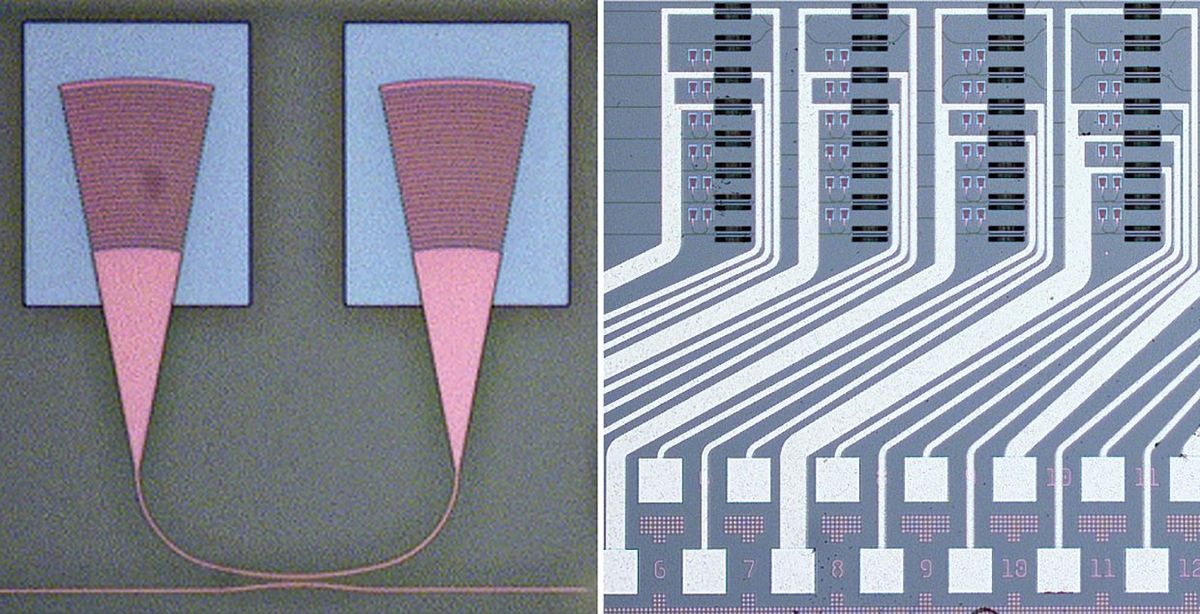

Now, Pai and his colleagues have built a custom photonic chip that can successfully implement this algorithm. It uses a common design known as a “photonic mesh,” which features an array of programmable optical components that control how light signals are split across the chip. By causing beams of light to mix and interfere with each other, the chip is able to carry out matrix multiplications and therefore implement a photonic neural network.
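How interference turns into a matrix multiplication can be shown with an idealized model. The sketch below assumes the programmable components are Mach-Zehnder interferometers, each built from two 50:50 beam splitters and two phase shifters, which is a common building block for such meshes; the article does not spell out the chip's exact layout, and the arrangement and phase settings used here are arbitrary, illustrative values.

```python
import numpy as np

# Ideal, lossless 50:50 beam splitter acting on two waveguides.
BS = np.array([[1, 1j], [1j, 1]]) / np.sqrt(2)

def mzi(theta, phi):
    """2x2 transfer matrix of one idealized Mach-Zehnder interferometer."""
    inner = np.diag([np.exp(1j * theta), 1])  # phase shifter between the splitters
    outer = np.diag([np.exp(1j * phi), 1])    # phase shifter at the input
    return BS @ inner @ BS @ outer

def embed(u2, n, k):
    """Place a 2x2 block on waveguides k and k+1 of an n-waveguide chip."""
    U = np.eye(n, dtype=complex)
    U[k:k + 2, k:k + 2] = u2
    return U

def mesh(n, settings):
    """Compose interferometers in order; settings is a list of (k, theta, phi)."""
    U = np.eye(n, dtype=complex)
    for k, theta, phi in settings:
        U = embed(mzi(theta, phi), n, k) @ U
    return U

# Arbitrary phase settings for a four-waveguide mesh (illustrative values).
settings = [(0, 0.3, 1.1), (2, 1.2, 0.4), (1, 2.0, 0.7),
            (0, 0.9, 2.5), (2, 0.5, 0.2), (1, 1.7, 1.9)]
U = mesh(4, settings)

x = np.array([1.0, 0.5, -0.25, 0.0], dtype=complex)  # input light amplitudes
y = U @ x                                             # what the mesh computes

# Light entering the top port of a single interferometer splits as
# sin^2(theta/2) to the top output and cos^2(theta/2) to the bottom.
split = np.abs(mzi(np.pi / 2, 0.0) @ np.array([1.0, 0.0])) ** 2
print(split)
```

Changing the phase settings changes the matrix `U`, so programming the mesh amounts to choosing the weights of a network layer. In this lossless model the total optical power going in equals the power coming out.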

What sets the new chip apart, though, is that it also has light sources and light detectors at both ends, allowing signals to pass forward and backward through the network. It also features small “taps” at each node in the network that siphon off a small amount of the light signal, redirecting it to an infrared camera that measures light intensities. Together, these changes make it possible to implement the optical backpropagation algorithm. The researchers showed that they could train a simple neural network to label points on a graph based on their position with an accuracy of up to 98 percent, which is comparable to conventional approaches.

There’s still a lot of work to do before the approach can become practical, says Pai. The optical taps and camera are fine for an experimental setup, but would need to be replaced by integrated photodetectors in a commercial chip. And Pai says they needed to use relatively high optical power to get good performance, which suggests a trade-off between accuracy and energy consumption.

It’s also important to recognize that the Stanford researchers’ system is actually a hybrid design, says Roques-Carmes. The computationally expensive matrix multiplications are carried out optically, but simpler calculations known as nonlinear activation functions, which determine the output of each neuron, are carried out digitally off-chip. These are currently inexpensive to carry out digitally and complicated to do optically, but Roques-Carmes says other researchers are making headway on this problem as well.
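The division of labor in such a hybrid can be sketched as follows. This is an assumption-laden illustration: the optical step is modeled as an ideal unitary matrix multiply (a normalized Fourier matrix stands in for a programmed mesh), and the activation function shown, which squashes each signal's amplitude while keeping its phase, is a hypothetical choice and not necessarily the one used in the Stanford experiment.

```python
import numpy as np

def optical_layer(U, field):
    # Stand-in for the chip: interference performs the matrix multiplication.
    return U @ field

def digital_activation(field):
    # Hypothetical nonlinearity computed off-chip on a conventional processor:
    # squash the amplitude with tanh and keep the phase unchanged.
    return np.tanh(np.abs(field)) * np.exp(1j * np.angle(field))

def hybrid_forward(unitaries, x):
    field = x.astype(complex)
    for U in unitaries[:-1]:
        field = digital_activation(optical_layer(U, field))
    field = optical_layer(unitaries[-1], field)
    return np.abs(field) ** 2   # detectors read out intensity, not amplitude

n = 4
dft = np.fft.fft(np.eye(n)) / np.sqrt(n)   # a simple unitary matrix
layers = [dft, dft.conj().T, dft]           # three assumed "optical" layers
x = np.array([1.0, 0.0, 0.0, 0.0])

out = hybrid_forward(layers, x)
print(out)
```

Every pass through `digital_activation` represents a round trip off the chip, which is the step that on-chip optical nonlinearities would eventually remove.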

“This research is a significant step towards implementing useful machine-learning algorithms on photonic chips,” he says. “Combining this with efficient on-chip nonlinear operations being currently developed, this might open the way to fully photonic computing on-chip for applications in AI.”


Edd Gent is a freelance science and technology writer based in Bengaluru, India. His writing focuses on emerging technologies across computing, engineering, energy and bioscience. He's on Twitter at @EddytheGent and email at edd dot gent at outlook dot com. His PGP fingerprint is ABB8 6BB3 3E69 C4A7 EC91 611B 5C12 193D 5DFC C01B. DM for Signal info.