Enabling Faster, More Capable Robots With Real-Time Motion Planning

Hardware-based motion planning that operates in under a millisecond makes robots both safer and more versatile

This is a guest post. The views expressed here are solely those of the authors and do not represent positions of IEEE Spectrum or the IEEE.

Despite decades of expectations that we would have dexterous robots performing sophisticated tasks in the home and elsewhere, the use of robots remains painfully limited, largely because of insufficient motion-planning performance. Motion planning is the process of determining how to move a robot, or autonomous vehicle, from its current configuration (or pose) to a desired goal configuration: for example, how to reach into a fridge to grab a soda can while avoiding obstacles, such as the other items in the fridge and the fridge itself. Until recently, this critical process has been implemented in software running on high-performance commodity hardware. The problem is that this software takes multiple seconds to compute a plan, which precludes deploying robots in dynamic environments or in environments with humans. At Realtime Robotics, we have developed special-purpose hardware that solves motion planning in under a millisecond, greatly expanding the range of tasks that robots will soon be able to complete.

With traditional motion planning, the robots that can be used in unstructured, dynamic environments are quite simple, with only a few degrees of freedom. These robots include autonomous floor cleaners (e.g., Roomba) and cylindrical robots with tablets that support activities like telepresence (e.g., Kubi and PadBot). Robots are also used today in static environments, such as automobile assembly lines, where they can be programmed to perform the same operations repeatedly on objects that are always in the same position and orientation. These factories are engineered, at enormous expense, so that the robots can simply repeat preprogrammed motions. The robot's metaphorical eyes are closed, and it does not react to any unexpected changes in its highly structured environment; these robots are often caged to protect people from being injured by them. Today's robots are thus either sophisticated robots in cages or simple robots in the wild.

At first glance, motion planning seems like it should be simple. After all, it doesn't take a person five seconds to figure out how to reach into a refrigerator to extract a can of soda. Computers typically perform motion planning using a graph data structure called a roadmap, in which each vertex corresponds to a specific configuration of a mechanical system, and an edge between two vertices corresponds to the motion between those configurations. Motion planning is the process of finding a path through the roadmap from the current configuration to a goal configuration. The challenging aspect of motion planning for computers—but not for people—is collision detection: determining which edges in the roadmap (i.e., motions) cannot be used because they will result in a collision.
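As a concrete illustration of the roadmap idea, here is a minimal Python sketch. It assumes a toy roadmap stored as an adjacency dictionary with hypothetical configuration names ("home", "grasp", and so on), and it assumes collision detection has already produced the set of colliding edges. It uses breadth-first search, which finds a path with the fewest motions; practical planners typically weight edges by cost and use a search such as Dijkstra's algorithm or A*.

```python
from collections import deque
from typing import Optional

# Toy roadmap (hypothetical): each vertex is a named configuration and each
# undirected edge is a motion between two configurations.
Roadmap = dict[str, set[str]]


def find_path(roadmap: Roadmap, start: str, goal: str,
              colliding_edges: set[tuple[str, str]]) -> Optional[list[str]]:
    """Breadth-first search from start to goal that never uses an edge
    (motion) that collision detection has flagged as colliding."""
    blocked = {frozenset(edge) for edge in colliding_edges}
    parent: dict[str, Optional[str]] = {start: None}
    frontier = deque([start])
    while frontier:
        v = frontier.popleft()
        if v == goal:
            # Walk back through the parent links to recover the path.
            path = []
            node: Optional[str] = v
            while node is not None:
                path.append(node)
                node = parent[node]
            return path[::-1]
        for w in roadmap.get(v, set()):
            if w not in parent and frozenset((v, w)) not in blocked:
                parent[w] = v
                frontier.append(w)
    return None  # goal unreachable using only collision-free motions


roadmap: Roadmap = {
    "home": {"left", "right"},
    "left": {"home", "grasp"},
    "right": {"home", "grasp"},
    "grasp": {"left", "right"},
}
# Suppose the home <-> left motion would sweep through an obstacle.
print(find_path(roadmap, "home", "grasp", {("home", "left")}))  # ['home', 'right', 'grasp']
```

The search itself is cheap; as the article explains next, the expensive part is deciding which edges belong in `colliding_edges`.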

When a robot moves from one configuration to another, it sweeps a volume in 3D space. Collision detection is the process of determining whether that swept volume collides with any obstacle (or with the robot itself). Typically, the surfaces of the swept volume and the obstacles are represented as meshes of polygons, and collision detection consists of computations that determine whether these polygons intersect. The process is not conceptually difficult, since it is simple computational geometry; the challenge is the sheer amount of computation. Each test to determine whether two polygons intersect involves cross products, dot products, division, and other computations, and there can be millions of polygon intersection tests to perform.
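To make the per-test arithmetic concrete, here is a minimal Python sketch of one common building block, assuming triangle meshes: the Möller-Trumbore segment-triangle test. Two triangles that are not coplanar intersect exactly when an edge of one crosses the other, so a triangle-pair test reduces to six segment tests, each built from cross products, dot products, and one division. The sketch ignores coplanar and other degenerate contacts, and it is not meant to describe what any particular production collision checker does.

```python
Vec = tuple[float, float, float]


def sub(a: Vec, b: Vec) -> Vec:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec, b: Vec) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec, b: Vec) -> Vec:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def segment_hits_triangle(p: Vec, q: Vec, tri: tuple[Vec, Vec, Vec],
                          eps: float = 1e-12) -> bool:
    """Möller-Trumbore test: does the segment from p to q cross triangle tri?"""
    v0, v1, v2 = tri
    d = sub(q, p)                  # segment direction (not normalized)
    e1, e2 = sub(v1, v0), sub(v2, v0)
    h = cross(d, e2)
    a = dot(e1, h)
    if abs(a) < eps:               # segment parallel to the triangle's plane
        return False
    f = 1.0 / a                    # the one division per test
    s = sub(p, v0)
    u = f * dot(s, h)              # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return False
    qv = cross(s, e1)
    v = f * dot(d, qv)             # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return False
    t = f * dot(e2, qv)            # hit position along the segment (0..1)
    return 0.0 <= t <= 1.0


def triangles_intersect(t1, t2) -> bool:
    """Non-coplanar triangles intersect iff an edge of one crosses the other."""
    def edges(t):
        return [(t[0], t[1]), (t[1], t[2]), (t[2], t[0])]
    return (any(segment_hits_triangle(p, q, t2) for p, q in edges(t1)) or
            any(segment_hits_triangle(p, q, t1) for p, q in edges(t2)))


floor_tri = ((0, 0, 0), (1, 0, 0), (0, 1, 0))
post_tri = ((0.25, 0.25, -1), (0.25, 0.25, 1), (2, 2, 0))  # pierces floor_tri
print(triangles_intersect(floor_tri, post_tri))  # True
```

A single test is only a few dozen floating-point operations; the cost comes from repeating it across every pair of polygons for every motion in the roadmap.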

As with many computational challenges, collision detection can be sped up with more hardware resources and with software optimizations. The state of the art has been to use the vast computational resources of GPUs, together with sophisticated software that carefully maps the computations onto the GPUs to maximize performance. Nevertheless, even this approach (which consumes a large amount of power) cannot compute more than a few plans per second, and its performance is fragile: changes in task or scenario often require retuning the software. Some industrial solutions provide high performance but only limited functionality, either by performing extremely coarse-grained collision detection (e.g., stop moving if anything is detected nearby) or by performing collision avoidance until a dynamic obstacle has vacated the workspace and then performing motion planning in an obstacle-free environment.

To achieve general-purpose, real-time motion planning, we have developed special-purpose processors that compute motion plans in under a millisecond. These processors overcome motion planning's performance bottleneck by converting the computational geometry task into a much faster lookup task. Long before runtime (e.g., at design time), we can precompute data that records, for a large number of motions between configurations, which parts of 3D space each motion collides with. This offline precomputation, which is based on simulating the motions to determine their swept volumes, is loaded onto the processor so that it can be accessed at runtime. At runtime, the processor receives sensory input that describes which parts of the robot's environment are occupied by obstacles, and it uses its precomputed data to eliminate the motions that would collide with these obstacles.
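The precompute-then-look-up idea can be sketched in Python under heavy simplifying assumptions, none of which come from the processor itself: the workspace is a hypothetical 20 x 20 x 20 grid of 10 cm voxels, the "robot" is a point moving in a straight line between two positions, and each swept volume is approximated by sampling points along the motion. Each edge's voxels are stored as a bitmask (a Python int), so at runtime the check for an edge is a single bitwise AND against the occupied-voxel mask rather than any polygon geometry.

```python
import math

VOXEL = 0.1  # hypothetical voxel edge length in meters
GRID = 20    # hypothetical workspace of GRID x GRID x GRID voxels

Point = tuple[float, float, float]
Edge = tuple[Point, Point]


def voxel_mask(p: Point) -> int:
    """Bitmask with the single bit for the voxel containing p (0 if outside)."""
    ix, iy, iz = (math.floor(c / VOXEL) for c in p)
    if all(0 <= i < GRID for i in (ix, iy, iz)):
        return 1 << (ix * GRID * GRID + iy * GRID + iz)
    return 0


def swept_mask(a: Point, b: Point, samples: int = 50) -> int:
    """Design time: approximate the volume swept by a straight-line motion
    from a to b (toy point robot) by sampling intermediate positions."""
    mask = 0
    for k in range(samples + 1):
        s = k / samples
        p = tuple(ai + s * (bi - ai) for ai, bi in zip(a, b))
        mask |= voxel_mask(p)
    return mask


def precompute(edges: list[Edge]) -> dict[Edge, int]:
    """Offline step: record which voxels each roadmap edge's motion touches."""
    return {e: swept_mask(*e) for e in edges}


def occupancy_mask(obstacle_points: list[Point]) -> int:
    """Runtime: turn sensor points (e.g., a point cloud) into occupied voxels."""
    mask = 0
    for p in obstacle_points:
        mask |= voxel_mask(p)
    return mask


def colliding_edges(table: dict[Edge, int], occupied: int) -> set[Edge]:
    """Runtime: an edge collides iff its precomputed voxels overlap an
    occupied voxel, so each check is one lookup plus one bitwise AND."""
    return {e for e, m in table.items() if m & occupied}


low = ((0.05, 0.05, 0.05), (1.95, 0.05, 0.05))
high = ((0.05, 1.05, 0.05), (1.95, 1.05, 0.05))
table = precompute([low, high])
occupied = occupancy_mask([(1.04, 0.05, 0.05)])  # one obstacle in the low path
print(colliding_edges(table, occupied) == {low})  # True
```

Sampling only approximates a swept volume; here the 3.8 cm step is smaller than a voxel, so no voxel along these short motions is skipped. Precomputation for a real robot would have to cover the arm's full geometry, and do so conservatively.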

Our original processor, developed as part of a research project at Duke University, was a proof of concept demonstrating that submillisecond collision detection is possible. This design was an exciting first step, but it was limited in several significant ways. Most notably, it supported only small roadmaps (on the order of 2,000 to 3,000 edges), whereas larger roadmaps are needed for finer dexterity and for more sophisticated robotics tasks. A roadmap for a pick-and-place task may require on the order of 100,000 edges, and an autonomous car may benefit from a million edges. Another crucial limitation was that each processor based on this design was targeted to a single robot and could not be retargeted.

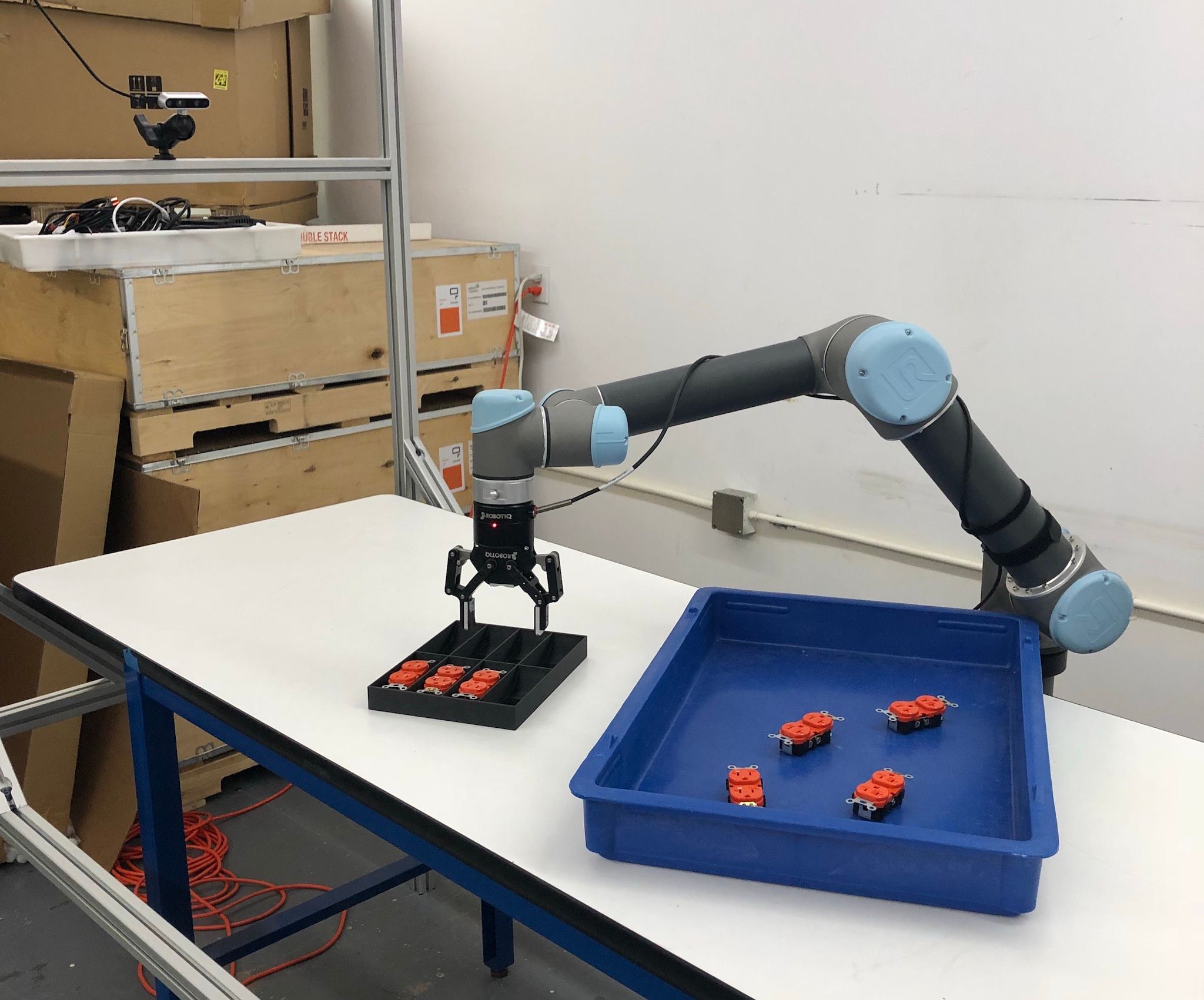

To take the next steps with this technology and bring it to market, we created a company, Realtime Robotics, based in Boston, Mass. At Realtime, we have developed and released a new processor, RapidPlan, that is retargetable, can be updated on the fly, and has the capacity for tens of millions of edges. RapidPlan inherits many of the design principles of the original processor at Duke, but its hardware for determining whether a roadmap edge's motion collides with an obstacle is reconfigurable and more scalable. Retargetability is a critical feature; without it, each robot requires its own dedicated design. Along with the capacity for extremely large roadmaps, RapidPlan can partition that capacity into several smaller roadmaps and switch between them at runtime with negligible delay. Additional roadmaps can also be transferred from off-processor memory on the fly. The ability to change roadmaps at runtime allows the user to, for example, have different roadmaps for different states of the end effector or for different task types. There could be a roadmap for when nothing is grasped, a roadmap for when a small spherical object (or something that fits within that shape) is grasped, a roadmap for picking and sorting, another for picking and packing, and so on.
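Partitioning a fixed edge capacity among several roadmaps and switching between them can be modeled in a few lines of Python. This sketch is hypothetical throughout: the `RoadmapBank` class, its method names, and the roadmap names are illustrative and do not describe RapidPlan's actual interface. Each roadmap is assumed to be a precomputed table mapping edges to voxel bitmasks, and switching roadmaps only changes which table the runtime check consults.

```python
from typing import Optional


class RoadmapBank:
    """Hypothetical model: a fixed edge capacity shared by several
    precomputed roadmaps, exactly one of which is active at a time."""

    def __init__(self, capacity_edges: int):
        self.capacity = capacity_edges
        self.roadmaps: dict[str, dict] = {}  # name -> {edge: voxel bitmask}
        self.active: Optional[str] = None

    def used(self) -> int:
        return sum(len(table) for table in self.roadmaps.values())

    def load(self, name: str, table: dict) -> None:
        """Models transferring a roadmap in from off-processor memory."""
        if self.used() + len(table) > self.capacity:
            raise ValueError(f"roadmap {name!r} does not fit in the remaining capacity")
        self.roadmaps[name] = table

    def unload(self, name: str) -> None:
        """Frees this roadmap's share of the capacity for other roadmaps."""
        del self.roadmaps[name]
        if self.active == name:
            self.active = None

    def activate(self, name: str) -> None:
        """Switching roadmaps only changes which table is consulted."""
        if name not in self.roadmaps:
            raise KeyError(name)
        self.active = name

    def colliding_edges(self, occupied: int) -> set:
        if self.active is None:
            raise RuntimeError("no roadmap is active")
        return {e for e, m in self.roadmaps[self.active].items() if m & occupied}


bank = RoadmapBank(capacity_edges=4)
# Holding a sphere enlarges the swept volume of the a-b motion (voxel bit 1 added).
bank.load("empty_gripper", {("a", "b"): 0b01, ("b", "c"): 0b10})
bank.load("holding_sphere", {("a", "b"): 0b11, ("b", "c"): 0b10})
bank.activate("empty_gripper")
print(bank.colliding_edges(0b10))  # {('b', 'c')}
bank.activate("holding_sphere")    # e.g., after the gripper closes on a ball
```

With the sphere grasped, the same obstacle now also blocks the a-b motion, which is why a separate roadmap per end-effector state is useful.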

With the real-time motion planning provided by RapidPlan, the opportunities to use robots are far greater. A robot with fast reaction times can operate safely in an environment with humans. A robot that can plan quickly can be deployed in relatively unstructured factories and adjust to imprecise object locations and orientations, thus lowering a major barrier to the use of robots. We see great potential in industries including logistics, manufacturing, health care, agriculture, and domestic assistance.

High-speed motion planning is also critical for autonomous vehicles (AVs). The motion planning problem for AVs is, in some ways, simpler, in that the configuration of a vehicle has fewer dimensions than that of a many-jointed robot arm. However, motion planning for a vehicle must account for the fact that the vehicle moves at high speed in an environment with other agents that behave unpredictably, such as cyclists, pedestrians, and other vehicles. With sufficiently fast motion planning, though, we can drive in a risk-aware fashion. We can treat each agent's behavior probabilistically and compute a motion plan for each of a large number of samples drawn from the agents' joint distribution. For example, in one sample the car on the left continues straight, the car ahead slows down, and the pedestrian on the curb remains on the curb. With plans for each sample, we can then choose the best plan whose collision probability is within our risk tolerance. Note that no motion plan, other than leaving the vehicle parked at all times, has zero risk; if another agent is determined to cause a collision (e.g., by deliberately swerving into our vehicle), the collision is extremely difficult to avoid, for an autonomous vehicle and a human driver alike.
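One simple reading of the sampling-based, risk-aware selection described above is sketched below in Python. The assumptions are ours, not a description of any production AV planner: candidate plans (for instance, the plans computed for individual samples) are each checked against every sampled scenario, the fraction of scenarios in which a plan collides serves as a Monte Carlo estimate of its collision probability, and the lowest-cost plan within the risk tolerance is chosen. The single-pedestrian agent model, the plan names, and the `collides` predicate are hypothetical placeholders for a real behavior predictor and a real collision check.

```python
import random
from typing import Callable, Optional, Sequence

Scenario = dict[str, bool]


def sample_scenarios(n: int, p_cross: float, rng: random.Random) -> list[Scenario]:
    """Hypothetical agent model: in each sample, the pedestrian on the curb
    steps into the road with probability p_cross."""
    return [{"ped_crosses": rng.random() < p_cross} for _ in range(n)]


def collision_probability(plan: str, scenarios: Sequence[Scenario],
                          collides: Callable[[str, Scenario], bool]) -> float:
    """Monte Carlo estimate: fraction of sampled scenarios in which plan collides."""
    return sum(collides(plan, s) for s in scenarios) / len(scenarios)


def choose_plan(plans: Sequence[str], cost: Callable[[str], float],
                scenarios: Sequence[Scenario],
                collides: Callable[[str, Scenario], bool],
                risk_tolerance: float) -> Optional[str]:
    """Lowest-cost plan whose estimated collision probability is within
    the risk tolerance, or None if no candidate qualifies."""
    acceptable = [p for p in plans
                  if collision_probability(p, scenarios, collides) <= risk_tolerance]
    return min(acceptable, key=cost, default=None)


# Toy setup: keeping speed is faster but collides if the pedestrian crosses;
# slowing down costs time but is safe in every sampled scenario.
costs = {"keep_speed": 1.0, "slow_down": 2.0}


def collides(plan: str, s: Scenario) -> bool:
    return plan == "keep_speed" and s["ped_crosses"]


scenarios = sample_scenarios(1000, p_cross=0.2, rng=random.Random(0))
print(choose_plan(list(costs), costs.get, scenarios, collides, risk_tolerance=0.01))  # slow_down
```

Raising the risk tolerance above the estimated crossing probability would make the faster plan acceptable, which is exactly the trade-off a risk tolerance is meant to expose.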

We are excited by the possibilities that are opened up by the advent of real-time motion planning—robots operating in, and engaged with, the real world, instead of carefully hidden away from it.