Video Friday is your weekly selection of awesome robotics videos, collected by your Automaton bloggers. We’ll also be posting a weekly calendar of upcoming robotics events for the next two months; here’s what we have so far (send us your events!):

ITU Robot Olympics – April 7-9, 2017 – Istanbul, Turkey

ROS Industrial Consortium – April 7, 2017 – Chicago, Ill., USA

U.S. National Robotics Week – April 8-16, 2017 – USA

NASA Swarmathon – April 18-20, 2017 – NASA KSC, Florida, USA

RoboBusiness Europe – April 20-21, 2017 – Delft, Netherlands

RoboGames 2017 – April 21-23, 2017 – Pleasanton, Calif., USA

ICARSC – April 26-30, 2017 – Coimbra, Portugal

AUVSI Xponential – May 8-11, 2017 – Dallas, Texas, USA

AAMAS 2017 – May 8-12, 2017 – Sao Paulo, Brazil

Austech – May 9-12, 2017 – Melbourne, Australia

Innorobo – May 16-18, 2017 – Paris, France

NASA Robotic Mining Competition – May 22-26, 2017 – NASA KSC, Fla., USA

IEEE ICRA – May 29-June 3, 2017 – Singapore

University Rover Challenge – June 1-3, 2017 – Hanksville, Utah, USA

Let us know if you have suggestions for next week, and enjoy today’s videos.

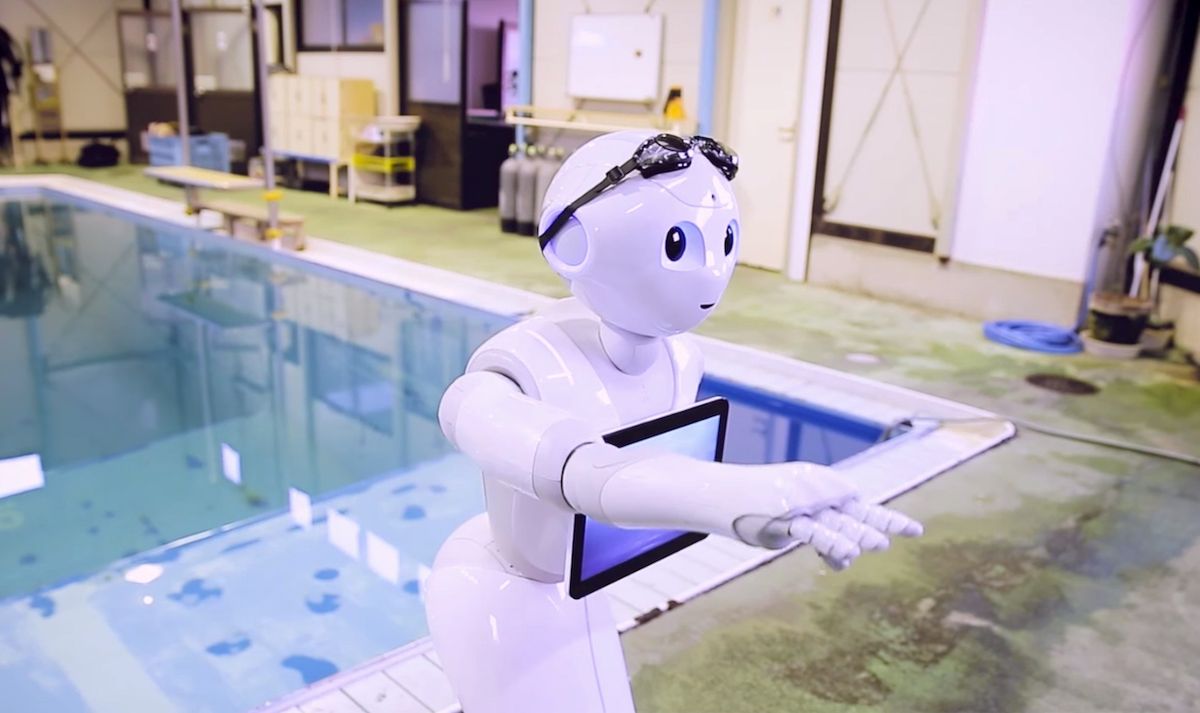

This video was published on March 31, not April 1, which I assume means that Pepper’s fish mode is going to be a real thing:

In fact, it’s possible that this capability has already been enabled, so feel free to toss your Pepper into the nearest lake and let us know how it goes.

[ SoftBank ]

Watching this tensegrity robot blob itself up a 44-degree slope is pretty amazing:

[ UC Berkeley ]

Kinema Pick is the world’s first Deep Learning 3D Vision system for industrial robots. Kinema Pick integrates the KS1000, a high-resolution 3D/2D sensor, with deep learning and 3D vision to find and locate boxes on complex pallets. Kinema Pick’s advanced motion planning brings self-driving abilities to industrial robots, making Kinema Pick easy to configure for any workcell layout.

[ Kinema Systems ]

Simone Giertz gets a manicure:

Or, you know, doesn’t get a manicure. Because if it was successful, it would be a lot less entertaining.

[ Simone Giertz ]

BuzzFeed attempts (with supervision) to steal food from a Starship delivery robot, because that seems exactly like something BuzzFeed would do.

[ BuzzFeed ]

And now, this:

[ Takanishi Lab ]

Some nifty old robot videos from CMU’s Chris Atkeson:

[ Chris Atkeson ]

With a little bit of instruction, AMIGO will very slowly clean up for you. And if it can’t reach something on the ground, it’s got an adorable little sidekick to help out.

[ R5-COP ]

Cozmo would like to remind you that Easter is coming up:

And that’s why you should use chocolate eggs instead of real eggs for everything.

[ Cozmo ]

Daniel Claes wrote in to share some of his recent Ph.D. work on decentralized multi-robot warehouse commissioning:

The robots plan autonomously which actions to take based on the information that they get from the warehouse management software, i.e., the number of active orders and the approximate positions of the other robots. The robots adapt directly to new incoming orders and to orders picked by the other robots. The robots have to plan with a limited capacity of three items, after which they have to return to the depot to unload. The exact positions of the objects on the platforms are not known. Additionally, the platforms are at different heights.
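To make the capacity constraint concrete, here is a minimal sketch of one robot planning a single trip. It is not the planner from Daniel's work: it swaps in a simple nearest-item greedy rule, and every name in it (`plan_trip`, `open_orders`, `claimed`, the 2D coordinates) is a hypothetical stand-in. The only detail taken from the description is the three-item limit followed by a return to the depot.

```python
from math import hypot

CAPACITY = 3  # from the description: three items, then back to the depot


def plan_trip(start, depot, open_orders, claimed):
    """Greedy stand-in (an assumption, not the published method).

    Repeatedly drive to the nearest open item that no other robot has
    claimed, stop once the robot is full, then return to the depot.
    Positions are hypothetical (x, y) tuples.
    """
    position = start
    route = []
    remaining = [p for p in open_orders if p not in claimed]
    while remaining and len(route) < CAPACITY:
        nearest = min(
            remaining,
            key=lambda p: hypot(p[0] - position[0], p[1] - position[1]),
        )
        route.append(nearest)
        remaining.remove(nearest)
        position = nearest
    route.append(depot)  # unload before taking on more orders
    return route


if __name__ == "__main__":
    orders = [(1, 0), (2, 0), (3, 0), (4, 0), (10, 10)]
    taken_by_others = {(2, 0)}
    print(plan_trip((0, 0), (0, 0), orders, taken_by_others))
```

Calling `plan_trip` again whenever the order list or the other robots' claims change would be one crude way to mimic the adaptation the description mentions.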

A paper on this will be in AAMAS 2017, but in the meantime, you can read more details at the link below.

Thanks, Daniel!

Look, it’s Astro Boy! It’s got some skills, I guess?

[ ATOM 2020 ] via [ Biped Robot News ]

The Zurich Urban Micro Aerial Vehicle Dataset:

This paper presents and releases to the public the first dataset recorded on-board a camera-equipped Micro Aerial Vehicle (MAV) flying within urban streets at low altitudes (i.e., 5-15 meters above the ground). The 2 km dataset consists of time-synchronized aerial high-resolution images, GPS and IMU sensor data, ground-level street view images, and ground truth data. The dataset is ideal to evaluate and benchmark appearance-based topological localization, monocular visual odometry, simultaneous localization and mapping (SLAM), and online 3D reconstruction algorithms for MAVs in urban environments.

We posted about the autonomous version of Robo-One earlier this week, but here are a few highlights from the remote controlled competition:

Also watch the final in the 3-kg class between KingPuni and RP-CHEON.

[ Robo-One ] via [ Biped Robot News ]

In 20 years there will be 9.6 billion people to feed, and not enough food. Carnegie Mellon University’s FarmView is tackling this problem through a team effort of researchers and robots that will increase crop yields with fewer resources by controlling and measuring the environment, then analyzing the data to provide solutions to farmers across the globe.

[ CMU ]

MekaMon started with a question 4 years ago: Why can’t we have next-gen gaming now? This is our story, from idea to manufacturing our first units.

[ MekaMon ]

The European Robotics League ERL Emergency Robots Tournament will be held on 15-23 September 2017 in Piombino, Italy.

CMU RI Seminar: Peter Stone on “Robot Skill Learning: From the Real World to Simulation and Back.”

For autonomous robots to operate in the open, dynamically changing world, they will need to be able to learn a robust set of interacting skills. This talk begins by introducing "Overlapping Layered Learning" as a novel hierarchical machine learning paradigm for learning such interacting skills in simulation. While learning in simulation is appealing because it avoids the prohibitive sample cost of learning in the real world, unfortunately policies learned in simulation often fail when applied on physical robots. This talk then introduces "Grounded Simulation Learning" to address this problem by algorithmically altering the simulator to better match the real world, and connects this new algorithm to a theoretical analysis of off-policy evaluation in reinforcement learning. Overlapping Layered Learning was the key deciding factor in UT Austin Villa’s RoboCup robot soccer 3D simulation league championship, and Grounded Simulation Learning has led to the fastest known stable walk on a widely used humanoid robot.

[ CMU RI ]

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.

Erico Guizzo is the Director of Digital Innovation at IEEE Spectrum, and cofounder of the IEEE Robots Guide, an award-winning interactive site about robotics. He oversees the operation, integration, and new feature development for all digital properties and platforms, including the Spectrum website, newsletters, CMS, editorial workflow systems, and analytics and AI tools. An IEEE Member, he is an electrical engineer by training and has a master’s degree in science writing from MIT.