Electrical Engineering’s Identity Crisis

When does a vast and vital profession become unrecognizably diffuse?

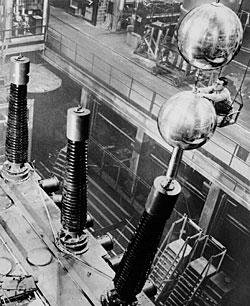

More than a century ago, electrical engineering was so much simpler. Basically, it referred to the technical end of telegraphy, trolley cars, or electric power. Nevertheless, here and there members of that fledgling profession were quietly setting the stage for an era in industrial history unparalleled for its innovation, growth, and complexity.

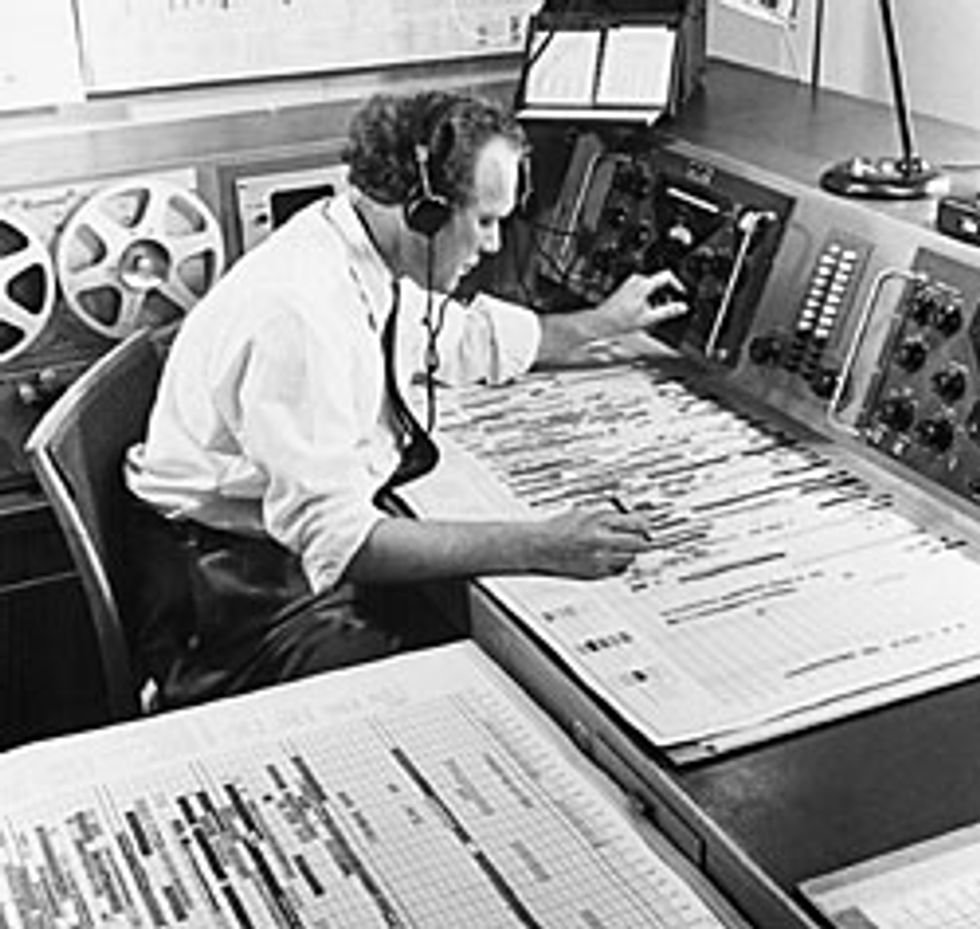

That decades-long saga was punctuated early on by spark-gap radios, tubes, and amplifiers. With World War II came radar, sonar, and the proximity fuze, followed by electronic computation. Then came solid-state transistors and integrated circuits: originally with a few transistors, lately with hundreds of millions. Oil-filled circuit breakers the size of a cottage eventually gave way to solid-state switches the size of a fist. From programs on punch cards, computer scientists progressed to programs that write programs that write programs, all stored on magnetic disks whose capacity has doubled every 15 months for the past 20 years [see “Through a Glass”]. In two or three generations, engineers took us from shouting into a hand-cranked box attached to a wall to swapping video clips over a device that fits in a shirt pocket.
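To put the disk-capacity figure in perspective, the arithmetic can be sketched in a few lines. This is only an illustration that takes the stated doubling period at face value and assumes perfectly steady growth; the function name `growth_factor` is hypothetical, chosen for this sketch.

```python
def growth_factor(years, doubling_months):
    """Total multiplication in capacity after `years` of steady doubling."""
    doublings = years * 12 / doubling_months  # 240 months / 15 = 16 doublings
    return 2 ** doublings

# Doubling every 15 months for 20 years, as the text states.
factor = growth_factor(20, 15)
print(f"{factor:,.0f}x")  # prints "65,536x"
```

Sixteen doublings compound to a factor of roughly 65,000, which is why a seemingly modest doubling period adds up to such a dramatic change over two decades.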

Today, at its fringes, electrical engineering is blending with biology to establish such disciplines as biomedical engineering, bioinformatics, and even odd, nameless fields in which, for example, researchers are interfacing the human nervous system with electronic systems or striving to use bacteria to make electronic devices. On another frontier—one of many—EEs are joining forces with quantum physicists and materials scientists to establish entirely new branches of electronics based on the quantum mechanical property of spin, rather than the electromagnetic property of charge.

What EEs have accomplished is amazing by any standard. “Electrical engineers rule the world!” exclaims David Liddle, a partner in U.S. Venture Partners, a venture capital firm in Menlo Park, Calif. “Who’s been more important? Who’s made more of a difference?”

But as the purview of electrical engineering expands, does the entire discipline risk a kind of effacement by diffusion, like a photograph that has been enlarged so much that its subject is no longer recognizable? For those in the profession, and those at universities who teach its future practitioners, this is not an abstract issue. It calls into question the very essence of what it means to be an EE.

“I remember hearing the same sort of words 20 years ago,” says Fawwaz T. Ulaby, professor of electrical engineering and computer science and vice president for research at the University of Michigan, in Ann Arbor. Indeed, two decades ago, in its 20th anniversary issue, IEEE Spectrum ran an article describing how the drive toward abstraction and computer simulation was reshaping electrical engineering [see “The Engineer’s Job: It Moves Toward Abstraction,” Spectrum, June 1984]. Breadboards and soldering irons were out; computer simulations and other abstractions were in.

If anything, the variety of things EEs do has actually increased since then. If you are an EE, you might design distribution substations for an electric utility or procure mobile communications systems for a package delivery company or plan the upgrade of sprawling computer infrastructures for a government agency. You might be a project manager who directs the work of others. You might review patents for an intellectual property firm, or analyze signal strength patterns in the coverage areas of a cellphone company. You might preside over a company as CEO, teach undergraduates at a university, or work at a venture capital or patent law firm.

Maybe you work on contract software in India, green laser diodes in Japan, or inertial guidance systems in Russia. Maybe, just maybe, you design digital or—more and more improbably—analog circuits for a living. Then there are the offshoots: field engineering, sales engineering, test engineering. Lots of folks in those fields consider themselves EEs, too. And why not? As William A. Wulf, president of the National Academy of Engineering (NAE), in Washington, D.C., notes, the boundaries between disciplines are a matter of human convenience, not natural law.

If your aim is to define the essence of the electrical engineering profession, you might ask what all these people have in common. Perhaps what links them is the connection, however indirect, between their livelihoods and the motion of electrons (or photons). But is such a link essential to defining an EE? Not to Ulaby.

“Engineers tend to be adaptive machines,” he says. Even though there’s little resemblance between the details of what he learned in school and the work he does now, Ulaby, who is also editor of the Proceedings of the IEEE, has no doubt that he himself is an EE.

David A. Mindell of the Massachusetts Institute of Technology, in Cambridge, says the perception that the field is heading toward unrecognizability is a constant. (This associate professor of the history of engineering and manufacturing also designs electronic subsystems for underwater vehicles.) Perhaps the biggest change to the electrical engineering field occurred in 1963, when engineers who worked with generators and transmission lines and engineers who worked with tubes and transistors finally agreed that they were all part of the same discipline.

That was the year the American Institute of Electrical Engineers (AIEE), whose membership consisted largely of power engineers, agreed to merge with the Institute of Radio Engineers (IRE) to form the IEEE. In the 1980s, jokers were already suggesting that the IEEE should become the Institute of Electrical Engineers and Everyone Else. Then, as now, many observers worried that such mainstay specialties of the profession as power engineering and analog circuit design were stagnating, while all of the interesting progress took place at the boundaries between electrical engineering and other fields.

Forced to choose a single core activity of electrical engineering, many technologists would probably pick circuit design, in all its various manifestations. It wouldn’t be anything like a unanimous choice, of course, but it would make sense in much the same way as identifying surgery as the archetype of the medical profession, say, or litigation as the heart of lawyers’ work. Circuit design is, after all, what non-EEs tend to associate with electrical engineering, if only in a vague way. And if a connection to moving electrons is a fundamental characteristic of an EE’s occupation, then circuit designers must be counted among the elite.

By that standard, Tom Riordan is an EE’s EE. Now a vice president and general manager of the microprocessor division at chip conglomerate PMC-Sierra Inc., in Santa Clara, Calif., Riordan started his career in the late 1970s, when circuit design was king and designing your own microprocessor, he says, “was the be-all and end-all” of an electrical engineering career. Riordan helped design a single-chip signal processor at Intel Corp. and created special-purpose arithmetic units at Weitek Corp. He then played a key role in developing the design for the central processing unit of the single-chip reduced instruction set computer (RISC) that made what was then MIPS Computer Systems Inc. a commercial success in the early 1990s.

That kind of deeply technical 14- to 16-hour-a-day work, mixing intimate knowledge of architectural principles with the intricacies of semiconductor layout required to get a chip working at speed, is what Riordan still thinks of as engineering. He designed a floating-point unit for MIPS and oversaw the architecture of a couple more generations of CPUs before starting his own company, Quantum Effect Devices Inc., where he guided about a dozen engineers over the hurdles of creating MIPS-compatible custom processors. On the side, he negotiated with customers and dealt with investors and investment bankers.

After PMC-Sierra bought Quantum in 2000, Riordan dropped much of the CEO side of his job. This shift, he says, gives him roughly one day a week of what he calls “real engineering”—helping to make complex tradeoffs in CPU architecture or reviewing the niceties of yet another reduction in the size of a chip feature. He may not get into the same level of technical detail on every project as he once did, but he asserts that knowing the ins and outs of nanometer-scale circuit design is still part of his job.

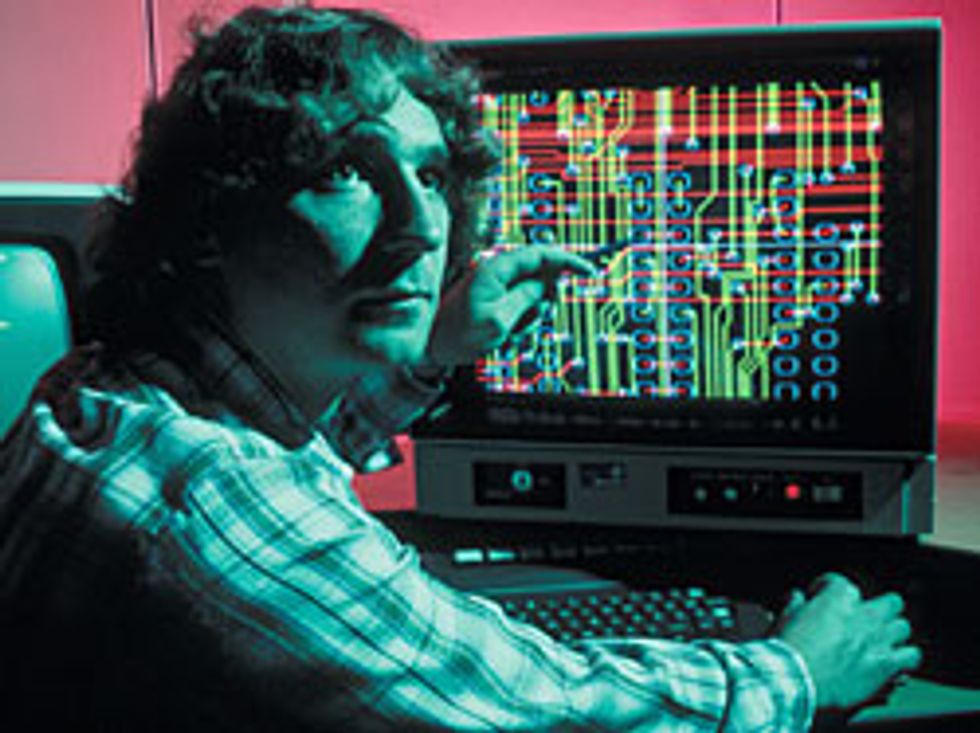

Of course, the definition of hands-on has changed drastically in the past 20 or 30 years. Designers in the 1970s and 1980s still built prototypes out of parts they could see with the naked eye. And when those prototypes didn’t work, they attached oscilloscope probes to suspect points until they found the source of the problem. Those days are fast becoming a fond memory.

For the past 10 or 15 years, at least, “you couldn’t debug a system into working,” says John Mashey, a former chief scientist at Silicon Graphics Inc., in Mountain View, Calif. When you’re building on silicon, the first chip out of production has to “more or less work,” he adds, maybe not at the full speed or with all the functions intended. But if the chip doesn’t do most of what it was designed to do, a project will lose months getting to market while waiting for a new fabrication cycle. So design now means endless rounds of simulation and modeling. And design engineers effectively become programmers as they type the “source code” representing their circuits into the tools that will ultimately generate a layout.

Where designers once built, breadboarded, poked, and probed, they now simulate. And almost all of the modeling, analysis, and synthesis that designers do, Riordan points out, would be unthinkable without the nearly two orders of magnitude by which computing power has increased in the past decade.

As Moore’s Law continues its relentless advance, engineers who build systems—whether chips or boards—seem to be doing less and less actual design of circuits and ever more assembly of prepackaged components. Circuit designers are working with bigger and bigger functional blocks, assembling them with increasingly powerful tools, and getting further from both the messiness and the simple satisfactions of working in the real world.

Mashey points out that for a system on a chip, or SOC, designers don’t even lay out blocks of circuitry. Instead they stitch together CPU blocks, network and video interfaces, cache memory, and other pieces of intellectual property from multiple vendors—each with software instructions that handle the detailed interconnections—to create a custom chip for a set-top box, a toy, or a smart refrigerator. Designers may put together complex systems containing billions of transistors without ever seeing a physical circuit; to the designer, the chip or populated circuit board is merely a collection of files stored on a desktop computer.

Although such an abstract, project management-style view of engineering may be what the future holds, it could well leave current generations of engineers behind. Some technologists have always embraced management; others (such as Riordan) have taken on management tasks only reluctantly. If managing becomes what engineers do, might a very different kind of person make up most of the engineering population? The NAE’s Wulf doesn’t think so: he politely scolds his interviewer for parroting the old stereotype of engineers as gizmo-focused loners. As long as engineering involves using technology to make new things, he argues, that’s what engineering types will do, even if it involves work that looks like a combination of anthropology, marketing, and project management.

Some engineering schools and departments have been bowing to these trends for years. Rosalind H. Williams, director of the MIT Program in Science, Technology, and Society, helped oversee the institution’s curriculum retooling in the second half of the 1990s. She suggests that assembling parts from disparate sources and cobbling together abstractions makes engineering more akin to project management than to design. Some of the changes in MIT’s curriculum were designed to prepare engineering students for management-related careers. Others, like the addition of biology to the core curriculum, respond to changes in the world where students will live and work.

Already, she says, many of the roughly one-third of MIT students who major in electrical engineering and computer science, or EECS, view it as a sort of technical liberal arts degree that prepares them for a wide range of technical and nontechnical jobs. Indeed, after earning their undergraduate degrees, about a quarter of MIT students go directly into jobs in finance or management consulting.

One crucial problem, Michigan’s Ulaby says, is giving students a sense of the potential breadth of their field without sacrificing solid training in its fundamentals. It takes time for students to absorb the mathematical rigor associated with the material, he says. With demand for both a broad perspective and a rigorous grounding in an ever-enlarging set of core subjects, it is not surprising that the four-year engineering degree is under pressure, as it has been for decades. Wulf, for example, states flatly that the four-year engineering degree should not suffice as a first professional qualification. A. Richard Newton, dean of the College of Engineering at the University of California, Berkeley, proposes that students take a fifth year tackling real-world problems far from home to improve their practical and cultural understanding of their discipline’s role in society.

Even as some schools and engineers embrace generalist status, others must specialize. Why? Simple: who creates those neatly packaged abstractions that project managers assemble into finished systems at the click of a mouse? Other EEs, of course, working as module designers and programmers. These engineers must focus on the minutiae of a particular subdiscipline—say, the timing characteristics of a particular family of CMOS chip-fabrication processes or the design of a special class of databases. Then comes the hard part: packaging their knowledge in a form that nonspecialists can use without worrying about all the details.

Ironically, as the visible face of circuits becomes more and more digital, their analog foundation becomes more and more apparent. As circuit features shrink below 100 nanometers, the quaint design-rule abstractions that allowed engineers for the most part to leave aside leakage current, parasitic capacitance, and other messy real-world issues no longer hold, says Riordan. Anyone who designs systems that operate at high speed and low power in this nano domain must know quantum field theory and solid-state physics as well as algorithms. And module builders have to work harder to maintain the digital behavior.

Such difficulties point to the downside of the entrenched reliance on packages, encapsulated expertise, and abstraction. Many observers have begun to worry that EEs reared on abstraction and on computer simulations that simply parrot abstract models may lose touch with the behavior of real devices. Fred G. Martin, a longtime MIT Media Laboratory researcher who is now an assistant professor at the University of Massachusetts-Lowell, tells a story of just how brittle abstract knowledge can be. One of his students complained about Martin’s lecture on transistors, fixated on a rule he had learned in a previous course, namely that collector current equals base current multiplied by gain.

As many EEs have discovered, at some point this rule is trumped by Ohm’s Law, which tells you how much current flows through a circuit with a given resistance and input voltage. But the student, who had never built a real, working circuit, was ready to believe that Martin’s discussion of Ohm’s Law was wrong because it conflicted with the shorthand rule he’d been taught about idealized transistors. A working EE would never make such a simple error.
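The conflict the student ran into can be made concrete with a small numerical sketch. It assumes a simple common-emitter stage with a resistive load, which the article does not specify; the function name, the component values, and the 0.2-volt saturation voltage are all illustrative assumptions. The shorthand rule holds only until the transistor saturates, at which point the supply voltage and load resistance cap the current.

```python
def collector_current(i_base, beta, v_supply, r_load, v_ce_sat=0.2):
    """Collector current in amperes for an assumed common-emitter stage."""
    i_ideal = beta * i_base                    # shorthand rule: Ic = gain * Ib
    i_limit = (v_supply - v_ce_sat) / r_load   # Ohm's Law ceiling set by the load
    return min(i_ideal, i_limit)               # the smaller of the two wins

# Hypothetical values: gain of 100, 5 V supply, 1 kilohm load.
small = collector_current(10e-6, 100, 5.0, 1000.0)  # about 1 mA, the rule holds
large = collector_current(1e-3, 100, 5.0, 1000.0)   # about 4.8 mA, not 100 mA
print(f"{small * 1e3:.1f} mA, {large * 1e3:.1f} mA")
```

With a small base current the gain rule gives the right answer. With a large one it predicts 100 milliamperes, but the load resistor cannot pass more than about 4.8 milliamperes from a 5-volt supply, so Ohm's Law sets the actual value.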

Bert Sutherland, who retired in 2000 as director of Sun Microsystems Laboratories after a career that also included stints managing researchers at Xerox Palo Alto Research Center, expresses another concern about where the increasing reliance on modeling and simulation may be taking engineers. Sure, Sutherland says, growing computer power makes it easier to model phenomena that are already easy to model, but “things that are difficult to model stay difficult.”

As the tools themselves become more complex, the temptation to avoid approaches that aren’t amenable to existing software may increase. Techniques such as asynchronous logic or adiabatic clock distribution (in which resonant circuits recapture much of the energy usually dissipated in sending clock pulses across a chip) offer significant improvements in performance or power consumption, for example, but the chips are much harder to analyze than ones in which the gates are synchronized and all of a clock network’s energy is dissipated to ground.

The same advances that led EEs to build more complex systems also allow smaller teams—maybe even a single engineer—to handle projects that in previous decades would have called for a hangar full of men with slide rules, pocket protectors, and narrow ties. This increase in productivity poses a conundrum, says Sutherland: you have to hope that the number of projects calling for engineering talent outpaces the rate at which EEs encapsulate and standardize their knowledge, making fewer of them necessary for any given project.

MIT’s Williams points to the long-term decline in U.S. students choosing engineering as a sign that young people do not see it as a secure, comfortable career [see sidebar, “Stay Current, Stay Lucky, Stay Employed”]. Between 1987 and 2001, the U.S. Department of Education reports, the number of electrical engineering bachelor’s degrees in the United States decreased by more than 45 percent.

With EE enrollment, employment, and subject matter all in upheaval, companies and educational institutions will have to make significant adjustments. Some of them may prosper beyond expectations; others will not survive.

Wulf is optimistic: he points to the rapid revamping of curricula shortly after World War II, when EEs built a science-based educational system that effectively reclaimed their field from the physicists, who had made so many key technological advances during the war. Wulf also thinks that knowing something about electrical engineering can benefit people in other disciplines. For example, he says, a civil engineer should know enough about digital design to be able to specify how a bucketload of radio-frequency-enabled strain gauges can be installed in a bridge to let the structure diagnose itself.

For the EEs who keep up with the pace of innovation, the ride ahead will be thrilling. Quantum-based cryptographic devices are already reaching market, and their computing progeny—which in theory could simultaneously calculate all possible answers to some questions—are inching into existence in laboratories around the globe. On the biology side, EEs like Tom Knight, senior research scientist at the MIT Artificial Intelligence Laboratory, are applying principles that worked for chip-design rules and the very large-scale integration (VLSI) revolution to create stripped-down microorganisms that could be bred to lay down patterns for ultrasmall circuits made of silicon, or whatever material comes next.

Indeed, biology will reshape electrical engineering in ways we can’t imagine. Neural networks, genetic programming, computer viruses—each of these took inspiration from biological phenomena, points out Kenneth R. Foster, a professor of bioengineering at the University of Pennsylvania, in Philadelphia.

“During the span of my own career, a new discipline, bioengineering, emerged from electrical engineering and other classical engineering fields and has taken off in the directions of tissue engineering, genetics, proteomics, and neuroscience,” he says. What Foster refers to as “the spectacular science in these fields” will reshape the way that electrical engineering is practiced. For example, EEs are borrowing techniques from the world of molecular biology to assemble structures that can be used as displays or switches, and to simulate neurons.

“We are using VLSI chips to simulate the action of neurons and other biological cells,” explains Foster’s colleague Kwabena Boahen, an associate professor in the Penn bioengineering department. Boahen points out that a basic analog circuit-simulation program such as SPICE can simulate neurons “just fine.”

More EEs are getting involved with neurobiology, Boahen notes, and biologists are happy to work with them. “The biologists determine what the inner workings of a neuron are,” he explains. “They tell what the pieces are, and I’ll design a circuit to mimic how these pieces work together.” Boahen himself is a prime example of an EE who migrated to biology. He holds bachelor’s and master’s degrees in electrical and computer engineering and a doctorate in computation and neural systems.

So in such a complex new industrial and educational ecology, how will we recognize EEs? By their stance, Riordan says: feet squarely planted on terra firma, but with a gaze out toward the horizon. Musing about why he became an EE, he cheerfully concedes that he didn’t crave pure intellectual exploration, as the best scientists do. Nor did he have whatever turn of brain it takes to make fine art. What he had then, and has now, is “the specific ability to deal with the real world as it exists and craft things from it.” Others with similar talents will find in electrical engineering a heady lifelong stimulus. Riordan concludes, “I can’t think of a more fortunate path than the one I followed.”

About the Author

PAUL WALLICH is a science writer who lives in Montpelier, Vt.

To Probe Further

Two histories of electrical engineering were published by the IEEE Press in 1984. One of the books, Engineers & Electrons, by John D. Ryder and Donald G. Fink, was a breezy overview aimed at a broad readership. The other, The Making of a Profession: A Century of Electrical Engineering in America, was a more scholarly volume written by A. Michal McMahon.

Rosalind H. Williams of the Massachusetts Institute of Technology incorporated personal reminiscences and family history into her book Retooling: A Historian Confronts Technological Change (MIT Press, 2002).