Facial recognition has already come a long way since U.S. Special Operations Forces used the technology to help identify Osama bin Laden after killing the Al-Qaeda leader in his Pakistani hideout in 2011. The U.S. Army Research Laboratory recently unveiled a dataset of faces designed to help train AI on identifying people even in the dark—a possible expansion of facial recognition capabilities that some experts warn could lead to expanded surveillance beyond the battlefield.

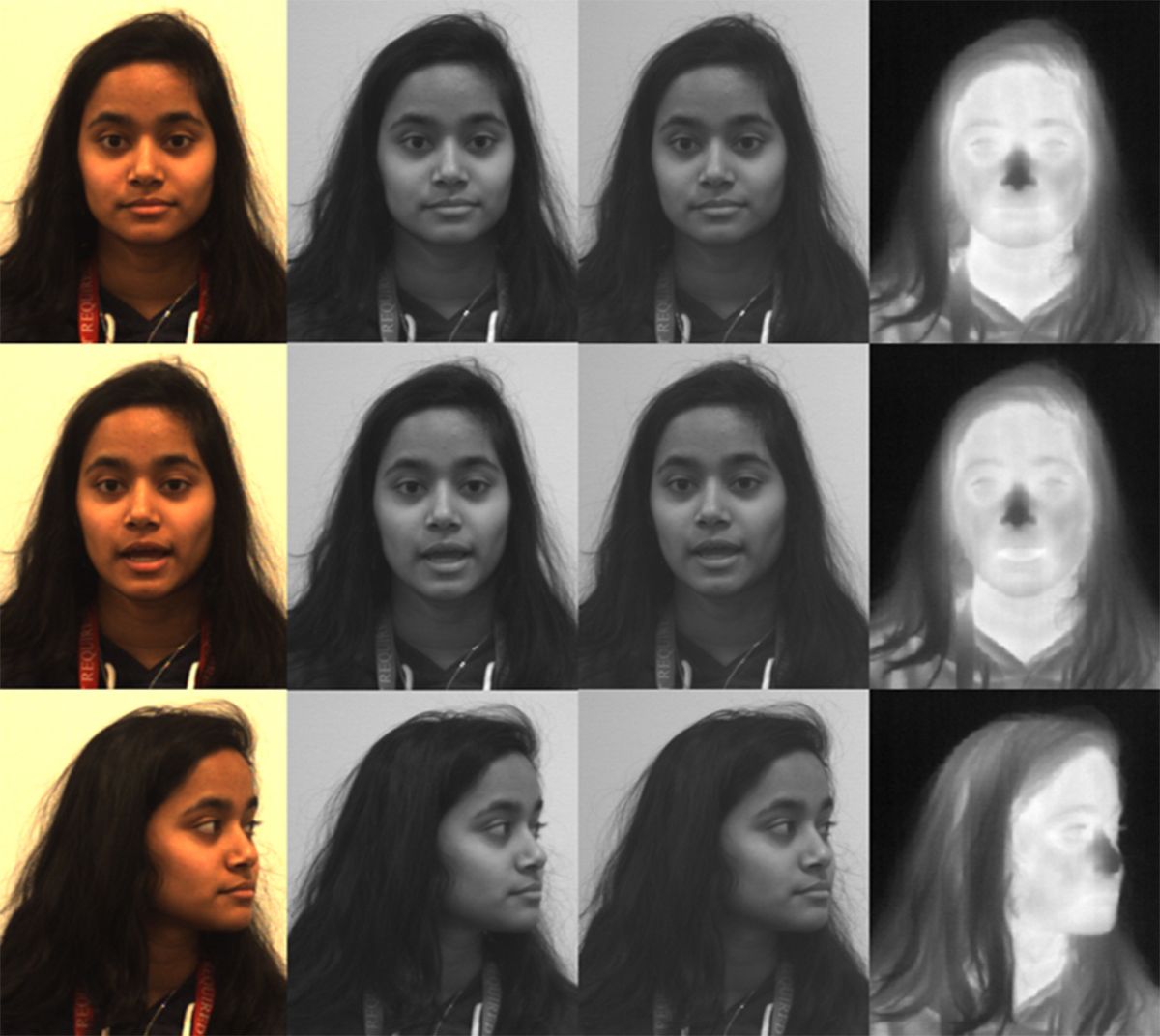

The Army Research Laboratory Visible and Thermal Face dataset contains 500,000 images from 395 people. Despite its modest size as far as facial recognition datasets go, it is one of the largest and most comprehensive datasets to include matching images of people’s faces taken both under ordinary visible-light conditions and with heat-sensing thermal cameras in low-light conditions.

“Our motivation for this dataset was we wanted to develop a nighttime and low-light face recognition capability for these unconstrained or difficult lighting settings,” says Matthew Thielke, a physicist at the Army Research Laboratory in Adelphi, Maryland.

Facial recognition applications for nighttime or low-light conditions are still not mature enough for deployment, according to the Army Research Laboratory team. Early benchmark testing with the dataset showed that facial recognition algorithms struggled either to identify key facial features or to identify unique individual faces in thermal camera images, especially when the normal details visible in faces are reduced to blobby heat patterns. The dataset is described in a paper (PDF) presented at the 2021 IEEE Winter Conference on Applications of Computer Vision, held 5–9 January.

The algorithms also struggled with “off-pose” images in which the person’s face is angled 20 degrees or more away from center. And they had problems matching the visible-light images of individual faces with their thermal imagery counterparts when the person was wearing glasses in one of the images.

But several independent experts familiar with facial recognition technology warned against taking false comfort in the technology’s current struggles to identify faces in the dark. After all, well-documented problems of racial bias, gender bias, and other accuracy issues with facial recognition have not stopped companies and law enforcement agencies from deploying the technology. The broad availability of such a dataset for training facial recognition algorithms to work better in low-light conditions, and not just in military scenarios, could also contribute to the broader surveillance creep of technologies designed to monitor individuals.

“It's another of those steppingstones on the way to removing the ability for us to be anonymous at all,” says Benjamin Boudreaux, a policy researcher working on ethics, emerging technology, and international security at the RAND Corporation in Santa Monica, Calif. “We can’t even, if you will, hide in the dark anymore.”

The development of the dataset is related to ongoing work at the Army Research Laboratory aimed at developing automatic facial recognition that can work with the thermal cameras already deployed by military aircraft, drones, ground vehicles, watchtowers, and checkpoints. U.S. Army soldiers and other military personnel may also sometimes use body-worn thermal cameras that might someday be coupled with facial recognition.

“The intention is not for any of these low-light facial recognition technologies to be used on U.S. citizens inside the continental United States, because that is outside the general purview of the U.S. military,” says Shuowen “Sean” Hu, an electronics engineer at the Army Research Laboratory.

Notably, the dataset development was sponsored by the Defense Forensics & Biometrics Agency, which manages a U.S. military database filled with images of faces, fingerprints, DNA, and other biometric information from millions of individuals in countries such as Afghanistan and Iraq, according to documents obtained by OneZero. The agency has also issued contracts to several U.S. companies to develop facial recognition technology that works in the dark—efforts that might benefit from the new training dataset.

Some Americans may have a casual attitude toward the idea of the U.S. military deploying facial recognition outside the United States. In 2017, Boudreaux helped conduct a RAND Corporation survey (PDF) in which 62 percent of American respondents said it was “ethically permissible” for a robot to use facial recognition at a military checkpoint “to identify and subdue enemy combatants.” (Survey participants skewed more white and male than the overall U.S. population.)

But Americans ought not feel complacent about such technology remaining limited to overseas military deployment, says Liz O’Sullivan, technology director for the Surveillance Technology Oversight Project in New York City. She points out that Stingrays, the cell phone tracking devices originally developed for the U.S. military and intelligence agencies, have now become common tools in the hands of U.S. law enforcement agencies.

Even if the U.S. military version of facial recognition technology does not find its way home, the dataset could help train similar facial recognition algorithms for companies that have commercial uses in mind for the U.S. market. The Army Research Laboratory has stated a willingness to share the dataset with academia, the private sector, or other government agencies, if outside researchers can show that they are engaged in “valid scientific research” and sign a data-sharing agreement that prevents them from casually uploading and spreading the images around.

“It starts with military funding and research, and then it just kind of very quickly proliferates through all the commercial applications a lot faster than it ever used to,” O’Sullivan says. She added that “if you think that this technology is not going to end up in a stadium or in a school, then you just haven't been paying attention to history.”

The United States currently has no federal data privacy law restricting the use of facial recognition, despite a series of bipartisan bills aimed at limiting its use by federal law enforcement. Instead, there is a patchwork of local and state laws covering data privacy, such as the California Consumer Privacy Act, or bans on official government use of facial recognition in cities such as Boston, San Francisco, and Oakland.

On the military side, the U.S. Department of Defense did formally issue what it described as “five principles for the ethical development of artificial intelligence capabilities” in February 2020. It’s still unclear how the principles would be put into practice—the Army Research Laboratory dataset collection predated the announcement—but their existence indicates the U.S. military’s eagerness to plant its flag in having an “ethical and principled” approach, says Ainikki Riikonen, a research assistant at the Technology and National Security Program at the Center for a New American Security in Washington, D.C.

In fact, Riikonen suggested that the U.S. military may be better positioned as an organization to ethically deploy facial recognition and other AI technologies than are local U.S. police departments that may take a more haphazard approach.

“The thing that would give me the confidence is that the Department of Defense has the resources and the interest to develop guidelines, and I think maybe a lot of local law enforcement departments don't,” Riikonen says. “Even from an institutional and process standpoint [DoD is] probably better positioned to try to make some functional and principled and less accidental use of this.”

To their credit, the Army Research Laboratory team and its university research partners collected the images for their dataset from adult volunteers under a careful informed consent process, with oversight from an Institutional Review Board, the body responsible for monitoring research involving human subjects. That contrasts with the more controversial practices of research groups or companies such as Clearview AI, which have scraped social media websites and the public internet to collect images of faces.

Still, O’Sullivan noted that the paper describing the new dataset lacked any discussion of the well-known facial recognition biases involving race, gender, and age that have led to real-world performance problems. And Boudreaux questioned whether the new dataset’s collection of faces would accurately reflect the populations in those parts of the world where the U.S. military intends to someday deploy the combination of facial recognition and thermal cameras. Any related performance issues that lead to cases of mistaken identity could have high-stakes, even deadly, consequences in a military scenario.

The Army Research Laboratory researchers declined to publicize demographic statistics from the dataset, in part because they had decided from the outset to avoid making demographics a major focus of the study. But they described expanding the diversity of such datasets as a goal for future research.

Separately, they also acknowledged the limitations of a dataset collected under controlled settings with people sitting just a few meters from the camera—a vastly different scenario from future real-world deployment at military checkpoints or in the field. But they view the dataset as an early step toward helping the research community extend facial recognition into the thermal imagery domain. And they hope future datasets could include images of faces captured by thermal imagery at longer ranges and in less laboratory-like conditions.

“In the future, once this technology is mature enough, we want the distance to be much longer,” Hu says. “Ideally tens of meters, or 100 or 200 meters.”

A long-distance facial recognition technology that also works in the dark would undoubtedly prove incredibly useful for the U.S. military and other militaries. But any perfected version of such technology in the future could also open the door to what experts see as a disturbing erosion of individual privacy and ultimately liberty—a phenomenon already evident in how certain minority populations have been specifically targeted for government surveillance in countries around the world.

Even an imperfect version of such facial recognition coupled with other biometric surveillance methods could shrink the space of surveillance-free movement and activity for individuals. And as higher-end thermal cameras become more affordable for customers beyond the U.S. military in the coming decade, more companies and law enforcement agencies could someday add facial recognition in the dark to their surveillance capabilities.

“As much as we feel comfortable that facial recognition has the problems it does today, if we were to grab three or four different unchangeable and readable-at-a-distance traits to combine them, that would be a pretty inescapable panopticon,” O’Sullivan says. “And it would be a really distressing moment for civil liberties around the world.”

Editor’s Note: The original article incorrectly stated that the Army Research Laboratory is based in White Oak, Maryland. It is located in Adelphi, Maryland.

Jeremy Hsu has been working as a science and technology journalist in New York City since 2008. He has written on subjects as diverse as supercomputing and wearable electronics for IEEE Spectrum. When he’s not trying to wrap his head around the latest quantum computing news for Spectrum, he also contributes to a variety of publications such as Scientific American, Discover, Popular Science, and others. He is a graduate of New York University’s Science, Health & Environmental Reporting Program.