Amiga: The Computer That Wouldn’t Die

Having outlived a number of its corporate owners, and spurred on by its passionate users, the Amiga computer is going back to market again—but this time, in very different forms

In April 2000, a new computer architecture was introduced as the multimedia platform for the coming decade, a seamless link between television, DVD, video games, cellular phones, and the Internet. It could display your favorite movie on your big-screen TV, look up information about the actors, cue up selections from the sound track on your CD player, and send you e-mail confirming purchase of tickets for the sequel. Patented user-interface software could overlay computer information unobtrusively on your video screen or vice versa, popping up just the bits you needed to see. If all goes well, its backers expect it to be in millions of homes within a few years. It is called the Amiga.

Revolutionary new technology? You can call it this only if you consider something still new when its roots go back more than 20 years. Since it was first conceived, the Amiga has had five corporate owners and has helped bankrupt two major manufacturers. Yet hundreds of thousands of hardware aficionados and software developers swear by it. And now its glamor has seduced yet another group of engineers and programmers into putting their efforts behind the Amiga name.

According to Bill McEwen, president of Amiga Inc. in Snoqualmie, Wash., the same multimedia vision that made the Amiga so talked about when it was introduced in 1985—and has sustained it through 15 lean years—still gives it the name recognition and user base to make a relaunch worthwhile.

At a time when most other computers had monochrome displays, the original machine was designed to render images and animation along with high-quality sound and speech, thanks to custom hardware that also let it interact smoothly with television signals. In its latest incarnation, however, essentially all the original custom circuits are gone. Instead, the company is pinning its hopes on innovative virtual-machine software that lets almost any model of central-processing unit (CPU) emulate an idealized microprocessor whose architecture is optimized for compact code and easy compilation of programs. (Sun Microsystems Inc.’s Java language relies on a similar virtual-machine concept, albeit one that executes more complex instructions.)

Developed by the Tao Group in Earley, Reading, England, this software base will equip the Amiga to appear in a host of different incarnations: cellular phones, television set-top boxes (which control the signals coming into a TV set from a satellite dish, cable system, or the Internet), video and sound equipment, home-control systems, and, oh, yes, next-generation PCs [see photo].

Fleecy Moss, Amiga's vice president of development, argues that—thanks to a 15-year-old community of people accustomed to using the Amiga to work with sound, video, and digital information simultaneously—Amiga is perfectly positioned to become a central component in the emerging so-called digital content universe, in which all sources of digital information must operate smoothly together. And the loosely organized but fanatical group of hobbyists, programmers, and hardware engineers who have kept the machine alive in the nearly 10 years since serious corporate development ceased are just the kind of folks you might want as developers and evangelists.

Cult of the Amiga

The amount of information available about the Amiga is staggering. More than 50 000 Web pages deal with it at more than 200 sites. You can find archived interviews with Amiga’s original designers, schematics of its innards going back a dozen years, and, of course, gigabytes of code and images to download. Amigans extol the virtues of the “classic” machine—many still use their 10- or even 15-year-old computers for graphics, word processing, e-mail, and Web browsing—or argue about whether hardware error-checking leads to badly written software. Some of them offer to buy 10 copies each of any new software introduced either by Amiga or by the handful of third-party developers still in business, just to ensure the viability of the market.

Why so much loyalty? Part of it is natural selection: everyone less fiercely devoted to the machine has long since switched to a Macintosh or a PC. But even now, for some purposes, the original Amiga’s capabilities are hard to duplicate without hardware costing thousands of dollars more. From the day it was introduced in 1985, high-quality animated graphics and easy manipulation of video signals were part of the Amiga’s repertoire, and those features continue to find work for it. The custom video circuitry could manipulate images anywhere from two to 40 times as fast as other personal computers of the time. In addition, the Amiga’s combination of a graphical, menu-based user interface with command-line scripting and a multitasking operating system was an advantage that put the company almost 10 years ahead of its competition.

The inability of the Amiga’s various owners to deliver product upgrades over the years has also helped forge a cohesive community. Small companies supply everything from memory expansion boards to hard disks to operating-system (OS) upgrades and new CPU boards. (The new Amiga Inc. has already learned to make use of such independent developers, delegating new versions of the classic operating system to Haage & Partner in Glashuetten, Germany, and hardware production for its first models to Eyetech Group in Stokesley, North Yorkshire, England.)

Fervent loyalty is, of course, two-edged: responding to the comments, queries, and demands of thousands of fans ready to second-guess every technical and financial decision—or even take over development if necessary—could be a small start-up’s worst nightmare. Observers differ on just how many members of the Amiga classic community will make the leap to the new software-based digital universe.

On the other hand, the history of the Amiga from its inception has been one of changing design goals and survival against the odds. In response to its designers’ ambitions and a changing marketplace, it evolved from a video game console into a home computer before it even reached the prototype stage. Almost all the original machine’s internal circuitry and software bear the marks of repeated erasures and rewriting.

The ultimate video game

The Amiga began its gestation not as a PC but as the next home video game, intended to follow the Atari VCS and 5200. Those machines, designed in the late 1970s, were based on the same MOS Technology 6502 microprocessor family that powered the Apple II, and on a series of custom chips mainly designed by Atari Inc. engineer Jay Miner. In 1979, Miner, programmer Larry Kaplan, and a few other employees told their bosses at Atari that they wanted to build a game console based on the Motorola MC68000, a faster and more powerful microprocessor that later became the heart of the Apple Macintosh.

“We said, ‘Give us $5 million and we’ll give you the next machine,’” Kaplan told this writer back in 1982. But instead of a green light, the veterans who had helped make Atari a multibillion-dollar company got endless requests for a detailed business plan. So, in tried-and-true Silicon Valley fashion, they resigned.

Kaplan became one of the founders of game-software giant Activision, and Miner went to Zymos, an early manufacturer of semicustom ICs. Meanwhile, on the side, Kaplan found US $7 million in start-up funds to build that next machine they had dreamed of. By 1982, Miner also began moonlighting at Hi-Toro, as the company was originally called, sketching out the architecture for a computer code-named Lorraine.

His original architecture included the key feature that made the Amiga such a ground-breaking home computer: a clock synchronized to a National Television System Committee (NTSC) video signal. At the time, this synchronization allowed the Amiga to outperform the graphics capabilities of much more expensive machines. It let the Amiga hardware track exactly what part of the video screen was being drawn at any given moment. The machine could thus display and manipulate images much more efficiently than less well-synchronized competitors. In fact, in some applications, it could do the job of broadcasting equipment that cost $50 000 or more.

The clock feature also meant that, whereas other machines had to create an entire video frame before displaying it—and hold at least two frames in memory at a time to do animation—the Amiga could generate images on the fly, even as a monitor’s electron beam was whizzing across the screen. This was no small advantage in days of expensive memory chips, when a few full-color images could fill a computer’s entire memory: the Amiga could create complex animations in a fraction of the RAM needed by its competitors.

Miner also devised a full-fledged display coprocessor, nicknamed Copper, a unit that could read a stream of instructions and load new values into registers that controlled graphics, sound, and other functions [see figure]. It removed almost the entire burden of display manipulations from the 68000, leaving the CPU free to multitask between programs.
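As a rough illustration of that idea, the Python sketch below models a display coprocessor as a loop that walks an instruction list and loads values into a register table as the beam reaches each scan line. The two-instruction set (WAIT and MOVE), the register name, and the tuple encoding are assumptions made for this sketch; they are not the actual Copper instruction format.

```python
# Toy model of a display coprocessor: it walks an instruction list once per
# frame and loads register values as the beam reaches each scan line.
# The instruction encoding and the register name are hypothetical.

def run_copper_list(copper_list, total_lines):
    """Return a snapshot of the register table for each scan line of a frame."""
    registers = {}
    per_line_state = []
    pc = 0  # index of the next instruction to execute
    for line in range(total_lines):
        # Execute instructions until one asks to wait for a later line.
        while pc < len(copper_list):
            op = copper_list[pc]
            if op[0] == "WAIT" and op[1] > line:
                break  # the beam has not reached the requested line yet
            if op[0] == "MOVE":
                _, register, value = op
                registers[register] = value
            pc += 1
        per_line_state.append(dict(registers))
    return per_line_state


# Change the background color halfway down the screen without CPU involvement.
copper_list = [
    ("MOVE", "BACKGROUND", 0x000),
    ("WAIT", 100),
    ("MOVE", "BACKGROUND", 0x00F),
]
frame = run_copper_list(copper_list, total_lines=200)
print(hex(frame[0]["BACKGROUND"]), hex(frame[150]["BACKGROUND"]))
```

In this model the main processor only has to build the list once; the per-line register changes then happen on their own, which is the burden the article says was lifted from the 68000.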

That notion seems mundane in today’s world of video boards with their multiple custom processors and megabytes of private memory. But at the time, most PCs relied on the CPU to do all the work—one reason that multitasking was considered impractical. Multitasking usually means a processor switching back and forth between different applications, but back then, a CPU had so much work to do taking care of display and other housekeeping that running multiple programs at the same time was out of the question.

Even more impressive was the circuitry under Copper’s control. As befitted a game machine, Lorraine could position eight sprites anywhere on the screen. Sprites were independently defined, movable objects used to display players and opponents in computer games, cursors in graphics programs, or whatever else came to a programmer’s mind. Lorraine’s were 16 pixels wide, any height, and 2 bits deep so that they could be rendered in three colors (a pixel whose bits were 00 was transparent; 01, 10, and 11 were the other possibilities). Sprites could also be linked in pairs to create 15-color (4-bit) images.
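The sprite format described above can be sketched in a few lines of Python. The sketch assumes each 16-pixel row is stored as two 16-bit words, one holding the low bit of every pixel and one holding the high bit; that storage layout, and the function names, are assumptions for illustration only.

```python
# Decode one 16-pixel sprite row in which every pixel is 2 bits deep.
# Storing the row as a low-bit word plus a high-bit word is an assumption.

def decode_sprite_row(low_word, high_word):
    """Return 16 pixel values (0-3), leftmost first; 0 means transparent."""
    pixels = []
    for bit in range(15, -1, -1):  # bit 15 is treated as the leftmost pixel
        low = (low_word >> bit) & 1
        high = (high_word >> bit) & 1
        pixels.append((high << 1) | low)
    return pixels


def decode_linked_row(row_a, row_b):
    """Combine two 2-bit rows into one 4-bit row (0 transparent, 1-15 colors)."""
    return [(b << 2) | a for a, b in zip(row_a, row_b)]


row = decode_sprite_row(0b1010000000000000, 0b1100000000000000)
print(row[:4])
```

Because the value 0 is reserved for transparency, a single sprite has three visible colors and a linked pair has fifteen, matching the counts given in the text.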

“Behind” the sprites was a series of background bit-planes—areas in memory that contained data for each pixel on the screen. One plane could describe images with two colors; two planes could link together for a four-color image, and so on—up to six planes and 64 colors. Colors on the Amiga, like those on most PCs of the succeeding decade, were controlled by a look-up table. Each of the 16 colors in a 4-bit-deep display, for example, could be chosen from a palette of 4096. This compared to a palette of at most 16 colors on other PCs of the time. (Today, such palettes, or color look-up tables, are still used for images with limited color ranges. In images with thousands or millions of colors, each pixel’s color is specified directly in terms of red, green, and blue [RGB] values.)
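To make the bit-plane arithmetic concrete, here is a minimal Python sketch of how one pixel's color could be found: one bit is gathered from each plane to form an index, and the index selects an entry in the look-up table. Representing each plane's scan line as a single integer, and the function names, are assumptions of this sketch rather than details of the Amiga's memory layout.

```python
# Gather one bit per plane into a palette index, then look up the color.
# Each plane's scan line is modeled as one integer (an assumption), with the
# most significant bit holding the leftmost pixel.

def color_index(planes, x, width):
    """Combine bit x of every plane; plane 0 supplies the lowest bit."""
    shift = width - 1 - x
    index = 0
    for plane_number, plane_row in enumerate(planes):
        index |= ((plane_row >> shift) & 1) << plane_number
    return index


def pixel_color(planes, palette, x, width):
    """Return the 12-bit palette entry (one of 4096 values) for pixel x."""
    return palette[color_index(planes, x, width)]


# Four planes give 2**4 = 16 palette entries; six planes would give 64.
planes = [0b10000000, 0b11000000, 0b00000000, 0b01000000]
palette = [0x000] * 16
palette[3] = 0xF00
palette[10] = 0x0F0
print(color_index(planes, 0, 8), color_index(planes, 1, 8))
print(hex(pixel_color(planes, palette, 1, 8)))
```

Changing a palette entry recolors every pixel that uses that index without touching the planes themselves, which is why look-up tables suited machines with little memory.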

The original Amiga had 4 bits each for hue (H), saturation (S), and luminance (L)—a color representation that mapped elegantly to the NTSC video signal. To make the best use of this representation, Miner developed a special hold-and-modify mode, in which data would tell the video output chip how to alter the H, S, or L values from the previous pixel on the screen; it could display subtly shaded images with remarkable realism.
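The hold-and-modify idea can be sketched as follows. The article does not give the pixel encoding, so the layout used here (a 2-bit control field above a 4-bit data field, with control 0 loading a palette entry) is purely an assumption for illustration, as are the function and constant names.

```python
# Hold-and-modify sketch: each pixel either loads a palette color or keeps
# the previous pixel's color and replaces one 4-bit component of it.
# The 2-bit control / 4-bit data layout is a hypothetical encoding.

LOAD_PALETTE, MODIFY_H, MODIFY_S, MODIFY_L = 0, 1, 2, 3


def decode_ham_line(codes, palette, start=(0, 0, 0)):
    """Expand 6-bit codes into a list of (hue, saturation, luminance) triples."""
    previous = start
    output = []
    for code in codes:
        control = (code >> 4) & 0b11
        data = code & 0b1111
        h, s, l = previous
        if control == LOAD_PALETTE:
            h, s, l = palette[data]
        elif control == MODIFY_H:
            h = data
        elif control == MODIFY_S:
            s = data
        else:  # MODIFY_L
            l = data
        previous = (h, s, l)
        output.append(previous)
    return output


palette = [(i, i, i) for i in range(16)]
codes = [
    (LOAD_PALETTE << 4) | 5,  # start from palette entry 5
    (MODIFY_L << 4) | 6,      # same hue and saturation, slightly brighter
    (MODIFY_L << 4) | 7,      # brighter again
]
print(decode_ham_line(codes, palette))
```

Stepping only the luminance from pixel to pixel, as in the example, is the kind of smooth shading the mode was meant to produce.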

Blitter component

To complement Copper, which told the video circuits where to place image data on the screen and what colors to paint it, the designers built a blitter, a feature previously seen only in high-end systems such as flight simulators. Named for the bit-block-transfer operation first explored by computer scientists at Xerox PARC, the blitter could take data from any two regions of memory, perform shifts and logical operations on it, and slap the result into a destination region in memory (whence it could be rendered on the screen). Sequences of block transfers can perform essentially any graphical operation, from simple masking to image rotation. (Indeed, Miner told this writer in 1986, after the machine had been launched, that an enterprising hacker had figured out how to turn the Amiga into a small array processor for number-crunching by using multiple blitter sequences to do arithmetic.) Modern graphics boards still use essentially similar circuitry.
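A block transfer of the kind described above might be sketched like this in Python: words from two source regions are shifted, combined with a logical operation, and written to a destination region. Memory is modeled as a list of 16-bit words, and the shift is applied within each word only; both are simplifications assumed for this sketch, and the function signature is hypothetical.

```python
import operator

# Simplified block transfer: combine two source regions into a destination.
# Memory is a list of 16-bit words. The shift acts on each word separately,
# which is a simplification (bits are not carried between adjacent words).


def blit(memory, src_a, src_b, dest, count, shift_a=0, op=operator.and_):
    """Write op(A >> shift_a, B) for `count` words starting at `dest`."""
    for i in range(count):
        a = (memory[src_a + i] >> shift_a) & 0xFFFF
        b = memory[src_b + i]
        memory[dest + i] = op(a, b) & 0xFFFF


memory = [0xFF00, 0x0F0F, 0x00FF, 0xFFFF, 0x0000, 0x0000]
blit(memory, src_a=0, src_b=2, dest=4, count=2)  # mask region A with region B
print([hex(word) for word in memory[4:]])
```

Passing a different `op`, such as `operator.or_` or `operator.xor`, turns the same loop into a merge or an invert, which is why chains of such transfers can build up more elaborate graphical operations.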

From concept to reality

While the essence of the Amiga’s design—the NTSC-synchronous clock and coprocessor circuitry—was elegant, turning it into a working high-volume product was another matter. The fledgling Amiga Computer Inc. had neither the money for computer-aided engineering workstations to turn logic diagrams into chip layouts nor the time to spend learning how to use them, recalls chip designer Glenn Keller. So the chip layout went forward on huge sheets of Mylar marked up with yellow highlighters.

Keller created hardware emulations of the three custom chips, code-named Portia, Agnus, and Daphne, using high-speed CMOS TTL (complementary metal-oxide-semiconductor transistor-transistor logic). The prototype relied on 19 circuit boards containing nearly 3000 socketed chips wired to create a gate-for-gate mockup. Thanks to precisely calculated wiring that distributed clock signals to all the chips in synchrony, the boards ran at the same 3.58-MHz speed planned for the silicon, so that crucial video and user-interface code could be tested more than a year before chips were ready. Former Amiga engineer David Needle recalls his awe of Keller’s design: “He had the strength of his convictions in the physics to make the boards work.”

The prototype was completed shortly before the January 1984 Consumer Electronics Show, and Amiga engineers took their creation to Las Vegas to raise money. They hid the prototype boards and CPU underneath a table, and put the keyboard, mouse, and monitor on top.

“They all kept looking for the ‘real’ computer,” said former Amiga programmer R. J. Mical—no one could believe that a 68000-based machine could generate the images and animation the Amiga did. (Keller, meanwhile, remembers nervously watching the shimmer of heated air rising from his wire-wrapped circuit boards under the table as more than 300 W of power coursed through them without a cooling fan.)

Almost everyone who saw what the Amiga prototype could do was entranced, but were they willing to pony up $30 million to put the machine into production before the company’s money ran out? The company’s first offer came from Atari: a one-month, million-dollar bridge loan with the custom chips as security. Amiga took the money and spent it. The month passed, and a forced buyout appeared inevitable. But a few days before the deadline for repaying the loan, an unlikely rescuer appeared: Atari’s arch-rival Commodore, or more accurately, Commodore International Ltd. Offering $4 million, more than four times Atari’s offer, Commodore snatched Amiga from Atari’s grasp. The match seemed perfect: Commodore, which was already in the business of making home computers, had its own chip-fabrication line and excellent overseas manufacturing contacts.

Too many tradeoffs?

Soon engineers and marketers were embroiled in the usual arguments about which features of the machine would have to go to cut costs. Perhaps the most important decision was how much RAM the machine would contain and how it would be wired.

Every kilobyte of additional memory meant more and better images, but the choice between 256KB and 512KB of RAM translated to $150 or more in the retail price. The business staff at Commodore, which was trying to keep the retail price below $1000, considered this increase unacceptable. Even more expensive was the proposed expansion bus that would accept add-on cards for extra memory, a hard-disk drive, and other extras.

What’s more, the Amiga’s direct-memory-access-heavy design (with Copper, blitter, sprites, and sound circuits all making direct memory accesses to fetch their data) didn’t leave a lot of time for the MC68000. Each television scan line lasted for 228 memory cycles, and the screen display alone could consume up to 180 of them. With Copper and the blitter running, there was no time left for the main CPU to perform useful work, such as running programs. Amiga’s engineers had designed an unusual but effective solution: a second bus accessible to the MC68000 alone. Programs and data in “fast” memory attached to this bus could be accessed at full speed. (Today, in contrast, the CPU typically controls a computer’s RAM, and many graphics cards must either contain their own memory or else wait their turn.)
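The cycle budget in the paragraph above is easy to work through. The short Python sketch below uses the two figures from the text (228 memory cycles per scan line, up to 180 taken by the display); the 48-cycle figure for Copper and blitter traffic in the second call is a hypothetical load chosen only to show the budget reaching zero.

```python
# Per-scan-line memory budget on the shared bus, using the article's figures.
CYCLES_PER_SCAN_LINE = 228
MAX_DISPLAY_CYCLES = 180


def cpu_cycles_left(display_cycles, other_dma_cycles=0):
    """Cycles per scan line still free for the CPU on the shared bus."""
    remaining = CYCLES_PER_SCAN_LINE - display_cycles - other_dma_cycles
    return max(0, remaining)


print(cpu_cycles_left(MAX_DISPLAY_CYCLES))      # display alone
print(cpu_cycles_left(MAX_DISPLAY_CYCLES, 48))  # plus hypothetical Copper/blitter load
```

With the display at its maximum, about a fifth of each scan line remains for everything else, which is the squeeze the separate fast-memory bus was meant to relieve.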

But a bus and expansion connectors meant a redesigned, larger case for the computer. In the end, the fast-memory bus dwindled to a row of contacts on the edge of the main circuit board, waiting for someone else to build cards that could connect to them.

Meanwhile, to make the Amiga credible as an office computer, Commodore engineers had reworked the video chip to increase resolution from 320 by 400 to 640 by 400 pixels. In the process, they threw out the hue-saturation-luminance color representation and switched to RGB (which eventually became the industry standard for color displays). Changing only the red, green, or blue part of an RGB value does not usually yield subtle shadings, so Miner’s carefully thought-out hold-and-modify mode became mostly useless. The circuitry stayed in only because it would have taken too long to lay out the video chip yet again.

Cowboy coding

Strategic decisions also had to be made on software. Commodore had never been very strong on the software side: the company chose to ship its best-selling C-64 home computer, for example, with only a modest BASIC interpreter to run programs that users typed in. But even Commodore recognized that that approach would not suffice for the Amiga.

At the very least, the new machine would require a library of routines to communicate with the custom chips. The designers also had to provide a kernel of multitasking software so that the machine could display moving graphics and play sounds while reading data from the disk drive, mouse, and keyboard. Commodore software engineer Carl Sassenrath wrote this kernel in the form of Exec libraries, which scheduled the CPU’s low-level tasks and communicated directly with the hardware. Amiga had hired another company to write the rest of the operating system: a file system for the floppy disk drive, routines for communicating with printers and modems, and higher-level structures for programs to interact with one another. But development stalled.

Commodore then turned to a British company, Metacomco, in Bristol, whose Tripos operating system was well-suited for a machine with 256KB of RAM and a single floppy-disk drive. Metacomco finished its assignment quickly, but at a price. Tripos was largely incompatible with programs written in the C language, standard on Amiga’s side of the Atlantic. Every time a C program (such as a word-processing, spreadsheet, database, or graphics application) made use of operating-system functions, such as reading or writing a file, recalls longtime Amiga developer Olaf Barthel, the programmer had to write specially contorted code to translate between the two. This roadblock hindered developers for years.

Meanwhile, Amiga programmer Mical was working alone on Intuition, the routines that provided windows, buttons, dialog boxes, multiple screens, and event handling, along with all the other niceties of a graphical user interface.

“Get out of my way,” he told his bosses, and finished the Amiga’s public face in only seven months, barely interacting with the other engineers at all.

Mical remembers many parts of Intuition fondly; he is particularly proud of the event system, which lets programs communicate with the user and with one another. His design was very flexible, so that software could easily be installed to handle new input devices (such as digitizing tablets), and any program that used the event system could have its functions controlled by automated scripts. This capability is something that Microsoft and Apple have yet to perfect.

But “cowboy software development,” as Mical called it, also had its downside. Independent code reviews—had there been time for them—would have improved the design substantially, Mical now says. In particular, he regrets his “shabby” error-handling. Users were to become far too familiar with the Guru Meditation image that the Amiga put on the screen when it crashed.

Indeed, as the machine moved toward production—it had to be ready in time for the Christmas 1985 buying season—both software and hardware were still in flux. Running out of time, the engineers took a drastic step. They threw away most of their savings from reducing the Amiga’s main memory and announced that the Amiga would have a writable control store. Where almost every other computer before or since has had a ROM containing the fundamental routines to make the machine operate, the Amiga had RAM: it read all but a few hundred bytes of its operating software from floppy disk. Eliminating the turnaround time for fabricating fixed ROM chips and installing them in each computer on the assembly line bought the team crucial months of software development and vastly aided later bug fixes.

The marketplace fiddles

After all the maneuvering and false starts, the Amiga finally appeared in July 1985. At a gala introduction at Lincoln Center in New York City, artist Andy Warhol demonstrated the paint software while the Amiga’s programmers in the audience desperately hoped he wouldn’t select any unimplemented features. Reviewers were impressed with the machine’s performance, but at $1200, the Amiga was perhaps four times the price of a comparably equipped (although much less powerful) C-64. It was perhaps two-thirds the price of a comparable PC or Macintosh.

Game and other application development lagged; Barthel recalls that many programmers who were used to “bare silicon”—manipulating hardware directly—were uncertain how to employ all the software features the Amiga offered.

By the late 1980s, software developers had gotten the hang of the new machine. It became a prime game platform and also a vehicle for thousands of shareware programs. But the mainstream of computing had largely passed the Amiga by. In the early 1990s, office PCs offered monitor resolutions up to 1024 by 768 and displays capable of 65 000 or even 16 million colors, far above the Amiga’s 4096. And on the home side, game consoles offered the same resolution as Amiga—and better animation—for a lower price. Aficionados hung on because they had grown dependent on Amiga-only software, because the Amiga’s scripting let them customize their applications so easily, or because they still had hope for new, improved models.

As Commodore engineers worked on an advanced chip set that would support more colors and higher resolution, the company began running into financial trouble for reasons unconnected with Amiga. In late 1992, Commodore introduced faster Amigas that could display 256 colors simultaneously out of a palette of 16 million, and images of up to 800 by 600 pixels. But still Commodore’s losses mounted.

Passing the baton

In April 1994, the bankrupt Commodore closed its doors. A year later German electronics conglomerate Escom announced that it would sell new Amiga models by autumn. Instead it managed to ship only renamed versions of existing inventory before shutting down for unrelated reasons in mid-1996. Then VISCorp of Chicago briefly took on the Amiga before it, too, ran into money troubles and dropped out of the picture. Although the Amiga had a loyal user community, even software developers independently upgrading the operating system, it was an orphan.

In 1997, Gateway acquired what was left of Amiga from VISCorp, initially for access to Amiga’s user-interface software patents. According to former Gateway/Amiga engineers, the company flirted with the idea of building new hardware for hundreds of thousands of diehard fans. But ultimately Gateway decided to stick to its Windows/Intel roots and disband its Amiga operation.

So in January 2000, a dozen or so former Gateway/Amiga employees, long-time Amiga software developers, and veterans of other Amiga incarnations formed Amiga Inc. and announced their intention to carry the tattered multimedia banner forward. The company decided to leave hardware to third-party developers and focus its efforts entirely on software for delivering digital content of every kind—still images, text, sound, video, games, and other applications.

Indeed, the new Amiga even left its underlying software foundation—the virtual-machine layer—to another company, Tao. Fittingly, Chris Hinsley, the founder of Tao Group, got his start programming Amiga games. In fact, Hinsley says, he began developing machine-independent programming systems largely because of his frustration in writing games for the Amiga and then having to rewrite them for Ataris and PCs.

Tao’s software provides programmers with a virtual CPU so that the same code can execute on many different CPUs, including x86, PowerPC, ARM and StrongARM, MIPS, and half a dozen others. The underlying virtual machine that translates programs to snippets of native code, said Hinsley, occupies only about 70KB. Amiga has ported the latest version of its classic OS to Tao’s system, said Amiga’s McEwen, so that many existing programs can be moved to the new architecture with minimal disruption, even though the CPU, display hardware, disks, and network interfaces are completely different.

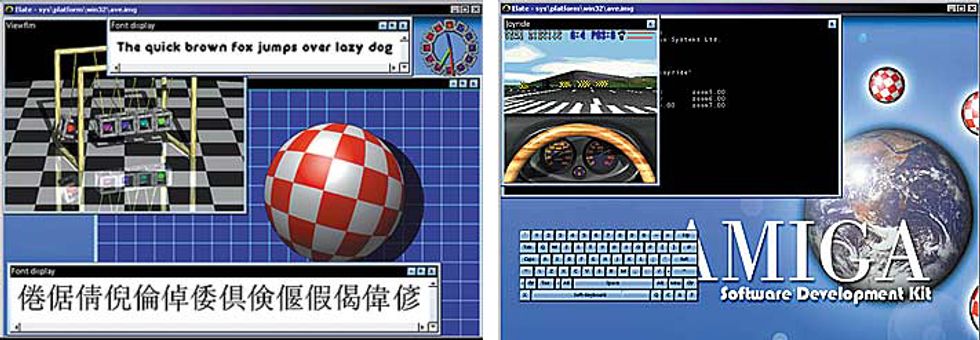

Thus far, Amiga Inc. has released a software development kit that runs under both Windows and Linux [see figure]. It has also signed partnership agreements with Infomedia and another manufacturer of set-top boxes. In response to the demands of the community it inherited, the company has also found a manufacturer for add-in boards that will bring old Amigas up to the standards needed to develop new Amiga software. Two upgrades to the classic OS have also been released.

Ultimately, however, says Amiga’s development vice president Fleecy Moss, almost everything that was left of the original Amiga will disappear. Would the original designers be upset that their brainchild is finally being abandoned? At a recent reunion, none of them seemed concerned. They have gone on to diverse jobs, ranging from designing hand-held video games to digital image sensors to electrical diagnostic equipment. Their original hardware may have been right for its time, was their consensus, but that time is long gone. What remains instead is the notion of hardware and software that, while evolving in response to hacking by designers and users, still somehow retain their essential identities.

—Tekla S. Perry, Editor

About the Author

PAUL WALLICH, while a member of the IEEE Spectrum editorial staff in the early 1980s, turned down a job offer from the original Amiga Computer Inc., choosing instead to organize Spectrum’s coverage of “next-generation” computing. He has spent the intervening time writing about science, technology, and economics for a variety of publications, including Scientific American, Discover, and Lingua Franca.

To Probe Further

Detailed documentation on Amiga’s hardware and software design as it evolved in later years can be found at www.thule.no/haynie/. Amiga reference manuals are also still available, remarkably enough, from Barnes & Noble at www.bn.com and other online bookstores.

The www.amiga.org Web site hosts Amiga-related discussions and links to other Amiga sites. And, last but not least, www.amiga.com is the Web site of the new Amiga company.