When we hear about manipulation robots in warehouses, it’s almost always in the context of picking. That is, about grasping a single item from a bin of items, and then dropping that item into a different bin, where it may go toward building a customer order. Picking a single item from a jumble of items can be tricky for robots (especially when the number of different items may be in the millions). While the problem’s certainly not solved, in a well-structured and optimized environment, robots are nevertheless still getting pretty good at this kind of thing.

Amazon has been on a path toward the kind of robots that can pick items since at least 2015, when the company sponsored the Amazon Picking Challenge at ICRA. And just a month ago, Amazon introduced Sparrow, which it describes as “the first robotic system in our warehouses that can detect, select, and handle individual products in our inventory.” What’s important to understand about Sparrow, however, is that like most practical and effective industrial robots, the system surrounding it is doing a lot of heavy lifting—Sparrow is being presented with very robot-friendly bins that make its job far easier than it would be otherwise. This is not unique to Amazon, and in highly automated warehouses with robotic picking systems it’s typical to see bins that either include only identical items or have just a few different items to help the picking robot be successful.

But robot-friendly bins are simply not the reality for the vast majority of items in an Amazon warehouse, and a big part of the reason for this is (as per usual) humans making an absolute mess of things, in this case when they stow products into bins in the first place. Sidd Srinivasa, the director of Amazon Robotics AI, described the problem of stowing items as “a nightmare.... Stow fundamentally breaks all existing industrial robotic thinking.” But over the past few years, Amazon Robotics researchers have put some serious work into solving it.

First, it’s important to understand the difference between the robot-friendly workflows that we typically see with bin-picking robots, and the way that most Amazon warehouses are actually run. That is, with humans doing most of the complex manipulation.

You may already be familiar with Amazon’s drive units—the mobile robots with shelves on top (called pods) that autonomously drive themselves past humans who pick items off the shelves to build up orders for customers. This is (obviously) the picking task, but doing the same task in reverse is called stowing, and it’s the way that items get into Amazon’s warehouse workflow in the first place. It turns out that humans who stow things on Amazon’s mobile shelves do so in what is essentially a random way, in order to use space as efficiently as possible. This sounds counterintuitive, but it actually makes a lot of sense.

When an Amazon warehouse gets a new shipment of stuff, let’s say Extremely Very Awesome Nuggets (EVANs), the obvious thing to do might be to call up a pod with enough empty shelves to stow all of the EVANs in at once. That way, when someone places an order for an EVAN, the pod full of EVANs shows up, and a human can pick an EVAN off one of the shelves. The problem with this method, however, is that if the pod full of EVANs gets stuck or breaks or is otherwise inaccessible, then nobody can get their EVANs, slowing the entire system down (demand for EVANs being very, very high). Amazon’s strategy is to instead distribute EVANs across multiple pods, so that some EVANs are always available.
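To make the availability argument concrete, here is a toy Python sketch, not Amazon’s inventory software. The `Pod` class, the `distributed_stow()` and `available_units()` helpers, and the two-units-per-pod batch size are all hypothetical. The sketch stows a shipment into whichever pods happen to arrive, records each location, and counts how many units stay reachable if a pod goes down.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Pod:
    pod_id: str
    free_bins: list = field(default_factory=list)  # hypothetical labels of bins with room


def distributed_stow(sku, quantity, arriving_pods, per_pod=2):
    """Stow a few units into each pod as it arrives; return (inventory, units left over)."""
    inventory = defaultdict(int)  # (sku, pod_id, bin_id) -> units stowed there
    remaining = quantity
    for pod in arriving_pods:
        if remaining == 0:
            break
        if not pod.free_bins:
            continue  # no room in this pod; wait for the next one
        batch = min(per_pod, remaining)
        bin_id = pod.free_bins[0]  # stand-in for the stower's judgment of best fit
        inventory[(sku, pod.pod_id, bin_id)] += batch  # the stower reports the location
        remaining -= batch
    return dict(inventory), remaining


def available_units(inventory, unavailable_pods):
    """Units still reachable when some pods are stuck, broken, or otherwise inaccessible."""
    return sum(n for (_, pod_id, _), n in inventory.items() if pod_id not in unavailable_pods)


pods = [Pod("A", ["A3"]), Pod("B", []), Pod("C", ["C1", "C4"]), Pod("D", ["D2"])]
inventory, leftover = distributed_stow("EVAN", 6, pods)
print(inventory)                          # {('EVAN', 'A', 'A3'): 2, ('EVAN', 'C', 'C1'): 2, ('EVAN', 'D', 'D2'): 2}
print(available_units(inventory, {"A"}))  # 4
```

With six EVANs spread two per pod, losing pod A still leaves four available; had all six gone into pod A, a single failure would leave none.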

The process for this distributed stow is random in the sense that a human stower might get a couple of EVANs to put into whatever pod shows up next. Each pod has an array of shelves, some of which are empty. It’s up to the human to decide where the EVANs best fit, and Amazon doesn’t really care as long as the human tells the inventory system where the EVANs ended up. Here’s what this process looks like:

Two things are immediately obvious from this video: First, the way that Amazon products are stowed at automated warehouses like this one is entirely incompatible with most current bin-picking robots. Second, it’s easy to see why this kind of stowing is “a nightmare” for robots. As if the need to carefully manipulate a jumble of objects to make room in a bin wasn’t a hard enough problem, you also have to deal with those elastic bands that get in the way of both manipulation and perception, and you have to be able to grasp and manipulate the item that you’re trying to stow. Oof.

“For me, it’s hard, but it’s not too hard—it’s on the cutting edge of what’s feasible for robots,” says Aaron Parness, senior manager of applied science at Amazon Robotics & AI. “It’s crazy fun to work on.” Parness came to Amazon from Stanford and JPL, where he worked on robots like StickyBot and LEMUR and was responsible for this bonkers microspine gripper designed to grasp asteroids in microgravity. “Having robots that can interact in high-clutter and high-contact environments is superexciting because I think it unlocks a wave of applications,” continues Parness. “This is exactly why I came to Amazon: to work on that kind of a problem and try to scale it.”

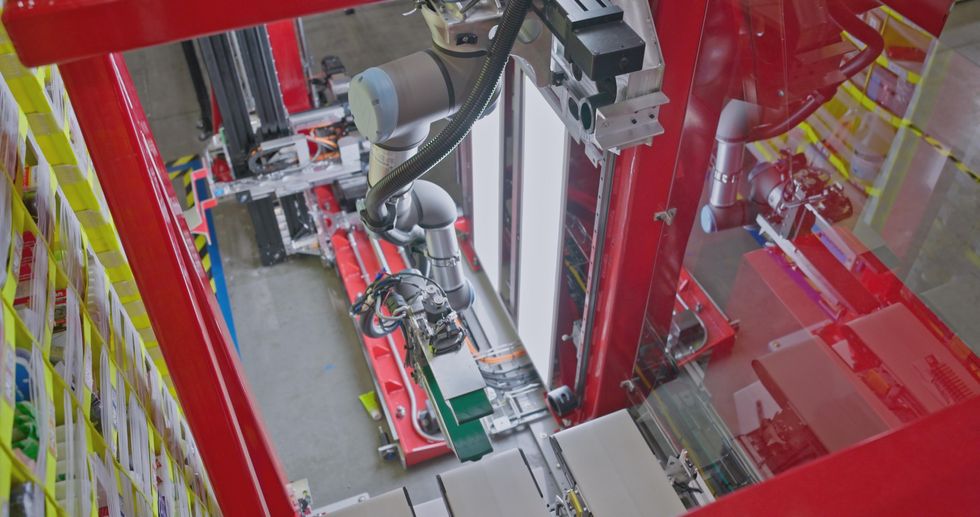

What makes stowing at Amazon both cutting edge and nightmarish for robots is that it’s a task that has been highly optimized for humans. Amazon has invested heavily in human optimization, and (at least for now) the company is very reliant on humans. This means that any robotic solution that would have a significant impact on the human-centered workflow is probably not going to get very far. So Parness, along with Senior Applied Scientist Parker Owan, had to develop hardware and software that could solve the problem as is. Here’s what they came up with:

On the hardware side, there’s a hook system that lifts the elastic bands out of the way to provide access to each bin. But that’s the easy part; the hard part is embodied in the end-of-arm tool (EOAT), which consists of two long paddles that can gently squeeze an item to pick it up, with conveyor belts on their inner surfaces to shoot the item into the bin. An extendable thin metal spatula of sorts can go into the bin before the paddles and shift items around to make room when necessary.

Using all of this hardware requires some very complex software, since the system needs to be able to perceive the items in the bin (which may be occluding each other and also behind the elastic bands), estimate the characteristics of each item, consider ways in which those items could be safely shoved around to maximize available bin space based on the object to be stowed, and then execute the right motions to make all of that happen. By identifying and then chaining together a series of motion primitives, the Amazon researchers have been able to achieve stowing success rates (in the lab) of better than 90 percent.
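The article doesn’t spell out Amazon’s actual primitives or how they are chained, so what follows is only a toy illustration of the general idea. It assumes a one-dimensional bin model, and every name in it (`Item`, `largest_gap()`, `sweep()`, `squash()`, `plan()`) is hypothetical, as is the 30 percent squeeze applied to compressible items. The planner searches short sequences of primitives and returns the shortest one that opens enough contiguous width for the incoming item.

```python
from dataclasses import dataclass, replace
from itertools import product


@dataclass(frozen=True)
class Item:
    left: float        # position of the item's left edge in the bin (cm)
    width: float       # footprint along the bin's width (cm)
    compressible: bool


def largest_gap(items, bin_width):
    """Largest contiguous free span along the bin width."""
    gap, cursor = 0.0, 0.0
    for start, end in sorted((i.left, i.left + i.width) for i in items):
        gap = max(gap, start - cursor)
        cursor = max(cursor, end)
    return max(gap, bin_width - cursor)


def sweep(items, bin_width):
    """Primitive: push every item against the left wall, preserving order.
    bin_width is unused here but kept so all primitives share one signature."""
    packed, cursor = [], 0.0
    for item in sorted(items, key=lambda i: i.left):
        packed.append(replace(item, left=cursor))
        cursor += item.width
    return packed


def squash(items, bin_width, factor=0.7):
    """Primitive: compress compressible items in place (hypothetical 30 percent squeeze)."""
    return [replace(i, width=i.width * factor) if i.compressible else i for i in items]


PRIMITIVES = {"sweep": sweep, "squash": squash}


def plan(items, bin_width, needed, max_depth=3):
    """Return the shortest chain of primitives that opens `needed` cm, or None."""
    for depth in range(max_depth + 1):
        for chain in product(PRIMITIVES, repeat=depth):
            state = items
            for name in chain:
                state = PRIMITIVES[name](state, bin_width)
            if largest_gap(state, bin_width) >= needed:
                return list(chain)
    return None


bin_items = [Item(0, 10, False), Item(15, 10, True), Item(30, 5, False)]  # book, T-shirt, box
print(plan(bin_items, 40, needed=15))  # ['sweep']
print(plan(bin_items, 40, needed=17))  # ['squash', 'sweep']
```

In this 40-centimeter bin, a single sweep frees 15 cm; when 17 cm is needed, the search finds that squashing the T-shirt before sweeping frees 18 cm. Brute-force search is fine for a toy with two primitives, but a real system would need something far smarter.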

After years of work, the system is functioning well enough that prototypes are stowing actual inventory items at an Amazon fulfillment center in Washington state. The goal is to be able to stow 85 percent of the products that Amazon stocks (millions of items), but since the system can be installed within the same workflow that humans use, there’s no need to hit 100 percent. If the system can’t handle it, it just passes it along to a human worker. This means that the system doesn’t even need to reach 85 percent before it can be useful, since if it can do even a small percentage of items, it can offload some of that basic stuff from humans. And if you’re a human who has to do a lot of basic stuff over and over, that seems like it might be nice. Thanks, robots!

But of course there’s a lot more going on here on the robotics side, and we spoke with Aaron Parness to learn more.

IEEE Spectrum: Stowing in an Amazon warehouse is a highly human-optimized task. Does this make things a lot more challenging for robots?

Aaron Parness: In a home, in a hospital, on the space station, in these kinds of settings, you have these human-built environments. I don’t really think that’s a driver for us. The hard problem we’re trying to solve involves contact and also the reasoning. And that doesn’t change too much with the environment, I don’t think. Most of my team is not focused on questions of that nature, questions like, “If we could only make the bins this height,” or, “If we could only change this or that other small thing.” I don’t mean to say that Amazon won’t ever change processes or alter systems. Obviously, we are doing that all the time. It’s easier to do that in new buildings than in old buildings, but Amazon is still totally doing that. We just try to think about our product fitting into those existing environments.

I think there’s a general statement that you can make that when you take robots from the lab and put them into the real world, you’re always constrained by the environment that you put them into. With the stowing problem, that’s definitely true. These fabric pods are horizontal surfaces, so orientation with respect to gravity can be a factor. The elastic bands that block our view are a challenge. The stiffness of the environment also matters, because we’re doing this force-in-the-loop control, and the incredible diversity of items that Amazon sells means that some of the items are compressible. So those factors are part of our environment as well. So in our case, dealing with this unstructured contact, this unexpected contact, that’s the hardest part of the problem.

“Handling contact is a new thing for industrial robots, especially unexpected, unpredictable contact. It’s both a hard problem, and a worthy one.”

—Aaron Parness

What information do you have about what’s in each bin, and how much does that help you to stow items?

Parness: We have the inventory of what’s in the bins, and a bunch of information about each of those items. We also know all the information about the items in our buffer [to be stowed]. And we have a 3D representation from our perception system. But there’s also a quality-control thing where the inventory system says there’s four items in the bin, but in reality, there’s only three items in the bin, because there’s been a defect somewhere. At Amazon, because we’re talking about millions of items per day, that’s a regular occurrence for us.

The configuration of the items in each bin is one of the really challenging things. If you had the same five items: a soccer ball, a teddy bear, a T-shirt, a pair of jeans, and an SD card and you put them in a bin 100 times, they’re going to look different in each of those 100 cases. You also get things that can look very similar. If you have a red pair of jeans or a red T-shirt and red sweatpants, your perception system can’t necessarily tell which one is which. And we do have to think about potentially damaging items—our algorithm decides which items should go to which bins and what confidence we have that we would be successful in making that stow, along with what risk there is that we would damage an item if we flip things up or squish things.
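Parness describes weighing the confidence of a successful stow against the risk of damage for each candidate bin. One simple way to express that trade-off, assumed here purely for illustration, is an expected-value score with hard cutoffs. The `Candidate` fields, the weights, and the thresholds are hypothetical. Returning `None` mirrors the article’s point that items the system can’t handle are passed along to a human.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    bin_id: str
    p_success: float  # model's confidence that the stow succeeds (hypothetical)
    p_damage: float   # model's estimate that flipping or squishing damages something


def choose_bin(candidates, damage_cost=5.0, max_damage=0.05, min_success=0.6):
    """Return the best bin_id, or None to hand the item to a human stower."""
    best, best_value = None, float("-inf")
    for c in candidates:
        if c.p_damage > max_damage or c.p_success < min_success:
            continue  # too risky, or too unlikely to work
        value = c.p_success - damage_cost * c.p_damage  # penalize damage risk heavily
        if value > best_value:
            best, best_value = c, value
    return None if best is None else best.bin_id


options = [Candidate("A3", 0.95, 0.04), Candidate("B1", 0.85, 0.0), Candidate("C2", 0.99, 0.20)]
print(choose_bin(options))      # B1
print(choose_bin(options[2:]))  # None (route to a human)
```

The most confident bin, C2, is rejected outright because of its damage estimate, and A3 loses to B1 once its small damage risk is penalized.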

“Contact and clutter are the two things that keep me up at night.”

—Aaron Parness

How do you make sure that you don’t damage anything when you may be operating with incomplete information about what’s in the bin?

Parness: There are two things to highlight there. One is the approach and how we make our decisions about what actions to take. And then the second is how to make sure you don’t damage items as you do those kinds of actions, like squishing as far as you can.

With the first thing, we use a decision tree. We use that item information to claim all the easy stuff—if the bin is empty, put the biggest thing you can in the bin. If there’s only one item in the bin, and you know that item is a book, you can make an assumption it’s incompressible, and you can manipulate it accordingly. As you work down that decision tree, you get to certain branches and leaves that are too complicated to have a set of heuristics, and that’s where we use machine learning to predict things like, if I sweep this point cloud, how much space am I likely to make in the bin?
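Here is a minimal Python sketch of that structure, assuming a drastically simplified world in which bins and items are described only by volume. The two easy branches follow Parness’s examples (an empty bin, a lone book). The `BinState` class, the volume figures, the `INCOMPRESSIBLE` set, and the `predict_sweep_gain` callable, which stands in for the learned model, are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

INCOMPRESSIBLE = {"book"}  # hypothetical categories safe to treat as rigid


@dataclass
class BinState:
    contents: List[str] = field(default_factory=list)  # item categories believed to be in the bin
    free_volume: float = 0.0  # liters of usable space estimated by perception


def largest_that_fits(buffer: Dict[str, float], capacity: float) -> Optional[str]:
    fits = {name: vol for name, vol in buffer.items() if vol <= capacity}
    return max(fits, key=fits.get) if fits else None


def decide(bin_state: BinState, buffer: Dict[str, float],
           predict_sweep_gain: Callable[[BinState], float]) -> Tuple[str, Optional[str]]:
    """Walk a tiny decision tree: heuristics first, learned model only at the hard leaf."""
    if not bin_state.contents:
        # Easy branch: empty bin, so stow the biggest buffered item that fits.
        item = largest_that_fits(buffer, bin_state.free_volume)
        return ("place", item) if item else ("skip_bin", None)
    if len(bin_state.contents) == 1 and bin_state.contents[0] in INCOMPRESSIBLE:
        # Easy branch: a lone rigid item can be pushed aside without worrying about squishing it.
        item = largest_that_fits(buffer, bin_state.free_volume)
        return ("push_aside_then_place", item) if item else ("skip_bin", None)
    # Hard leaf: ask the (stand-in) learned model how much room a sweep would open.
    gained = predict_sweep_gain(bin_state)
    item = largest_that_fits(buffer, bin_state.free_volume + gained)
    return ("sweep_then_place", item) if item else ("skip_bin", None)


buffer = {"teddy bear": 6.0, "jeans": 2.0, "SD card": 0.01}
print(decide(BinState([], 10.0), buffer, lambda s: 0.0))       # ('place', 'teddy bear')
print(decide(BinState(["book"], 3.0), buffer, lambda s: 0.0))  # ('push_aside_then_place', 'jeans')
print(decide(BinState(["T-shirt", "jeans"], 1.0), buffer, lambda s: 5.5))  # ('sweep_then_place', 'teddy bear')
```

The appeal of this layout is that the cheap, predictable rules handle the common cases, and the model is consulted only where no simple rule applies.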

And this is where the contact-based manipulation comes in because the other thing is, in a warehouse, you need to have speed. You can’t stow one item per hour and be efficient. This is where putting force and torque in the control loop makes a difference—we need to have a high rate, a couple of hundred hertz loop that’s closing around that sensor and a bunch of special sauce in our admittance controller and our motion-planning stack to make sure we can do those motions without damaging items.
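For readers unfamiliar with admittance control: the controller reads the measured contact force and turns it into a motion command, so the tool behaves like a virtual mass-spring-damper, M·a + D·v + K·(x − x_ref) = f_ext, that yields when it pushes against something. The one-dimensional Python sketch below runs such a loop at 200 Hz against a toy compressible item. The gains, the item model, and the `Admittance1D` and `contact_force()` names are assumptions for illustration, not details of Amazon’s controller, which the interview describes only as “special sauce.”

```python
from dataclasses import dataclass


@dataclass
class Admittance1D:
    """Hypothetical 1-D admittance law: M*a + D*v + K*(x - x_ref) = f_ext."""
    mass: float          # virtual mass (kg)
    damping: float       # virtual damping (N*s/m)
    stiffness: float     # virtual spring pulling x toward x_ref (N/m)
    dt: float = 1 / 200  # 200 Hz loop, i.e. "a couple of hundred hertz"
    x: float = 0.0       # commanded position (m)
    v: float = 0.0       # commanded velocity (m/s)

    def step(self, x_ref: float, f_ext: float) -> float:
        """Advance one tick with semi-implicit Euler and return the new commanded position."""
        a = (f_ext - self.damping * self.v - self.stiffness * (self.x - x_ref)) / self.mass
        self.v += a * self.dt
        self.x += self.v * self.dt
        return self.x


def contact_force(x, surface=0.02, k_item=500.0):
    """Toy compressible item: pushes back like a spring once the tool passes its surface."""
    return -k_item * max(0.0, x - surface)


ctrl = Admittance1D(mass=2.0, damping=60.0, stiffness=300.0)
for _ in range(2000):          # 10 s of simulated time
    f = contact_force(ctrl.x)  # stand-in for the force/torque sensor reading
    ctrl.step(x_ref=0.05, f_ext=f)
print(f"{ctrl.x:.3f} m, {contact_force(ctrl.x):.1f} N")  # 0.031 m, -5.6 N
```

At equilibrium the virtual spring balances the item’s push-back, so the tool settles at about 3.1 cm with roughly 5.6 N of contact force instead of driving to the 5 cm reference, where a stiff position controller would press with 15 N. A real system would presumably also enforce explicit force limits, which this sketch omits.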

Since you’re operating in these human-optimized environments, how closely does your robotic approach mimic what a human would be doing?

Parness: We started by doing it ourselves. We also did it ourselves while holding a robotic end effector. And this matters a lot, because you don’t realize that you’re doing all these kinds of fine-control motions, and you have so many sensors on your hand, right? This is a thing. But when we did this task ourselves, when we observed experts doing it, this is where the idea of motion primitives kind of emerged, which made the problem a little more achievable.

What made you use the motion primitives approach as opposed to a more generalized learning technique?

Parness: I’ll give you an honest answer—I was never tempted by reinforcement learning. But there were some in my team that were tempted by that, and we had a debate, since I really believe in iterative design philosophy and in the value of prototyping. We did a bunch of early-stage prototypes, trying to make a data-driven decision, and the end-to-end reinforcement learning seemed intractable. But the motion-primitive strategy actually turned me from a bit of a skeptic about whether robots could even do this job, and made me think, “Oh, yeah, this is the thing. We got to go for this.” That was a turning point, getting those motion primitives and recognizing that that was a way to structure the problem to make it solvable, because they get you most of the way there—you can handle everything but the long tail. And with the tail, maybe sometimes a human is looking in, and saying, “Well, if I play Tetris and I do this incredibly complicated and slow thing I can make the perfect unicorn shaped hole to put this unicorn shaped object into.” The robot won’t do that, and doesn’t need to do that. It can handle the bulk.

You really didn’t think that the problem was solvable at all, originally?

Parness: Yes. Parker Owan, who’s one of the lead scientists on my team, went off into the corner of the lab and started to set up some experiments. And I would look over there while working on other stuff, and be like, “Oh, that young guy, how brave. This problem will show him.” And then I started to get interested. Ultimately, there were two things, like I said: it was discovering that you could use these motion primitives to accomplish the bulk of the in-bin manipulation, because really that’s the hardest part of the problem. The second thing was on the gripper, on the end-of-arm tool.

“If the robot is doing well, I’m like, ‘This is achievable!’ And when we have some new problems, and then all of a sudden I’m like, ‘This is the hardest thing in the world!’ ”

—Aaron Parness

The end effector looks pretty specialized—how did you develop that?

Parness: Looking around the industry, there’s a lot of suction cups, a lot of pinch grasps. And when you have those kinds of grippers, all of a sudden you’re trying to use the item you’re gripping to manipulate the other items that are in the bin, right? When we decided to go with the paddle approach and encapsulate the item, it both gave us six-degree-of-freedom control over the item, to make sure it wasn’t going into spaces we didn’t want it to, while also giving us a known engineering surface on the gripper. Maybe I can only predict in a general way the stiffness or the contact properties of the items that are in the bin, but I know I’m touching it with the back of my paddle, which is aluminum.

But then we realized that the end effector actually takes up a lot of space in the bin, and the whole point is that we’re trying to fill these bins up so that we can have a lot of stuff for sale on Amazon.com. So we say, okay, well, we’re going to stay outside the bin, but we’ll have this spatula that will be our in-bin manipulator. It’s a super simple tool that you can use for pushing on stuff, flipping stuff, squashing stuff.... You’re definitely not doing 27-degree-of-freedom human-hand stuff, but because we have these motion primitives, the hardware complemented that.

However, the paddles presented a new problem, because when using them we basically had to drop the item and then try to push it in at the same time. It was this kind of dynamic—let go and shove—which wasn’t great. That’s what led to putting the conveyor belts onto the paddles, which took us to the moon in terms of being successful. I’m the biggest believer there is now! Parker Owan has to kind of slow me down sometimes because I’m so excited about it.

It must have been tempting to keep iterating on the end effector.

Parness: Yeah, it is tempting, especially when you have scientists and engineers on your team. They want everything. It’s always like, “I can make it better. I can make it better. I can make it better.” I have that in me too, for sure. There’s another phrase I really love which is just, “so simple, it might work.” Are we inventing and complexifying, or are we making an elegant solution? Are we making this easier? Because the other thing that’s different about the lab and an actual fulfillment center is that we’ve got to work with our operators. We need it to be serviceable. We need it to be accessible and easy to use. You can’t have four Ph.D.s around each of the robots constantly kind of tinkering and optimizing it. We really try to balance that, but is there a temptation? Yeah. I want to put every sensor known to man on the robot! That’s a temptation, but I know better.

To what extent is picking just stowing in reverse? Could you run your system backwards and have picking solved as well?

Parness: That’s a good question, because obviously I think about that too, but picking is a little harder. With stowing, it’s more about how you make space in a bin, and then how you fit an item into space. For picking, you need to identify the item—when that bin shows up, the machine learning, the computer vision, that system has to be able to find the right item in clutter. But once we can handle contact and we can handle clutter, pick is for sure an application that opens up.

When I think really long term, if Amazon were to deploy a bunch of these stowing robots, all of a sudden you can start to track items, and you can remember that this robot stowed this item in this place in this bin. You can start to build up container maps. Right now, though, the system doesn’t remember.
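Parness is describing a possible future capability, not a current one, but the data structure is easy to picture. In this hypothetical Python sketch, `ContainerMap` logs each robotic stow by pod and bin, answers where a SKU was put, and reports a mismatch when a pick finds nothing recorded, the kind of inventory discrepancy Parness mentions earlier. All names and fields are illustrative.

```python
from collections import defaultdict


class ContainerMap:
    """Hypothetical memory of which robot stowed what, and where."""

    def __init__(self):
        self._bins = defaultdict(list)  # (pod_id, bin_id) -> list of stow records

    def record_stow(self, robot_id, pod_id, bin_id, sku, position):
        self._bins[(pod_id, bin_id)].append(
            {"robot": robot_id, "sku": sku, "position": position})

    def record_pick(self, pod_id, bin_id, sku):
        """Remove one matching record; False means the map and the bin disagree."""
        records = self._bins.get((pod_id, bin_id), [])
        for i, record in enumerate(records):
            if record["sku"] == sku:
                del records[i]
                return True
        return False

    def where_is(self, sku):
        return [(pod_bin, r["position"]) for pod_bin, records in self._bins.items()
                for r in records if r["sku"] == sku]


cmap = ContainerMap()
cmap.record_stow("stow-07", "A", "A3", "EVAN", "back left")
cmap.record_stow("stow-07", "C", "C1", "EVAN", "front right")
print(cmap.where_is("EVAN"))                # [(('A', 'A3'), 'back left'), (('C', 'C1'), 'front right')]
print(cmap.record_pick("A", "A3", "EVAN"))  # True
print(cmap.record_pick("A", "A3", "EVAN"))  # False (flag bin A3 for a check)
```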

Regarding picking in particular, a nice thing Amazon has done in the last couple of years is start to engage with the academic community more. My team sponsors research at MIT and at the University of Washington. And the team at the University of Washington is actually looking at picking. Stow and pick are both really hard and really appealing problems, and in time, I hope I get to solve both!

This article appears in the March 2023 print issue as “Amazon Tells Warehouse Robots Where to Stow It.”

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.