A movie montage for modern artificial intelligence might show a computer playing millions of games of chess or Go against itself to learn how to win. Now, researchers are exploring how the reinforcement learning technique that helped DeepMind’s AlphaZero conquer chess and Go could tackle an even more complex task—training a robotic knee to help amputees walk smoothly.

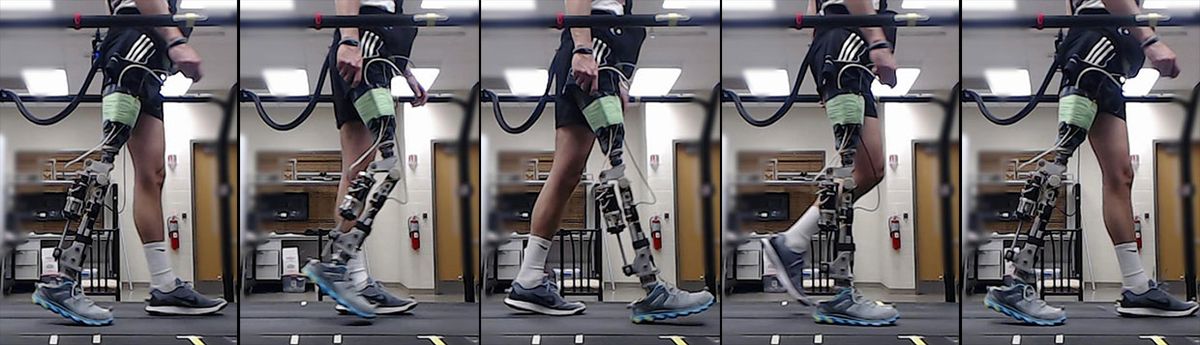

This new application of AI is based on reinforcement learning, an automated version of classic trial and error. It has shown promise in small clinical experiments involving one able-bodied person and one person with an above-knee amputation. Normally, human technicians spend hours working with amputees to manually adjust robotic limbs to suit each person’s style of walking. By comparison, the reinforcement-learning technique automatically tuned a robotic knee, enabling the prosthetic wearers to walk smoothly on level ground within 10 minutes.

“If you wanted to make this clinically relevant, there are many, many steps that we have to go through before this can happen,” says Helen Huang, a professor in biomedical engineering at both North Carolina State University and the University of North Carolina. “So far it’s really just to show it’s possible. By itself that’s very, very exciting.”

Huang and her colleagues published their findings on 16 January 2019 in IEEE Transactions on Cybernetics. Their study marks a possible first step toward automating the typical manual tuning process that requires costly and time-consuming clinic visits whenever robotic limbs need adjusting—something that could also eventually allow prosthetic users to tune their robotic limbs at home or on the go.

The tuning process focuses on specific parameters that define the relationship between force and motion in a robotic limb. For example, some parameters may define the stiffness of the robotic knee joint or the range of motion allowed in swinging a leg back and forth. In this case, the robotic knee had a dynamic combination of 12 parameters that required trial-and-error tuning. The starting values for such parameters are usually far from perfect for freshly unboxed robotic limbs, but good enough for wearers to stand up and make simple walking movements.
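To make the idea of force-and-motion parameters concrete, here is a minimal sketch of an impedance-style control law, a formulation commonly used for powered prosthetic joints. The article does not give the controller’s equations, so everything here is an assumption for illustration: the `ImpedanceParams` fields, the four gait phase names, the units, and all numeric values are hypothetical. Three parameters in each of four phases would give 12 tunable numbers, which matches the count mentioned above, but that breakdown is assumed rather than stated in the source.

```python
from dataclasses import dataclass


@dataclass
class ImpedanceParams:
    """Hypothetical per-phase tuning parameters (names and units are assumptions)."""
    stiffness: float          # N*m/deg: how strongly the joint resists displacement
    damping: float            # N*m*s/deg: how strongly the joint resists motion
    equilibrium_angle: float  # deg: the angle the joint is pulled toward


def knee_torque(p: ImpedanceParams, angle: float, velocity: float) -> float:
    """Impedance law: a spring toward the equilibrium angle plus a damper."""
    return -p.stiffness * (angle - p.equilibrium_angle) - p.damping * velocity


# Illustrative values only; none of these come from the study.
GAIT_PHASES = {
    "stance_flexion": ImpedanceParams(3.0, 0.05, 10.0),
    "stance_extension": ImpedanceParams(2.5, 0.04, 5.0),
    "swing_flexion": ImpedanceParams(0.5, 0.02, 60.0),
    "swing_extension": ImpedanceParams(0.3, 0.03, 5.0),
}


def count_tunable_parameters(phases: dict) -> int:
    """Each phase contributes stiffness, damping, and equilibrium angle."""
    return 3 * len(phases)


if __name__ == "__main__":
    params = GAIT_PHASES["stance_flexion"]
    print(knee_torque(params, angle=15.0, velocity=20.0))
    print(count_tunable_parameters(GAIT_PHASES))
```

In a scheme like this, a stiffer knee pushes back harder for the same deviation from the equilibrium angle, which is why small changes to a single value can noticeably alter how a step feels.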

Training a robotic limb is a complex process of co-adaptation that requires the limb to learn how to cooperate with the human brain controlling most of the body. That process can involve considerable initial clumsiness, not unlike the first time people strap skis onto their feet and try to move around on a snow-packed surface.

“Our body does weird things when we have a foreign object on our body,” says Jennie Si, professor of electrical, computer, and energy engineering at Arizona State University and coauthor of the paper. “In some sense, our computer reinforcement learning algorithm learns to cooperate with the human body.”

To complicate matters further, the reinforcement-learning algorithm had to prove its worth with a fairly limited set of training data from prosthetics users. When DeepMind trained its AlphaZero computer program to master games such as chess and Go, AlphaZero had the benefit of being able to simulate millions of games during its marathon training montage. By comparison, individual human amputees cannot keep walking forever for the sake of training a reinforcement-learning algorithm. Those who visit Huang’s lab may walk for just 15 or 20 minutes before taking a rest break.

The training data also faced other limitations. At the beginning of the research project, Si wondered if it was possible to allow the prosthetics users to fall down during some trial runs so that the algorithm could learn from those cases. But Huang rejected that idea for safety reasons.

Despite such challenges, initial results have proven promising. The researchers trained the reinforcement-learning algorithm to recognize certain patterns in the data collected from sensors embedded in the prosthetic knee. They also set some initial constraints on the algorithm to steer it away from situations that could cause the wearer to fall down. Eventually, the algorithm learned to focus on data patterns that matched fairly stable and smooth walking.
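The general idea of trial-and-error tuning inside safety limits can be sketched in a few lines. This is not the researchers’ reinforcement-learning algorithm, which the article does not describe in detail. It is plain stochastic hill climbing, swapped in as a simpler stand-in, and the safe range, the `gait_error` function, and the target profile are all hypothetical. A real system would measure error from knee sensors while a person walks; here it is simulated.

```python
import random

# Hypothetical safe range applied to every parameter (assumption for illustration).
SAFE_MIN, SAFE_MAX = 0.0, 1.0


def clamp(value, low=SAFE_MIN, high=SAFE_MAX):
    """Keep a parameter inside the safe range, standing in for fall-avoidance limits."""
    return max(low, min(high, value))


def gait_error(params, target):
    """Toy stand-in for sensor feedback: squared distance from a target profile."""
    return sum((p - t) ** 2 for p, t in zip(params, target))


def tune(initial, target, trials=2000, step=0.05, seed=0):
    """Stochastic hill climbing: perturb, clamp, and keep only improvements."""
    rng = random.Random(seed)
    best = [clamp(p) for p in initial]
    best_error = gait_error(best, target)
    for _ in range(trials):
        # Try a small random change to every parameter, never leaving the safe range.
        candidate = [clamp(p + rng.uniform(-step, step)) for p in best]
        error = gait_error(candidate, target)
        if error < best_error:
            best, best_error = candidate, error
    return best, best_error


if __name__ == "__main__":
    start = [0.9] * 12   # rough "out of the box" settings (made up)
    goal = [0.4] * 12    # profile the toy error function rewards (made up)
    tuned, err = tune(start, goal)
    print(f"error before: {gait_error(start, goal):.4f}, after: {err:.4f}")
```

The tuner never sees the target directly; it only observes whether each trial scored better or worse, which mirrors the trial-and-error character of the approach. Clamping every candidate is one simple way to encode constraints, though it is far cruder than what a clinical system would need.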

This automated approach to tuning robotic limbs is far from ready for widespread deployment. For now, the researchers plan to train the algorithm to help prosthetic users walk up and down steps. They also hope to create a wireless version of the system that could extend training sessions beyond in-person visits to the lab.

One of the biggest next steps for the research project involves somehow giving prosthetics users a way to tell the algorithm that a particular walking pattern feels better or worse. But early attempts to allow for human input via a button or other simple controls have proven somewhat clumsy in practice—perhaps in part because such input fails to capture the complex coordination of human perception and cognition in choreographing a body’s movements.

“It hasn’t really worked out well because we don’t really understand humans well,” Huang says. “I definitely see a lot of basic science that needs to be done to jump there.”

Jeremy Hsu has been working as a science and technology journalist in New York City since 2008. He has written on subjects as diverse as supercomputing and wearable electronics for IEEE Spectrum. When he’s not trying to wrap his head around the latest quantum computing news for Spectrum, he also contributes to a variety of publications such as Scientific American, Discover, Popular Science, and others. He is a graduate of New York University’s Science, Health & Environmental Reporting Program.