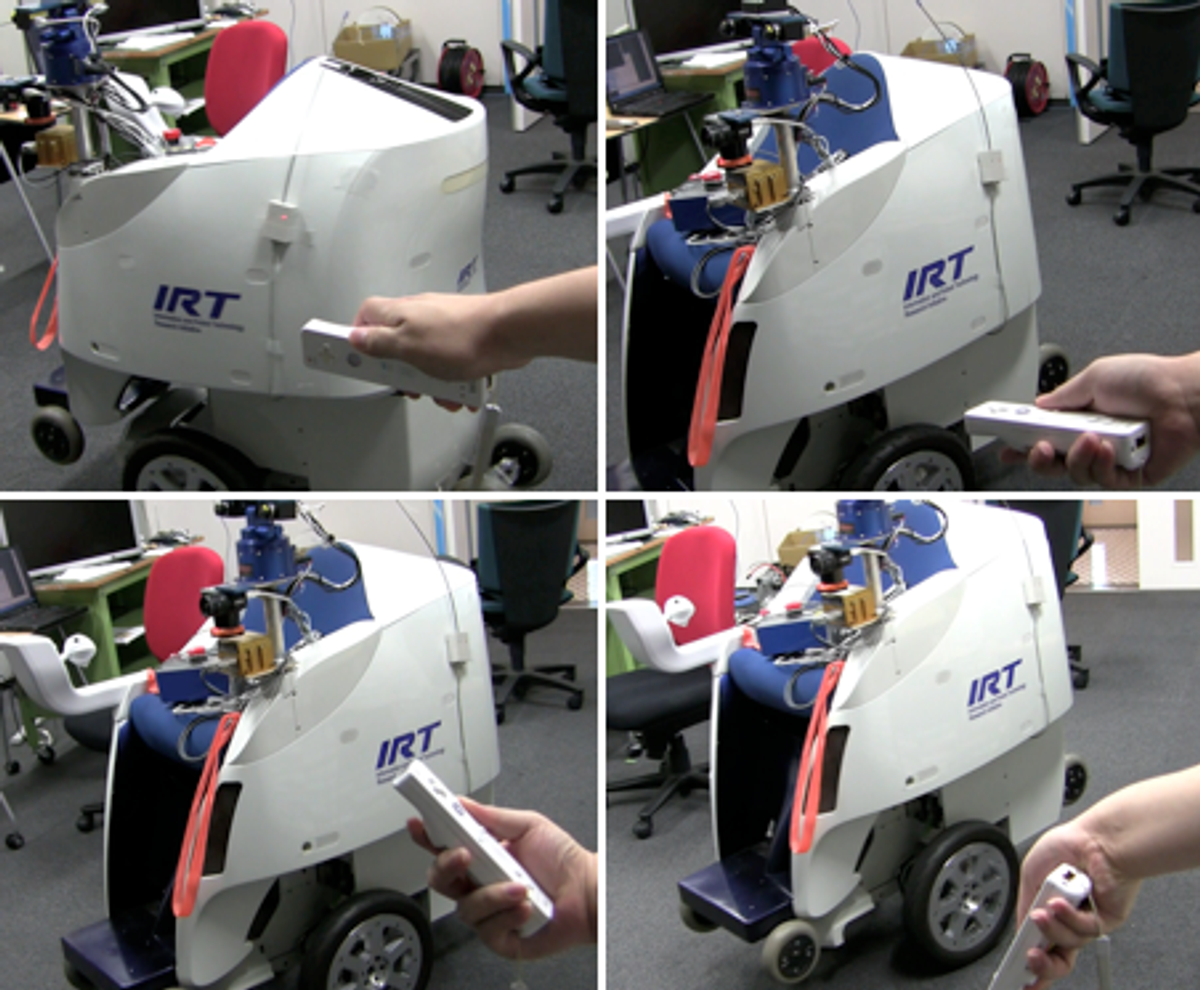

The Personal Mobility Robot, or PMR, is a nimble robotic wheelchair that self-balances on two wheels like a Segway. The machine, based on a platform developed by Toyota, has a manual controller that the rider uses to change speed and direction [see photo, inset].

Last year, when I visited the JSK Robotics Laboratory, part of the University of Tokyo’s department of mechano-informatics and directed by Professor Masayuki Inaba, researchers Naotaka Hatao and Ryo Hanai showed me the PMR under Wii-mote control. They didn’t let me ride it while they were piloting the machine, but it was fun to watch.


The PMR project is part of the Information and Robot Technology (IRT) research initiative at the University of Tokyo. Researchers developed the machine to help the elderly and people with disabilities remain independent and mobile. It is designed to be reliable and easy to operate, and it can negotiate indoor and outdoor environments -- even slopes and uneven surfaces. It weighs 150 kilograms and can move at up to 6 kilometers per hour.

The PMR is a type of robot known as a “two-wheeled inverted pendulum mobile robot” -- like the Segway and many others. The advantage of a self-balancing two-wheeled machine is its smaller footprint (compared to, say, a four-wheeled one) and its ability to turn around its axis, which is convenient in tight spaces.

In addition to Wii-mote controllability, the JSK researchers have been working on an advanced navigation system that is able to localize itself and plan trajectories, with the rider using a computer screen to tell the robot where to go [photo, right].

The navigation system runs in real time on two laptop computers, one for localization and the other for trajectory planning. Using laser range sensors and SLAM algorithms, it detects people and objects nearby and distinguishes between static and moving obstacles. It does that by successively scanning its surroundings and comparing the scans, which allows it to detect elements that are moving as well as occluded areas that only become “visible” as the robot moves.
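To make the scan-comparison idea concrete, here is a minimal Python sketch. It is not the JSK lab's code. It assumes each scan has already been projected onto a shared grid, and that a cell missing from a scan was occluded. The names `classify_cells`, `OCC`, and `FREE` are hypothetical.

```python
from typing import Dict, Tuple

Cell = Tuple[int, int]  # (column, row) index in an assumed world-aligned grid
OCC, FREE = "occupied", "free"


def classify_cells(prev: Dict[Cell, str], curr: Dict[Cell, str]) -> Dict[Cell, str]:
    """Label every occupied cell of the current scan by comparing it with the previous one.

    A cell missing from a scan is treated as unobserved (occluded), which is an
    assumption of this sketch rather than a detail given in the article.
    """
    labels: Dict[Cell, str] = {}
    for cell, state in curr.items():
        if state != OCC:
            continue  # only occupied cells can be obstacles
        before = prev.get(cell)  # None means the cell was not seen last time
        if before is None:
            labels[cell] = "newly_visible"  # was occluded, now seen for the first time
        elif before == OCC:
            labels[cell] = "static"  # occupied in both scans
        else:
            labels[cell] = "moving"  # was free, now occupied
    return labels


previous_scan = {(0, 0): OCC, (1, 0): FREE}
current_scan = {(0, 0): OCC, (1, 0): OCC, (2, 0): OCC}
print(classify_cells(previous_scan, current_scan))
```

A real system would also have to cope with sensor noise and localization error, for example by accumulating evidence over several scans before calling a cell "moving."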

The system can rapidly detect pedestrians who suddenly start to move as well as people appearing from blind spots. In those cases, the robot can do two things: recompute the trajectory to avoid a collision [image below, left, from a paper they presented at last year's IEEE RO-MAN conference] or stop for a few seconds, wait for the pedestrian, and then start moving again [below, right].

The navigation system uses a deterministic approach to plan the trajectory. Basically, it assigns circles to all vertices of static objects and then tries to draw a continuous line that is tangent to the circles, going from origin to destination. Of course, there might be a lot of possible routes, so the system uses an A* algorithm to determine the path to be taken. You can see a visual representation of this approach below:
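Separately from the figure, the search step can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the researchers' implementation: it skips the tangent-line construction and assumes the candidate waypoints (for example, tangent points on the circles) and the collision-free links between them are already known. The function name `astar` and the sample graph are hypothetical.

```python
import heapq
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def astar(nodes: Dict[str, Point], edges: Dict[str, List[str]],
          start: str, goal: str) -> List[str]:
    """Return the shortest start-to-goal path over a waypoint graph, or [] if none exists.

    Edge cost is straight-line length; the heuristic is straight-line distance
    to the goal, which never overestimates the remaining cost.
    """
    def dist(a: str, b: str) -> float:
        (ax, ay), (bx, by) = nodes[a], nodes[b]
        return math.hypot(ax - bx, ay - by)

    open_heap = [(dist(start, goal), 0.0, start)]  # (estimated total, cost so far, node)
    best_cost = {start: 0.0}
    came_from: Dict[str, str] = {}
    while open_heap:
        _, cost, node = heapq.heappop(open_heap)
        if node == goal:
            path = [node]
            while node in came_from:  # walk back to the start
                node = came_from[node]
                path.append(node)
            return path[::-1]
        if cost > best_cost.get(node, math.inf):
            continue  # stale heap entry; a cheaper route to this node was found
        for nxt in edges.get(node, []):
            new_cost = cost + dist(node, nxt)
            if new_cost < best_cost.get(nxt, math.inf):
                best_cost[nxt] = new_cost
                came_from[nxt] = node
                heapq.heappush(open_heap, (new_cost + dist(nxt, goal), new_cost, nxt))
    return []


# Hypothetical waypoints: two ways around an obstacle between start and goal.
waypoints = {"start": (0.0, 0.0), "a": (1.0, 1.0), "b": (1.0, -3.0), "goal": (2.0, 0.0)}
links = {"start": ["a", "b"], "a": ["goal"], "b": ["goal"]}
print(astar(waypoints, links, "start", "goal"))
```

In this toy graph the route through `a` is shorter than the detour through `b`, so the search picks it.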

And although I didn’t get to see it, researchers told me they’re also developing a PMR model specifically for indoor use. It’s lighter (45 kg) and more compact, and the rider can control it by shifting his or her body, just like the Segway.

That means, the researchers say, that you can ride it hands-free: Just tell it where to go and enjoy the ride while sipping a drink or reading a book.

Photos: Information and Robot Technology/University of Tokyo, JSK Robotics Lab

Erico Guizzo is the director of digital innovation at IEEE Spectrum, and cofounder of the IEEE Robots Guide, an award-winning interactive site about robotics. He oversees the operation, integration, and new feature development for all digital properties and platforms, including the Spectrum website, newsletters, CMS, editorial workflow systems, and analytics and AI tools. An IEEE Member, he is an electrical engineer by training and has a master’s degree in science writing from MIT.