Bedeviled by the challenges of measuring nanofeatures on ever-shrinking chips? A new hybrid statistical approach might let you combine a variety of optical methods to improve measurements, reduce uncertainty, and boost throughput.

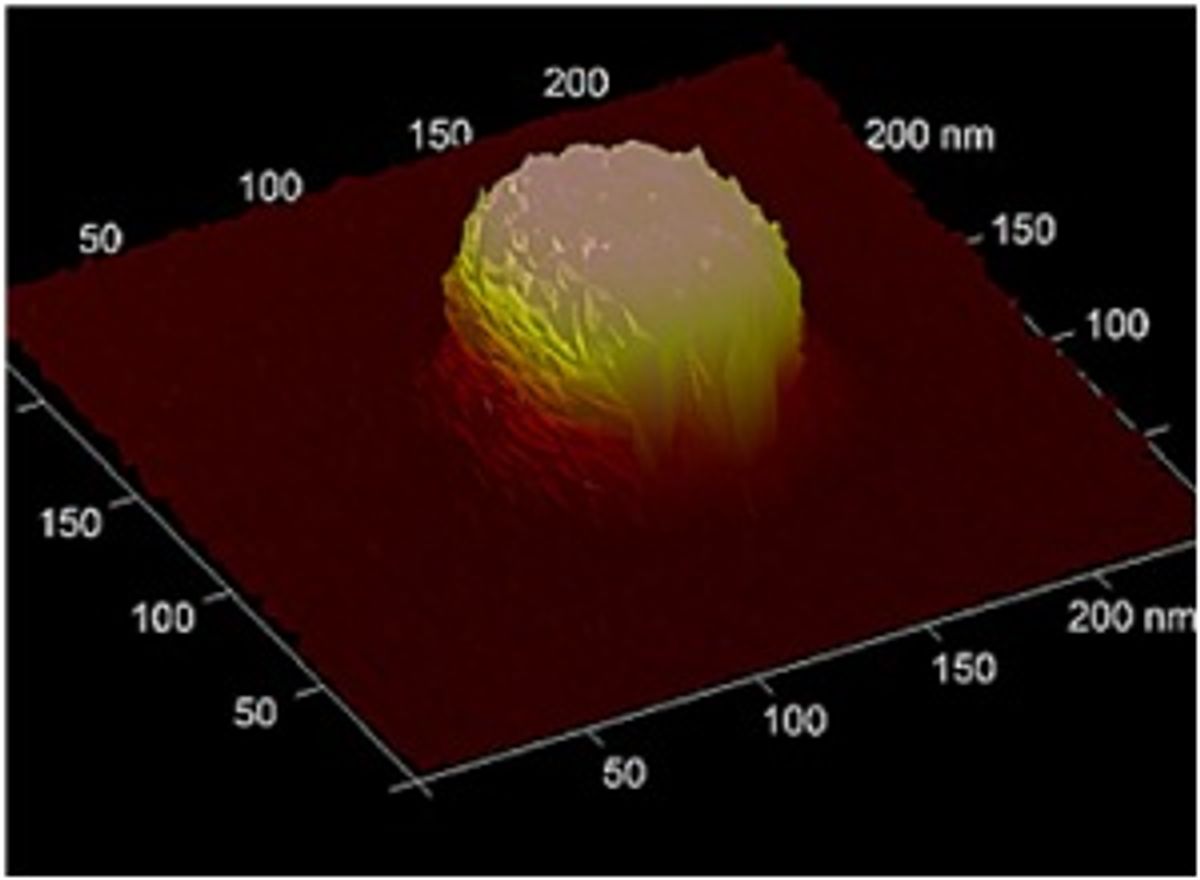

The NIST researchers behind the approach tested it on optical critical dimension (OCD) measurements of arrays of ridges etched on silicon chips, each ridge 60 to 70 nanometers tall and about as wide at the base. (Sixty nanometers is very roughly 300 silicon-atom diameters.)

At this scale, there is no ruler. Scatterometrists generate curves—typically, of the intensity of reflected light—for a wide range of input variables (wavelength, incident angle, or polarization, for example). They compare these data to a much wider array of curves derived from models—each having a particular combination of, for example, line height, width, edge roughness, sidewall height, sidewall angle, etc. When they find a best-fit match between the real-world curve and the theoretical curve, they figure that the real-world array pretty much matches the model. The measurement isn’t exact, of course—there are always uncertainties and noise in the system, and different parameter combinations can yield similar results.
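To make the curve-matching idea concrete, here is a minimal sketch of a library search in Python. Everything in it is an illustrative assumption: `toy_model` is a made-up formula standing in for a real electromagnetic simulation, the angle range and parameter grids are arbitrary, and only two parameters (height and width) are varied. It is not the researchers' model, just the shape of the procedure.

```python
import numpy as np

def toy_model(angles_deg, height_nm, width_nm):
    """Hypothetical stand-in for a rigorous electromagnetic simulation.

    Returns a made-up reflected intensity at each incident angle.
    """
    cos_theta = np.cos(np.radians(angles_deg))
    return 0.5 + 0.4 * (width_nm / 100.0) * np.cos(
        2 * np.pi * height_nm * cos_theta / 100.0
    )

def build_library(angles_deg, heights_nm, widths_nm):
    """Precompute one model curve per (height, width) combination."""
    return {
        (h, w): toy_model(angles_deg, h, w)
        for h in heights_nm
        for w in widths_nm
    }

def best_fit(library, measured):
    """Return the (height, width) whose curve has the smallest
    sum of squared residuals against the measured curve."""
    return min(library, key=lambda p: np.sum((library[p] - measured) ** 2))

# 21 incident angles per scan; the 0-60 degree range is an assumption.
angles = np.linspace(0.0, 60.0, 21)
library = build_library(angles, range(50, 71), range(30, 61))

# Simulate a noisy "real-world" scan of a 60 nm tall, 48 nm wide line.
rng = np.random.default_rng(0)
measured = toy_model(angles, 60, 48) + rng.normal(0.0, 0.005, angles.size)

print(best_fit(library, measured))
```

With more noise, or with a model in which two parameters trade off against each other, neighboring library entries fit almost equally well. That is the source of the uncertainty described above.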

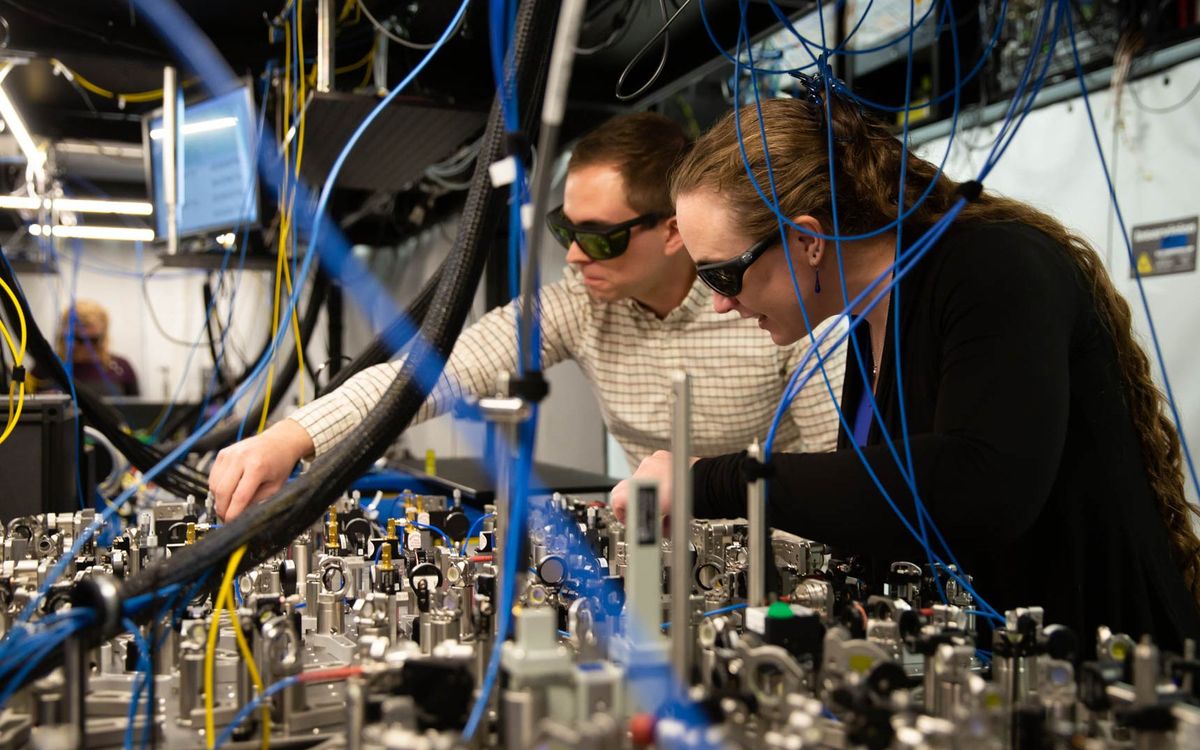

In testing their approach, the NIST researchers started with scatterometric measurements. In one case, for example, they made four scans of a silicon nitride line array etched on a chip. Each scan plotted light intensity for 21 incident angles, changing the polarization or the orientation of the light source with each scan.

The best fit to the parametric model, which approximates each line's cross-section as a trapezoid, indicated that the top of the trapezoid measured 33.7 nm wide (with a 10.8 nm standard deviation), the middle width (halfway between base and top) was 48.9 nm (6.0 nm), and the height was 60.0 nm (2.2 nm).

A subsequent atomic force microscopy (AFM) measurement gave somewhat different readings: top, 37.6 nm (0.9 nm); middle, 48.0 nm (1.9 nm); height, 57.5 nm (0.7 nm).

Enter Thomas Bayes (with an assist from Pierre-Simon Laplace) and Bayes’ Theorem, the mathematical tool for refining an initial assessment of the probability of an event (for example, that a measurement will produce a certain value) with information from later or other events.

The approach seems intuitively obvious, at first—experience is always reshaping our assumptions after all. And, indeed, iterative approximations are no strangers in the math world. But there’s something about real-world applications of Bayes’ Theorem that seems, well, spooky—like something derived in the arithmancy department at Hogwarts. I’m focusing on Event A, right here in front of me, but my expectation of results can be changed greatly by a null result for Event B, over there on the other side of the room. It can seem as maddening as the Monty Hall problem...but more complicated. (For an entertaining, if sometimes unmathematical, history of Bayes’ Theorem, see Sharon McGrayne's book, The Theory That Would Not Die.)

So the researchers (under the mathematical guidance of statistician Nien Fan Zhang) applied a Bayesian manipulation to the scatterometry data, using all of the AFM measurements to modify each of the scatterometric data points. The result: top width, 38.0 nm (0.9 nm); middle width, 48.9 nm (1.8 nm); height, 58.6 nm (0.4 nm).
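For a feel of how combining two measurements can shrink the uncertainty, here is a deliberately simplified sketch. It assumes each parameter can be treated on its own, with the scatterometry and AFM values as independent Gaussian estimates of the same quantity. In that case Bayes’ Theorem reduces to an inverse-variance-weighted average. The function name `combine` is hypothetical, and this is not the researchers' procedure, which modifies the scatterometric fit itself.

```python
import math

def combine(mean_a, sd_a, mean_b, sd_b):
    """Combine two independent Gaussian estimates of one quantity.

    Treating the first estimate as the prior and the second as new
    evidence, the posterior is Gaussian, with a mean equal to the
    inverse-variance-weighted average of the two inputs.
    """
    w_a = 1.0 / sd_a**2
    w_b = 1.0 / sd_b**2
    mean = (w_a * mean_a + w_b * mean_b) / (w_a + w_b)
    sd = math.sqrt(1.0 / (w_a + w_b))
    return mean, sd

# (scatterometry mean, sd) and (AFM mean, sd), in nm, as quoted in the article.
measurements = {
    "top width": ((33.7, 10.8), (37.6, 0.9)),
    "middle width": ((48.9, 6.0), (48.0, 1.9)),
    "height": ((60.0, 2.2), (57.5, 0.7)),
}

for name, (scat, afm) in measurements.items():
    mean, sd = combine(*scat, *afm)
    print(f"{name}: {mean:.1f} nm ({sd:.1f} nm)")
```

This shortcut pulls each estimate toward the more precise AFM value, and its standard deviation is always smaller than either input's. It does not reproduce the hybrid values reported above, which is a reminder that the published method does more than average parameter by parameter.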

There are three take-aways. The combined Bayesian measurement has a higher probability of being correct than either the scatterometric or AFM value alone. The expected error is much smaller (improving on the scatterometric uncertainty by more than an order of magnitude in one instance). Finally, one would expect that combining measurement methods (especially a relatively demanding method like atomic force microscopy) would slow down the measurement process. Not necessarily, the authors say: Well-chosen sampling strategies can, in fact, improve measurement throughput, all while improving measurement and reducing uncertainty.

The approach, the paper concludes, is suitable for a wide range of new metrological combinations—combining model-based scanning electron microscopy, quantitative ellipsometry, and other methods. Overall, “the new hybrid methodology has important implications in devising measurement strategies that take advantage of the best measurement attributes of each individual technique.” And, indeed, researcher Richard Silver says that at least two manufacturers have deployed the hybrid methodology and are already improving their measurement results.

Douglas McCormick is a freelance science writer and recovering entrepreneur. He has been chief editor of Nature Biotechnology, Pharmaceutical Technology, and Biotechniques.