Video Friday is your weekly selection of awesome robotics videos, collected by your Automaton bloggers. We’ll also be posting a weekly calendar of upcoming robotics events for the next two months; here’s what we have so far (send us your events!):

Gigaom Change – September 21-23, 2016 – Austin, Texas, USA

RoboBusiness – September 28-29, 2016 – San Jose, Calif., USA

HFR 2016 – September 29-30, 2016 – Genoa, Italy

ISER 2016 – October 3-6, 2016 – Tokyo, Japan

Cybathlon Symposium – October 07, 2016 – Zurich, Switzerland

Cybathlon 2016 – October 08, 2016 – Zurich, Switzerland

Robotica 2016 Brazil – October 8-12, 2016 – Recife, Brazil

ROSCon 2016 – October 8-9, 2016 – Seoul, Korea

IROS 2016 – October 9-14, 2016 – Daejeon, Korea

NASA SRC Qualifier – October 10, 2016 – Online

ICSR 2016 – November 1-3, 2016 – Kansas City, Kan., USA

Social Robots in Therapy and Education – November 2-4, 2016 – Barcelona, Spain

Let us know if you have suggestions for next week, and enjoy today’s videos.

Frankly, I’m not sure why this is only now a thing:

Be right back: need to resurrect my lost childhood.

[ Flybrix ]

This video documents our field experiments at White Sands National Monument [in New Mexico] with the RHex robot, in March 2016. It demonstrates the great potential for RHex to assist aeolian scientists in desert research. By collecting data through sensors mounted on RHex, we gather transformative datasets that are required to calibrate and verify existing and future dune dynamics and sand transport models.

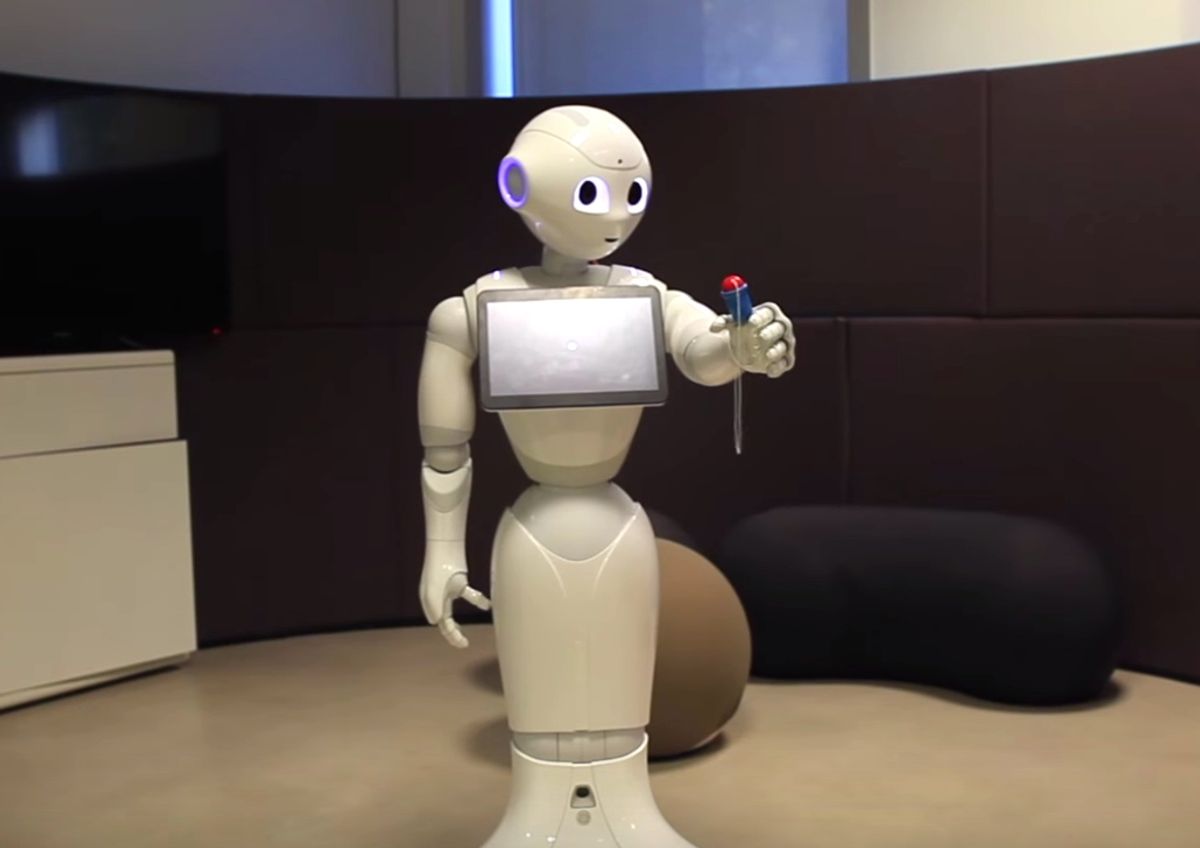

After just 100 trials, Pepper masters one of the most complicated and difficult games ever created:

Thanks for totally ruining that for us humans, Pepper. Geez.

Welcome to the world of tomorrow!

And that is how you play Jenga.

[ HEBI Robotics ]

If NASA sends a robot to explore one of Saturn’s moons, it might look like this:

I especially appreciate the utter disregard that this robot has for its own physical safety.

[ NASA ]

Here’s a clever way of collaboratively transporting an object with MAVs: have them act like they’re being tugged around on a leash.

[ ETH Zurich ]

I wish my parents loved me this much when I was little:

It’s basically an incredibly expensive See ’n Say. Also, I want that kid’s shirt.

[ SoftBank Robotics ] via [ Taylor Veltrop ]

Researchers at the Robot Cognition and Control Lab (RCCL), part of the Korea Institute of Industrial Technology (KITECH), have been teaching their dual-arm robot some neat manipulation skills. Dr. Ji-Hun Bae, principal research scientist at RCCL, tells us that the only sensors the robot is using to perform the tasks shown in the video are a Kinect for vision and the joint-angle encoders on its arms. That’s right: no force/torque or tactile sensors.

It’s impressive that the robot can perform these tasks using only limited sensor information. That said, the RCCL researchers might end up adding more sensing capabilities to their robot, including force and tactile sensors, to allow it to do even more complex manipulations. Next they’re planning to teach the robot how to use human tools like a screwdriver and scissors. Dr. Bae says their goal is to demonstrate the usefulness of dual-arm robots with multi-fingered hands and to develop techniques that can be used in industry and daily life applications.

[ KITECH ]

Thanks Dr. Bae!

If you’re using heavy equipment remotely, wouldn’t it be nice if you could get the perfect view of everything you’re doing? If you tether a drone to your robot excavator, you can totally do that, and it works flawlessly:

When sediment disasters occur, unmanned construction machines are useful for emergency rehabilitation because they keep workers out of danger. During the initial response, however, operators struggle with a lack of visual information because no mobile camera vehicles are available. To add a third-person point of view, we developed a power-feeding helipad for a tethered multi-rotor UAV that mounts on an unmanned construction machine. The helipad controls the tension of the tether to adjust the length fed out. In this video clip, we introduce our helipad and show an initial field test.

Brace yourself for the blistering speed and incredible display of skill that is TeenSize robot soccer:

[ NimbRo ]

The post-Garmin version of Lidar Lite, the same hardware that’s in the Sweep low-cost lidar from Scanse, is now being offered by SparkFun for $150:

If you want it all packaged up for you (with software and motor so you can do full 360-degree sensing), Sweep is $250.

[ SparkFun ]

Not news: drone flies 12 centimeters. News: drone without any batteries flies 12 centimeters.

This video shows a quadrotor being powered completely wirelessly via magnetic induction. The quadrotor’s battery has been removed. The transmitter was implemented on a two-layer PCB and was driven by a load-independent Class EF inverter. The inverter operates at 13.56 MHz and uses a single GaN transistor from GaN Systems. The receiving coil consists of a single turn of copper tape wound around the shield of the quadrotor. The receiver board consists of a Class D rectifier and a DC/DC converter.

[ Imperial College London ] via [ Hackaday ]

In September 2016, the team at ESA’s deep-space tracking station at Cebreros, near Madrid, Spain, ran a series of test flights to image the antenna using a DJI Phantom 3 drone controlled with an iPad. The team were trialling use of a drone to perform routine maintenance inspections of the station’s massive 35-m dish antenna and the supporting structure. It may also be an effective option for inspecting the site’s fencing and other facilities.

Somebody take one to Arecibo, please.

[ ESA ]

Mobile robots can be used in many applications, and they are especially suited for environments that are unreachable or too dangerous for humans. In many cases, these environments have to be explored and mapped before robots can carry on with their mission. Mobile robots are generally limited in their run time and travel range because they are battery operated. To increase the time robots can work, their batteries can be recharged at docking stations (DSs). Recharging at DSs has the additional advantage of increasing autonomy by reducing the need for human intervention. Nevertheless, robots can still travel only a limited distance before they have to return for recharging, which limits the area they can reach. To overcome this limit, robots can form teams in which they take on different tasks, allowing some robots to explore further while others form a supply chain to deliver energy to the exploring robots.
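
To put rough numbers on the range limit described above, here is a minimal back-of-the-envelope sketch. It is not the researchers' model: the function name, the distances, and the simplification that each supply-chain robot acts as a stationary recharge point are all assumptions made for illustration. The idea is that a robot that must come back can only go half its battery range past its last recharge point, while each hop between recharge points can use a full charge.

```python
def reachable_radius(battery_range_m, relay_spacings_m=()):
    """Farthest distance from the home docking station an explorer can
    reach and still return, under simplifying assumptions.

    battery_range_m: distance one full charge covers (hypothetical value).
    relay_spacings_m: gaps between successive recharge points, starting at
        the home docking station (hypothetical; relay robots are treated
        as fixed recharge points, which real supply chains are not).
    """
    for gap in relay_spacings_m:
        # A hop between recharge points is one-way on a full charge.
        if gap > battery_range_m:
            raise ValueError("relay gap exceeds what one charge can cover")
    # Past the last recharge point the robot needs half its charge to get back.
    return sum(relay_spacings_m) + battery_range_m / 2


# One robot alone versus the same robot with two relay points along the way.
print(reachable_radius(1000.0))
print(reachable_radius(1000.0, [800.0, 600.0]))
```

The sketch ignores the energy the relay robots themselves spend, so it gives an optimistic upper bound rather than a plan.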

UBTECH’s Alpha 2 should be shipping to early backers within the next month or so, but in the meantime, here’s an unboxing of the new Alpha 1 Pro:

[ UBTECH ]

This may not be the future of drone delivery, but it’s definitely the present of drone delivery:

[ Woot ]

CMU RI Seminar: Brenna D. Argall, assistant professor of physical medicine & rehabilitation, Northwestern University.

It is a paradox that often the more severe a person’s motor impairment, the more challenging it is for them to operate the very assistive machines which might enhance their quality of life. A primary aim of my lab is to address this confound by incorporating robotics autonomy and intelligence into assistive machines---to offload some of the control burden from the user. Robots already synthetically sense, act in and reason about the world, and these technologies can be leveraged to help bridge the gap left by sensory, motor or cognitive impairments in the users of assistive machines. However, here the human-robot team is a very particular one: the robot is physically supporting or attached to the human, replacing or enhancing lost or diminished function. In this case getting the allocation of control between the human and robot right is absolutely essential, and will be critical for the adoption of physically assistive robots within larger society. This talk will overview some of the ongoing projects and studies in my lab, whose research lies at the intersection of artificial intelligence, rehabilitation robotics and machine learning. We are working with a range of hardware platforms, including smart wheelchairs and assistive robotic arms. A distinguishing theme present within many of our projects is that the machine automation is customizable---to a user’s unique and changing physical abilities, personal preferences or even financial means.

Evan Ackerman is a senior editor at IEEE Spectrum. Since 2007, he has written over 6,000 articles on robotics and technology. He has a degree in Martian geology and is excellent at playing bagpipes.

Erico Guizzo is the Director of Digital Innovation at IEEE Spectrum, and cofounder of the IEEE Robots Guide, an award-winning interactive site about robotics. He oversees the operation, integration, and new feature development for all digital properties and platforms, including the Spectrum website, newsletters, CMS, editorial workflow systems, and analytics and AI tools. An IEEE Member, he is an electrical engineer by training and has a master’s degree in science writing from MIT.